Storage Peer Incite: Notes from Wikibon’s February 24, 2009 Research Meeting

On Tuesday, Feb. 24, 2009, the Wikibon community came together for a panel discussion of practical approaches to cutting data center energy use, and specifically data storage energy use, both to save expenses in a time of tight budgets and to improve the corporate carbon footprint, given that much of the electricity in North America is generated from coal. One interesting fact that came out of the discussion is that even small changes, in particular a reduction in the amount of data stored or a switch to even slightly more energy-efficient storage systems, can have a major effect.

The reason lies in an unstated truth behind the discussion: any change in energy use is effectively doubled. The explanation goes back to high school physics, specifically the Law of Conservation of Energy. IT equipment that draws a watt of power does not actually consume that energy; it transforms it from electricity into waste heat. All of that waste heat must then be removed from the data center lest the heat buildup damage the equipment. That means that for every watt of power drawn by IT equipment, roughly another watt must be burned by the cooling system.
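To make the doubling concrete, the sketch below converts a reduction in IT load into annual energy and cost savings. It assumes, as the text does, roughly one watt of cooling per watt of IT load (a `cooling_overhead` of 1.0); the function name, the 500 W example load, and the $0.10/kWh tariff are hypothetical illustrations, not figures from the meeting.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours


def annual_savings(it_watts_saved: float,
                   cooling_overhead: float = 1.0,
                   price_per_kwh: float = 0.10) -> tuple[float, float]:
    """Return (kWh saved per year, cost saved per year) for a cut in IT load.

    cooling_overhead: extra watts of cooling per watt of IT load. The default
    of 1.0 encodes the "roughly another watt" rule of thumb (an assumption).
    price_per_kwh: hypothetical electricity tariff.
    """
    total_watts = it_watts_saved * (1 + cooling_overhead)  # IT load + cooling
    kwh = total_watts * HOURS_PER_YEAR / 1000
    return kwh, kwh * price_per_kwh


# Hypothetical: retiring a 500 W disk shelf saves about 1 kW once cooling counts.
kwh, dollars = annual_savings(500)
print(f"{kwh:,.0f} kWh/year, ${dollars:,.2f}/year")  # 8,760 kWh/year, $876.00/year
```

Setting `cooling_overhead` to 0 shows the saving a facilities team would see if only the IT meter were counted: exactly half.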

This is a very important basic fact. In the present economy, few organizations are in a good position to launch major green power initiatives. They have no budget, for instance, to install solar or wind generators on the data center roof or to replace large amounts of equipment with more efficient models. In fact, many ITOs (IT organizations) will probably be stretching their replacement schedules to cut costs.

But IT can do small things, particularly when they require little capital investment and pay off quickly in added efficiency. Deleting unnecessary data, for instance, can have a surprising impact on energy use and save money on the capital budget at a very small expense. And because of the Law of Conservation of Energy, that impact will be doubled.

The articles below examine the issues surrounding energy use on the storage farm and lay out the advantages and costs of various strategies. The overall message, however, is that even ITOs hard hit by the recession can make significant advances in energy efficiency, both saving bottom line expenses and moving toward a greener future. Do not underestimate the importance of small steps.

G. Berton Latamore

Reducing data center costs through energy efficiency

On February 24, 2009, the Wikibon community convened to continue a panel discussion started at last fall’s Storage Networking World in Dallas. Participating in the call were:

- Daryl Molitor - Senior Architect at JCPenney

- Phil Bullinger - Executive Vice President, Engenio Storage Group, LSI

- Clod Barrera - Distinguished Engineer and Chief Technical Strategist at IBM Systems & Technology Group

- Ken Osterberg - Director, Enterprise PLM Portfolio Strategy at Seagate

More than 80 Wikibon members joined the call. Daryl Molitor kicked things off and shared some excellent metrics around energy efficiency at his organization.

Eight noteworthy themes emerged from the research meeting:

- U.S.-based companies, while behind European and Japanese counterparts, are getting serious about energy efficiency and are beginning to demonstrate meaningful results.

- Green IT generally, and green storage specifically, remain bottom-line exercises, inextricably linked to overall data center efficiency.

- The economic downturn is definitely (negatively) impacting longer-term energy initiatives; however, high double-digit efficiency gains are being reported by users implementing many small but collectively meaningful improvements.

- Simply by keeping pace with advancing technology, users are gaining the benefits of 'going green.'

- However, getting rid of useless data remains the greatest opportunity for users to improve efficiencies and cut costs. Good tools (e.g., data classification) remain limited, with busy IT organizations often choosing the convenience of adding more storage over cleaning up the mess.

- Tiered storage systems exploiting higher capacity, slower spin-speed devices and automated data movement are being supported by storage virtualization architectures.

- Tier 0 solid-state disk and flash technologies are gaining mindshare and hold substantial promise to improve energy efficiency. Consolidating short-stroked FC devices remains the near-term opportunity, while longer-term exploitation will require substantial architectural change.

- Standards are evolving from organizations such as SNIA to foster repeatable, consistent measurements of power consumption for storage arrays, with the goal of ultimately allowing users to trade workload for power and ideally align data, workload, and device power characteristics. Drive standards around power management are moving forward to support this effort; however, users should not expect subsystem-level results until the 2010 timeframe.

In addition, technology innovations including thin provisioning, data de-duplication, wide-striping, and spindown continue to gain momentum as field data increasingly suggests that implementing these capabilities yields improved utilization and other benefits. IT management must be cautioned, however, not to allow these technologies to supersede cleaning house of unnecessary data. Defensible policies to eliminate 'junk' data remain vital.

Finally, organizations should look for measurement technologies to emerge, first in the form of intelligent power distribution units (PDUs), which, while more expensive, will give CIOs better visibility into energy consumption at the device level. Over time, expect embedded instrumentation within devices to measure power consumption in near real time.

Action item: Driven by economics, energy efficiency is moving beyond the hype phase into the meaningful adoption stage. In difficult times, with tight capital spending, IT organizations must find the resources and time to clean out unnecessary data. Advanced storage technologies will naturally progress and be adopted, but users must endeavor to practice disciplined approaches to storage retention and deletion.

Footnote: Special thanks to Daryl Molitor, Phil Bullinger, Ken Osterberg, Clod Barrera, Amber Strong and Brian Garabedian for helping make this Peer Incite possible.

Data Classification – Key to Staying Green in the Data Center

While emerging standards are encouraging and users like JCPenney have successfully lowered energy costs, the real challenge remains automated data classification. Below is a summary of Wikibon articles that can be used as ITIL-type tips in addressing this challenge:

- Classifying Data – A Primer

- Forget About Manual Classification

- Smart Data Classification

- Store It in the Cloud

- Move It

- Get Rid of It

Action item: Energy efficiency goals need to be matched to the storage and applications supported. Key aspects of this are the ability to automate data placement and the ability to enforce policies to defensibly delete information.

CIOs don't own the power budget: Same old refrain

At the February 24, 2009 Wikibon Peer Research Meeting, we asked whether anything has changed in the situation that CIOs don't own the power budget. The answer: somewhat, but not nearly enough to effect change. Wikibon user estimates from the spring of 2008 indicate that less than 10% of CIOs have responsibility for the IT portion of the power bill, while December 2008 Forrester data pegs the figure at a whopping 11%.

Heightened sensitivity to data center energy efficiency, continued uncertainty around energy prices, and the global recession will increase scrutiny of data center environmental costs. Moreover, as heat densities for IT equipment increase, more and more legacy data centers are up against the threshold of energy capacity and floorspace. These realities threaten to expose CIOs to unforeseen budget items. The best advice is to act now.

Action item: CIOs need to get a handle on data center power consumption before it becomes a critical issue. Start by getting a copy of the energy bill, estimating the percentage consumed by IT equipment, and forecasting consumption over time. Work with facilities professionals to develop plans and practices to manage energy thresholds on an ongoing basis, with the goal of freeing up more space, creating electricity consumption headroom, and forestalling new data center build outs.
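The first steps of that action item can be roughed out as a simple projection. This is a minimal sketch under stated assumptions: the IT share of the bill has to be estimated locally (for example from UPS or PDU readings), growth is assumed to compound at a constant rate, and the function names and sample inputs are hypothetical.

```python
from typing import Optional


def it_energy_forecast(bill_kwh: float, it_fraction: float,
                       annual_growth: float, years: int) -> list[float]:
    """Project the IT portion of annual consumption for years 0..years.

    bill_kwh: annual kWh from the facility energy bill.
    it_fraction: estimated share of the bill drawn by IT equipment (0-1).
    annual_growth: assumed compound yearly growth in IT consumption.
    """
    base = bill_kwh * it_fraction
    return [base * (1 + annual_growth) ** year for year in range(years + 1)]


def first_year_over(forecast: list[float], limit_kwh: float) -> Optional[int]:
    """First forecast year that exceeds the facility's usable headroom."""
    for year, kwh in enumerate(forecast):
        if kwh > limit_kwh:
            return year
    return None


# Hypothetical inputs: 10 GWh/year bill, 40% IT share, 12% yearly growth,
# and 5 GWh/year of IT consumption the facility can support.
forecast = it_energy_forecast(10_000_000, 0.40, 0.12, 3)
for year, kwh in enumerate(forecast):
    print(f"year {year}: {kwh:,.0f} kWh")
print("limit exceeded in year", first_year_over(forecast, 5_000_000))
```

The year in which the forecast crosses the facility limit is the deadline for the headroom-creating work the action item describes.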

Enterprise Flash Drive Cost and Technology Projections

In January 2008, EMC surprised the IT world with the introduction of flash drives. In the Wikibon Peer Incite this Tuesday (2/24/2009), we heard that EMC had introduced 200GB and 400GB flash drives and reduced the price of flash drives relative to disk drives. Other leading vendors such as HDS, IBM, HP, and Sun have all introduced flash drives, and most if not all storage vendors plan to introduce them in 2009.

Flash drives have two major benefits for reducing storage and IT energy budgets.

- The ability to perform hundreds of times more I/O than traditional disks and replace large numbers of disks that are I/O constrained. This allows the remaining data that is I/O light to be spread across fewer, high-capacity, lower-speed SATA hard drives. The impact is fewer actuators, fewer drives, and more efficient storage controllers, leading to lower storage and energy costs.

- The ability to increase system throughput by reducing I/O response times. Flash can have a profound effect on workloads that are elapsed-time sensitive. One EMC customer was able to avoid purchasing 1,000 System z mainframe MIPS and software by reducing batch I/O times with flash drives. Others have placed critical database tables on flash volumes and significantly improved throughput. Wikibon estimates that eliminating a large proportion of “Wait for I/O” time can save between 2% and 7% of processor power and energy consumption.
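The first benefit comes down to whether a workload is I/O-bound or capacity-bound. The sketch below counts the drives needed to meet both an IOPS and a capacity requirement. The per-drive figures (about 180 IOPS for a 146GB 15K FC drive, 5,000 IOPS for a 200GB flash drive) and the per-drive wattages are hypothetical round numbers chosen for illustration, not vendor specifications.

```python
import math


def drives_needed(required_iops: float, required_gb: float,
                  drive_iops: float, drive_gb: float) -> int:
    """Drives needed to satisfy BOTH the I/O and the capacity requirement."""
    io_bound = math.ceil(required_iops / drive_iops)
    capacity_bound = math.ceil(required_gb / drive_gb)
    return max(io_bound, capacity_bound)


# Hypothetical hot workload: 36,000 IOPS against 6,000 GB of data.
fc = drives_needed(36_000, 6_000, drive_iops=180, drive_gb=146)       # I/O-bound
flash = drives_needed(36_000, 6_000, drive_iops=5_000, drive_gb=200)  # capacity-bound
FC_WATTS, FLASH_WATTS = 15, 6  # hypothetical per-drive power draw
print(fc, flash)                              # 200 30
print(fc * FC_WATTS, flash * FLASH_WATTS)     # 3000 180
```

With FC drives the count is set by IOPS, so most of the purchased capacity sits underused; with flash the count is set by capacity. The I/O-light data can then go to high-capacity SATA drives, as described above.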

In the Peer Incite, Daryl Molitor of JCPenney articulated a clear storage strategy of replacing FC disks with flash while meeting the rest of the storage requirements with high-density SATA disks. Daryl’s objective was to reduce storage costs and energy requirements. This bold strategy raises two questions:

- In what time scale will flash drives replace FC drives?

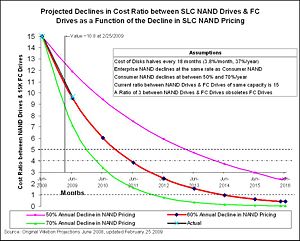

- In June 2008 I wrote a Wikibon article "Will NAND storage obsolete FC drives?". The update of the projection chart in the original article is shown in chart 1 below. It shows that the actual reduction in prices of NAND storage is coming down at about 60%/year. At this rate of comparative reduction, FC drives will be obsolete in less than three years. Significant opportunity to move some data to flash drives exists today, and by starting now Daryl is placing himself in a good strategic position.
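One simple way to read a projection like this is to ask how long a given price gap takes to close at a constant rate. The sketch solves ratio × (1 − decline)^t = 1 for t, assuming the 60% annual comparative decline holds steady; the starting flash-to-FC price ratio of 10 is a hypothetical input, not a figure taken from the chart.

```python
import math


def years_to_parity(price_ratio: float, annual_decline: float) -> float:
    """Years until flash $/GB falls to FC $/GB at a constant comparative decline.

    price_ratio: today's flash $/GB divided by FC $/GB (hypothetical input).
    annual_decline: yearly fall in that ratio, e.g. 0.60 for 60% per year.
    Solves price_ratio * (1 - annual_decline) ** t == 1 for t.
    """
    if price_ratio <= 1:
        return 0.0
    return math.log(price_ratio) / -math.log(1 - annual_decline)


print(f"{years_to_parity(10, 0.60):.1f} years")  # 2.5 years
```

Parity on $/GB is only one possible threshold; flash can displace FC drives earlier for I/O-bound data, where cost per IOPS matters more than cost per GB.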

- What architectural, infrastructure, and/or ecosystem changes must be available to implement this strategy?

Some vendors and analysts have predicted that flash technology will profoundly change the way systems are designed, leading to flash being implemented in multiple places in the systems architecture. However, exploiting flash in that way will also require significant changes to operating systems, database software, and even application software, and the industry will not reach agreement on such changes within three years. Disk drives are currently the standard technology for secure, non-volatile access to data and will remain so for at least the next three to five years. EMC was right to package flash technology as a disk drive, the simplest way to introduce it within the current software ecosystem.

That is not to say that technology changes are not required. Vendors and analysts have pointed out that current array architectures are not designed to cope with flash storage devices that operate at such low latencies. As a result, arrays support only limited numbers of flash drives and get less than optimal performance from them. Vendors are moving to fix this, and the fixes should arrive within three years.

The most fundamental architectural change required is to ensure that the right data is placed on flash storage. To begin with, specific database tables and high-activity volumes are being moved to flash drives manually, one at a time. The next stage will be to automate the dynamic movement of data to and from flash drives to optimize overall I/O performance. A prerequisite is the ability to track I/O activity on blocks of data and hold that metadata. Virtualization architectures will have a head start in providing the infrastructure for monitoring and automated dynamic movement of data blocks.
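A minimal sketch of that prerequisite: per-block I/O counters held as metadata, with a periodic rebalance that keeps the most active blocks on flash. The class and method names are hypothetical, the sketch does not model the actual data copy, and real arrays and virtualization layers use their own, more sophisticated placement policies.

```python
from collections import Counter


class HeatTracker:
    """Hypothetical sketch: per-block I/O counters drive flash placement."""

    def __init__(self, flash_capacity_blocks: int):
        self.flash_capacity = flash_capacity_blocks
        self.io_counts = Counter()   # metadata: block id -> I/Os this interval
        self.on_flash = set()        # block ids currently placed on flash

    def record_io(self, block_id: int) -> None:
        self.io_counts[block_id] += 1

    def rebalance(self) -> tuple[set, set]:
        """Keep the hottest blocks on flash; return (promoted, demoted) ids.

        Blocks with no I/O in the interval are demoted, a deliberately
        simple policy chosen for illustration.
        """
        hottest = {block for block, _ in
                   self.io_counts.most_common(self.flash_capacity)}
        promoted = hottest - self.on_flash
        demoted = self.on_flash - hottest
        self.on_flash = hottest
        self.io_counts.clear()       # start a fresh measurement interval
        return promoted, demoted


tracker = HeatTracker(flash_capacity_blocks=2)
for block in [7, 7, 7, 3, 3, 9, 1]:
    tracker.record_io(block)
promoted, demoted = tracker.rebalance()
print(sorted(promoted), sorted(demoted))  # [3, 7] []
```

Running `rebalance` on a short interval approximates the "second by second" balancing discussed below; the cost is more metadata churn and more block movement.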

So which vendors will provide flash technology that operates efficiently in a storage array and provides automated, dynamic (second-by-second) data balancing? At the moment, none can. Clearly EMC has a head start in understanding the technology, customer usage, and storage array requirements. The storage vendors offering virtualization are also well positioned: Compellent has probably the most versatile architecture, with its unique ability to dynamically move data within a storage volume to different tiers, and IBM has broken the 1 million IOPS barrier for an SPC workload with flash storage connected to an IBM SAN Volume Controller (SVC). Other storage virtualization vendors such as 3PAR, NetApp, HP, and Hitachi are also well positioned.

Action item: The race is on. Storage executives should be exploring the use of flash drives for trouble spots in the short term on existing arrays in order to build up knowledge and confidence in the technology for different types of workload. For full-scale implementation, storage executives should wait for solutions that provide storage arrays modified to accommodate low-latency flash drives and automated dynamic placement of data blocks to optimize the use of flash.

Standards can help improve energy conservation efforts

Energy conservation is all about saving energy, and within data centers storage is one of the biggest energy hogs, driving ever-increasing energy costs. So how do you know which storage solution is really the most energy efficient? How do you penetrate the fog of greenwash and get usable, competitive energy consumption numbers? The answer is standards, and the one group that is driving standards for storage network products is the Green Storage Initiative (GSI) team within the Storage Networking Industry Association (SNIA).

A draft publication, the “SNIA Green Storage Power Measurement, Technical Specification”, is the latest attempt to establish a standardized methodology that enables accurate and comparable measurements of the power consumption of commercial storage systems. This objective, metric-based approach should enable the user community to demand that vendors supply energy consumption data that can be used to contrast competitive solutions and effectively eradicate, or at least minimize, vendor market speak. Note that this is still a draft document and as such is not yet a practical reality, but it is heading in the right direction.

The GSI group has developed frameworks for six broad taxonomy categories: Online, Near-Online, Removable Media, VTL, Infrastructure Appliance, and Infrastructure Interconnect Elements. Within these frameworks are guidelines for measurement and data collection, including the metrics and, most importantly, how to audit, verify, and publish the measurements.

Metrics

The metrics proposed by GSI are designed to evaluate the energy impact of storage network products. The initial metrics focus on idle power measurements; a future intent is to include active devices.

Average Idle Power (P, in watts) = sum of the sampled power measurements (W) / number of sample measurements (N)

SNIA Idle Power Metric (GB/W) = capacity of the storage unit (GB) / Average Idle Power (P)
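The two formulas translate directly into code. This is a sketch only: the draft specification also prescribes test conditions (configuration, sampling interval, duration, and which capacity figure to use) that are not modeled here, and the readings and capacity below are hypothetical.

```python
def average_idle_power(samples_w: list[float]) -> float:
    """Average Idle Power P = sum of sampled power readings (W) / N."""
    if not samples_w:
        raise ValueError("need at least one power sample")
    return sum(samples_w) / len(samples_w)


def idle_power_metric(capacity_gb: float, samples_w: list[float]) -> float:
    """Idle metric as described above: capacity / average idle power (GB/W)."""
    return capacity_gb / average_idle_power(samples_w)


# Hypothetical idle readings (W) from an array with 48,000 GB of capacity.
readings = [402.0, 398.5, 401.0, 398.5]
print(f"{idle_power_metric(48_000, readings):.1f} GB/W")  # 120.0 GB/W
```

Because the metric is capacity divided by power, higher is better: two arrays of equal capacity are compared purely on their idle draw.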

Action item: While this may be a basic metric, it is a standardized format and should be useful when comparing competitive storage solutions. As a tool for storage buyers, its usefulness will increase significantly as it matures to include active devices. The primary beneficiary of this standard is the end-user. Storage purchasers should pressure vendors to ensure universal support and willingness to implement this standard and push SNIA to make this a readily accessible standard.

Footnotes: SNIA Green Storage Power Measurement Specification, Jan 20th, 2009

Standards Implications on Improving Power Consumption and Power Efficiency

For end users and the industry to fully get behind green initiatives and realize their full potential, standards are imperative, especially if the standards recognize both dimensions available for improvement: reducing power consumption and increasing power efficiency.

Reducing power consumption is just what it says: eliminating power demand and usage. The additional implication is that power is reduced while doing the same amount of work. Ultimately it is measured and compared in watts, although there are advantages in normalizing some kind of work to watts (GB/W, IOPS/W). SNIA's Idle Test Specification employs a GB/W metric for measurement and comparison purposes.

Increasing power efficiency refers to doing more with the amount of power that's available. Some power reduction may also be realized, but the focus is on doing more work with the power you have (as opposed to doing the same amount of work with less power). The comparative metric for power efficiency will be whatever work you're concerned about relative to the amount of power consumed performing that work.

Which dimension is most important to you depends heavily on the application and operating environment. High-performance primary storage systems supporting mission-critical applications will be concerned with getting the most work done with the power available, whereas archive or secondary storage systems will be more concerned with minimizing the overall power used.
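The sketch below shows how the choice of dimension can change which system looks "greener". Both arrays and all of their figures are hypothetical; GB/W stands in for the consumption view and IOPS/W for the efficiency view.

```python
from dataclasses import dataclass


@dataclass
class StorageSystem:
    name: str
    capacity_gb: float
    iops: float
    watts: float

    @property
    def gb_per_watt(self) -> float:      # consumption view (archive, secondary)
        return self.capacity_gb / self.watts

    @property
    def iops_per_watt(self) -> float:    # efficiency view (primary, performance)
        return self.iops / self.watts


systems = [
    StorageSystem("archive-array", capacity_gb=96_000, iops=4_000, watts=800),
    StorageSystem("primary-array", capacity_gb=24_000, iops=60_000, watts=1_200),
]

best_capacity = max(systems, key=lambda s: s.gb_per_watt)
best_work = max(systems, key=lambda s: s.iops_per_watt)
print(best_capacity.name, best_work.name)  # archive-array primary-array
```

Neither array is "greener" in the abstract; each wins on the metric that matches its workload, which is why buyers should state the dimension they care about.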

At the end of the day, it is ultimately about economics. Can you save money or get more done within your existing budget by deploying "green" storage?

Action item: While storage isn't the primary driver of power utilization within a data center, it is a substantial enough power consumer to warrant attention. Data center managers should be keenly aware of the type of application and operating environment running on the storage system, and drive appropriate and relevant power reduction and power efficiency requirements back to their storage providers. Be specific about which dimension(s) are important to you and demand continuous improvement.

Getting Rid of Data Helps Data Centers Go Green

The Wikibon Project continues to identify strategies, technologies, and best practices for managing the business and environmental impact of rising energy consumption, and the consequences of not taking proactive steps to address these challenges.

Reducing storage energy consumption, and possibly even energy costs, is usually thought of in terms of improving the hardware infrastructure by shrinking footprint or by replacing aging UPS, chillers, and air conditioning gear with newer and more efficient technologies. While these will help address the mounting energy challenges facing today's data centers, other strategies also need to be considered.

Beyond the issues of hardware infrastructure, focus has been shifting to a strategy of “getting rid of stuff.” Clearly server virtualization and storage consolidation have received much attention in the past year. What about getting rid of duplicate or redundant data? What about improving poor storage device utilization? What about optimizing the location of data using the tiered storage hierarchy? It is a matter of taking proactive versus reactive steps to minimize the amount of stored data.

Businesses are re-architecting their storage systems with a variety of new capabilities that improve storage efficiency by reducing the number of devices, improving I/O performance, increasing allocation levels, and adding stronger security measures. Each of these gets rid of stuff in a different way, directly attacking the goal of making the data center greener. An expected range of storage savings is provided below.

| Storage Issue | Solution | Avg Savings |
|---|---|---|
| Too many devices | Virtualization/Consolidating storage | Varies |
| Over-allocation of storage | Thin provisioning | 25%-30% |
| Removing redundancy | Compression | 50%-66% |
| Eliminating duplicate data | Deduplication | >80% |
| Optimizing data placement | HSM software | 10%-50% |
| Short-stroke disks (IOs) | Flash disk drives | 5%-10% |

Although available on mainframes since 1965, thin provisioning is now a staple for non-mainframe systems and allows physical disk storage to be reserved only when data is written, not when the application is first configured. In traditional storage provisioning, application teams guess at how much storage they might consume and reserve that full amount on day one. The amount reserved can be used only by that application and is not available to others. This means that within a data center, large portions of costly storage go unused, and consume power and cooling resources even though no data is actually written to the disk.
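The allocate-on-write behavior described above can be sketched with a shared pool that hands out physical blocks only on first write. The class names are hypothetical, and a real implementation must monitor pool usage closely, because thin volumes together can promise more capacity than physically exists.

```python
class StoragePool:
    """Shared physical capacity; blocks are handed out only on demand."""

    def __init__(self, physical_blocks: int):
        self.free = list(range(physical_blocks))  # unallocated physical blocks
        self.blocks: dict[int, bytes] = {}        # physical block -> data

    def allocate(self) -> int:
        if not self.free:
            raise RuntimeError("pool exhausted: add physical capacity")
        return self.free.pop()

    def store(self, pblock: int, data: bytes) -> None:
        self.blocks[pblock] = data

    @property
    def used(self) -> int:
        return len(self.blocks)


class ThinVolume:
    """Presents virtual_blocks to the application; allocates on first write."""

    def __init__(self, virtual_blocks: int, pool: StoragePool):
        self.virtual_blocks = virtual_blocks
        self.pool = pool
        self.mapping: dict[int, int] = {}  # virtual block -> physical block

    def write(self, vblock: int, data: bytes) -> None:
        if not 0 <= vblock < self.virtual_blocks:
            raise IndexError("write beyond volume size")
        if vblock not in self.mapping:      # first write: allocate only now
            self.mapping[vblock] = self.pool.allocate()
        self.pool.store(self.mapping[vblock], data)


pool = StoragePool(physical_blocks=500)
app_a = ThinVolume(1_000, pool)   # each application "sees" 1,000 blocks
app_b = ThinVolume(1_000, pool)
for i in range(100):
    app_a.write(i, b"x")
app_b.write(0, b"y")
app_a.write(0, b"z")              # rewrite: no new allocation
print(pool.used)                  # 101 of 500 physical blocks consumed
```

Under traditional provisioning the two applications would have tied up 2,000 blocks on day one; here only the 101 blocks actually written consume capacity, power, and cooling.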

Compression and deduplication reduce data in different ways. Compression uses an algorithm to reduce the size of a particular file by eliminating redundant bits. If the exact same file is stored multiple times, however good your compression method is, your backup storage will end up with multiple copies of the compressed file. Typical compression ratios range from 2x to 4x. File deduplication eliminates redundant copies of data, storing only one, and so reduces data for many applications beyond what compression alone can accomplish. A future generation of deduplication technology is expected to move from a reactive to a proactive data reduction approach that serves a larger range of file types.
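The difference between the two techniques shows up clearly in a toy example: compression shrinks every copy, while deduplication stores each identical file once. SHA-256 file fingerprints and `zlib` stand in for whatever fingerprinting and compression a real product uses, and real deduplication products often work on sub-file blocks rather than whole files.

```python
import hashlib
import zlib


def stored_bytes(files: list[bytes], compress: bool, dedupe: bool) -> int:
    """Bytes stored for a set of files under each data-reduction option."""
    seen: set[str] = set()
    total = 0
    for data in files:
        if dedupe:
            digest = hashlib.sha256(data).hexdigest()  # file-level fingerprint
            if digest in seen:
                continue                # identical copy already stored once
            seen.add(digest)
        total += len(zlib.compress(data)) if compress else len(data)
    return total


report = b"quarterly sales by region\n" * 200   # repetitive, compressible
files = [report] * 5 + [b"unique notes" * 10]  # five identical copies + one other

for compress in (False, True):
    for dedupe in (False, True):
        print(f"compress={compress!s:5} dedupe={dedupe!s:5} "
              f"-> {stored_bytes(files, compress, dedupe):,} bytes")
```

Compression alone still stores five copies of the report; deduplication alone stores one uncompressed copy; combining them gives the smallest footprint.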

Flash disk drives are low energy consumers and are becoming a viable replacement for traditional short-stroked or partially allocated disks, which improve performance by reducing disk arm contention. At current flash pricing levels, the high-performance applications suited to flash memory can represent as much as 10% of disk storage. Flash SSDs are non-volatile and offer low power consumption, much higher read performance than magnetic disk, low heat output, and small size, with pricing moving into the disk drive realm.

Classifying data has immense value in identifying which needless data to eliminate. All data is not created equal, and the value of data can change throughout its lifetime. For non-mainframe systems, data classification can be a big step, but it is quickly becoming an essential one for most data management functions. Data classification encompasses aligning data with the most efficient, cost-effective storage architectures and services based on the changing value of data. Defining policies to map application requirements to storage tiers is assisted by existing HSM-type software as well as emerging data classification and policy-based management software from a variety of storage software companies.
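A policy that maps data to tiers can be as simple as a few rules over age and business attributes. The field names, the 30-day, 180-day, and seven-year thresholds, and the tier labels below are hypothetical; real policies come from the business and legal owners of the data, and anything flagged for deletion should go through the review that makes deletion defensible.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class DataItem:
    path: str
    last_access: date
    legal_hold: bool = False
    business_critical: bool = False


def classify(item: DataItem, today: date) -> str:
    """Map an item to a tier using hypothetical age and value rules."""
    age_days = (today - item.last_access).days
    if item.business_critical and age_days <= 30:
        return "tier1"
    if age_days <= 180:
        return "tier2"
    if item.legal_hold or age_days <= 7 * 365:   # hypothetical retention period
        return "tier3"
    return "delete-candidate"   # flag for review, not automatic deletion


today = date(2009, 2, 24)
items = [
    DataItem("/db/orders.dbf", date(2009, 2, 23), business_critical=True),
    DataItem("/share/q3-plan.doc", date(2008, 11, 1)),
    DataItem("/share/old-promo.ppt", date(2006, 5, 1)),
    DataItem("/share/1999-memo.txt", date(1999, 3, 1)),
    DataItem("/legal/contract.pdf", date(1999, 3, 1), legal_hold=True),
]
for item in items:
    print(f"{item.path}: {classify(item, today)}")
```

Keeping the rules in one function makes the policy auditable, which matters as much as the tiering itself when deletions must be defended.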

Action item: Though challenging and becoming increasingly complex as storage requirements grow, re-architecting the data center yields much improved operational efficiencies and cost savings. Technologies including HSM (Hierarchical Storage Management), deduplication, thin provisioning, virtualization, and snapshot copies can play a role but at the end of the day the root issue is the ability to defensibly get rid of useless data. This means you have to be able to make smart decisions about your information, and that starts with the ability to classify it. Soon data classification will become a requirement for most enterprise and SMB data centers if they are to have any hope of managing their ever growing data. The time to start is now.

JCPenney tiers away energy costs

What follows is a summary of Daryl Molitor’s opening remarks on the February 24, 2009 Peer Incite call, which more than 80 Wikibon members joined:

According to Daryl Molitor, JCPenney has spent more than $100M to improve energy efficiency in its stores and data centers. At the corporate level, JCPenney is working toward a goal of having more than 200 stores ENERGY STAR certified by 2011. The organization has replaced CRAC units and installed new lighting systems in 200 stores, accounting for savings of about 27 million kWh annually. The company is experimenting with small rooftop wind turbines in Reno and solar panels in some California and New Jersey stores.

JCPenney has taken several small steps in its data centers that are adding up. Specifically, the IT groups have taken the following actions, which have resulted in a 40% reduction in energy costs:

Data Center Infrastructure

- Sealed gaps in tiles with cold locks and sealed air leaks;

- Implemented hot and cold aisle designs in the server area;

- Replaced 20-ton HVAC units with 30-ton units;

- Installed heat monitoring systems;

- Replaced lighting (which will yield a two-year payback);

- Eliminated unused equipment;

- Installing software to manage air flow (future).
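Payback is the usual yardstick for actions like the lighting replacement above. The sketch uses simple (undiscounted) payback; the retrofit cost and monthly savings are hypothetical figures chosen to produce a two-year payback, compared against a 12 to 18 month payback target.

```python
def simple_payback_months(upfront_cost: float, monthly_savings: float) -> float:
    """Months until cumulative savings cover the upfront cost (no discounting)."""
    if monthly_savings <= 0:
        raise ValueError("project never pays back")
    return upfront_cost / monthly_savings


# Hypothetical lighting retrofit: $48,000 up front, $2,000/month lower bills.
months = simple_payback_months(48_000, 2_000)
print(f"{months:.0f} months")   # 24 months, i.e. a two-year payback
print(months <= 18)             # False: outside a 12-18 month target
```

Simple payback ignores the time value of money, which is usually acceptable for the short horizons considered here.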

Server Actions

JCPenney is pursuing an aggressive server consolidation plan underpinned by virtualization. The company runs between 400 and 500 VMware images, with 18 to 20 images per ESX host. The organization hopes to be at 700 to 800 images by year’s end. JCPenney also runs more than 200 images on seven AIX or Solaris platforms and is using LPAR and VIO wherever possible. It plans to virtualize standalone servers through attrition where possible.
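The figures above imply a rough host count. This sketch simply divides image counts by consolidation density; it ignores the spare capacity a real ESX cluster would reserve for failover and maintenance, so treat the results as lower bounds rather than JCPenney's actual configuration.

```python
import math


def host_range(images_lo: int, images_hi: int,
               density_lo: int, density_hi: int) -> tuple[int, int]:
    """(fewest, most) hosts implied by ranges of image count and images per host."""
    fewest = math.ceil(images_lo / density_hi)   # few images, dense packing
    most = math.ceil(images_hi / density_lo)     # many images, sparse packing
    return fewest, most


print(host_range(400, 500, 18, 20))   # today: (20, 28)
print(host_range(700, 800, 18, 20))   # year-end target: (35, 45)
```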

Storage Strategy

JCPenney has implemented a three-tier storage architecture. The 2008 installed profile was:

- 1,500 SAN ports

- ~500TB of T1

- 450TB of T2

- 25TB of T3

The firm uses IBM’s SAN Volume Controller to virtualize about 200TB of T2 and T3 storage. Virtualized storage is expected to increase by 67% in 2009. While the pyramid looks skewed, with T3 being light, this tier increased 47% in 2008, and over time JCPenney plans to move more aggressively to T3 storage.

In the near term, the company has replaced all 73GB drives with 146GB devices on T1 and completely eliminated 146GB drives in T2, moving to 300GB drives. All T3 storage is placed on 1TB devices. The company has been able to increase drive capacities while decreasing its T1 storage by 12%. These storage actions have allowed JCPenney to reduce core space requirements by 20% and heat and electricity by 22%.

Longer term, the company is planning to implement solid-state devices, whose price it believes has declined 75% from the initial announcement price (approximately one year ago). JCPenney plans to aggressively implement flash drives for performance applications and, over the longer term, to implement a T1/T3 architecture with flash doing the bulk of T1 work and ultra-capacity drives (e.g., 4-8TB) storing T3 data.

In addition, the company is doing a proof of concept for data de-duplication in 2009 and will reduce its tape footprint, freeing up more floor space.

Action item: JCPenney's green IT initiatives started largely as a set of actions designed to improve data center efficiency. These coincided with major corporate sustainability efforts and have coalesced into a more coherent strategy over the past six months. In this tight economy, IT management should seek to align data center efficiency and corporate sustainability efforts, focusing on low-risk initiatives that pay back within 12 to 18 months.