UPDATE: Please see "Flash Pricing Trends Disrupt Storage" for revised projections as of May 2010.

In January of last year, EMC surprised the IT world with the introduction of flash drives. In the Wikibon Peer Incite this Tuesday (2/24/2009) we heard that EMC had introduced 200GB and 400GB flash drives, and reduced the price of flash drives relative to disk drives. Other leading vendors such as HDS, IBM, HP and Sun have all introduced flash drives, and most if not all storage vendors have plans to introduce them in 2009.

Flash drives have two major benefits for reducing storage and IT energy budgets.

- The ability to perform hundreds of times more I/O than traditional disks and replace large numbers of disks that are I/O constrained. This allows the remaining data that is I/O light to be spread across fewer high-capacity, lower-speed SATA hard drives. The impact is fewer actuators, fewer drives and more efficient storage controllers, leading to lower storage and energy costs.

- The ability to increase system throughput by reducing I/O response times. Flash can have a profound effect on workloads that are sensitive to elapsed time. One EMC customer was able to avoid purchasing 1,000 System z mainframe MIPS and software by reducing batch I/O times with flash drives. Others have placed critical database tables on flash volumes and significantly improved throughput. By eliminating a large proportion of “Wait for I/O” time, Wikibon estimates that between 2% and 7% of processor power and energy consumption can be saved.
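The first benefit can be illustrated with a rough sizing calculation. The sketch below counts the drives needed to meet both a capacity target and an I/O target, first with FC drives alone and then with a flash tier plus SATA. Every figure in it (the per-drive IOPS ratings, the FC and SATA capacities, and the workload split) is a hypothetical assumption chosen for illustration, not a measurement from the Peer Incite; it also ignores RAID overhead, spares and controller limits.

```python
import math

def drives_needed(capacity_gb, iops, drive_capacity_gb, drive_iops):
    """Drives required to satisfy both the capacity and the I/O demand."""
    by_capacity = math.ceil(capacity_gb / drive_capacity_gb)
    by_iops = math.ceil(iops / drive_iops)
    return max(by_capacity, by_iops)

# Hypothetical drive characteristics (assumptions, not vendor specifications).
FC = {"drive_capacity_gb": 300, "drive_iops": 180}
SATA = {"drive_capacity_gb": 1000, "drive_iops": 80}
FLASH = {"drive_capacity_gb": 400, "drive_iops": 20000}

# Hypothetical workload: 20,000 GB and 40,000 IOPS in total, with a small
# hot set (1,000 GB) that draws most of the I/O (36,000 IOPS).
total_gb, total_iops = 20000, 40000
hot_gb, hot_iops = 1000, 36000
cold_gb, cold_iops = total_gb - hot_gb, total_iops - hot_iops

# Option 1: everything on FC drives (the count is driven by I/O, not capacity).
all_fc = drives_needed(total_gb, total_iops, **FC)

# Option 2: hot data on flash, the I/O-light remainder on SATA.
flash_count = drives_needed(hot_gb, hot_iops, **FLASH)
sata_count = drives_needed(cold_gb, cold_iops, **SATA)
tiered = flash_count + sata_count

print(f"All FC: {all_fc} drives")
print(f"Flash + SATA: {flash_count} flash + {sata_count} SATA = {tiered} drives")
```

Under these assumed numbers the all-FC configuration is sized by its I/O load rather than its capacity, which is the situation the first bullet describes. Changing the hot/cold split or the per-drive ratings changes the result substantially, so the inputs should be replaced with measured values before drawing conclusions.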

In the Peer Incite, Daryl Molitor of JC Penney articulated a clear storage strategy of replacing FC disks with flash, and meeting the rest of the storage requirements with high-density SATA disks. Daryl’s objective was to reduce storage costs and energy requirements. This bold strategy raises two questions:

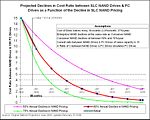

On what time scale will flash drives replace FC drives? In June 2008 I wrote a Wikibon article "Will NAND storage obsolete FC drives?" An updated version of the projection chart from that article is shown in Chart 1.

It shows that NAND storage prices are actually falling at about 60% per year. At this rate of comparative reduction, FC drives will be obsolete in less than three years. There is already significant opportunity to move some data to flash drives, and by starting now Daryl is placing himself in a good strategic position.
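The effect of a 60% annual price decline can be explored with a simple compounding model. The sketch below estimates how long it takes for flash to reach the same price per GB as disk, given a starting price premium. Only the 60% figure comes from the chart discussion above; the function name, the starting premiums and the disk price decline are hypothetical inputs. The model also looks at price per GB alone and ignores the I/O advantage of flash, so it is not a reconstruction of the projection in the chart.

```python
import math

def years_to_price_parity(premium, flash_decline=0.60, disk_decline=0.0):
    """Years until flash $/GB equals disk $/GB under constant annual declines.

    premium       -- current flash $/GB divided by disk $/GB (assumed input)
    flash_decline -- annual fractional price decline for flash, 0 <= x < 1
    disk_decline  -- annual fractional price decline for disk, 0 <= x < 1
    """
    if premium <= 1.0:
        return 0.0            # flash is already at or below disk pricing
    if flash_decline <= disk_decline:
        return math.inf       # the gap never closes
    # Each year the premium is multiplied by this ratio (less than 1).
    yearly_ratio = (1.0 - flash_decline) / (1.0 - disk_decline)
    return math.log(1.0 / premium) / math.log(yearly_ratio)

# Hypothetical starting premiums and disk price declines, for illustration only.
for premium in (5, 10, 20):
    for disk_decline in (0.0, 0.25):
        years = years_to_price_parity(premium, disk_decline=disk_decline)
        print(f"premium {premium}x, disk decline {disk_decline:.0%}: "
              f"{years:.1f} years")
```

The result is sensitive to both the starting premium and the rate at which disk prices fall, which is why the comparative rate of reduction matters more than the flash figure on its own.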

What architectural, infrastructure and/or ecosystem changes must be available to implement this strategy? Some vendors and analysts have predicted that flash technology will profoundly change the way that systems are designed, leading to flash being implemented in multiple places in the systems architecture. However, such fundamental architectural changes will also require significant changes in the operating systems, database software and even application software to exploit them. Gaining industry agreement to such changes will not happen within three years. Disk drives are currently the standard technology for non-volatile secure access to data and will remain the standard for at least the next three to five years. EMC was right to introduce flash technology as a disk drive as the simplest way to introduce the technology within the current software ecosystem.

That is not to say that technology changes are not required. Vendors and analysts have pointed out that current array architectures are not designed to cope with flash storage devices that operate at such low latencies. This leads to limited numbers of flash drives being supported within an array, and less than optimal performance from the flash drives. Vendors are moving to fix this, and this will happen within three years.

The most fundamental architectural change required is to ensure that the right data is placed on flash storage. To begin with, specific database tables and high-activity volumes are being moved to flash drives manually on an individual basis. The next stage will be to automate the dynamic movement of data to and from flash drives to optimize overall I/O performance. A prerequisite is to be able to track I/O activity on blocks of data and hold the metadata. Virtualization architectures will have a head-start in providing the infrastructure for monitoring and automated dynamic movement of data blocks.
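The prerequisite described above, tracking I/O activity per block and using that metadata to decide placement, can be sketched in a few lines. The example below is a minimal illustration and not any vendor's implementation: the class name `HeatTracker`, the decay factor and the "hottest N blocks go to flash" policy are all assumptions. A real array would also have to account for the cost of moving data, block granularity and metadata size.

```python
from collections import defaultdict

class HeatTracker:
    """Tracks per-block I/O activity and picks the hottest blocks for flash.

    Hypothetical sketch: names and policy are illustrative assumptions.
    """

    def __init__(self, flash_slots, decay=0.5):
        self.flash_slots = flash_slots    # number of blocks that fit on flash
        self.decay = decay                # weight given to older activity
        self.heat = defaultdict(float)    # block id -> decayed I/O count
        self.on_flash = set()             # blocks currently placed on flash

    def record_io(self, block_id, count=1):
        """Accumulate I/O activity for a block (the tracked metadata)."""
        self.heat[block_id] += count

    def rebalance(self):
        """Return (promote, demote) block sets, then age the counters."""
        # Rank blocks by heat; ties are broken by block id for determinism.
        ranked = sorted(self.heat, key=lambda b: (-self.heat[b], b))
        target = set(ranked[:self.flash_slots])
        promote = target - self.on_flash
        demote = self.on_flash - target
        self.on_flash = target
        # Decay old activity so that placement follows recent behavior.
        for block_id in self.heat:
            self.heat[block_id] *= self.decay
        return promote, demote

# Usage: two flash slots, four blocks with hypothetical I/O counts.
tracker = HeatTracker(flash_slots=2)
for block_id, ios in [(1, 500), (2, 20), (3, 900), (4, 5)]:
    tracker.record_io(block_id, ios)
first_promote, first_demote = tracker.rebalance()

# Block 2 becomes busy in the next interval and displaces block 1.
tracker.record_io(2, 1000)
second_promote, second_demote = tracker.rebalance()
print(first_promote, first_demote, second_promote, second_demote)
```

The decay step is one simple way to keep placement responsive to changing workloads; without it, a block that was busy long ago would hold its flash slot indefinitely.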

So which vendors will provide the flash technology that operates efficiently in a storage array and provides automated dynamic (second-by-second) data balancing? At the moment, none can. Clearly EMC has a head-start in understanding the technology, understanding customer usage and understanding the storage array requirements. The storage vendors offering virtualization are also well positioned; Compellent probably has the most versatile architecture with its unique ability to dynamically move data within a storage volume to different tiers, and IBM has broken the 1 million IOPS barrier for an SPC workload with flash storage connected to an IBM SAN Volume Controller (SVC). Other storage virtualization vendors such as 3PAR, NetApp, HP and Hitachi are also well positioned.

Action Item: The race is on. Storage executives should be exploring the use of flash drives for trouble spots in the short term on existing arrays in order to build up knowledge and confidence in the technology. For full-scale implementation, storage executives should wait for storage arrays that have been modified to accommodate low-latency flash drives and that provide automated dynamic placement of data blocks to optimize the use of flash.