
Storage Peer Incite: Notes from Wikibon’s June 8, 2010 Research Meeting

For a decade the explosion of data, and particularly of e-mail and other unstructured data, has been a huge headache for CIOs. This started with the advent of e-mail in the 1980s but became a budget issue with the addition of attached files in the 1990s. IT fought back by restricting the size of attachments and of individual e-mail boxes, forcing users to delete older e-mails. Then compliance arrived in the wake of Enron, requiring publicly traded companies to preserve those old e-mails and attached files for years. The result was an explosion of storage capacity that in extreme cases forced the construction of new data centers, just as the economy has put a huge squeeze on budgets and particularly CapEx. The lack of optimization tools for unstructured data, however, has left IT with few options.

Now, finally, good news is on the horizon in the form of highly efficient new data dedupe technologies that can be added to the storage arrays themselves. Dedupe can reduce the size of unstructured data stores by as much as 90% automatically, with no loss of data and no noticeable increase in response times. And it can be applied to primary as well as older archived data.

This can be a game changer in the storage arena. Now instead of devoting a large part of the IT CapEx budget to buying storage devices, IT can cut that growth back to a reasonable level. It is something to smile about. G. Berton Latamore

Permabit Albireo: A New Model for Primary Storage Optimization

On Monday, June 7, 2010, Permabit announced Albireo, a new technology that speeds deduplication for primary storage. Albireo is positioned as an offering to enable storage array manufacturers to compete with NetApp's Deduplication (formerly A-SIS; Advanced Single Instance Storage). NetApp has cornered the market on deduplication for primary storage by bundling deduplication into its arrays at no additional charge. This has increased the appeal of NetApp products and allowed the company to market storage efficiency effectively as a major theme.

Albireo is different in concept because it is delivered as a software development kit (SDK). While this means OEMs must do some integration, the flexibility of an all-software platform is enticing. Albireo is the first product in the primary deduplication space that promises to deliver the following:

- A flexible array of deduplication services,

- The ability to optimize file-, block- or object-based storage systems,

- Operation at the file or sub-file level,

- Inline (i.e. real-time) or post-process optimization,

- Elimination of application performance concerns,

- Scaling from a single storage controller to a cluster of storage controllers or a cluster of deduplication appliances communicating with a storage system over an industry-standard Ethernet interface.

How Does Albireo Work?

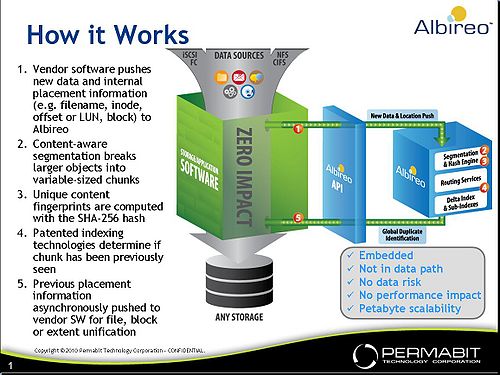

Figure 1 shows a diagram from Jered Floyd, Permabit's co-founder and CTO, who presented to the community. This diagram shows Albireo's parallel process:

- The green box in the center represents the vendor's existing storage stack. At the top of the box are the vendor's existing interfaces (e.g. block would be iSCSI or FC; file would be NFS or CIFS). Data flows from those interfaces to the existing file system stack through the vendor's data placement and data protection scheme (e.g. RAID) into their existing storage infrastructure stack. The key point is that Albireo doesn't interfere with the write or read path in any way. When an array vendor does an integration, it takes the data into the system and copies it to the Albireo API along with internal placement information (e.g. a virtual LUN or an inode offset in a file system).

- Next on the diagram is Albireo's segmentation engine which allows the system to identify boundaries within a file. Note: in a block situation the vendor may just choose a standard 4K block size. The segmentation engine breaks larger objects into variable-sized chunks to improve dedupe efficiency.

- Once data is segmented it is then 'fingerprinted' using a known hash algorithm (SHA-256), which assigns a unique identifier to determine if the data exists already in the storage system.

- Next is the 'secret sauce' of Albireo and one of the most difficult challenges in primary deduplication-- namely determining quickly whether the system has seen the information before across a large storage pool. Conventional hash tables only work well for small data sets. Across hundreds of terabytes or even multiple petabytes, a hash table will require too much overhead (e.g. memory) to maintain and will require paging to disk, which causes unacceptable performance. Albireo uses a proprietary indexing method to determine very quickly whether the data chunk has been seen before. It does this efficiently with data in memory, avoiding the need to go to disk. This index lookup operation occurs in 10 microseconds, and the end-to-end process with all latencies takes about 40 microseconds in total.

- Once the index lookup is complete-- if the information has not been seen before, it's added to the index. If it has been seen, the system makes an asynchronous callback to the vendor's system providing a 'duplicate advisory' or a 'deduplication notification' - e.g. the data that was just stored in block X was previously stored in block Y, or file A at this offset is also stored in this location. It is then the responsibility of the storage vendor's stack to free up the duplicate space and make it available to the pool as free space. Permabit claims this is a 'lightweight' integration exercise, an effort of weeks or months.
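The steps in the list above (segment, fingerprint, look up, advise) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Permabit's API: the `DedupeIndex` class, its `post` method, and the `("lun0", block_number)` placement tuples are hypothetical names; the index is an ordinary dictionary rather than Albireo's proprietary structure; and the callback runs synchronously here, whereas the notes describe it as asynchronous.

```python
import hashlib
from typing import Callable, Dict, Hashable, List, Tuple

CHUNK_SIZE = 4096  # fixed 4K blocks, the simple block-storage case


class DedupeIndex:
    """Toy in-memory fingerprint index (a plain dict, NOT Albireo's index)."""

    def __init__(self, on_duplicate: Callable[[Hashable, Hashable], None]):
        self._seen: Dict[bytes, Hashable] = {}
        self._on_duplicate = on_duplicate

    def post(self, chunk: bytes, location: Hashable) -> None:
        # Fingerprint the chunk with SHA-256, as described in the notes.
        fingerprint = hashlib.sha256(chunk).digest()
        original = self._seen.get(fingerprint)
        if original is None:
            # Never seen before: remember where the array stored it.
            self._seen[fingerprint] = location
        else:
            # Seen before: send a 'duplicate advisory' back to the array.
            self._on_duplicate(location, original)


def segment(data: bytes, size: int = CHUNK_SIZE) -> List[bytes]:
    """Split data into fixed-size chunks (the last one may be shorter)."""
    return [data[i:i + size] for i in range(0, len(data), size)]


# The "array" side: collect advisories and decide later how to free space.
advisories: List[Tuple[Hashable, Hashable]] = []
index = DedupeIndex(lambda dup, orig: advisories.append((dup, orig)))

volume = b"A" * CHUNK_SIZE + b"B" * CHUNK_SIZE + b"A" * CHUNK_SIZE
for block_number, chunk in enumerate(segment(volume)):
    index.post(chunk, ("lun0", block_number))

print(advisories)  # block 2 is reported as a duplicate of block 0
```

The dictionary is exactly the kind of conventional hash table the notes say does not scale. As a rough illustration, 100 TB of 4 KB chunks is about 25 billion fingerprints, so the 32-byte SHA-256 digests alone would occupy on the order of 800 GB before any table overhead.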

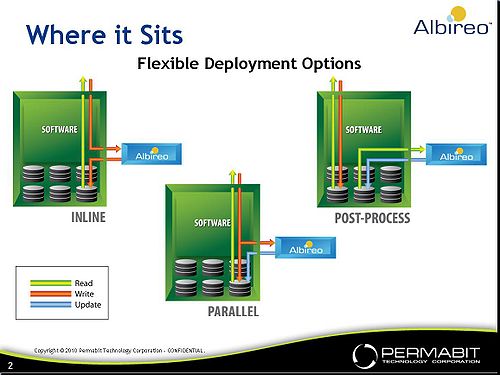

Figure 2 shows the deployment options for Albireo. There are three deployment options for the technology that span inline, parallel, and post-process. The parallel and the post-process implementations have no performance impact; inline deployment will create some latency on each request, which Permabit and its OEMs will attempt to mask with parallelism.
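The difference between the inline and post-process options can be illustrated with a toy block store. This is a sketch under assumptions, not a description of any vendor's implementation: `ToyArray` and all of its methods are hypothetical, and the index is a plain dictionary. In the parallel option, a copy of each write would be handed to the index off the write path, so it would count zero lookups on the write path, as post-process does.

```python
import hashlib
from typing import Dict


class ToyArray:
    """Hypothetical block store; names and layout are illustrative only."""

    def __init__(self) -> None:
        self.blocks: Dict[int, bytes] = {}   # physical slot -> data
        self.lba_map: Dict[int, int] = {}    # logical block -> physical slot
        self.index: Dict[bytes, int] = {}    # fingerprint -> physical slot
        self.lookups_on_write_path = 0
        self._next_slot = 0

    def _allocate(self, data: bytes) -> int:
        slot = self._next_slot
        self._next_slot += 1
        self.blocks[slot] = data
        return slot

    def write_inline(self, lba: int, data: bytes) -> None:
        # Inline: the index is consulted before the write completes.
        self.lookups_on_write_path += 1
        fingerprint = hashlib.sha256(data).digest()
        slot = self.index.get(fingerprint)
        if slot is None:
            slot = self._allocate(data)
            self.index[fingerprint] = slot
        self.lba_map[lba] = slot

    def write_plain(self, lba: int, data: bytes) -> None:
        # Post-process: land the data now, deduplicate later.
        self.lba_map[lba] = self._allocate(data)

    def post_process(self) -> None:
        # Background sweep: remap duplicates and free their slots.
        for lba, slot in sorted(self.lba_map.items()):
            fingerprint = hashlib.sha256(self.blocks[slot]).digest()
            keeper = self.index.setdefault(fingerprint, slot)
            if keeper != slot:
                self.lba_map[lba] = keeper
                del self.blocks[slot]


workload = [b"os image" * 512, b"os image" * 512,
            b"user data" * 455, b"os image" * 512]

inline, deferred = ToyArray(), ToyArray()
for lba, data in enumerate(workload):
    inline.write_inline(lba, data)
    deferred.write_plain(lba, data)

slots_before_sweep = len(deferred.blocks)
deferred.post_process()
print(len(inline.blocks), slots_before_sweep, len(deferred.blocks))
print(inline.lookups_on_write_path, deferred.lookups_on_write_path)
```

Both arrays end up holding the same two physical blocks. The inline array pays one index lookup per write, while the post-process array pays none on the write path but briefly holds all four blocks until the sweep runs.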

Changing Storage Optimization Landscape

Storage optimization technologies generally, and data deduplication and compression specifically, are moving to primary storage. Key considerations as to where these technologies apply include use case, performance, costs, and effectiveness. Wikibon has developed the concept of CORE (Capacity Optimization Ratio Effectiveness) to assess the business value of optimization solutions. Our preliminary take on CORE as applied to primary storage optimization was published last quarter, where we concluded that for primary storage, speed is critical. We received significant feedback suggesting: 1) CORE is highly dependent on use case and data type; 2) the methodology over-weights performance; and 3) we need to better account for read:write ratios in our assumptions.

Albireo has advanced our thinking on CORE in a number of ways, which were brought out in community comments. Specifically, the flexibility to support multiple use cases has clear value, and we have not explicitly accounted for that in CORE. Storwize, for example, scored very well in CORE because it has very low latency in primary storage use cases. However, there are other values that Albireo brings that we must assess in addition to latency, not the least of which is the flexibility and embedded nature of the product. As well, the costs of products like Albireo and NetApp Deduplication are 'fuzzy' because they may not be charged for explicitly. Our intent is to run Albireo through CORE once we have had an opportunity to further assess performance overheads, costs, likely deduplication ratios and use cases.

On balance, the Wikibon community was in agreement on the call that storage optimization function will increasingly become embedded as a feature of arrays. Albireo's ability to support block, file and object-- unified storage essentially-- is unique in the market and also adds business value to OEMs looking for flexibility. The keys to Albireo as we see them are: 1) the software-based model and 2) its proprietary indexing scheme. The next key for Permabit is to announce customers, which it expects to do in the second half of 2010.

Action item: Primary storage optimization has been popularized by NetApp's Deduplication and has given the company a strategic advantage relative to other array suppliers-- making deduplication a standard feature set of arrays. Products like Permabit's Albireo pivot off this trend and represent the next generation deployment model for storage optimization in primary systems. CIOs should expect this capability to be a fundamental offering of primary storage systems and push vendors to demonstrate a roadmap where data reduction IP can reside throughout the storage stack without disruption.

CIOs avoid duplication of effort and cost with embedded Dedupe for Primary Storage

In response to the necessity to manage the barrage of data and content inundating the enterprise more effectively, CIOs and CTOs have increasingly turned to vendors offering de-duplication (dedupe), compression and single instancing technologies in order to boost storage capacity and reduce costs.

With vendors touting dedupe ratios as high as 50:1 on backup and archived data and the availability of abundant horsepower to process up to 3 TB of data per hour, the case for bringing in a stand-alone appliance seemed clear. Vendors such as NetApp with A-SIS and EMC with Celerra also targeted primary storage capacity optimization. Today, dedupe and compression features and functions are an essential part of any storage or information management (IM) vendor’s list of capabilities and go-to-market strategy because they have become an essential checklist item for buyers.

Key Dedupe Trends

As the dedupe market matures, two key trends will emerge by the end of 2010:

- A focus on unified or global storage optimization to include backup, archive as well as primary storage.

- The inclusion of dedupe as an embedded feature in existing storage and information management solutions.

Raising the Dedupe Bar for Primary Storage

On June 7th, Permabit announced its Albireo High Performance Data Optimization Software to address primary storage optimization. According to Permabit CTO and Founder Jered Floyd, “Companies have been wrestling with the concept of primary data de-duplication for some time now but have been unwilling to sacrifice performance in order to achieve this benefit. In order for de-duplication to operate effectively at the primary level it must have no performance impact, no feature set loss, and no risk of data integrity. Albireo makes this possible.” Floyd shared his thoughts on dedupe and vision for the Albireo platform with the Wikibon community during a June 8th Peer Incite research meeting.

EMC, NetApp and Permabit believe dedupe will become an embedded system feature, and even a commodity feature, with affordable price points and little impact on overall performance.

Floyd addressed participants' concerns regarding what he referred to as “Bump in the Wire” technologies that slow processing speeds. “Albireo Scalable Data Reduction architecture supports both fixed and variable block deduplication, with fixed block sizes down to 4K. In variable block deduplication, data is analyzed more intelligently, in a “content aware” manner, resulting in more efficient deduplication. For variable block deduplication, Albireo utilizes specific content “scanners” to identify and optimize deduplication of objects within files (e.g., Office® documents, images, zip files, backup files, VM).”
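Why variable block deduplication can be more efficient than fixed block is easiest to see with a small experiment. The sketch below is a generic content-defined chunker, not Albireo's content "scanners", whose workings are not described in these notes. The window size, the boundary mask, and the use of CRC-32 as the boundary hash are all assumptions chosen for brevity. It inserts a single byte at the front of a file and counts how many chunk fingerprints still match.

```python
import hashlib
import random
import zlib
from typing import Dict, List, Set, Tuple

WINDOW = 16    # bytes of trailing context examined at each position (assumed)
MASK = 0x3FF   # cut when the low 10 hash bits are zero: ~1 KiB average chunk


def fixed_chunks(data: bytes, size: int = 4096) -> List[bytes]:
    """Cut at fixed offsets, regardless of content."""
    return [data[i:i + size] for i in range(0, len(data), size)]


def variable_chunks(data: bytes) -> List[bytes]:
    """Cut wherever the trailing WINDOW bytes hash to a boundary value."""
    chunks, start = [], 0
    for end in range(WINDOW, len(data) + 1):
        if zlib.crc32(data[end - WINDOW:end]) & MASK == 0:
            chunks.append(data[start:end])
            start = end
    if start < len(data):
        chunks.append(data[start:])
    return chunks


def fingerprints(chunks: List[bytes]) -> Set[bytes]:
    return {hashlib.sha256(c).digest() for c in chunks}


rng = random.Random(0)  # seeded so the run is repeatable
original = bytes(rng.getrandbits(8) for _ in range(64 * 1024))
edited = b"X" + original  # a one-byte insertion at the front of the file

results: Dict[str, Tuple[int, int]] = {}
for name, chunker in (("fixed", fixed_chunks), ("variable", variable_chunks)):
    before = fingerprints(chunker(original))
    after = fingerprints(chunker(edited))
    results[name] = (len(before & after), len(before))
    print(f"{name}: {results[name][0]} of {results[name][1]} chunks still match")
```

With fixed blocks, the insertion shifts every block boundary, so no block matches its earlier version. With content-defined boundaries, the cut points move with the data, so every chunk after the first is unchanged and deduplicates against the original.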

Futures and Concerns

How quickly storage and IM vendors or OEMs will embed deduplication functionality into their solutions remains to be seen. Stand-alone solutions such as Data Domain, now part of EMC, have sold extremely well. According to IT industry research sources, however, adoption of existing deduplication and compression technologies has yet to reach even 50% of enterprises, and adoption of primary storage dedupe is lower still, leaving the market wide open for a variety of approaches (in-line, post-processing, unified, etc.). Meanwhile, other storage optimization vendors such as Ocarina Networks sell appliances today, but one of Ocarina's blog posts suggests the company sees dedupe technology as an embedded capability in the future.

Bottom line

Despite the immaturity of the dedupe market in general, thus far the potential achievable results are compelling enough to drive a robust market populated with many innovative products and advanced features for which earlier adopters have been more than willing to pay a premium. However, as more vendors adopt and develop de-duplication functionality and more competition enters the market, prices will level off and more products will include embedded storage optimization capabilities. By the end of 2011, storage vendors in particular will have to include embedded dedupe for primary storage as part of their storage optimization offering, or they will not make buyer short lists.

Action item: As de-duplication technologies become more widely available by the end of 2011, storage optimization will be a fundamental offering embedded into storage arrays. CIOs and CTOs should expect it and demand it from array suppliers.

Integration Alternatives for Storage Optimization

In our research on integration of the storage optimization stack into storage arrays, Wikibon concluded that primary storage optimization technologies such as Permabit's Albireo de-duplication should be integrated into the storage array. However, there are alternative places in the infrastructure where the same technologies could be integrated, including:

- Integration into the virtualization layer:

- Products such as IBM’s SVC, HP’s SVSP, Hitachi’s USP and now EMC’s VPLEX have a virtualization layer, and can also virtualize heterogeneous arrays. For all those products except VPLEX, the system also delivers additional storage optimization functionality such as thin provisioning and space-efficient copies. This virtualization layer is a sensible place to integrate storage optimization technologies.

- Virtualization systems like the NetApp WAFL System and systems from 3PAR and Compellent and IBM’s XIV all include a virtualization layer and other space-efficient technologies. NetApp already includes the A-SIS de-duplication feature, for free! Implementation of space-saving features like de-duplication is usually significantly easier in a virtualized environment, as "data holes" can be more easily filled.

- In theory this technology could be implemented at the Hypervisor level. This would add a lot of additional code into what is theoretically meant to be a thin layer. A more promising approach would be to implement thin copy techniques that would allow nearly identical copies of desktop and server operating systems to share a single copy of duplicate data in the first place.

- Integration into the database stack:

- Some storage optimization techniques (such as Oracle’s Columnar Compression in Exadata) are included in the database stack. The advantage of placing storage optimization in the database stack is that data can be compressed at a higher level, and less data has to be moved around. This is particularly useful for distributed databases. The other advantage is that the storage optimization can be implemented before security technologies (storage optimization after encryption is an oxymoron).

- The disadvantage of this approach is that an increasing percentage of data is unstructured and not held in databases. The cost overheads of databases are significantly higher than the cost overheads of array software.

- Integration into the application stack:

- Large ISVs such as SAP and Microsoft are under increasing pressure to reduce the cost of the computing infrastructure to run their applications. With SAP’s purchase of Sybase and Microsoft’s ownership of SQL Server, it is likely that they will offer a software alternative. This could be particularly appealing for small and very small systems.

The most obvious place to start with integrating storage optimization is in the storage array, as the array is almost always shared among many servers. With the advent of clustered storage controllers, this approach is likely to be the simplest to manage and the most cost effective. Other approaches will also have niches but are unlikely to gain major traction.

Action item: There will be a rich set of alternatives for implementing storage optimization, but the initial focus should be the storage arrays. Look for storage vendors of high integrity and reputation that can perform the technology integration and testing for you. Ensure these vendors have in place the vision, infrastructure and roadmap to get the job done.

The only alternative not to take is do-it-yourself integration, unless there is a compelling short-term business case.

Data Security and De-dupe Interoperability

Duplication of anything (data, processes, business functions, etc.), if not specifically designed, means cost and risk to the organization. For data, and the growing tidal wave of databases, content repositories, file shares, backup files, etc., the requirement to eliminate duplicate data across all significant applications must be sewn into the fabric of information management policy. Storage administrators, data architects, information security managers, and application heads collectively own the responsibility for ensuring efficient information management policy throughout an organization, and the elimination of duplicate data in online storage, backup, and archive is a primary goal of this policy. The requirements for data security and privacy protection are owned in similar ways. Here are a few key points to consider:

- The use of data de-duplication features in storage arrays is one way of putting good information management policy into practice.

- The same can be said about the use of data encryption and key management. We've seen important moves in this direction in recent years from most storage and switch providers, including IBM, EMC, 3PAR, Emulex, HP, and others.

- The placement of encryption and de-duplication features in the information lifecycle becomes an important organizational and strategic consideration when technologies like Albireo become embedded into arrays, and solutions from firms like Ocarina and others hit the market.

De-duplication features are now becoming embedded into the IT infrastructure / storage stack. At the same time, the use of data encryption features at the desktop, application, file, and database level is increasing as the threats to information security and privacy continue to plague organizations. It is critical for most use cases, however, that encryption occur after data is de-duplicated to ensure overall integrity of the data. This means that practitioners must ensure that they consider the impacts on their data security and protection strategies as vendors leverage their R&D for de-duplication and make these capabilities part of both the infrastructure and application stacks.
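The ordering constraint can be demonstrated with a toy example. The sketch below assumes a cipher that randomizes every write with a fresh nonce, which is the common case; `toy_encrypt` is a hypothetical stand-in built from SHA-256 purely for illustration and must not be used as real encryption. Ten identical blocks are fingerprinted before and after encryption.

```python
import hashlib
import os
from typing import List


def toy_encrypt(key: bytes, plaintext: bytes) -> bytes:
    """Illustrative stream cipher: random nonce + SHA-256 keystream.

    NOT real cryptography; it only mimics the property that the same
    plaintext encrypts to a different ciphertext on every write.
    """
    nonce = os.urandom(16)
    stream = bytearray()
    counter = 0
    while len(stream) < len(plaintext):
        stream += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return nonce + bytes(p ^ s for p, s in zip(plaintext, stream))


def unique_chunks(chunks: List[bytes]) -> int:
    """Number of distinct SHA-256 fingerprints, i.e. blocks that must be stored."""
    return len({hashlib.sha256(c).digest() for c in chunks})


key = b"k" * 32
blocks = [b"quarterly report " * 240] * 10  # ten identical blocks of about 4 KB

dedupe_then_encrypt = unique_chunks(blocks)
encrypt_then_dedupe = unique_chunks([toy_encrypt(key, b) for b in blocks])

print("blocks to store if de-duplicated first:", dedupe_then_encrypt)
print("blocks to store if encrypted first:   ", encrypt_then_dedupe)
```

De-duplicating first leaves a single block to encrypt and store. Encrypting first makes every copy look unique, so fingerprint-based de-duplication finds nothing to remove.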

Action item: Organizations should create an information management strategy that meets both the requirements to protect the security and privacy of data end-to-end, and to eliminate duplicate data. CISOs who own encryption and CIMOs who own information management should collaborate with storage teams, application heads, and data architects to avoid type A errors that preclude the interoperability of encryption and de-duplication practices of an information management policy.

Deduplication is Becoming Table Stakes for Primary Storage

In large part due to NetApp’s success with dedupe on primary storage (i.e. A-SIS), there is strong demand for storage optimization technologies to be embedded into primary storage arrays. The market is rapidly moving toward placing optimization intellectual property (e.g. compression, dedupe) as a fundamental component of arrays and delivering optimization as a transparent and non-disruptive function.

All major primary storage OEMs will have to have a story here. Today, as evidenced by NetApp, it is an advantage. By 2011, primary storage optimization will become a fundamental market requirement. By 2012, primary vendors without this capability will be at a disadvantage.

Permabit's Albireo announcement underscores the pace of this trend, and we expect to see OEMs adopting the technology or developing strategies that are comparable. This capability will rapidly move from nice to have to glaring white space.

Action item: The need for storage optimization reached front and center with the economic crisis of 2008 and 2009. It is now rapidly becoming an expected capability in 2010 as efficiency is becoming an important theme for users. Major storage suppliers must endeavor to deliver primary storage optimization function that is 1) embedded and transparent; 2) non-disruptive-- i.e. IP placed throughout the stack and 3) fast. The time to market imperative is now.

Commoditizing De-duplication

Getting Rid of Data Made Easy

Embedding and optimizing de-duplication for block and file primary storage solutions is an important milestone in delivering an improved information management stack to end-users. With data duplication ratios, particularly for unstructured data, the fastest growing segment of the digital universe, still hovering at 10:1-to-20:1, the availability of fast and efficient storage optimization capability as a software library (aka a commodity) for primary storage original equipment manufacturers is an important turn in the road for de-duplication and effective enterprise information management practices.
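Dedupe ratios and percentage savings are two views of the same number, and it helps to convert between them. A ratio of r:1 means the data occupies 1/r of its original capacity, so the saving is 1 - 1/r. The short sketch below (the function name is illustrative) applies this to the 10:1 and 20:1 ratios quoted above and to the 50:1 backup figure cited elsewhere in these notes.

```python
def savings_from_ratio(ratio: float) -> float:
    """Fraction of capacity saved at a dedupe ratio of `ratio`:1."""
    return 1.0 - 1.0 / ratio


for ratio in (10, 20, 50):
    print(f"{ratio}:1 -> {savings_from_ratio(ratio):.0%} less capacity")
```

The returns diminish quickly: 10:1 already corresponds to the "as much as 90%" reduction mentioned in the introduction, while doubling the ratio to 20:1 adds only five more percentage points.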

For end-users, CTOs, CIOs and application heads alike, dedupe as a software commodity means storage efficiency and optimization can be obtained as a basic feature from storage providers, no longer requiring a bump-in-the-wire appliance. De-dupe is finally going the way of data encryption and compression – no longer a proprietary inline device, or a nice-to-have or merely desirable feature. The future of data de-duplication is as a basic, mandatory, check-box function designed to be embedded first into the infrastructure stack and shortly thereafter further up into applications.

Market Timeline

For storage OEMs, the market timeline looks something like this:

- Today inline dedupe as a no/low cost, high performance basic feature provides strategic differentiation in the storage market,

- By 2011, it is table stakes to get into the market, and,

- By 2012, if you don’t provide an integrated, invisible, and optimized solution, you’re at a significant competitive disadvantage.

But the commoditization of de-dupe and its general availability as a library of capability to the storage marketplace doesn’t solve the problem of data over-retention, poor records retention policies, or ineffective information management programs. Over-retention of data is still a big problem and risk for most enterprises. However, de-duplication optimized for and embedded with primary storage solutions is an important CIMO tool for improving information practices across the enterprise.

It’s a must have for getting rid of unwanted and unnecessary data.

Action item: Get a plan together to aggressively get rid of unnecessary data and storage. Make de-duplication a commodity part of that plan for all storage use cases – primary/OLTP, backup, and archive. Measure the success of the initiative to effect ongoing information management improvement.

Footnotes: Peer Incite on Storage Optimization