Storage Peer Incite: Notes from Wikibon’s January 22, 2013 Research Meeting


Multi-petabyte and exabyte sized data repositories, until now largely the province of large government agencies and cloud/social media service providers like Facebook and Shutterfly, are quickly coming to large and even medium-sized companies, Russ Kennedy, VP of Product Strategy at Cleversafe, told the Wikibon Peer Incite community on January 22. Increasing numbers of companies are seeing data quantities expand rapidly as part of a major shift in the way companies operate to a more networked, social media approach. Databases that once were mainly written documents, such as e-mail repositories, now contain an increasing percentage of very large audio, video, and still image files, even as the total volume of files increases.

Bert Latamore, Editor

Hyperscale Storage: Not If, When

On January 22, 2013, the Peer Incite gathered to discuss commercial applications and hyper-scale storage. Joining the community was Russ Kennedy, Vice President at Cleversafe, winner of the Wikibon CTO award for the Best Storage Technology Innovations of 2009.

Hyperscale storage was discussed previously in the July 26, 2011 Peer Incite: Cloud Archiving Forever without Losing a Bit, during which Justin Stottlemyer, the Storage Architect Fellow at Shutterfly, reviewed the drivers behind the company’s selection of Cleversafe. As companies such as Facebook, YouTube, and Shutterfly know only too well, the growth in the quantity and the increase in the quality of digital image content, both still images and video, are major drivers behind the continued growth in demand for hyperscale storage. At Shutterfly, the storage requirements have doubled in only 18 months, reaching 80 petabytes.

Demand for hyperscale storage is not confined to Web 2.0 social-media and photo-sharing sites, however. Agencies that monitor weather conditions and forecast weather-related disasters have hyperscale storage requirements, as do governmental security and defense applications, including video surveillance and satellite imaging. Unfortunately, due to security concerns and compliance requirements, these applications and requirements are rarely discussed in the public domain. However, both social media and government security and defense applications are predictors of the coming demand for hyperscale storage in more traditional businesses.

Businesses have historically transmitted information in written form, such as emails and written documents, but increasingly are leveraging a combination of video and text to improve the communication and retention of information. Video has become a core component of the educational system, particularly for online corporate education and rapidly growing online universities and trade schools. Even traditional universities with a large campus for in-person classrooms are migrating classes to video and making more of their classes available completely online through portals such as Coursera.

Multi-site collaboration has also become a core requirement for organizations with large product design and software application development projects. Tight deadlines and limitations in human capital availability make it necessary to collaborate across locations and time zones while working on large repositories of design content or application code. Cloud-based service providers have begun to deliver this infrastructure on demand to support these follow-the-sun development requirements.

Once storage requirements reach hyperscale, however, traditional RAID-based and replication-protected storage architectures will become too expensive and too unmanageable to meet the requirements of organizations. This has led Facebook to develop its own IT infrastructure stack, including storage, and to encourage others to join it in decreasing the cost of supporting and managing hyperscale compute and storage requirements through the Open Compute Project. Others have turned to companies such as Cleversafe to meet their requirements.

Forecasting the breaking point of traditional storage architectures may be more art than science, particularly when assessing the requirements in the multidimensional moving target of growing capacity, changes in data access density, increased write requirements, and limits on IT budgets. That said, evaluating and preparing for the transition to a new architecture for hyperscale storage is best done well before the requirement arrives, and if your organization is already managing petabytes of data, the best time to evaluate new architectures may already be in your rear-view mirror.

Hyperscale archival storage begets additional storage requirements with different performance demands. Particularly within the realm of digital image content, metadata is becoming increasingly important. From consumer-focused, event-specific photo journals, such as memory albums, to news-driven content retrieval requests and security and defense applications, second-order products are being built on top of massive archives. These applications increase the frequency and the variability of requirements to rapidly locate images and video. The need to search, sort, arrange, and aggregate content rapidly will drive demand for an increased volume of metadata and may require separate architectures for managing data and metadata. For many hyperscale applications, it is perfectly acceptable for large, read-intensive image and video archives to deliver the first byte of data within a few hundredths of a second, but the metadata search may need to be accomplished within a few milliseconds, or even microseconds in the ad-tech industry, for example.

Action item: There is little doubt that regardless of industry, modern enterprises will become increasingly dependent upon data-intensive applications for product development, customer acquisition, employee and customer education, and service delivery. Well before reaching hyperscale requirements, organizations should evaluate, test, and implement proof-of-concept installations to avoid crash-and-burn scenarios that result from waiting too long.

CIOs must watch hyperscale trends and jump when ready

On January 22, 2013, the Wikibon Community participated in a Peer Incite discussion with Cleversafe around the concept of commercial applications and hyperscale storage. It was an intriguing discussion about the challenges that face providers as they grow up to and beyond the petabyte scale, a scenario that is taking place with more companies more often.

I will admit that I was initially skeptical of the direct lessons that mainstream CIOs could take away from a discussion on hyperscale storage, a problem that initially seems to be confined to the very large enterprise and very large provider space on the scale of Facebook, Shutterfly, and Zynga. After all, those companies have massive storage and architectural requirements that most mainstream CIOs will never see.

However, after a discussion with my Wikibon colleagues and a couple of days to ponder the question, I see some clear lessons and benefits that CIOs can take away from the kinds of hyperscale projects on which companies like Cleversafe work.

Most importantly, CIOs must maintain an open mind as times change and as new opportunities and offerings make their way to market. Further, CIOs are constantly looking for ways to improve the services they offer, whether such improvements come from lower costs, higher availability, or simply more capability and capacity. Lessons learned in the hyperscale space can help make these improvements a reality in the midmarket. Case in point: the drive toward commoditization of hardware. It has been partially through lessons learned by the likes of Google and Facebook that commodity server-based availability mechanisms have come into being. As a result of these educational efforts, we’re also seeing a drive toward hyperconverged architectures (e.g., Nutanix and Simplivity) that don’t have any single point of failure, but that embrace failure as just another operational event through the deployment of redundant hardware. We’re seeing similar trends as storage companies (e.g., Tegile, Tintri, Pure Storage, and many others) embrace commodity hardware in their designs. Of course, it doesn’t hurt that processors have become insanely powerful in recent years.

In keeping an open mind about the future, it’s also important to remember that the way things work today is not necessarily the way things will work tomorrow. After all, in 1999, who would have thought that we could run 100 servers worth of workload on just 5 physical hosts with room to spare? Hyperscale architectures are harbingers of the kinds of workloads that many more CIOs may be running in the future, particularly those who are running data-intensive workloads. Today, for example, many organizations rely on replication-based performance and availability management mechanisms, but as organizations approach hyperscale-level workloads, this management method becomes untenable. There is a point at which the workloads simply become too great for this operational paradigm and need to be shifted to leverage operational methods inherent in the hyperscale space. CIOs must keep an eye on their environments and ensure ongoing understanding of workload needs.

Politically, CIOs need to understand that their CxO partners keep watch on their industries to ensure that they’re staying current, and many will continue to hear the virtues of “cloud” and “scale.” Even if they’re not 100% certain about how to interpret what they hear, they will understand the benefits, and CIOs will be encouraged to consider these options as time goes on. Therefore, CIOs must remain abreast of trends that may be leveraged.

Larger organizations will probably find it easier, ultimately, to take advantage of hyperscale opportunities and be able to compete at that scale. Mid-sized and smaller organizations, though, will find it necessary to partner with a service provider that is already at the hyperscale level, since getting there on their own will prove too costly.

Action item: In essence, the primary takeaway for CIOs with regard to hyperscale is to keep an eye on the market and the trend and ensure that the organization is always leveraging tools that are appropriate for the business at the present time. However, do so with caution. If you jump too soon, you risk bearing the cost of too much trial and error, but if you never jump, you become a roadblock in the eyes of many. For smaller organizations that need to partner with a provider, always make sure that you choose carefully and understand thoroughly any service level agreements, contracts, and security arrangements that are put into place by your provider.

Software-led Storage: Hyperscale Storage Requirements

Hyperscale and Big Data are two trends that necessitate new methods of protecting data. Specifically, traditional approaches to data backup are inappropriate for large, scale-out infrastructures such as those being popularized by Internet giants (e.g., Facebook), cloud service providers, and many government agencies. Simplified storage approaches based on object stores, combined with erasure coding as a means of protecting large quantities of data, will dramatically lower storage costs. Moreover, flash will play an increasingly important role in this new storage paradigm to house metadata and enable "in-time" (i.e., near real-time) analytics to be performed on large data repositories.

As Data Grows Recovery Becomes Impossible

Data today is backed up by taking copies. The number of copies, whether snapshots or physical copies, is growing out of control and increasing complexity. But as data volumes grow, the real problem becomes one of recovery.

The key questions for hyperscale storage are:

- How are petabytes or exabytes of data backed up?

- How are petabytes or exabytes of data restored?

- How are petabytes or exabytes of data accessed?

The simplest analysis of the elapsed time and telecommunication costs for transporting magnetic media will show that traditional methods of backup are prohibitive and over time will become unsustainable. As costs decline and capacities rise due to Moore’s Law, elapsed time only becomes more problematic because access times and transfer rates from magnetic media barely improve.

The bottom line is that as data volumes grow, it takes too long to back up and recover data, increasing costs and the probability of data loss.
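The elapsed-time part of that analysis can be sketched in a few lines. This is a rough illustration only: the link speeds below are assumed values chosen for the example, not figures from the Peer Incite, and real transfers rarely sustain full line rate.

```python
def transfer_days(capacity_bytes: float, throughput_bytes_per_s: float) -> float:
    """Days needed to move capacity_bytes at a sustained throughput."""
    seconds = capacity_bytes / throughput_bytes_per_s
    return seconds / 86_400  # seconds per day


PB = 10**15  # one petabyte (decimal)

# Hypothetical sustained rates, assumed purely for illustration.
rates = {
    "1 Gbit/s link": 1e9 / 8,    # bytes per second
    "10 Gbit/s link": 10e9 / 8,  # bytes per second
}

for name, rate in rates.items():
    print(f"{name}: {transfer_days(PB, rate):.1f} days per petabyte")
```

Under these assumptions a single petabyte takes roughly three months to copy over a fully utilized 1 Gbit/s link and more than a week at 10 Gbit/s, before any restore is attempted.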

How to Protect a Petabyte

As one Wikibon practitioner phrased the problem: "How do you backup a petabyte? You don't."

Currently, the only technology available to address these issues is erasure coding, which uses compute cycles to split up and transform the data into n slices, with the ability to recover the data from only m of those slices (where n > m). The slices can be distributed locally (e.g., within a data center) or geographically, and the most advanced implementations combine enough redundant slices (n - m) so that the data can be recovered either locally or remotely.
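The capacity trade-off between copies and slices follows directly from n and m. The sketch below treats full replication as the special case m = 1; the 10-of-16 configuration is a hypothetical example, not a Cleversafe default.

```python
def expansion_factor(n: int, m: int) -> float:
    """Raw capacity consumed per unit of user data when m of n slices suffice."""
    if not 0 < m <= n:
        raise ValueError("need 0 < m <= n")
    return n / m


def tolerated_losses(n: int, m: int) -> int:
    """Number of slices that can be lost while the data stays recoverable."""
    return n - m


# Both configurations are assumptions chosen for illustration.
schemes = {
    "three full copies": (3, 1),    # replication: any one copy is enough
    "10-of-16 dispersal": (16, 10),
}

for name, (n, m) in schemes.items():
    print(f"{name}: {expansion_factor(n, m):.1f}x raw capacity, "
          f"survives {tolerated_losses(n, m)} lost slices")
```

In this example the dispersed layout consumes about half the raw capacity of three copies while tolerating more simultaneous losses.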

Traditional methods of reading hyperscale volumes of data from disk to find and analyze patterns are no longer viable. The most important challenge that has to be addressed is how to create metadata for each object that describes where it is, what it is, when it was stored, and how it is related to other objects. This metadata must be accessible at very high speed. As such, it has to be held centrally (mainly in non-volatile memory). The huge benefit of getting this right is that the object store can be used for multiple purposes: a single store that can contain data warehouses, backups, and multiple archives.
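As a rough illustration of what such per-object metadata might look like, the sketch below keeps the four attributes named above in a small in-memory index. The class names, field names, and sample identifiers are all hypothetical; a production system would hold this index in flash or other non-volatile memory rather than in a Python dictionary.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ObjectMetadata:
    object_id: str                     # flat, location-independent name
    locations: tuple[str, ...]         # where it is
    content_type: str                  # what it is
    stored_at: datetime                # when it was stored
    related_ids: tuple[str, ...] = ()  # how it relates to other objects


class MetadataIndex:
    """Hypothetical in-memory index answering metadata queries without touching the data."""

    def __init__(self) -> None:
        self._by_id: dict[str, ObjectMetadata] = {}
        self._by_type: dict[str, set[str]] = {}

    def add(self, meta: ObjectMetadata) -> None:
        self._by_id[meta.object_id] = meta
        self._by_type.setdefault(meta.content_type, set()).add(meta.object_id)

    def get(self, object_id: str) -> ObjectMetadata:
        return self._by_id[object_id]

    def find_by_type(self, content_type: str) -> list[str]:
        return sorted(self._by_type.get(content_type, set()))

    def related_to(self, object_id: str) -> list[ObjectMetadata]:
        """Metadata of indexed objects that object_id declares a relation to."""
        ids = self._by_id[object_id].related_ids
        return [self._by_id[i] for i in ids if i in self._by_id]


index = MetadataIndex()
when = datetime(2013, 1, 22, tzinfo=timezone.utc)
index.add(ObjectMetadata("img-001", ("site-a/node-3",), "image/jpeg", when))
index.add(ObjectMetadata("vid-001", ("site-b/node-1",), "video/mp4", when,
                         related_ids=("img-001",)))
print(index.find_by_type("image/jpeg"))
```

Keeping the index separate from the objects themselves is what lets the same store serve several purposes: each use queries the metadata differently while the underlying data stays in place.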

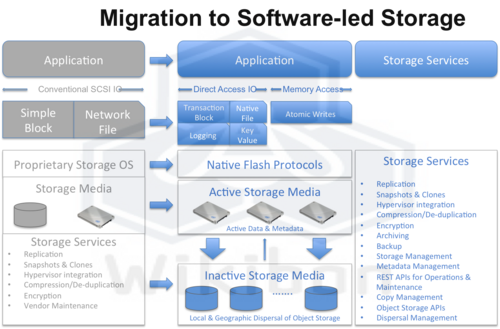

The combined technologies of erasure coding, object storage, and high-performance, flash-resident metadata are the only technologies available (at the moment) that will address the three challenges above. These will be integrated as services within a software-led storage architecture as shown in Figure 1. The potential benefit is to reduce the amount of data stored by a factor of five to ten times, dramatically improving storage efficiencies while at the same time delivering substantially more business value.

Action item: Hyperscale storage must use erasure coding techniques to do the following:

- Avoid backing up petabytes/exabytes of data. Redundancy should be built into how data is stored locally and remotely.

- Avoid restoring petabytes/exabytes of data. Restores should be completely integrated into the storage system so that no change of access method is required.

- Use an object storage foundation for storing and accessing petabytes/exabytes of data.

This software-led storage model will allow storage services to deliver, at the lowest cost, self-protecting and self-restoring data together with high-performance metadata that is likewise self-protecting and self-restoring, and to allow multiple uses of historic data.

Ensuring High Reliability and Availability for Hyperscale Storage Systems

When storage requirements approach the hyperscale level, drive failures and errors present a constant challenge. An organization storing a petabyte or more of data can expect hundreds of drives to fail every year, requiring week-long rebuild times. Knowing that a portion of its drives are certain to fail during any given interval, how can an organization protect against data loss and ensure that data is online and remains available? The traditional approach to guarding against these failures is replication. However, at the petabyte level and beyond, creating copies of the data eventually becomes impossible from a technical, financial, and organizational standpoint.

Information dispersal is an alternative approach for achieving high reliability and availability for large-scale unstructured data storage and is based on the use of erasure codes as a means to create redundancy for transferring and storing data. An erasure code transforms a message of k symbols into a longer message with n symbols, such that the original message can be recovered from a subset of k of the n symbols. Simply speaking, erasure codes use advanced math to create “extra data” that allows a user to need only a subset of the data to recreate it.
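To make the k-of-n idea concrete, here is a toy erasure code built on polynomial interpolation over a small prime field, in the style of Reed-Solomon codes. It is an illustrative sketch only and is not claimed to be the code Cleversafe uses; the field size, function names, and sample message are assumptions made for the example.

```python
P = 257  # smallest prime above 255, so every byte value is a field element


def _interpolate_at(points: list[tuple[int, int]], x: int) -> int:
    """Evaluate at x the unique polynomial of degree < len(points) through points (mod P)."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        # pow(den, P - 2, P) is the modular inverse of den (Fermat's little theorem).
        total = (total + yi * num * pow(den, P - 2, P)) % P
    return total


def encode(message: bytes, n: int) -> list[tuple[int, int]]:
    """Turn k message bytes into n (position, value) symbols; the first k are the message."""
    k = len(message)
    if not 1 <= k <= n <= P:
        raise ValueError("need 1 <= len(message) <= n <= 257")
    data_points = list(enumerate(message))
    return [(x, _interpolate_at(data_points, x)) for x in range(n)]


def decode(symbols: list[tuple[int, int]], k: int) -> bytes:
    """Rebuild the k-byte message from any k surviving symbols with distinct positions."""
    if len(symbols) < k:
        raise ValueError("not enough symbols to recover the message")
    points = symbols[:k]
    return bytes(_interpolate_at(points, x) for x in range(k))


message = b"hyperscale"             # k = 10 symbols
codeword = encode(message, 16)      # n = 16 symbols
survivors = codeword[6:]            # lose the first six symbols, including original data
print(decode(survivors, len(message)))
```

Any 10 of the 16 symbols determine the same degree-9 polynomial, which is why the message can be rebuilt even when several of the original symbols are among those lost.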

An Information Dispersal Algorithm (IDA) builds on the erasure code and goes one step further. It splits the coded data into multiple segments (called slices) that can then be stored on different devices or media to attain a high degree of failure independence. For example, using erasure coding alone on files on your computer won’t do much to help if your hard drive fails, but if you use an IDA to separate pieces across machines, you can now tolerate multiple, simultaneous failures without losing the ability to reassemble that data.

Information dispersal eliminates the need for replication. Because the data is dispersed across devices, it is resilient against natural disasters or technological failures, such as drive failures, system crashes, and network failures. And because only a subset of slices is needed to reconstitute the original data, multiple simultaneous failures across a string of hosting devices, servers or networks, will still leave data availability intact.
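The availability argument can be checked with a short binomial calculation. The sketch assumes that each device holding a slice is up independently with the same probability, which real deployments only approximate, and the 90% figure and the 10-of-16 layout are hypothetical values chosen for illustration.

```python
from math import comb


def availability(n: int, k: int, p_up: float) -> float:
    """Probability that at least k of n independently available slices are reachable."""
    return sum(
        comb(n, i) * p_up**i * (1 - p_up) ** (n - i)
        for i in range(k, n + 1)
    )


# Assumed per-device availability, deliberately pessimistic for illustration.
p_up = 0.9

replicated = availability(3, 1, p_up)    # three copies, any one suffices
dispersed = availability(16, 10, p_up)   # hypothetical 10-of-16 dispersal

print(f"three copies:       {replicated:.6f}")
print(f"10-of-16 dispersal: {dispersed:.6f}")
```

Under these assumptions the dispersed layout comes out slightly ahead of three copies on availability while storing 1.6 times the data instead of 3 times.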

Action item: Storage requirements for most commercial organizations have not yet reached hyper-scale, but will within the next several years. CIOs and storage-decision makers at organizations managing large amounts of unstructured data should be alert to signs that their storage infrastructures are reaching the reliability and availability “breaking point:” reliability and availability exposure, the need to constantly add admin staff to fight fires, and rapidly increasing hardware and facilities costs.

Footnotes: For more on information dispersal, visit www.cleversafe.com.

Cleversafe Tackles Big Data with Software-led Hyperscale Computing Paradigm

For the moment, the world’s largest data repositories are measured in multiple petabytes which have already strained the limits of traditional IT architectures and infrastructures. The move to hyperscale computing, advanced by the likes of Amazon, Facebook and Google, promises to change the way large IT shops and cloud service providers manage and deliver their data in the near future when exabytes of data will need to be served up to users or customers in a fraction of a second.

Need for Speed

The cost of commodity servers and storage is continually dropping. However, demand for additional compute power and, in particular, high-performance storage, along with operational expenses, is outpacing any savings advantage. But the bigger issue is speed or time to first bid or byte.

Amazon estimates that just a one-second delay in page load can cause a 7% loss in customer conversions. In a related estimate, Amazon found that every 100 milliseconds of latency cost them 1% in sales. Google found an extra 0.5 seconds in search page generation time dropped traffic by 20%. A broker could lose $4 million in revenues if their electronic trading platform is 5 milliseconds behind the competition.

Meanwhile, backup windows are shrinking, or no longer exist, in a world that demands 24x7 access to information and services. Moreover, backing up, replicating, or mirroring several petabytes of data is neither easy nor cost-effective with traditional approaches.

Information Dispersal Approach

During Wikibon’s Peer Incite focused on Commercial Applications and Hyper-Scale Storage, Russ Kennedy, Vice President of Product Strategy, Marketing and Customer Solutions for Cleversafe discussed the merits of their approach to economically managing very large object-stores.

Kennedy explained how Cleversafe’s software and appliances leverage existing storage assets whether in a single data center or geographically dispersed throughout the enterprise. “Storage utilizing Cleversafe technology is based on a simple or named object approach that efficiently stores billions of data objects in a single namespace and exposes the data through REST, a standard HTTP based protocol.”

Traditional storage file systems such as NAS expose data via NFS and CIFS protocols. This approach works well for provisioning space for individual users or calling up specific objects or documents but begins to run into performance bottlenecks when billions of objects are in the store. While file-system-based storage is closely tied to location, object-based storage overcomes this limitation by decoupling data from its physical placement in the storage system.
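The decoupling of name from placement can be sketched as follows. This is a hypothetical toy, not Cleversafe's design: callers use only the object name, and the store derives placement from a hash of that name. The node names and the simple modulo placement are assumptions; production systems use placement schemes that cope better with nodes being added or removed.

```python
import hashlib


class FlatObjectStore:
    """Toy object store with a single flat namespace and hash-derived placement."""

    def __init__(self, nodes: list[str]) -> None:
        self._nodes = nodes
        self._data: dict[str, dict[str, bytes]] = {node: {} for node in nodes}

    def _node_for(self, name: str) -> str:
        # Placement is computed from the name, so callers never supply a location.
        digest = hashlib.sha256(name.encode("utf-8")).digest()
        return self._nodes[int.from_bytes(digest[:8], "big") % len(self._nodes)]

    def put(self, name: str, data: bytes) -> None:
        self._data[self._node_for(name)][name] = data

    def get(self, name: str) -> bytes:
        return self._data[self._node_for(name)][name]


store = FlatObjectStore(["node-a", "node-b", "node-c"])  # hypothetical node names
store.put("photos/2013/album-42/cover.jpg", b"...image bytes...")
print(store.get("photos/2013/album-42/cover.jpg"))
```

The slashes in the object name are just characters; there is no directory tree to traverse, which is part of what lets a single namespace grow to billions of objects.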

Cleversafe also addresses a well-known big data reliability and scalability problem by pairing Hadoop MapReduce with its Dispersed Storage Network (dsNET) system on the same platform. This replaces the Hadoop Distributed File System (HDFS), which relies on three copies to protect data, with Information Dispersal Algorithms. In addition, according to Cleversafe CEO and President Chris Gladwin, “Current HDFS deployments utilize a single server for all metadata operations and the failure of a single node could render data inaccessible or permanently lost.”

Kennedy also referenced a Shutterfly case study where Cleversafe technology is helping to manage over 70 petabytes of information – so far. “Our solution is architected to handle Exabyte-scale object stores securing data in motion or at rest, and we can drive up to 90% of the cost out of storage needed in a traditional solution all while data and objects are always online, available and utilizing existing storage assets.”

Advantages of Object-Based Storage

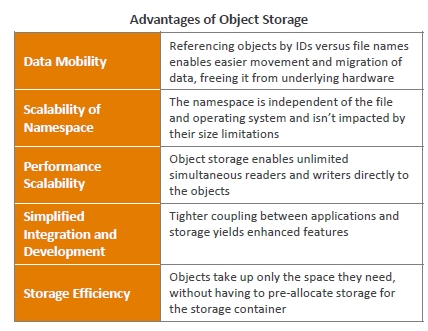

Object storage as defined by Cleversafe would appear to have several advantages over traditional file-based approaches including:

Object-based Storage Implications

Assuming the Cleversafe approach, as well as similar approaches for deploying object-based storage from OpenStack Swift, Scality, and Caringo among others, becomes pervasive, the implications would potentially include:

- Reduction or elimination of RAID, Replication, Backup for Petabyte-scale data stores

- Significant reduction in cost of traditional storage and storage admin costs

- Increased pressure on large enterprises to move to software-led hyperscale solutions

- Increased pressure on IT to cut costs and improve efficiencies or move to cloud

- Paradigm shift for hyperscale hardware providers to simplify offerings, go “barebones” obviating first generation value-added, purpose built hardware solutions

- Move to open compute architectures including Facebook OCP

- Increased service provider focus on industry specific, cloud-based offerings. Examples: FinQloud and Bloomberg Vault

- Managing and serving up metadata in the cloud for specific industries becomes an even more pervasive business model

Conclusion

Software-led hyperscale computing has the potential to revolutionize multi-petabyte-scale storage architectures for applications with billions of objects and may very well impact how servers, memory and storage are delivered at scale for cloud service providers and large enterprise data centers in the near term.

On the other hand, it would appear the impact on and implications for many traditional enterprise-class tier 1 apps, including high-performance trading systems, relational database solutions, and other solutions reliant on sub-petabyte-scale storage, will be minimal for the foreseeable future. That is, until SSD flash memory and storage become so inexpensive and pervasive that an enterprise can cost-effectively deploy multiple petabytes.

Action item: Vendors need to determine the verticals in which they will compete and build an ecosystem within those verticals. Applications and data access patterns will determine what to do with the metadata. Many of the target customers may be in the business of marking up data. Industries such as Finance, Healthcare, Insurance, and Pharmaceuticals are already well down the path of marking up hyperscale data repositories. Storage vendors need to understand application requirements well enough to help the customer differentiate beyond what can be done using a more generic storage solution.

Rack Level Architectures and Hyperscale Operations

Last week at the Open Compute Summit Winter 2013 event, Facebook shared insight into its IT infrastructure. While hyperscale companies like Google, Amazon, and Facebook are quite different from the typical enterprise, it is Wikibon’s belief that those companies are driving key changes to how infrastructure is consumed that will filter down to the service provider and enterprise worlds in the near future.

Standardization of Building Blocks

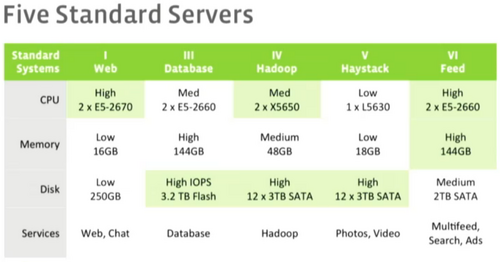

Jason Taylor, Facebook’s Director of Capacity Engineering Analysis, shared the design practices for Facebook’s infrastructure. Like service providers and other large Web companies, Facebook uses homogeneous building blocks wherever possible rather than specialized silos that are custom built for applications. As shown in Figure 1, Facebook has five server designs that it builds in rack architectures (each rack holding 20-40 servers). Everything is done at massive scale; in the first nine months of 2012, Facebook spent $1B on capital expenditures including servers, networking, storage, and construction of data centers.

The “vanity free servers” (not a name brand or even a whitebox solution) are designed bare-bones to meet the compute, RAM, disk, flash and connectivity needs of the design without any other features that are normally built into a platform. Jason Taylor listed the following benefits of server standardization:

- Volume pricing,

- Repurposing (gives the ability to not just scale up, but also remove to scale down),

- Easier operations (simpler repairs, drivers and DC headcount),

- According to Delfina Eberly, Facebook’s Director of US DC Operations, it can now service over 20,000 servers per technician!

- Servers allocated in hours rather than months.

The drawbacks are that not every application fits perfectly into the available options, and as solutions and technology change over time, Facebook needs to make adjustments or risk more waste.

On the storage side, Facebook considers flash to be critical (the company is Fusion-io’s largest customer), while disk is still useful as an inexpensive price point for large capacity. See David Floyer’s write-up of how the new Fusion-io ioScale fits into the hyperscale market.

Disaggregated Rack rather than Virtualization

Facebook’s proposed solution to overcome the drawbacks of the current architecture is to use the Open Compute Project to develop a Disaggregated Rack, which allows independent management and replacement of the individual components of the server. The project (see the press release) includes participation from Intel and the ODM supplier Quanta Computer (which is popping up in lots of places). Taylor characterized Disaggregated Rack as the “opposite of virtualization.” Virtualization is good for workloads with heavy idle time (an easy efficiency win for most enterprises), for unpredictable workloads, and for outsourced environments. Disaggregated rack, by contrast, pushes servers as hard as they can go, with scaling and trimming then done for efficiency. It allows custom configurations (fixing the issue of having only five server designs) and speeds tech refreshes and innovation. The issues are physical changes and interface overhead.

Hyperscale operations

The typical enterprise spends more than 70% of budget on operations; way too much to just keep the lights on and running. At hyperscale deployments, staffing ratios must change. Budgets will not be able to support the staffing requirements for managing thousands of devices or having to deal with intricacies such as data management with traditional storage arrays.

In understanding the current staffing requirements, organizations need to look at the people involved in procuring, installing, provisioning, and maintaining storage. In addition, organizations need to understand staffing requirements for performance management, data migration, data backup, data recovery, disaster protection, security, and compliance. New architectures will enable a single individual to manage petabytes of storage, but in order to take advantage of this step-wise improvement, budgets may have to be consolidated. Just as Facebook is managing orders of magnitude more servers than an enterprise environment due to automation, toolsets and simplicity, Shutterfly manages about 70PB of object storage with 3 administrators.

Action item: Disaggregated rack is an interesting idea, but it remains to be seen if the industry will create a solution that targets a small number of large users. Service providers and enterprise users can learn from the example of managing infrastructure at the rack level. The elimination of custom-built architecture and breaking down of silos is something that all CIOs should be looking at. The old ways of doing things are too complex and have too much overhead. Two paths to simplification are moving to a service provider that can provide IT-as-a-service and adopting converged infrastructure.

Eliminating Backup and Replication in Hyper-Scale Storage

We back up data to protect against storage system failures and data corruption. We replicate data to protect against site-wide disasters and to enable high availability. But backup and replication have a significant impact on capacity planning, procurement, facilities management, electrical and cooling costs, networking, and staffing. What if we could eliminate the need for backup? What if we could eliminate the need for replication? That is precisely the promise of erasure coding and data dispersal found in the Cleversafe solution.

In the January 22, 2013 Peer Incite, Cleversafe VP Russ Kennedy discussed the breaking point of traditional approaches to protecting large object stores. The breaking point, he said, will differ by organization and will depend on budgets, facilities, available networks, and staffing. But one thing is clear; most organizations are on a collision course with the breaking point, as they build ever-larger repositories of digital images, video objects, and files.

Update-intensive applications such as active transactional databases are not the target for this new approach to data storage. Those will continue to be housed in traditional storage systems using well-known and well-tested backup and replication techniques. But backing up and replicating the petabytes of data found in some of today’s largest object stores is, for most, neither affordable nor possible.

Action item: Organizations with large repositories of digital images, videos, and files should pay close attention to the growth rate and remain mindful of the impending breaking point. The investment in erasure coding and data dispersal solutions will probably be more than offset by returns from reduced hardware, facility, electrical, cooling, networking, and staffing costs. The time to evaluate is now.