Hyperscale and Big Data are two trends that necessitate new methods of protecting data. Specifically, traditional approaches to data backup are inappropriate for large, scale-out infrastructures such as those being popularized by Internet giants (e.g., Facebook), cloud service providers, and many government agencies. Simplified storage approaches based on object stores, combined with erasure coding as a means of protecting large quantities of data, will dramatically lower storage costs. Moreover, flash will play an increasingly important role in this new storage paradigm, housing metadata and enabling "in-time" (i.e., near real-time) analytics on large data repositories.

As Data Grows, Recovery Becomes Impossible

Data today is backed up by taking copies. The number of copies, whether snapshots or physical copies, is growing out of control and increasing complexity. But as data volumes grow, the real problem becomes one of recovery.

The key questions for hyperscale storage are:

- How are petabytes or exabytes of data backed up?

- How are petabytes or exabytes of data restored?

- How are petabytes or exabytes of data accessed?

Even the simplest analysis of the elapsed time and the telecommunication or media-transport costs shows that traditional methods of backup are prohibitive and will become unsustainable over time. As costs decline and capacities rise due to Moore’s Law, elapsed time only becomes more problematic, because access times and transfer rates from magnetic media barely improve.
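The elapsed-time argument can be checked with simple arithmetic. The sketch below assumes a decimal petabyte and a few sustained transfer rates chosen purely for illustration; they are not measurements from any particular environment.

```python
def transfer_days(data_bytes: float, throughput_bytes_per_s: float) -> float:
    """Elapsed days needed to move data_bytes at a sustained throughput."""
    seconds = data_bytes / throughput_bytes_per_s
    return seconds / 86_400  # seconds per day


PB = 10**15  # one petabyte (decimal)

# Hypothetical sustained rates, for illustration only.
rates = {
    "1 Gbit/s network link": 1e9 / 8,
    "10 Gbit/s network link": 10e9 / 8,
    "single drive streaming at 150 MB/s": 150e6,
}

for name, rate in rates.items():
    print(f"{name}: {transfer_days(PB, rate):.1f} days per petabyte")
```

Under these assumptions a single petabyte takes roughly 93 days over a fully utilized 1 Gbit/s link and about 9 days at 10 Gbit/s, before any restore-side processing. Capacity growth multiplies the numerator while the per-device transfer rate in the denominator barely moves.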

The bottom line is that as data volumes grow, it takes too long to back up and recover data, increasing costs and the probability of data loss.

How to Protect a Petabyte

As one Wikibon practitioner phrased the problem: "How do you backup a petabyte? You don't."

Currently, the only technology available to address these issues is erasure coding, which uses compute cycles to split and transform the data into n slices, with the ability to recover the data from only m of those slices (where n > m). The slices can be distributed locally (e.g., within a data center) or geographically, and the most advanced implementations include enough redundant slices (n - m) that the data can be recovered either locally or remotely.
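The n-slice, m-to-recover idea can be illustrated with a toy code built on polynomial interpolation over the prime field GF(257). This is a minimal sketch, not the scheme used by any particular product: the function names `encode` and `decode` are hypothetical, slices are held as lists of integers because a parity symbol can take the value 256, and production systems typically use optimized Reed-Solomon variants instead.

```python
P = 257  # smallest prime above 255, so every byte value is a field element


def lagrange_eval(points, x):
    """Evaluate at x the unique polynomial of degree < len(points) through points (mod P)."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        # pow(den, -1, P) is the modular inverse (Python 3.8+)
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def encode(data: bytes, n: int, m: int):
    """Split data into n slices such that any m of them can rebuild it."""
    assert 0 < m < n < P
    padded = data + bytes(-len(data) % m)  # zero-pad to a multiple of m
    slices = [[] for _ in range(n)]
    for k in range(0, len(padded), m):
        # The m data bytes are the polynomial's values at x = 1..m.
        pts = [(x + 1, padded[k + x]) for x in range(m)]
        for s in range(n):
            slices[s].append(lagrange_eval(pts, s + 1))
    return slices


def decode(available: dict, m: int, length: int) -> bytes:
    """Rebuild the data from any m slices; available maps slice index -> symbols."""
    chosen = sorted(available.items())[:m]
    assert len(chosen) == m, "need at least m slices"
    out = bytearray()
    for col in range(len(chosen[0][1])):
        pts = [(idx + 1, symbols[col]) for idx, symbols in chosen]
        out.extend(lagrange_eval(pts, x + 1) for x in range(m))
    return bytes(out[:length])


data = b"hyperscale object"
n, m = 6, 4
slices = encode(data, n, m)
survivors = {i: slices[i] for i in (1, 3, 4, 5)}  # slices 0 and 2 are lost
assert decode(survivors, m, len(data)) == data
```

With n = 6 and m = 4, any two slices can be lost without losing data, and the raw capacity consumed is n/m = 1.5 times the original size.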

Traditional methods of reading hyperscale volumes of data from disk to find and analyze patterns are no longer viable. The most important challenge is how to create metadata for each object that describes where it is, what it is, when it was stored, and how it is related to other objects. This metadata must be accessible at very high speed, so it has to be held centrally (mainly in non-volatile memory). The huge benefit of getting this right is that the object store can be used for multiple purposes: a single store can contain data warehouses, backups, and multiple archives.
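One way to picture such per-object metadata is a small in-memory index keyed by object ID. The sketch below is only illustrative: the class names, field names, object IDs, locations, and dates are all hypothetical, and a real system would hold the index in protected non-volatile memory instead of a Python dictionary.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class ObjectMetadata:
    object_id: str
    slice_locations: list[str]   # where it is
    content_type: str            # what it is
    stored_at: datetime          # when it was stored
    related_ids: set[str] = field(default_factory=set)  # how it relates to other objects


class MetadataIndex:
    """Central in-memory index over object metadata (illustrative only)."""

    def __init__(self) -> None:
        self._by_id: dict[str, ObjectMetadata] = {}
        self._by_type: dict[str, set[str]] = {}

    def put(self, meta: ObjectMetadata) -> None:
        old = self._by_id.get(meta.object_id)
        if old is not None:  # drop the stale type entry on overwrite
            self._by_type[old.content_type].discard(old.object_id)
        self._by_id[meta.object_id] = meta
        self._by_type.setdefault(meta.content_type, set()).add(meta.object_id)

    def locate(self, object_id: str) -> list[str]:
        return self._by_id[object_id].slice_locations

    def find(self, content_type: str, since: datetime) -> list[str]:
        ids = self._by_type.get(content_type, set())
        return sorted(i for i in ids if self._by_id[i].stored_at >= since)

    def related(self, object_id: str) -> list[str]:
        return sorted(r for r in self._by_id[object_id].related_ids if r in self._by_id)


index = MetadataIndex()
index.put(ObjectMetadata("backup-001", ["site-a/node-3", "site-b/node-7"],
                         "backup", datetime(2013, 1, 5), {"warehouse-042"}))
index.put(ObjectMetadata("warehouse-042", ["site-a/node-1"],
                         "warehouse", datetime(2013, 2, 1)))

print(index.find("backup", since=datetime(2013, 1, 1)))  # ['backup-001']
print(index.related("backup-001"))                       # ['warehouse-042']
```

Because queries such as `find` and `related` touch only the index, patterns can be located without reading the underlying objects from disk, which is what lets one store serve as warehouse, backup, and archive at once.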

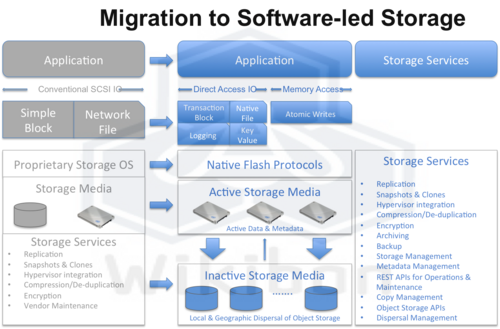

The combined technologies of erasure coding, object storage, and high-performance, flash-resident metadata are the only technologies available (at the moment) that will address the three challenges above. These will be integrated as services within a software-led storage architecture, as shown in Figure 1. The potential benefit is to reduce the amount of data stored by a factor of five to ten, dramatically improving storage efficiencies while at the same time delivering substantially more business value.
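The storage-efficiency argument rests on how much raw capacity each protection scheme consumes. The sketch below compares an erasure-coded layout with full copies under stated assumptions: the 18-slice, three-site layout and the three-copy baseline are hypothetical, and the calculation does not attempt to reproduce the five-to-ten figure, which depends on how many backup, snapshot, and archive copies a given environment keeps.

```python
def raw_capacity(logical_bytes: float, n: int, m: int) -> float:
    """Raw bytes consumed when data is cut into n slices, m of which rebuild it."""
    return logical_bytes * n / m


def survives_site_loss(slices_per_site: list[int], m: int) -> bool:
    """True if losing any single site still leaves at least m slices."""
    total = sum(slices_per_site)
    return all(total - lost >= m for lost in slices_per_site)


PB = 10**15

# Hypothetical layout: 18 slices spread evenly over 3 sites, any 10 rebuild the data.
layout = [6, 6, 6]
n, m = sum(layout), 10

print(raw_capacity(PB, n, m) / PB)      # erasure coded: 1.8x raw capacity
print(survives_site_loss(layout, m))    # True, since 12 surviving slices >= 10
print(raw_capacity(PB, 3, 1) / PB)      # three full copies (n=3, m=1): 3.0x
```

In this illustration the erasure-coded layout tolerates the loss of an entire site while consuming less raw capacity than three full copies, and each additional backup or archive copy it replaces widens the gap.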

Action Item: Hyperscale storage must use erasure coding techniques to achieve the following:

- Avoid backing up petabytes/exabytes of data. Redundancy should be built into how the data is stored locally and remotely.

- Avoid restoring petabytes/exabytes of data. Instead, restores should be completely integrated into the storage system so that no change of access method is required.

- Use an object storage foundation for storing and accessing petabytes/exabytes of data.

This software-led storage model will allow storage services to deliver, at the lowest cost, self-protecting and self-restoring data, together with high-performance metadata that is itself self-protecting and self-restoring and that enables multiple uses of historic data.
