Storage Peer Incite: Notes from Wikibon’s November 13, 2012 Research Meeting

Our November 13 Peer Incite Research Meeting was something of a departure from the norm in that instead of discussing how he solved a problem, the guest presenter, Paul Martin, Information Technology Manager from Vermont-based SMB Poulin Grain, was looking for ideas from the community about how best to solve his problem. What he wants is an inexpensive, reliable, and expandable data archiving solution to replace his existing ExaGrid EX-series system, which is more than 85% utilized. He wants a public Cloud solution, in part, so he doesn't have to revisit this issue every three years. As a one-person IT department, Martin has more important issues to focus on.

Specifically, he wants a data archiving service. He is looking at Amazon Glacier, which certainly has the right price, and was not dismayed when Wikibon's analysts warned him that data restoration would take days or possibly weeks. He dislikes tape and, having eliminated it from the system, is unwilling to take what he sees as a step back to it.

One thing that surprised me was that he is looking for archiving only and plans to maintain primary backup on site. Like many SMBs, Poulin Grain, a bulk feed supplier for livestock and wild birds, does not require near real-time recovery. Moving primary backup to the Cloud might also serve archiving, at little or no net change in cost.

In any case, the big problem Martin faces is one common to SMBs seeking similar services. Dropbox-type services are optimized for consumers with comparatively small data needs, while the Amazon-class services are designed for large enterprises. Services that fit the SMB range in the middle are scarce on the ground.

Unfortunately, the Wikibon community could not immediately offer any specific recommendations of a service that might fit Poulin Grain's needs. The discussion did, however, clarify the issues and give Martin a better idea of what he should ask both ExaGrid and Amazon, and what expenses he should expect above Glacier's low per-GB storage fee. So the discussion was valuable in defining the problem. The pieces below expand on that discussion. David Floyer's piece in particular examines the important issues and looks at several specific services.

Bert Latamore, Editor

Seeking Enterprise Data Protection on an SMB Budget

Wikibon Peer Incite Research Meeting

On November 13, 2012, Paul Martin, Information Technology Manager at Poulin Grain, joined the Wikibon Peer Incite Research Meeting to share experiences and discuss alternatives for backup, data protection, and archive as he considers a move to cloud-based solutions.

About Poulin Grain

Poulin Grain is a fourth-generation, family-owned and operated business that specializes in “high quality dairy, equine, pet and livestock feeds.” Founded in 1932, it is headquartered in Vermont with offices in New York.

Poulin Grain is typical of many small businesses, where IT operations are under the direction of a single individual, who is responsible for servers, storage, networking, security, application development, deployment and support of packaged applications, desktop and end-point device management, data protection and disaster recovery. With so many responsibilities and a limited budget, Paul is exploring migration from his current disaster recovery solution to a cloud service. He hopes this will eliminate the need to regularly revisit disaster recovery and data protection and let him focus his limited budget and limited time on areas that support business growth.

Current Backup and Data Protection Approach

Paul has fully virtualized Poulin Grain’s business-critical and non-business-critical applications using VMware. Using Veeam Backup and Replication, he regularly backs up business-critical applications and data, which reside on his HP and Coraid primary storage. The Veeam software writes data to an ExaGrid EX-series storage appliance that stores and deduplicates the data, then replicates it to another, smaller ExaGrid appliance at a second, virtualized Poulin Grain office, which is used as a disaster recovery location for business-critical applications.

Backup and Disaster Recovery Challenges

The current environment supports only six business-critical applications, and non-business-critical applications are not routinely backed up. Previous outages have left applications unavailable for more than 24 hours. The company still maintains a backup paper trail of critical transactions, so operations can continue and transactions can be re-entered in the event of data loss.

While Poulin Grain can continue operations during short-term outages and can recover and re-enter data in the event of data loss, extended outages would be more problematic. In addition, since non-business-critical applications are not backed up, rebuilding those applications would cause a significant disruption and some applications and scripts would not be recovered.

Data growth within the business-critical applications requires that Paul revisit and upgrade his disaster recovery solution every few years, and his current ExaGrid appliance in his primary data center is almost out of capacity. On his limited budget and with limited time, Paul would like to implement a backup and disaster recovery solution that will enable him to operate within his budget, accommodate growth, and not have to be revisited every two to three years. He would also like to retain his Veeam backup solution and, if possible, leverage his prior investments in ExaGrid by consolidating them into his primary office and replicating this backup data. With that as context and constraints, Paul began exploring cloud-based backup alternatives.

Cloud-Based Backup and Archive Options

The community discussed a variety of cloud-based storage providers, including Amazon’s recently announced Glacier service, which is priced as low as $0.01/GB/month, making it, at first glance, very affordable for Poulin Grain. Other options under consideration for cloud storage include HP Cloud Object Storage, Rackspace Cloud Backup, and AT&T Synaptic Storage.

Accessing low-cost, cloud-based storage offerings requires a physical or virtual cloud gateway appliance or a cloud-ready backup software alternative that holds the backup and archive data at the primary site, integrates with the cloud storage APIs, and replicates the data to the cloud. In order to most cost-effectively transmit data to the cloud and reduce the in-cloud cost/GB, it is best to deduplicate and compress data before transmitting. In addition, data in transit between the organization and the cloud should be encrypted.
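To make the ordering concrete, here is a minimal Python sketch using only the standard library. It assumes fixed-size chunking (the 4 KiB `CHUNK_SIZE` is a hypothetical choice; commercial gateways and backup software often use variable-size chunking) and uses `zlib` for compression. Python's standard library has no cipher, so encryption itself is not shown; instead, random bytes from `os.urandom` stand in for ciphertext to illustrate why data should be reduced before it is encrypted.

```python
import hashlib
import os
import zlib

CHUNK_SIZE = 4096  # hypothetical fixed chunk size in bytes


def dedupe(data: bytes, chunk_size: int = CHUNK_SIZE) -> tuple[dict[str, bytes], list[str]]:
    """Keep one copy of each unique chunk, keyed by its SHA-256 digest.

    Returns the chunk store plus the ordered list of digests (the "recipe")
    needed to rebuild the original stream.
    """
    store: dict[str, bytes] = {}
    recipe: list[str] = []
    for start in range(0, len(data), chunk_size):
        chunk = data[start:start + chunk_size]
        digest = hashlib.sha256(chunk).hexdigest()
        store.setdefault(digest, chunk)  # duplicate chunks are stored only once
        recipe.append(digest)
    return store, recipe


def rebuild(store: dict[str, bytes], recipe: list[str]) -> bytes:
    """Reassemble the original stream from the unique chunks and the recipe."""
    return b"".join(store[digest] for digest in recipe)


def bytes_to_send(store: dict[str, bytes]) -> int:
    """Compressed size of the unique chunks: roughly what crosses the WAN (recipe ignored)."""
    return sum(len(zlib.compress(chunk)) for chunk in store.values())


if __name__ == "__main__":
    # A highly repetitive 1 MiB backup stream: one 4 KiB block repeated 256 times.
    backup = (b"customer-order-record;" * 200)[:CHUNK_SIZE] * 256
    store, recipe = dedupe(backup)
    assert rebuild(store, recipe) == backup
    print("reduce first:", len(backup), "bytes ->", bytes_to_send(store), "bytes to send")

    # Stand-in for ciphertext: well-encrypted data looks random, so every chunk
    # is unique and nothing compresses. Encrypting first defeats data reduction.
    pseudo_ciphertext = os.urandom(len(backup))
    enc_store, _ = dedupe(pseudo_ciphertext)
    print("encrypt first:", len(backup), "bytes ->", bytes_to_send(enc_store), "bytes to send")
```

In the demo, the repetitive stream collapses to a single stored chunk that compresses to a small fraction of its size, while the random stand-in yields 256 unique chunks and no compression gain.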

The community discussed a variety of cloud gateway appliances and software solutions. These will be discussed in separate documents.

The Cost of the Cloud is Cloudy

With prices as low as $0.01/GB/month, the cost of cloud storage appears attractive. Certainly, the ability to continue to scale without frequently revisiting and upgrading physical hardware is also attractive, freeing up valuable time to work on applications and systems that support revenue generation. But to compare cloud storage accurately with current backup and disaster recovery approaches, organizations need to determine the effective cost of cloud storage, which depends on many factors. Depending upon the offering and the approach, there may be additional costs for:

- Storing data that hasn’t yet been transmitted to the cloud;

- Deduplicating, compressing, and encrypting data before transmitting to the cloud;

- Replacing archive appliances or backup software to enable interface with cloud-storage APIs;

- Uploading data to the cloud;

- Retrieving data from the cloud.
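One way to turn the list above into a single number is a simple effective-cost model. The sketch below is a hypothetical Python illustration, not any provider's price list: apart from the $0.01/GB/month headline storage rate cited above, every rate (`upload_per_gb`, `retrieval_per_gb`, `local_cache_month`, `gateway_month`) and every example volume is a placeholder to be replaced with quoted prices.

```python
from dataclasses import dataclass


@dataclass
class CloudCostInputs:
    """Monthly cost drivers for cloud backup. All rates are placeholders."""
    stored_gb: float              # data held in the cloud tier
    storage_per_gb_month: float   # headline storage rate, e.g. 0.01
    upload_gb_month: float        # reduced (deduplicated/compressed) data sent per month
    upload_per_gb: float          # transfer-in fee, if the provider charges one
    retrieval_gb_year: float      # expected restores per year (DR tests plus incidents)
    retrieval_per_gb: float       # retrieval and transfer-out fee
    local_cache_month: float      # on-site storage for data not yet uploaded
    gateway_month: float          # gateway appliance or cloud-ready backup software, amortized


def effective_monthly_cost(c: CloudCostInputs) -> float:
    """Total monthly cost; annual retrieval spend is spread evenly over 12 months."""
    storage = c.stored_gb * c.storage_per_gb_month
    upload = c.upload_gb_month * c.upload_per_gb
    retrieval = c.retrieval_gb_year * c.retrieval_per_gb / 12
    return storage + upload + retrieval + c.local_cache_month + c.gateway_month


def effective_rate_per_gb(c: CloudCostInputs) -> float:
    """Effective $/GB/month, directly comparable with the headline storage rate."""
    return effective_monthly_cost(c) / c.stored_gb


if __name__ == "__main__":
    example = CloudCostInputs(
        stored_gb=3000, storage_per_gb_month=0.01,
        upload_gb_month=100, upload_per_gb=0.0,
        retrieval_gb_year=600, retrieval_per_gb=0.12,
        local_cache_month=20.0, gateway_month=50.0,
    )
    print(f"${effective_monthly_cost(example):.2f}/month, "
          f"${effective_rate_per_gb(example):.4f}/GB/month")
```

With these placeholder inputs for 3,000 GB, the monthly bill is about $106, or roughly $0.035/GB/month, more than three times the headline storage rate.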

Organizations will also want to consider these important factors:

- Data not yet transmitted to the cloud is data at risk of a site disaster.

- The larger the network pipe, the faster the data will be transmitted to the cloud.

- Redundant networks with alternate routing will help mitigate the effects of network outages, but will increase cost.

- The strategy for data protection should be driven by the business unit’s application recovery time objectives (RTO) and recovery point objectives (RPO).
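The network factors above lend themselves to back-of-the-envelope arithmetic. The Python sketch below assumes decimal units (1 GB = 8,000 megabits), a hypothetical 80% effective link utilization, and that the worst-case data at risk is one backup interval plus the time to upload that interval's changes. All example figures (30 GB changed per daily backup, a 20 Mbps link, a 48-hour RPO, a 72-hour RTO) are hypothetical.

```python
def transfer_hours(gigabytes: float, link_mbps: float, efficiency: float = 0.8) -> float:
    """Hours to move `gigabytes` over a `link_mbps` link.

    Uses decimal units (1 GB = 8,000 megabits); `efficiency` is an assumed
    fraction of the nominal link rate actually achieved.
    """
    megabits = gigabytes * 8_000
    return megabits / (link_mbps * efficiency) / 3_600


def check_objectives(changed_gb_per_backup: float, full_restore_gb: float, link_mbps: float,
                     backup_interval_hours: float, rpo_hours: float, rto_hours: float) -> dict:
    """Compare rough upload and full-restore times with the RPO and RTO."""
    upload = transfer_hours(changed_gb_per_backup, link_mbps)
    restore = transfer_hours(full_restore_gb, link_mbps)
    # Worst case: data written just after a backup waits a full interval,
    # then must still be uploaded before it is safe from a site disaster.
    exposure = backup_interval_hours + upload
    return {
        "upload_hours": upload,
        "restore_hours": restore,
        "meets_rpo": exposure <= rpo_hours,
        "meets_rto": restore <= rto_hours,
    }


if __name__ == "__main__":
    result = check_objectives(changed_gb_per_backup=30, full_restore_gb=3000, link_mbps=20,
                              backup_interval_hours=24, rpo_hours=48, rto_hours=72)
    print(result)
```

With these inputs, each daily upload fits comfortably inside the RPO, but a full 3,000 GB restore over the same link would take about 417 hours (roughly 17 days), far beyond a 72-hour RTO. That is the gap a larger pipe, or shipping physical media, has to close.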

Action Item: Before considering any cloud backup alternative, organizations should develop a comprehensive list of factors affecting the ability to meet the RTO, RPO, and budget limitations of the organization.

CIOs: The Pathway To Cloud Backup Remains Cloudy

Introduction

During the November 13, 2012, Wikibon Peer Incite Research Meeting, Paul Martin, Information Technology Manager at Poulin Grain, discussed his recent experiences investigating cloud gateways and cloud storage offerings. This Peer Incite was entitled Achieving Enterprise-Class Data Protection on a Small-Business Budget. While the call did not result in an ultimate solution for Mr. Martin, it did raise a number of important issues.

SMB/Small Midmarket challenges

For me, this Peer Incite was particularly compelling because our guest is the IT Manager in a small IT shop. In fact, he’s the lone IT pro supporting the entire business. As a former CIO for SMB/midmarket organizations, I certainly understand just how difficult it can be to manage IT in such environments. After all, SMB and midmarket companies face challenges similar to those of their larger enterprise brethren but have to meet them with fewer people and fewer resources.

The backup quandary

In our call this week, Martin discussed his need for an improved backup solution and his adventure in considering cloud-based alternatives or additions to his existing on-premises and DR-based backup solution.

The adventures described have been both informative and frustrating. Along the way, Mr. Martin stumbled across companies that weren’t interested in his business due to the small volume and others that were eminently helpful and, even though they recognized that they may not be the best solution, offered to at least educate him on some of the points of consideration.

Break the cycle

Martin isn’t looking for anything unreasonable. He simply wants a solution that is affordable (cost is a primary driver), that can handle the backup volume, and that doesn’t require constant attention and upgrades. He wants a complete solution that can scale to meet new needs without having to continually re-address backup and recovery every 2 to 3 years. Personally, I think that the goal for IT should be to find ways to break out of the constant upgrade cycle for as many services as possible. Or, at the very least, find ways to reduce the sheer labor that is attached to constant upgrade cycles.

Use cloud for scale

Obviously, cloud-based backup seems to make sense in a case like this. Rather than constantly having to worry about on-premises storage for backup, the customer can simply scale the cloud service to meet growing needs. No rip and replace required; simply turn a dial and add space. Of course, this is a simplistic look at the problem, but the point is this: It is possible to deploy something once and find ways to scale it without having to replace it, and the cloud is a great way to make that happen.

Challenges remain

That said, the challenges in Mr. Martin’s endeavor have not been insignificant:

- Cloud providers carry with them relatively complex pricing models that can make it extremely difficult to predict the ultimate cost of the solution.

- Some providers had the expectation that Mr. Martin would have a fully formed solution ready to present to them, when, in fact, he was hoping to get more information.

- While enterprises are fairly well covered, the SMB and small midmarket CIO faces a dearth of clear, concise information about how some emerging services can be of benefit. There is no clear roadmap for forging ahead. In most cases, some kind of device or virtual appliance needs to be implemented to act as the cache point for cloud-based backup services. These devices understand the APIs of the various cloud providers.

- While some services seem very good on the surface, upon deeper inspection they may not meet the needs of the organization. For example, Mr. Martin has been considering the use of Amazon’s Glacier service for backup data storage, but that service may not meet his RTO because of its slow retrieval speed. That said, the cost of the service is just right for his purposes.

Action item: I will admit that this has been my favorite Peer Incite call to date. I can absolutely identify with Mr. Martin’s dilemma and see needs for action items on a number of different fronts:

- Cloud service providers: More assistance needs to be provided to help smaller organizations make the jump to cloud-based services. SMBs and small midmarket organizations barely have the resources to maintain their existing environments, let alone spend time exploring new options. Pre-packaged, affordable, sustainable products would be more than welcome in this space. Unfortunately, many SMBs and smaller midmarket organizations are extraordinarily price-sensitive, and many providers don’t feel that they’re necessarily worth the hassle to support, so their needs go unmet. Small SMBs have a ton of options in the cloud backup space, and I suspect that it’s easier for enterprises to on-board robust solutions, but the larger SMB and smaller midmarket spaces are in need.

- Hardware vendors/data brokers: You guys are the middlemen. You place devices in data centers and act as the broker between client and cloud provider. You, too, have a story to tell, and you need to tell it to SMB and small midmarket CIOs.

- CIOs: You need to start wrapping your heads around how to get out of the constant replacement cycle, particularly for services that you’re replacing for no other reason than you’ve outgrown your existing service. Find a way to scale what you have for longer periods of time, so that you’re not constantly having to find new solutions to solve the same technical problems you solved three years ago. Further, you need to bear in mind the same kinds of metrics that would be considered for on-premises backup solutions. RTO and RPO are critically important metrics, regardless of the location of the backup. As you engage with vendors, make sure you have clear answers to these questions so that you can be successful in your recovery efforts.

Technology Challenges of Cloud for Disaster Recovery

Challenges of Cloud for Disaster Recovery

Disaster recovery seems an obvious first use case for cloud services. The relatively low level of adoption signals that there are significant technology issues. Using the cloud for disaster recovery requires close integration of a number of technologies. The technology integration challenges include:

- The on-ramp to the cloud must include technology for seeding the initial copy of the disaster recovery data in the cloud, and for replacing it if necessary. Over a network, seeding can take a very long time (days or months) and consumes bandwidth that is needed only a few times a year. Other methods of seeding include taking a magnetic or solid-state copy of the data and shipping it to the cloud data center (hybrid approaches).

- Incremental backups of changed data are the normal method of backup. The incremental data has to be applied to the original seed data either on a continuous basis in the cloud (which increases cost), or at recovery time (which can result in significant delays, especially if the data is held on low-cost slow media).

- Technologies such as deduplication and compression can significantly reduce the amount of data transferred and the transfer time, especially for seed data. Data reduction is significantly less effective for incremental data, but this matters less if the amount of change is small.

- Encryption needs to be applied after data reduction techniques have been applied, because encrypted data looks random and can no longer be effectively deduplicated or compressed. However,

- The off-ramp from the cloud to the disaster recovery site has the same technical problems as the on-ramp, but with greater urgency. The ability to move a physical copy (preferably solid-state, as time to recover from the media when it has arrived is also very important) is a business necessity in most cases.

- The urgency of the off-ramp problem can be reduced if the cloud data can be used to run the disaster site in the cloud. There is still an issue of how the data is moved back to the production site, but there is time to address that issue.

- Solutions should, where possible and practical, identify the critical data that should be recovered first. This is not an easy exercise, as many systems are interrelated as part of business processes.

- There is often a regulatory necessity to test disaster recovery procedures at least once a year. It is also a sound risk-reduction strategy to test procedures at least once a year.

- The backup software needs to be optimized for a remote cloud environment, with capabilities that ensure integrity of the data in the cloud.

- Having all business data in the cloud may increase the risk of opposing counsel performing legal discovery directly on the data.
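Identifying what to recover first is, in large part, a dependency-ordering problem. As a hedged Python sketch, the standard library's `graphlib.TopologicalSorter` (Python 3.9+) can group interrelated systems into recovery waves, where each wave depends only on systems restored in earlier waves. The system names and dependencies in `DEPENDS_ON` are hypothetical; a real plan would come from mapping the business processes themselves.

```python
from graphlib import TopologicalSorter

# Hypothetical systems, each mapped to the systems it needs running first.
DEPENDS_ON: dict[str, set[str]] = {
    "directory": set(),
    "file_server": {"directory"},
    "database": {"directory"},
    "erp": {"database"},
    "order_entry": {"erp"},
    "reporting": {"database", "erp"},
}


def recovery_waves(depends_on: dict[str, set[str]]) -> list[list[str]]:
    """Group systems into waves; everything in a wave can be restored in
    parallel once all earlier waves are back online."""
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()  # raises graphlib.CycleError if dependencies are circular
    waves: list[list[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # alphabetical, for a stable order
        waves.append(ready)
        sorter.done(*ready)
    return waves


if __name__ == "__main__":
    for number, wave in enumerate(recovery_waves(DEPENDS_ON), start=1):
        print(f"wave {number}: {', '.join(wave)}")
```

Within a wave, systems could be ordered by business criticality rather than alphabetically. A circular dependency raises `graphlib.CycleError`, which is itself a useful finding for a DR plan.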

Examples of Disaster Recovery in the Cloud

For file-based systems, there are many services, such as EMC's Mozy, that support client systems. They work well for recovering lost files, but all the issues discussed above come into play for full disaster recovery, especially seed time and recovery time.

In the enterprise space, there are many vendors offering cloud backup solutions. Wikibon has picked two examples to illustrate the approaches.

Asigra provides an on-site backup appliance that integrates the backup software, a local copy for fast recovery, and a remote copy in the cloud for disaster recovery. The remote cloud copy is often held at a local managed service provider's site in the same geographical area. Backing up to IBM’s SmartCloud is also an option. The data in the cloud can be used only for backup and recovery purposes.

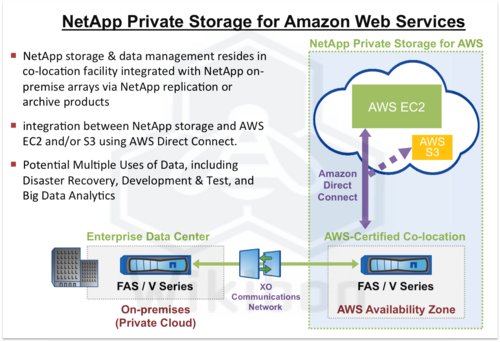

NetApp has just announced a partnership with Amazon for Private Storage on Amazon Web Services (AWS); the architecture is shown in Figure 1.

Figure 1 shows NetApp on-premises storage arrays connecting with NetApp storage and data management residing in an Amazon-qualified co-location facility. Data transfer between the two NetApp arrays is integrated using NetApp replication and/or archive products. The integration between NetApp storage in the remote location and AWS EC2 and/or S3 uses AWS Direct Connect. Potential uses of the data include disaster recovery, development and test, and big data analytics.

NetApp does not offer a complete end-to-end disaster recovery solution that includes the backup/disaster recovery software (as Asigra does), but instead leverages its data storage software tools in conjunction with its backup and DR partners. The attraction of the NetApp approach is that the data in the cloud can be used for multiple purposes.

Conclusions

The use of integrated cloud services for backup and restore is still in the early stages. The vision is of a completely integrated solution that allows rapid recovery of application services either in the cloud or at a backup location, with the data in the cloud available for other purposes such as archiving, interrogation and blending with other cloud data. Delivering this vision economically, compared with traditional solutions, remains elusive.

Action item: The technology integration aspects of implementing disaster recovery in the cloud are complex. CIOs should clearly spell out to the business the implications of alternative strategies. There is significant innovation still coming to market; waiting for the right solution is preferable to force-fitting an incomplete solution.

Hardware and Software Suppliers Need a Cloud Strategy

Wikibon Peer Incite Research Meeting

During the November 13, 2012, Wikibon Peer Incite Research Meeting, Paul Martin, Information Technology Manager at Poulin Grain, discussed his recent experiences investigating cloud gateways and cloud storage offerings. What became immediately clear to the community is that the market for both cloud storage and cloud-storage gateway appliances needs to be segmented into a collection of smaller markets, based upon customer size, features, and price.

Helping Customers Find the Right Fit

Martin is currently considering Amazon’s Glacier offering, in part due to the $0.01/GB/month pricing. He believes that he does not need all of the capabilities or expense of more complete offerings. During his initial due diligence, Martin reported, some suppliers were quite helpful, suggesting alternatives to their own offering when they recognized they were a poor fit for Poulin Grain. Another supplier, in his words, “couldn’t get me off the phone fast enough.” Suppliers will gain a great deal of credibility and community goodwill by educating their front-line sales team on how to quickly qualify good prospects and helpfully re-direct poor ones.

Articulating The Segment

A great deal of prospect confusion can be avoided by clearly articulating, in marketing communications, the segment of the market your solutions best serve:

- Good-enough, low-cost cloud for the budget sensitive,

- Commercial-class, feature-enhanced solutions for the mid-market,

- Enterprise-class, feature-rich solutions for the most demanding customers.

Communicating The Direction

Software and disk-to-disk backup appliance suppliers, especially those whose core products were implemented before the expansion of the cloud-storage market and initial customer adoption, need to clearly communicate their plans and direction for integration with cloud storage suppliers. In the case of Poulin Grain, Martin’s preferred backup software supplier, Veeam, offers integration with a number of cloud-backup providers. At the same time, Martin was unclear as to whether his backup appliance from ExaGrid could replicate directly to the cloud. Martin scheduled a meeting with ExaGrid the same week as the Peer Incite, and he hopes to learn more about ExaGrid’s cloud-integration plans at that time.

Action item: Every backup and archive appliance supplier needs to develop an integrate-with-the-cloud or access-to-the-cloud strategy. Suppliers should include among the features the ability to identify change data, deduplicate data, compress data, and encrypt data, to minimize the network requirements. In addition, suppliers should focus on the speed with which they can reintegrate recovery data sets. RTO will be a key factor in supplier success. Customers will not tolerate a solution on which the build time on the recovery data set increases as time passes.
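The warning about build time growing as time passes can be illustrated with a small model. In this hypothetical Python sketch, a backup image is a mapping of block IDs to contents, each incremental carries only changed blocks, and `BackupChain` periodically merges pending incrementals into the base image (often called a synthetic full) so that a restore never replays more than `max_chain` deltas. The class, its parameters, and the seven-delta default are assumptions for illustration; the sketch keeps only the latest image, whereas real products also retain older restore points.

```python
Block = dict[int, bytes]  # block ID -> block contents


def apply_chain(seed: Block, incrementals: list[Block]) -> Block:
    """Rebuild the latest image by replaying each incremental, oldest first."""
    image = dict(seed)
    for delta in incrementals:
        image.update(delta)  # changed blocks overwrite older versions
    return image


class BackupChain:
    """Base image plus pending incrementals, merged into a new base
    ("synthetic full") whenever the chain reaches `max_chain` deltas."""

    def __init__(self, seed: Block, max_chain: int = 7) -> None:
        self.base: Block = dict(seed)
        self.pending: list[Block] = []
        self.max_chain = max_chain

    def add_incremental(self, delta: Block) -> None:
        self.pending.append(dict(delta))
        if len(self.pending) >= self.max_chain:
            self.base = apply_chain(self.base, self.pending)
            self.pending = []

    def restore_steps(self) -> int:
        """Number of deltas that must be replayed at recovery time."""
        return len(self.pending)

    def restore(self) -> Block:
        return apply_chain(self.base, self.pending)


if __name__ == "__main__":
    seed = {block: b"v0" for block in range(10)}
    chain = BackupChain(seed, max_chain=7)
    naive: list[Block] = []
    for day in range(1, 31):
        delta = {day % 10: f"v{day}".encode()}  # one changed block per day
        naive.append(delta)
        chain.add_incremental(delta)
    assert apply_chain(seed, naive) == chain.restore()
    print("naive replay steps:", len(naive), "| consolidated:", chain.restore_steps())
```

Replaying 30 daily incrementals onto the original seed yields the same image as the consolidated chain, but the consolidated chain needs only two replay steps at restore time instead of thirty.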

When Changing DR Strategies, It's Best to Involve the Boss

The November 13 Wikibon Peer Incite Research Meeting provided a fascinating glimpse of a small company struggling with data growth. Like many firms, Poulin Grain is fed up with the perpetual cycle of running out of capacity and forced capital expenditures.

What made the call most interesting is that Poulin's IT Manager, Paul Martin, isn't simply looking to the cloud to stem data growth and address his DR challenges; he's looking to Amazon's Glacier. While he hasn't made a final decision, Glacier clearly is a top contender due to its attractive costs and simplicity. At a penny per gigabyte per month and a Get/Put object store syntax, Glacier is alluring to firms like Martin's.

Many in the Wikibon community have warned CIOs about Glacier. It is the "mother of caveat emptor." As the saying goes, backup is one thing, recovery is everything, and Glacier's strength is its cost attractiveness, not its accessibility. Martin is well aware of the risks. He's thought them through and still keeps coming back to Glacier. Simply put, Glacier is 'good enough' for what he needs.

The issue called out at the meeting is that many small and mid-sized businesses are in similar situations, where the "IT guy" is solely responsible for the company's business continuance strategy. This is a cause for concern, as the technical folks have forgotten more about disaster recovery than most business people have ever considered. The IT person in a situation like this must educate the business executives on the proposed strategy for several reasons. First, it's a CYA: you have to share the risk of the decision. Don't let it fall on your shoulders. Second, you have to educate the organization so executives are more DR/BC savvy. You must make sure that people understand the DR/BC strategy, buy into it, understand (in whatever terms necessary) RTO and RPO, and understand the tradeoffs of a particular approach.

In this particular case, for example, Poulin will be protecting 3 TB of data. If Poulin decides to go with Glacier, according to some estimates, it could take days and more than $20,000 to retrieve the data, as Amazon limits the amount of data you can retrieve per day and begins charging after that threshold. While disasters are infrequent, the probability over a long period of time of a disaster requiring retrieval of large amounts of corporate data is quite high. These risks must be communicated to senior management, and they must buy into the strategy.
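The retrieval constraint described above can be modeled in a few lines. The Python sketch below assumes a simple linear scheme: a fixed free allowance per day plus a flat fee per GB above it. Both `free_gb_per_day` and `fee_per_gb_over` are hypothetical placeholders, and Glacier's actual retrieval pricing may be structured differently, so any real estimate must use the provider's current terms.

```python
import math


def days_within_free_allowance(total_gb: float, free_gb_per_day: float) -> int:
    """Days needed to retrieve everything without exceeding the free daily allowance."""
    return math.ceil(total_gb / free_gb_per_day)


def cost_to_retrieve(total_gb: float, days: float, free_gb_per_day: float,
                     fee_per_gb_over: float) -> float:
    """Fee for retrieving `total_gb` evenly over `days` under the linear model."""
    per_day = total_gb / days
    billable_per_day = max(0.0, per_day - free_gb_per_day)
    return billable_per_day * days * fee_per_gb_over


if __name__ == "__main__":
    total = 3000        # roughly the 3 TB discussed above
    free_per_day = 5    # hypothetical daily allowance in GB
    fee = 0.10          # hypothetical $/GB above the allowance
    print("days at no extra fee:", days_within_free_allowance(total, free_per_day))
    for days in (3, 7, 30):
        print(f"{days:>3} days: ${cost_to_retrieve(total, days, free_per_day, fee):,.2f}")
```

Even under these placeholder numbers, staying within a 5 GB/day allowance would stretch a 3,000 GB restore to 600 days, so in a real disaster the business would pay to retrieve faster.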

The Wikibon community recommends five actions in a situation like this:

- Assess the costs - that's table stakes: If the cost reduction opportunities look compelling, look deeper.

- Understand the impact on depreciation: While many small businesses can easily trade CAPEX for OPEX, make sure your CFO will allow this approach.

- Communicate the hidden costs: e.g., in this case, the potential recovery costs and other factors such as the expertise needed to leverage Amazon's APIs.

- Run the probabilities: Assume in all likelihood a disaster will strike, run the scenarios with management, and force them to think through the painful situation of losing most or all of your data.

- Over-communicate, document, get buy-in and secure sign off from management.
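The "run the probabilities" step rests on simple arithmetic: if a disaster has an annual probability p and years are treated as independent, the chance of at least one disaster in n years is 1 - (1 - p)**n. The Python sketch below computes it; the 5% annual probability in the example is hypothetical and chosen only to show how quickly cumulative risk grows.

```python
def prob_at_least_one(annual_prob: float, years: int) -> float:
    """P(at least one disaster within `years`), assuming independent years
    with the same annual probability."""
    if not 0.0 <= annual_prob <= 1.0:
        raise ValueError("annual_prob must be between 0 and 1")
    return 1.0 - (1.0 - annual_prob) ** years


if __name__ == "__main__":
    p = 0.05  # hypothetical annual probability of a site-level disaster
    for years in (1, 5, 10, 20):
        print(f"{years:>2} years: {prob_at_least_one(p, years):.0%}")
```

With a 5% annual probability, the chance of at least one disaster is about 40% over ten years and about 64% over twenty.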

Action item: Disaster recovery and business continuance are not solely IT problems. IT pros must involve the business lines and senior management to ensure they understand not only the capital costs involved but the hidden costs and business impacts of critical DR and BC decisions.

SMB looks to the clouds to get rid of scalability limitations

In a small business, IT may be a one-person shop. This one person becomes application development, application support, and administration of all network, server, storage, backup, and recovery management resources. Current backup strategies often cause companies to think about backup and DR every two-to-three years due to the growth of data and limited scalability of in-house solutions. If properly implemented, a cloud-based approach could eliminate this recurring issue.

On the November 13, 2012 Peer Incite, Paul Martin, IT Manager of Poulin Grain, described starting down the path of examining cloud backup solutions as a replacement for his current, capacity-constrained ExaGrid solution. The cost of a service is expected to be significantly less than hardware upgrades, and in the cloud, scalability will not be a constraint. Several ISVs, such as Veeam and Asigra, integrate with cloud service providers for backup and recovery. As Wikibon’s Dave Vellante points out, while the upfront cost of cloud solutions is compelling, the total cost of recovery and potential risk of any downtime must be considered in the overall evaluation.

Action item: The modern data center has too many pieces for an overworked IT group not only to maintain but also to upgrade and expand. While cloud-based services are neither a panacea nor always the most cost-effective option, small businesses are encouraged to examine the many backup services that can eliminate the treadmill of backup-solution upgrades.