Storage Peer Incite: Notes from Wikibon’s June 29, 2010 Research Meeting

Storage managers face a hidden problem that many are unaware of and that threatens the business data at the heart of the enterprise: high disk failure rates in the field, combined with long data transfer times that increase the chance that a second disk will fail before a first failed disk in a RAID set can be replaced and rebuilt. And the killer is that the problem is not with the disk drives themselves. It is with the outmoded, vertical-design disk packs that provide insufficient cooling and cushioning for drives that were never designed to operate vertically, and with the complex controller software that generates many false failure reports. A large number of returned disks actually have nothing wrong with them. And the storage system vendors want it that way!

This was the message that two experts -- Richie Lary and Steve Sicola of XIOtech -- brought to the Wikibon Peer Incite meeting on June 29. They have the experience and data to back this up. They headed a design team at Seagate that worked for almost 10 years to bring disk dependability in the field up to close to what lab tests of individual disk drive longevity show it should be. The irony is that when Seagate brought out its solution, its customers, the major storage system vendors, didn't want it -- they saw it as a threat to their maintenance income. Seagate was forced to spin out XIOtech to bring this better solution to the market. The proof that the solution is better is that XIOtech is the only storage system vendor to offer a five-year warranty.

Lary and Sicola blame three major factors for the large delta between lab test results and field experience: insufficient cooling for disks inside most drive assemblies, insufficient shock absorption that subjects the disks to excessive shaking, and complex disk controller software that creates large numbers of false disk error reports. The implications of these problems are explored by Wikibon experts in the articles in this newsletter. They are both surprising and very significant.

G. Berton Latamore

The Disk Drive Mortgage Crisis

Wikiview

The field-replaceable disk drive unit is becoming a weak link in storage systems design. The laws of physics have brought a relentless increase in disk capacities: since the early 1990s drive capacities have increased 1000X, while drive speeds have been unable to keep pace, increasing only 2-3X. In addition, the risk of data loss is escalating due to longer rebuild times and onerous disk drive failure rates that are many times higher than published specs. The result is an increasingly untenable time to recover from failures and dramatically increased exposure to data loss.
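As a back-of-the-envelope sketch of why recovery windows stretch, the time needed to read or rewrite an entire drive grows by the ratio of capacity growth to speed growth. The function name is illustrative, and the sketch assumes that "drive speed" stands in for sustained transfer rate and ignores controller overhead and competing application I/O, so it is a rough indication rather than a measurement.

```python
def full_drive_time_growth(capacity_growth: float, speed_growth: float) -> float:
    """Factor by which the time to read or rewrite an entire drive has grown.

    A full pass takes capacity / transfer rate, so the growth in that time
    is the ratio of the two growth factors.
    """
    return capacity_growth / speed_growth


# Growth factors quoted in the text: about 1000X capacity, 2-3X speed.
for speed_growth in (2, 3):
    factor = full_drive_time_growth(1000, speed_growth)
    print(f"speed up {speed_growth}X -> a full-drive pass takes about {factor:.0f}X longer")
```

Under these assumptions a full-drive pass, and with it the minimum rebuild window, takes several hundred times longer than it did in the early 1990s.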

These were the conclusions of the June 29th Wikibon Peer Incite, which featured two storage industry luminaries, Richie Lary and Steve Sicola of Xiotech Corporation.

The main findings of the research meeting are that the unit of failure should not be a disk drive, rather it should be a group of devices with embedded intelligence (i.e. a ‘brick’); with low level recovery mechanisms built into the unit. This in essence becomes one large logical device that is able to protect data far more effectively and at much lower cost.

NOTE: While no one on the call disputed these findings, subsequent feedback from suppliers suggests the industry has implemented means other than the Xiotech brick for solving this problem-- although Wikibon has not yet received field data results to confirm these assertions.

Most users are either not aware of these issues or not concerned because support contracts cover device replacement. Increased maintenance costs due to drive failures are not top of mind for most IT executives. However the Wikibon community agreed that as Cloud-scale infrastructures become more prevalent the problem will manifest itself in the form of noticeably higher costs, lost IT productivity, and potentially increases in data loss.

Insidious Data Loss Exposure

Annual failure rates (AFRs) for disk drives, according to research conducted by Schroeder and Gibson at Carnegie Mellon, range from 2%-13%, significantly higher than vendor spec sheets imply (i.e. AFRs of less than 1%). In 2007, Google published similar data based on its experiences with disk drive failure rates (i.e. 2%-13% AFRs). The fact is the physics of cooling and vibration are having ripple effects on drive failures that warrant consideration. Notably, these findings were focused primarily on ATA-based devices, which of course are becoming increasingly popular in most disk array designs. As well, certain vendors (e.g. EMC) have informed Wikibon that their AFRs on certain products are at the industry spec of around 1%.

Nonetheless, this research leads us to believe that such field data should be alarming to users. While techniques like RAID are presumed to mask drive failures, as drive rebuild times increase due to ever larger capacities exposure becomes more prominent. Further, storage optimization techniques such as data deduplication and compression compound the problem by squeezing even more data into devices and increasing rebuild times due to factors such as rehydration (i.e. the overheads associated with most solutions to un-dedupe or uncompress when necessary).

Users should consider three main factors related to this trend:

- Disruption to application performance during storage device rebuilds means real business costs.

- A “when in doubt, throw it out” mentality toward disk drives increases maintenance costs. Importantly, 85% of disk drive failures are no trouble found (NTF); however software has flagged that device as problematic and in need of replacement.

- According to Lary, the reliability of the RAID set is proportional to the square of the reliability of the drive; meaning AFRs in dramatic excess of published specs substantially increase the chance of data loss.

Consider the following example:

- Assume the probability of a drive failure in a year is 1% (.01). The probability of a double drive failure then is .01 X .01 or .0001 (1 in 10,000)-- seemingly a very low number and perhaps low enough to neutralize fears of a double drive failure during a RAID 5 rebuild.

- Based on independent studies from Carnegie Mellon and Google, the reality in the field is that AFRs are much higher and range up to 13%. Using an AFR of 5% increases the probability of a double drive failure by 25X (.05 x .05). So instead of a 1 in 10,000 chance of a double drive failure, users have a 25 in 10,000 chance of failure. If one uses the high end of the Carnegie Mellon range (13%), the data suggests a 169X increase in probability of failure relative to published specs (13% x 13%).
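The arithmetic in this example can be reproduced with a few lines of Python. This is a minimal sketch that keeps the example's own simplification (two independent failures, each with probability equal to the AFR); the function and constant names are illustrative. Real exposure also depends on the length of the rebuild window and the number of drives in the RAID set, which this sketch does not model.

```python
def double_failure_probability(afr: float) -> float:
    """Chance that two drives both fail, assuming independent failures
    that each occur with probability `afr` (the example's simplification)."""
    return afr * afr


SPEC_AFR = 0.01  # spec-sheet figure used as the baseline in the example

baseline = double_failure_probability(SPEC_AFR)
for afr in (0.01, 0.05, 0.13):
    p = double_failure_probability(afr)
    print(f"AFR {afr:.0%}: {p * 10_000:.0f} in 10,000, {p / baseline:.0f}X the spec case")
```

Because the double-failure probability scales with the square of the AFR, a field rate that is only 5X the spec produces a 25X increase in exposure.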

The point is that whatever figure is chosen, the threat is escalating and eventually will result in data loss. If the unit of replacement continues to be the disk drive, the situation will increasingly worsen because it takes longer to recover drives. This trend is a ticking time bomb (think credit default swaps).

Does SSD Solve the Problem?

Not completely. In some ways flash makes the problem worse because of the technology's intrinsic write reliability problems. This can be overcome with techniques like wear leveling but component failures are a random problem and so the probability of multiple failures is still high. On the plus side, the speed of recovery of SSD and flash is significantly faster so you can recover much quicker than with spinning disk. So in theory, a hybrid solution where flash or SSD is the recovery mechanism could help quite significantly. However Lary and Sicola believe that a hybrid brick approach is the best from the standpoint of overall system reliability.

The Lary-Sicola Answer

Lary and Sicola have led design teams created to solve this problem. Their premise is to change the replaceable unit to an enclosed group of devices that delivers an order-of-magnitude higher reliability. They call this innovation the Intelligent Storage Element (ISE). ISE is a brick-like unit that houses Datapacs holding ten 3.5” disk drives or twenty 2.5” disk drives, with the drives offset in a manner that reduces vibration and heat (disk drive killers) and increases the reliability of storage systems. The problem the Seagate gurus set out to solve relates to the fact that disk drives were never designed for vertical insertion into storage arrays, a technique used by many OEMs to pack more density into an array. This approach puts stress on drives, and failures become more common. Seagate of course was in a unique position to address this issue and minimize failure rates as well as address the significant problem of NTFs.

The team at Seagate re-examined the design of disk drives, made substantial firmware changes, and also altered how drives were mounted in arrays. The process took the better part of a decade but resulted in more stable and reliable drives that could perform self-monitoring and autonomic healing. Seagate reportedly shed the asset because its customers viewed the initiative as an offering that competed directly with their systems. The new Xiotech was formed, and the company offers the storage systems industry’s only five-year warranty based on the Seagate technology.

Users should be reminded that improved device reliability does not eliminate the need for proper backup and recovery procedures, as the brick is still a single point of failure. As well, disk drives inside of bricks will continue to fail, although perhaps at lower rates than conventional SATA devices. There are also other ways to achieve the objective of minimizing the weak link of a single replaceable disk drive. One way is to design a logical function similar to Xiotech's across multiple drives in an array and not necessarily create a brick-like unit.

The Future of Storage Systems Designs

Wikibon member Josh Krischer suggested the following premise:

- RAID 1/RAID 5 is obsolete,

- RAID 6 will survive through the end of the decade,

- Before the end of the decade we will see triple RAID or clustered RAID.

Lary and Sicola believe they have developed a form of clustered RAID where a group of devices is enclosed and the virtualization, control, recovery, and management of that cluster is done within the brick, and the group of devices (in this case ISE) becomes the replaceable unit. In theory the unit never fails, and in practice Xiotech is seeing a .1% failure rate over five years in the field, an order of magnitude better than today’s disk drives. These visionaries put forth the idea that the cluster of devices is very simple and has an open set of APIs using Web protocols (e.g. REST) that allows function to reside in the application (e.g. replication), and the array unit becomes a self-contained group of devices that essentially never fails.

Sicola and Lary see today’s controller architectures as falling into two camps: 1) the big box and 2) the smaller modular dual array controller, with the trend of course moving toward the small-box modular designs. The key issue with the small box is that the independence of the small-box controllers creates conflicts at the back end. The problem with big-box approaches is that they are big and expensive.

The answer according to Lary and Sicola is to put a high speed, low latency bus between the two controllers to act as a cluster to optimize performance at the back end. The future in their view is to get these controllers to cooperate and allow the application to control certain functions that today reside in disk array controllers (e.g. remote replication). By allowing the application to control function, the idea is to dramatically simplify the storage infrastructure and optimize performance and allow linear scaling for both capacity and performance.

Action item: The dirty little secret of some storage systems suppliers is that annual failure rates are substantially higher than vendor claims. This fact combined with the physics of disk drive capacity and performance exposes users to data loss that could be increasing at a rapid pace. Users should ask vendors for AFR data, dig into the math behind the numbers, understand their exposures, and begin to architect storage solutions that will reliably meet the needs of emerging Internet-scale environments.

CIO Quandary: Should Key Storage Functionality Reside in the Application or Infrastructure?

With structured and unstructured data in the enterprise (roughly 20% and 80% of the total respectively) expected to double every 18 months, storage and storage management solutions have edged up towards the top of the IT priorities and expenditures list.

The strategic decision point for CIOs is whether key storage functionality should reside in the infrastructure or if it should be managed by the application. The short answer is, “It depends”. The concept that the application should be more involved in managing function is compelling and changes the thought process for how IT shops should procure infrastructure.

Steve Sicola (CTO) and Richie Lary (Fellow) of Xiotech anchored a June 29, 2010 Peer Incite discussion - drawing over 100 IT practitioners from the Wikibon community – which focused on the pros and cons of each approach.

Benefits of Application Managed Storage

Sicola and Lary gave several reasons and scenarios for why storage management array features should move closer to the application including:

- Performance: The application approach “puts the right features for the server, application or device in play” with simple management APIs that allow the features to change to meet changing application requirements. “Little units of granularity offer better scalability.”

- Metrics and Tuning: An application approach allows IT to more accurately assess and increase drive performance or time to rebuild arrays, monitor failure rates and provide each customer improved QoS and minimal reconfiguration.

- Improved Provisioning: The application approach allows IT to buy in “application chunks” as opposed to larger purchases helping to lower costs.

- Chargeback: More tightly integrating storage management to each application allows IT to more intelligently assess performance and charge its customers for what they use as well as connecting performance to a specific cost structure.

Integrated Infrastructure Approaches

Symantec, Oracle, VMware, Microsoft, and other ISVs are all pulling more infrastructure function into their stacks. Examples include:

- Oracle’s ASM,

- Microsoft Exchange Database Availability Groups (DAGs),

- Symantec Storage Foundation, and,

- VMware thin provisioning, volume management and data movement.

Often, integrating function into the application can provide superior efficiency, integration, performance and availability, but not always. As well, application-specific infrastructure function will be tied to a particular application and infrastructure supporting that application. Being able to share infrastructure function across multiple applications confers cost advantage because the function is a fungible asset.

The strategic issue for CIOs is how to make this decision. Wikibon research shows that placing function in Oracle, for example, will almost always be more expensive but is often justified. With Microsoft Exchange the cost equation depends on scale: at fewer than 1,000 users embedding function into the app is cheaper, while at scale it’s more expensive. (See Cheap or Cost Effective IT note)

Bottom Line

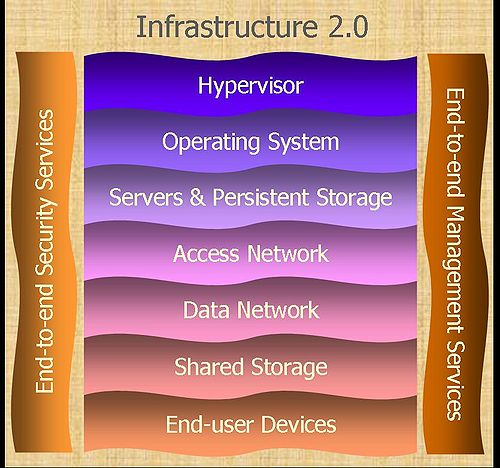

At the moment, the infrastructure has lots of complexity that doesn’t directly support the business (via the application). This is true of the network, servers and storage. Infrastructure 2.0 is about being able to reduce complexity, improve the way the application is protected, and enhance how it behaves from a performance standpoint. The goal is more efficient and agile infrastructure that can support changing business requirements and allows the business to pay for what is consumed.

Action Item: CIOs need to take an application view of infrastructure function. Design your infrastructure to be cost transparent and delivered to the business in a manner that allows the business to deploy the pieces of the infrastructure that are needed in a cost-effective way. This will accelerate the imperative for infrastructure 2.0 and directly add business value. For the purposes of this discussion, business value can be thought of very simply as either lower cost or increased revenue. For CIOs the choice of infrastructure strategy is a function of the priorities of the business and the application supporting it.

Defining Infrastructure 2.0

Infrastructure 2.0 Model

Infrastructure 2.0 is a computing model that Wikibon believes will dominate computing over the next decade or longer. It provides an integrated and pre-tested environment built on well tested best-of-breed volume components. It allows higher utilization of resources and flexible real-time addition and subtraction of resources without any significant impact on end-user availability or performance. The Infrastructure 2.0 model allows IT resources to be moved dynamically between physical machines and locations to meet resource balancing and data protection requirements. This movement can be between computing resources managed by an organization (the internal cloud) and computing resources that are managed outside the organization (the external cloud). A company's stack that is virtualized between internal and external locations is often referred to as a private cloud.

The focal point of an Infrastructure 2.0 model is support for an Application 2.0 environment that directly supports the objectives of an organization. The Infrastructure 2.0 provides the computing resources, compliance model, security model, and telemetry that will allow efficient, secure, and reliable deployment using a consumption model. The resources used by the application can be increased or decreased dynamically to meet business SLAs, and the organization pays for the resources actually consumed. The data created by the application is automatically classified and can be secured and destroyed to meet the business usage, security and compliance requirements.

Infrastructure 2.0 Components

The Infrastructure 2.0 environment consists of interoperating layers:

- Hypervisor Layer provides the single point of control for the application spread over multiple virtualized operating systems, servers, and locations.

- Operating System provides control of the IT resources attached to it. It runs applications and controls the server and storage resources.

- Server Layer provides the compute infrastructure to execute the applications and infrastructure layers, provides the inter-server network (iSCSI, Infiniband, etc.), provides the high-performance local persistent storage, and provides access to the shared data storage.

- Access Network Layer (utilizing a single “wire”) provides the logical and physical connections between the user of data and the IT resources.

- Data Network Layer (utilizing a single “wire”) provides the logical and physical connections between the applications and the data.

- Data Storage Layer provides a shared virtualized storage environment and the logical and physical connections between the application and the data storage infrastructure.

- Device Layer provides the logical and physical connections between the application and the end-user devices (static and increasingly mobile). The end-users are real people as well as data collectors.

Each of the layers is independent but provides information to the other layers that will allow end-to-end compliance and security of the data, with knowledge of the resources available and consumed and performance contribution to the application.

There are two services columns which run orthogonally to the component layers. These service columns are:

- Security Column provides a set of end-to-end security services required for either or both infrastructure or application integration.

- Management Column provides a set of end-to-end management services required to meet the business service level objectives and to create, monitor and delete sub-components in the layers.

Integration of Infrastructure 2.0 Components

Wikibon expects the component layers to be pre-integrated and pre-tested into Infrastructure 2.0 stacks. Infrastructure 2.0 will not be owned by one supplier. Winners will provide best-of-breed components that will work cooperatively with other components. Winners will provide pre-tested and pre-integrated Infrastructure 2.0 stacks with all the components included. Wikibon expects that a large variety of Infrastructure 2.0 stacks will be provided by traditional vendors, systems integrators, ISVs, and industry-focused solution providers. Creating and sustaining brand reputation for components and the integration of components will be a key business imperative for Infrastructure 2.0 providers.

While a few IT user organizations will be tempted to secure business advantage by creating these stacks themselves, the vast majority of IT users will minimize cost and maximize speed to deploy by selecting the optimum pre-tested and pre-integrated stacks and focusing on the application layer.

Key Benefits of Infrastructure 2.0

The key benefits of an Infrastructure 2.0 environment will be reduced cost of volume components, increased utilization of components, flexible provision of resources (increase and decrease), and flexible deployment of resources (location and ownership). Wikibon models expect the overall cost of computing to be decreased by at least 50%, and speed to deploy (flexibility) to be increased by an order of magnitude.

Cheap or Cost Effective IT: Can Your Company Tell the Difference?

Data center managers and CIOs may not be well positioned to report on the difference between cheap and cost-effective IT acquisitions. Acquisition cost represents only a fraction of the total cost of ownership for any technology. Often, CIOs are only directly responsible and directly impacted by a portion of the factors that determine operational cost.

Steve Sicola, CTO of Xiotech and the June 29, 2010 Peer Incite participants discussed a variety of factors that should be considered when choosing between suppliers and technologies. In the case of storage, and depending upon the workload, factors could include:

- $ per GB,

- $ per I/O,

- $ per GB/second,

- Expected utilization rate,

- Effective utilization rate,

- Power requirements,

- Cooling requirements,

- Floorspace requirements,

- The useful life of the storage system,

- The warranty period,

- Maintenance cost after warranty,

- Management cost,

- Software license cost.
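A minimal sketch of how several of these factors can be folded into one comparison follows. Every figure and name in it is a hypothetical placeholder rather than vendor data, and the model deliberately covers only acquisition price, utilization, power and cooling, management, warranty period, post-warranty maintenance, and useful life.

```python
from dataclasses import dataclass


@dataclass
class StorageOption:
    """One candidate system. All values are hypothetical placeholders."""
    name: str
    purchase_price: float        # acquisition cost, $
    raw_capacity_gb: float
    utilization: float           # fraction of raw capacity effectively used
    annual_power_cooling: float  # $ per year
    annual_management: float     # $ per year
    warranty_years: int
    annual_maintenance: float    # $ per year once the warranty has ended
    useful_life_years: int

    def total_cost(self) -> float:
        """Acquisition plus running costs over the useful life."""
        years_out_of_warranty = max(0, self.useful_life_years - self.warranty_years)
        running = (self.annual_power_cooling + self.annual_management) * self.useful_life_years
        return self.purchase_price + running + self.annual_maintenance * years_out_of_warranty

    def cost_per_usable_gb(self) -> float:
        """Lifetime cost divided by the capacity that is effectively used."""
        return self.total_cost() / (self.raw_capacity_gb * self.utilization)


options = [
    StorageOption("cheap to buy", 100_000, 100_000, 0.50, 12_000, 20_000, 1, 15_000, 5),
    StorageOption("dearer to buy", 150_000, 100_000, 0.80, 8_000, 10_000, 5, 15_000, 5),
]
for o in options:
    print(f"{o.name}: ${o.purchase_price / o.raw_capacity_gb:.2f}/GB to acquire, "
          f"${o.cost_per_usable_gb():.2f} per usable GB over {o.useful_life_years} years")
```

With these made-up inputs the system with the lower $ per GB at acquisition ends up with the higher lifetime cost per usable GB, which is the distinction between cheap and cost-effective that the list is meant to expose.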

Buyers should also add to that the impact to the business if service levels cannot be maintained. Even when data is protected with hardware or software RAID, performance may decline during a RAID rebuild process. Technologies that can avoid or minimize the performance impact of a RAID rebuild may be considered more valuable. Routine maintenance can also impact availability. Storage systems that offer non-disruptive software upgrades may be more valuable than those that do not.

Unfortunately, factors that should be included in the list of considerations cross budget lines. For example, the business unit may fail to meet revenue goals due to IT's failure to meet service levels, but the IT department may have no financial incentive to meet those service levels. Power and cooling may be the budget responsibility of the facilities manager and not a consideration for IT. IT may not even be concerned with power at all, at least until the facility reaches its maximum power load. Data management and data protection capabilities can be delivered in a variety of ways: in the storage system, in the operating system, or in the application. In larger organizations, software license expense may be charged against different cost centers, depending upon whether the software is licensed to a storage controller, a function of the operating system, or a feature of an application.

IT organizations that have accountability without authority or authority without accountability are significantly limited in their ability to effectively control the total operational cost of IT investments. Often reporting must be at the level of the CFO to gain a complete, consolidated and accurate view of cost.

Action item: Organizations should establish measurement systems to report total operational costs associated with IT investments. Cost and benefit estimates need to cross organizational boundaries, and reporting needs to be elevated to a sufficiently high level to ensure that budget boundaries don't lead to poor IT investment decisions. Often, that total accountability will only be reached at the level of the CFO.

The Billion Dollar Storage Time Bomb

As a young account manager, I was sitting late at night in the data center with the acting CIO of a retail company reviewing an order-fulfillment project from hell. The project had driven the CIO to a nervous breakdown and he was on sick leave. The project had tight deadlines and the software was new and unstable. Nobody had made an obvious mistake, but things had gone badly wrong and the system was being recovered for the third time. We were on the very last recovery tape. If this did not work, we could not recover the system. We were reviewing our options if the backup failed. We discussed how to re-enter the data, how many people it would take and how long it would take. We discussed how to present this to the CEO. I remember the fear in the pit of my stomach that the company would probably go under. The last backup worked, the CIO recovered and has been a lifelong friend. I have always used that fear-factor to guide me in assessing project risk.

I was reminded of this incident a few years ago when I was sitting with another acting CIO from an insurance company and discussing the results of a failed project that had caused his predecessor to be fired. The project was to replace a bespoke system with packaged software and reduce costs by $20 million. They had cut over, but the new system could not provide the functionality, and they had to employ a large number of people to keep the system from failing. The project failure had cost the company nearly $0.5 billion in lost revenue and business opportunity. The acting CIO mused on the fact that the failure of the IT project had very nearly caused the company to go under and what could have been done to reduce project risk. I felt that fear-factor again in the pit of my stomach.

In both of these cases, everybody had good intentions. Both projects were reviewed, and the payback seemed worthwhile. There were no obvious big mistakes, just a series of “unlucky” events. Human beings are very poor at judging the impact of unlikely risks that have big consequences – Nassim Nicholas Taleb’s “black swans”. The recent economic collapse was a direct result of failing to take into account the high consequence of a series of low probability risks. The fact that those involved could take these bets and be insured against disaster with taxpayers’ money only compounds the problem.

Richie Lary and Steve Sicola of Xiotech Corporation spoke persuasively at the June 29, 2010 Peer Incite about the growing risk of disk failure. They argued from a very informed vantage point that the probability of disk failure was up to 13 times higher than the failure rates reported by drive and array manufacturers. Independent research confirms that view. The killer problem is not a single failure, but a double or triple drive failure. These probabilities are up to 150 times higher for double disk failure, or up to 2,000X higher for triple drive failure, than those suggested by the manufacturers. The technology trends point to increasing recovery times in the event of a disk failure, and there is a rapidly increasing number of disks in service in ever denser and less reliable packaging. Good intentions abound, but the pressures of meeting vendor shipment targets and staying within IT budgets are significantly greater than the ability to understand and judge the true risks of data loss. And as with the bankers, there will be no severe consequences for the individuals if failure occurs.

The fear-factor feeling is back in the pit of my stomach.

Action item: Wikibon predicts that there will be a billion-dollar catastrophic business failure caused by data loss in the next five years. CIOs, auditors and storage vendor CEOs must take aggressive actions to ensure that it does not happen to their organization on their watch.

Controller Software Complexity, Disk Failure, and Function

The Bottleneck - Controller Software

Much of the June 29th Peer Incite discussion concerned practitioner views on the complexity building up in storage controllers as a result of the inclusion of a host of storage optimization features, including thin provisioning, compression, dedupe, snapshots, encryption and key management, and others. Presenters Richie Lary and Steve Sicola were asked if this expansion in controller software intelligence contributes to higher drive failure rates (the topic of the call) over historic averages from the time when controllers were responsible primarily for RAID and device I/O. The general consensus answer was “probably, because all this software makes the disk work harder”. But without a focused assessment, it is difficult to know. However, it was proposed that storage controllers and controller functionality are becoming a bottleneck to application performance (complexity of software could be one reason), and that software errors could be a growing cause of disk failure, second to the adverse effect of environmental problems with vibration and cooling. And environmental problems with vibration and cooling could be easier to deal with than software errors.

Moving Storage Functions to the Application

This led to a debate over whether these controller-based functions should be the responsibility of the application or embedded in the storage infrastructure. The answer to this question depends on what you define as an application. Is an application a business system like JD Edwards, an infrastructure application such as SameTime, a database application such as Open Office or CFO Central by Oracle, or a database management system or operating system? The other dependency is on the specific storage management function being considered. According to Lary and Sicola, storage systems should be designed to do only three things – be available to the application, protect data for the application, move data when necessary. As the user and vendor community settles in on this debate over time, its impact on the overarching business imperative of reducing complexity in modern IT must be considered. Open, service based standards and APIs being developed by the Cortex Developers Community and others for driving more interoperability and less complexity between a host of application and storage environments is part of the consideration.

What it Means

So what does this mean in terms of failure rates and storage reliability? There are three main points:

- First, controls on the environment reduce drive failure rates. Technologies such as the Intelligent Storage Element (ISE) from Xiotech are improving drive reliability by reducing failures due to vibration and cooling problems. ISE is helping users achieve much higher reliability rates for regular disk drives enclosed in a typical storage drive bay—reducing service events and their impact on IT organizations. Because of such reliability, Xiotech provides a five-year hardware warranty with all its ISE-based devices.

- Second, the impact of disk controller software complexity on disk failure is uncertain. According to Seagate Technology, almost three-quarters of drives returned as "failed" are found to be NTF, or no trouble found. Could the source of the failure be poor controller software quality, and poor error detection and correction programming (according to Lary and Sicola, only 5% of controller software is devoted to error handling and reporting)?

- And third, as the debate over which storage optimization functions belong to the application layer vs. infrastructure layer rages on, more error management functions should be designed in to the APIs, and the impact of this transition of these functions on disk failures should be measured and evaluated from a risk, cost, and business value perspective.

Action item: Look at the cost and value implications of storage system hardware warranties vs. maintenance plans. Push the vendor community to provide better warranties to reduce maintenance costs. Consider your own experience with disk failure rates, and the impact of CAPEX and OPEX costs of a warranty vs. maintenance plan. And, if you’re an application lead, get someone on your team involved in communities such as Cortex and VMware who are developing storage APIs. As storage functions move closer to the application stack, determine the impact on development and maintenance skill sets, testing, troubleshooting, and application interoperability.

Footnotes: June 29 Peer Incite: The Future of Storage - A discussion with technology gurus