
Update: See "The Cost of Storage Array Migration in 2014"

The data in this professional alert has been updated in "The Cost of Storage Array Migration in 2014".

Cost of Migration with Traditional Storage Arrays

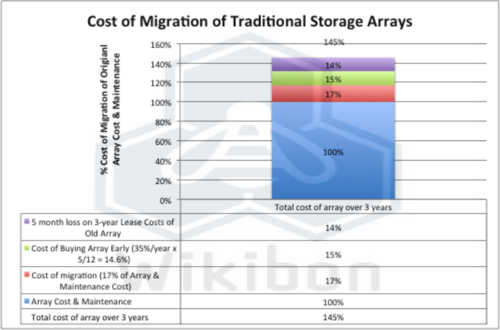

One of the major costs of traditional storage arrays is commissioning and decommissioning them. Figure 1 shows the cost of migrating from a previous-generation array to a new array. Wikibon studies show that the average migration time for a storage array is 5 months, with some migrations taking as long as 12 months. The cost of migration includes:

- The overlapping cost of the old array (5 months of a three-year lease)

- The operational cost of planning and executing the migration of volumes from the old array to the new array

- The cost of buying the new array 5 months earlier than required (storage costs decline by about 35% each year).

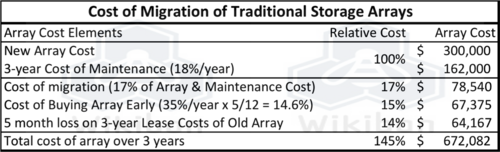

In our experience, most IT organizations don't carefully account for array migration expenses because they are viewed as ongoing staff costs or as sunk costs that are depreciated. Vendors, for their part, haven't drawn clients' attention to this problem because doing so would create a negative marketing perception with customers. However, array migration costs are onerous, and CIOs should take notice. The total cost of migration of a typical array is shown in Table 1 in the footnotes below, expressed as the additive costs above and beyond the initial CAPEX and lifetime OPEX.

In particular, Figure 1 shows that migration typically adds about 45% to the total cost of ownership of a storage array. If the cost of an array is $300,000 and the cost of maintenance over 3 years is $162,000, the cost of migration is more than $200,000, or about 45% of the combined $462,000 cost of the array and maintenance. If the migration took 12 months, the cost of migration would exceed $300,000, more than the cost of the original array.
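The arithmetic behind these figures can be sketched as follows. The array cost, maintenance cost, 45% share, 5-month duration, three-year lease and 35% annual price decline come from the text. The split into components is only an illustration: it assumes the old array cost the same over three years as the new one and that prices decline smoothly through the year, and it treats whatever is left over as the operational cost. It is not a reproduction of Table 1, and all function names are hypothetical.

```python
# Figures quoted in the text.
ARRAY_COST = 300_000         # purchase price of the new array ($)
MAINTENANCE_3Y = 162_000     # maintenance over three years ($)
MIGRATION_SHARE = 0.45       # migration cost as a share of array + maintenance
MIGRATION_MONTHS = 5         # average migration time
LEASE_MONTHS = 36            # three-year lease
ANNUAL_PRICE_DECLINE = 0.35  # storage costs decline about 35% a year


def headline_migration_cost(array_cost, maintenance, share=MIGRATION_SHARE):
    """Migration cost as a fixed share of array cost plus maintenance."""
    return share * (array_cost + maintenance)


def overlap_cost(old_array_3y_cost, months=MIGRATION_MONTHS,
                 lease_months=LEASE_MONTHS):
    """Cost of keeping the old array running for the migration period."""
    return old_array_3y_cost * months / lease_months


def early_purchase_cost(array_cost, months=MIGRATION_MONTHS,
                        annual_decline=ANNUAL_PRICE_DECLINE):
    """Premium paid for buying `months` earlier than strictly needed.

    ASSUMPTION: the price falls smoothly (compounding) during the year.
    """
    later_price = array_cost * (1 - annual_decline) ** (months / 12)
    return array_cost - later_price


headline = headline_migration_cost(ARRAY_COST, MAINTENANCE_3Y)

# ASSUMPTION: the old array's three-year cost equals the new array's.
overlap = overlap_cost(ARRAY_COST + MAINTENANCE_3Y)
early = early_purchase_cost(ARRAY_COST)

# ASSUMPTION: the remainder is the planning and execution (staff) cost.
residual = headline - overlap - early

print(f"headline migration cost: ${headline:,.0f}")
print(f"  old-array overlap:     ${overlap:,.0f}")
print(f"  early purchase:        ${early:,.0f}")
print(f"  implied operations:    ${residual:,.0f}")
```

Under these assumptions the headline figure is $207,900, of which about $64,000 is overlap and about $49,000 is the early-purchase premium, leaving roughly $94,000 for planning and execution. Changing `MIGRATION_MONTHS` shows how quickly the first two components grow with a longer migration.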

The difficulty of moving data between arrays without serious planning and without taking the application systems down is a major inhibitor to providing scale-out software-led infrastructures. As a result, data tends to stay on the array where it was originally placed, and not on the optimum array available, creating poor utilization and waste. Wikibon estimates the cost of data storage and management at most organizations is between 100% and 200% higher than needed.

Federated Storage Approaches

There have been a number of approaches to federated storage from many of the major storage vendors. For example:

- IBM’s SAN Volume Controller (SVC) provides all the storage management and advanced functionality within the virtualization controller. This allows a number of storage arrays to be virtualized under the SVC, and allows data to be moved dynamically between arrays.

- Hitachi’s approach places the virtualization within the storage array itself and allows additional arrays to be virtualized. Hitachi’s array migration capability, High Availability Manager (HAM), allows dynamic migration of data from one Hitachi storage array to another.

- EMC’s VPLEX provides a similar virtualization approach, but differs from the IBM and Hitachi approaches in keeping the major storage functionality (e.g., SRDF) within the storage arrays in the VPLEX. The EMC VPLEX enables metro-distance migration and management of data. EMC also has a Federated Live Migration capability which is restricted to migration of DMX arrays to VMAX.

- HP’s 3PAR allows a loose federation between 3PAR arrays, and allows dynamic Peer Migration of data from one 3PAR array to another.

- NetApp Data ONTAP allows a cluster of NetApp storage arrays to be dynamically built and modified. All the nodes in the cluster contain the metadata about all the block and file storage resources. Volumes and ports are fully virtualized and can be migrated between and across array nodes. All the storage nodes currently have to be within a single data center; metro distances are not supported. APIs are available for external management of the cluster.

Software-led Storage within a Software-led Infrastructure

Wikibon has defined Software-led Storage as, in particular, the ability to separate storage services from the underlying storage. These services include:

- Replication

- Snapshots & Clones

- Hypervisor integration

- Compression/de-duplication

- Encryption

- Archiving

- Backup

- Storage Management

- Metadata Management

The key advantages of Software-led Storage are:

- The ability to dynamically move data to an optimum place;

- The ability to provide storage services as needed, based on user- or application-defined requirements.
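As a rough illustration of these two advantages, the sketch below picks a placement for a volume from application-defined requirements. It is not based on any vendor's API: the `StoragePool` and `Requirement` types, the `choose_pool` function, and all pool names, latencies and prices are hypothetical values chosen only to show the idea.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class StoragePool:
    """A place where data can live (hypothetical model)."""
    name: str
    latency_ms: float     # typical IO latency
    cost_per_gb: float    # relative cost, illustrative only
    services: frozenset   # storage services available on this pool


@dataclass(frozen=True)
class Requirement:
    """What an application asks for, independent of any array."""
    max_latency_ms: float
    services: frozenset


def choose_pool(pools: Iterable[StoragePool],
                req: Requirement) -> Optional[StoragePool]:
    """Return the cheapest pool that meets the requirement, or None."""
    candidates = [
        p for p in pools
        if p.latency_ms <= req.max_latency_ms and req.services <= p.services
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda p: p.cost_per_gb)


# Illustrative pools; every number here is made up.
POOLS = [
    StoragePool("flash", 0.5, 2.00,
                frozenset({"replication", "snapshots", "encryption"})),
    StoragePool("disk", 8.0, 0.30,
                frozenset({"replication", "snapshots"})),
    StoragePool("archive", 50.0, 0.05,
                frozenset({"encryption"})),
]

if __name__ == "__main__":
    req = Requirement(max_latency_ms=10.0, services=frozenset({"snapshots"}))
    pool = choose_pool(POOLS, req)
    print(pool.name if pool else "no pool satisfies the requirement")
```

The point of the sketch is that the requirement names services and a latency target rather than a specific array, so the same policy can be re-evaluated and the data moved when pools are added or retired.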

The obvious cost justification is the removal of the 100% to 200% cost overhead that results from data being difficult to migrate, together with the ability to fully automate storage management.

All the current federated approaches have shortcomings, such as bottlenecks on virtualization controllers. They offer only limited ability to migrate data between arrays, or among servers, arrays and archive systems.

The most sophisticated storage array clustering approach available at the moment is NetApp’s Data ONTAP. Wikibon expects that the architecture will expand to support metro distances and additional nodes in the future. The architecture allows the addition of purpose-built, flash-only array nodes, which will be essential to provide low-latency, low-variance IO.

Data ONTAP has great potential, in our view, to facilitate the movement to a Software-led Storage paradigm. To make it a true contender, NetApp will need to expand the capability to include data held in servers (particularly flash), and provide higher-speed, lower-latency sub-networks. NetApp will also need to provide an extended metadata strategy, improved automation of data movement, connections to an archived data layer held on magnetic media, and RESTful APIs for external management.

Action Item: Array migration costs are a glaring example of the waste endured by many customers over the past ten years. While federated/clustered approaches are addressing this problem, the movement to software-led storage within software-led infrastructure is a strategic opportunity over the next five years. CIOs, CTOs and senior storage specialists should push storage vendors to lay out clearly how they intend to provide scale-out architectures, equipment and software that will enable data as a service - i.e. flexible and functional software running on commodity hardware that ties price paid to value delivered.

Footnotes: