NetApp's value proposition to clients is based on a unified storage architecture that makes the same storage efficiency and management functionality available for all data, regardless of differences in the underlying hardware. The core of NetApp's product strategy is its proprietary ONTAP operating system, built around the WAFL (Write Anywhere File Layout) file system. ONTAP began with support for NFS and has since expanded to support CIFS, iSCSI and FC, creating that unified architecture.

The key storage efficiency factors that NetApp users should consider are covered in the following sections of this Alert: virtualization savings, deduplication savings, future savings and overall savings.

Virtualization savings

The WAFL file system is divided into 4K blocks that consume "real" storage capacity only when data is written to them. This allows much more efficient space utilization than conventional arrays, where data must be written contiguously, leaving large gaps in physical storage. In addition, WAFL allows data to be moved within an array without disruption. This and the other features listed below significantly improve utilization and ease of management.

- Virtualization enables storage admins to make logical copies of data without consuming physical capacity (zero-capacity snapshots). Traditional arrays make snapshot copies using copy-on-write: before new data is written to the old location, the original data is copied to a separate snapshot location. In this arrangement the original copy is the current "master", and it is copied to create versions representing a point in time. NetApp's approach (and that of other vendors using virtualization) writes new data once, to a new location, and then maintains two logical versions: one representing the current data and the other representing the original point-in-time data. This approach is much more space efficient when making many copies (i.e. more than two).
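The difference between the two snapshot schemes described above can be illustrated with a toy Python model. It is a sketch under simplifying assumptions, not ONTAP code: the class names and the `run_workload` helper are hypothetical, blocks are allocated only when written, and the model counts physical block writes for the same workload rather than reproducing the space figures discussed in this Alert. In the redirect-on-write model a snapshot is just a frozen copy of the block pointers, so taking it consumes no data blocks.

```python
# Toy model (hypothetical, not ONTAP code) comparing physical block writes for
# copy-on-write (COW) and redirect-on-write (ROW) snapshots.
# Assumes every block is written before the first snapshot is taken.


class CopyOnWriteVolume:
    def __init__(self):
        self.active = {}      # logical block -> data, overwritten in place
        self.snapshots = {}   # snapshot name -> {logical block: preserved data}
        self.block_writes = 0

    def snapshot(self, name):
        self.snapshots[name] = {}          # nothing is copied until an overwrite

    def write(self, lbn, data):
        if lbn in self.active:
            # Copy the old data out to each snapshot that still shares it...
            for saved in self.snapshots.values():
                if lbn not in saved:
                    saved[lbn] = self.active[lbn]
                    self.block_writes += 1
        self.active[lbn] = data            # ...then overwrite in place.
        self.block_writes += 1

    def read(self, lbn, snap=None):
        if snap is None:
            return self.active[lbn]
        return self.snapshots[snap].get(lbn, self.active[lbn])


class RedirectOnWriteVolume:
    def __init__(self):
        self.physical = []    # append-only list of physical blocks
        self.active = {}      # logical block -> index into self.physical
        self.snapshots = {}   # snapshot name -> frozen copy of the block map

    def snapshot(self, name):
        self.snapshots[name] = dict(self.active)   # pointers only, no data copied

    def write(self, lbn, data):
        self.physical.append(data)                 # always write to a new block
        self.active[lbn] = len(self.physical) - 1  # redirect the pointer

    @property
    def block_writes(self):
        return len(self.physical)

    def read(self, lbn, snap=None):
        block_map = self.active if snap is None else self.snapshots[snap]
        return self.physical[block_map[lbn]]


def run_workload(vol, n_blocks=4, n_snaps=3):
    for lbn in range(n_blocks):
        vol.write(lbn, f"v0-{lbn}")
    for s in range(1, n_snaps + 1):
        vol.snapshot(f"snap{s}")
        vol.write(0, f"v{s}-0")            # overwrite block 0 after each snapshot
    return vol.block_writes


cow, row = CopyOnWriteVolume(), RedirectOnWriteVolume()
print(run_workload(cow), run_workload(row))  # 10 7
```

With three snapshots and one overwrite after each, the copy-on-write model performs 10 block writes against 7 for redirect-on-write, because every first overwrite after a snapshot costs an extra copy. Both models still return the correct point-in-time data, e.g. `row.read(0, "snap1")` and `cow.read(0, "snap1")` both give `"v0-0"`.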

Wikibon has previously investigated the benefits of virtualization and believes that the median gain is an efficiency improvement of 60%, or overall capacity savings of 40%. However, the overall reduction in the cost of storage is smaller, because the number of I/Os that must be processed remains the same and the storage management requirement is unchanged. Assuming that disks account for about half of total array spend, the cost savings from virtualization and the additional features it enables are about 30%.

Deduplication savings

NetApp offers a data de-duplication feature designed to reduce primary storage capacity (as opposed to most de-dupe implementations, such as those from Data Domain, which are aimed at backup). WAFL de-duplication identifies duplicate 4K blocks using a signature generated as each block is written: a weak 32-bit digital signature of the block, with candidate matches then compared bit-by-bit to ensure there is no hash collision. The de-duplication work is done in the background when controller resources are sufficient. The overall saving varies by customer and, because the controller prioritizes other work, it is not achieved every time. A figure of 30% could reasonably reflect the saving in disk capacity. Because the data is rehydrated when it is read, there is no improvement in I/O or bandwidth. The overall impact on cost is about 15%.
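The signature-then-verify approach described above can be sketched in Python. This is a toy model under stated assumptions, not NetApp's implementation: the source does not name the 32-bit signature function, so CRC-32 from the standard `zlib` module stands in for it, the `DedupVolume` class is hypothetical, and duplicates are merged inline at write time for simplicity, whereas the text notes that NetApp performs this work in the background.

```python
import zlib

BLOCK_SIZE = 4096  # WAFL block size from the text


class DedupVolume:
    """Hypothetical toy block-level deduplication (not ONTAP's implementation)."""

    def __init__(self):
        self.physical = []      # unique 4K blocks actually stored
        self.by_signature = {}  # 32-bit signature -> physical block indices
        self.block_map = []     # logical block number -> physical block index

    def write(self, data: bytes) -> None:
        if len(data) != BLOCK_SIZE:
            raise ValueError("expected a 4K block")
        signature = zlib.crc32(data)  # weak 32-bit signature (CRC-32 stand-in)
        for idx in self.by_signature.get(signature, []):
            # A signature match is only a candidate: confirm byte-for-byte so a
            # hash collision can never merge two different blocks.
            if self.physical[idx] == data:
                self.block_map.append(idx)
                return
        self.physical.append(data)
        self.by_signature.setdefault(signature, []).append(len(self.physical) - 1)
        self.block_map.append(len(self.physical) - 1)

    def read(self, lbn: int) -> bytes:
        # Reads return the full logical block, so I/O per read is unchanged.
        return self.physical[self.block_map[lbn]]

    def capacity_saving(self) -> float:
        return 1 - len(self.physical) / len(self.block_map)


vol = DedupVolume()
a, b, c = (bytes([n]) * BLOCK_SIZE for n in (1, 2, 3))
for block in (a, b, a, a, b, c):
    vol.write(block)
print(len(vol.physical), vol.capacity_saving())  # 3 0.5
```

Six logical blocks containing three distinct patterns occupy three physical blocks, a 50% capacity saving in this contrived example. The `read` method illustrates why de-duplication does not reduce I/O: every logical read still returns a full block.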

Future savings

The architecture of the hardware, ONTAP and WAFL could support a future implementation of compression for NetApp. A reasonable implementation of compression could deliver about a 2:1 reduction in capacity. Because there would be less data, controller overhead would also fall somewhat, through less data movement and better cache efficiency; this saving is estimated at 20%. The overall cost reduction from compression would be about 35%.
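The per-feature cost figures in the three sections above follow one pattern: the disk share of spend multiplied by the capacity saving, plus the controller share multiplied by any controller saving. The helper below is a hypothetical sketch of that arithmetic. It assumes the 50/50 disk/controller split stated in the text and takes the 60% virtualization figure as the capacity saving on the disk half of spend, which is the reading that yields the 30% quoted for virtualization. The text does not spell out how these per-feature savings are combined into the overall totals, so the sketch stops at the per-feature figures.

```python
# Hypothetical helper reproducing the per-feature arithmetic in the text:
# cost saving = disk share x capacity saving + controller share x controller saving.
DISK_SHARE = 0.5        # disks are about one half of total array spend
CONTROLLER_SHARE = 0.5  # the other half goes to controller functions


def cost_saving(capacity_saving, controller_saving=0.0):
    return DISK_SHARE * capacity_saving + CONTROLLER_SHARE * controller_saving


features = {
    "virtualization": cost_saving(0.60),              # 60% capacity saving
    "deduplication": cost_saving(0.30),               # 30% capacity saving
    "compression (future)": cost_saving(0.50, 0.20),  # 2:1 capacity, 20% controller
}
for name, saving in features.items():
    print(f"{name}: {saving:.0%}")
```

This prints 30%, 15% and 35%, matching the figures quoted for virtualization, de-duplication and compression respectively.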

Overall savings

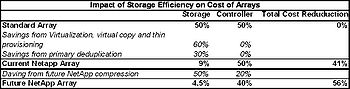

Table 1 shows Wikibon's estimate of the overall cost savings users should expect from NetApp's storage efficiencies, relative to traditional arrays. Currently the median saving in storage costs is projected at about 41%. Compression, if and when it arrives, will significantly improve storage efficiency, increasing the savings to 56% of today's storage costs.

The table uses a standard array with no space efficiency features as the baseline and estimates that about 50% of the cost is allocated to controller functions and 50% to storage devices. Wikibon research indicates that by applying storage virtualization, virtual copies and thin provisioning, users will achieve an additional 60% saving in storage costs (from improved utilization). Data de-duplication applied to primary storage will provide an additional 30%.

We believe this represents a reasonable estimate of the efficiency of a 'typical' NetApp array, whereby the storage costs of NetApp arrays would be reduced by 41% as compared to traditional arrays (50% x 60% x 30% = 9%; 100%-50%-9%=41%).

Action Item: NetApp has one of the best track records in delivering space-efficient storage. The savings compared with traditional arrays are significant, and consumers of these products should assess the potential of a NetApp approach. However, NetApp is running out of headroom to improve storage efficiency, and existing NetApp customers should assume that compression will be the last significant storage efficiency boost it delivers.
