
Executive Summary

Wikibon found strongly positive responses in the 2011 and 2012 VMware user surveys from practitioners who have installed hybrid storage arrays for virtualized environments.

The Wikibon community is interested in comparing the hybrid approach of flash as a front-end (flash-first) to the traditional array approach with flash storage as a cache, particularly in virtualized environments. Wikibon has recently talked in depth to storage practitioners and storage executives, as well as to vendors and other consultants, about the different architectural approaches. As a result of this research, Wikibon is suggesting a definition of hybrid storage, and a model that estimates the cost benefits of the approach.

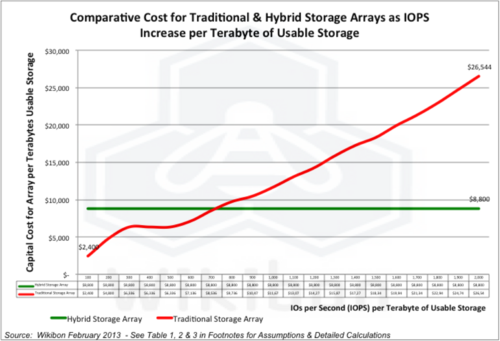

Wikibon has analyzed the cost and performance of flash-first hybrid arrays compared with traditional architectures. In high-performance environments the hybrid approach is clearly superior, both in cost and performance. Figure 1 shows a high-level view of the data and the strategic fit for hybrid systems. For IO rates greater than 700 IOPS per usable terabyte, the hybrid approach is superior.

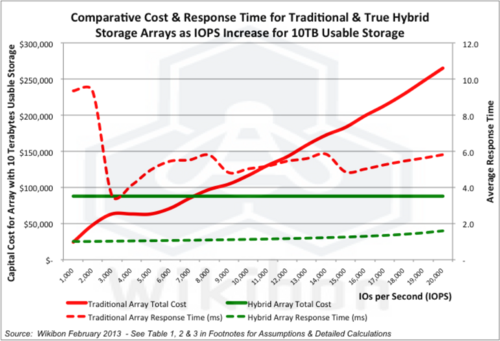

Figure 2 below, together with Tables 1, 2, and 3 in the footnotes, shows the cost analysis in more detail from the perspective of a 10-usable-terabyte array, including response time comparisons. For example, an environment requiring 15,000 IOPS from 10 terabytes of usable storage would require:

- 64 drives and 1 TB of flash in a traditional storage array with a flash cache;

- 16 drives including 2.4TB of flash in a hybrid storage array.

The traditional storage array would cost more than twice as much as the hybrid ($190,000 vs. $88,000):

- The response time of the traditional array would be three times that of the hybrid storage array (5.1ms vs. 1.7ms);

- For databases such as Microsoft SQL Server or Oracle Standard Edition, this creates the potential to reduce the number of cores required to deliver the necessary performance, and to redeploy database licenses that cost between $15,000 and $20,000 per processor core.

- In lower-performance environments (without latency-sensitive workloads such as databases or VDI), traditional storage arrays are likely to be the lower-cost option, and the improved performance of a hybrid array is less likely to justify its cost.
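As a quick arithmetic check on the example above, the "more than twice" and "three times" comparisons can be computed directly from the quoted figures. This is only an illustrative sketch; the dictionary layout is an arbitrary choice and the numbers are the ones stated in the example.

```python
# Figures quoted in the 15,000 IOPS example above.
traditional = {"cost_usd": 190_000, "drives": 64, "response_ms": 5.1}
hybrid = {"cost_usd": 88_000, "drives": 16, "response_ms": 1.7}

cost_ratio = traditional["cost_usd"] / hybrid["cost_usd"]
rt_ratio = traditional["response_ms"] / hybrid["response_ms"]
drive_ratio = traditional["drives"] / hybrid["drives"]

print(f"cost ratio: {cost_ratio:.2f}")         # "more than twice as much"
print(f"response-time ratio: {rt_ratio:.1f}")  # "three times"
print(f"drive-count ratio: {drive_ratio:.0f}")
```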

For very large environments, flash-only arrays may be attractive, with a policy of migrating data to capacity disk as its IO demand declines. Over time, the relative cost of flash will continue to fall, and flash-first arrays (either hybrid or flash-only) are likely to become the standard high-performance arrays.

Senior IT executives of SMBs and of divisions of large companies will find a "true" hybrid storage technology of great value in helping to contain costs and improve performance. One area of focus for this low-latency technology is to help optimize infrastructure software expenditure, especially in a virtualized environment. Low-latency VM-aware storage will minimize the effort required to manage systems, allow more virtual machines to run, and reduce the number of cores required to run database environments. Low latency applied with purpose will decrease software budgets and improve end-user productivity.

VM-Aware Hybrid Definition

Wikibon suggests that hybrid storage arrays have three main characteristics:

- The IO queues for each virtual machine are fully reflected and managed in both the hypervisor and the storage array, with a single point of control for any change of priority.

- All data is initially written to flash (flash-first).

- Virtual machine storage objects are mapped directly to objects held in the storage array.

All these characteristics are very important to high-performance virtual environments and lead to a significantly lower cost of storage and improved performance. They are less important for low-performance virtual environments, where traditional lower-function storage arrays or JBODs may provide lower-cost solutions. This is explored in depth in the section “Storage Cost as a Function of IOPS” below.

Vendors have made many marketing claims that traditional storage arrays with some flash added can be called hybrid storage arrays. Adding flash will always improve performance to some extent. However, Wikibon does not believe that this implementation will provide the same cost and performance advantages as a full implementation of a flash-first VM-aware hybrid architecture.

VM-aware Hybrid Architecture Detail

This section expands on the definition of VM-aware hybrid storage above and explores the architectural differences in detail. Readers more interested in the business implications can skip it or read it later.

- All data is initially written to the flash layer:

- In most implementations, two copies of the data are initially written to the flash layer, spread across the flash devices.

- The data will be trickled down asynchronously to the disk drive layer over time, where it is duplicated across multiple drives. The second copy in flash can then be eliminated.

- The flash layer is architected to spread the "wear-out" evenly across all the flash resources.

- The data is held in a non-volatile cache (e.g., capacitor-protected DRAM) and is de-duplicated and compressed before being written to flash.

- The data is written and organized to fit the write performance characteristics of NAND flash (e.g., as a virtualized log-structured file).

- This approach allows large amounts of flash storage to be implemented as the front-end storage. The flash storage is better managed as a single logical unit than as separate storage pools.

- In contrast, in traditional arrays the master copy of all data is held on disk. The cache holds read-only data, and writes are transmitted to disk. Non-volatile memory (NVM) in the storage controller cache is used to speed up random disk writes. Sequential writes go directly to disk, bypassing the cache. The requirement to push data down to disk early results in longer IO response times and increases the number of disk drives required to meet performance requirements.

- VM-aware IO Queue Management favors flash over disk:

- When applications run in a virtualized environment, the IO is managed from the hypervisor. As a result, IO from many different virtual machines is “blended” and appears to the storage array as random reads and writes.

- Random IO is an inefficient way to use traditional disks, which perform best with large blocks of sequential data.

- Flash storage is well suited to random reads and writes.

- VMware provides a separate IO queue for every virtual machine, and VMware controls allow for prioritization between the IO queues.

- In hybrid architectures, this queue and priority information is passed to the storage array; it is not transmitted to traditional storage arrays.

- In hybrid storage environments, the IO queues for each virtual machine are managed independently. This makes complete performance analysis available to the administrator, breaking out IO, CPU, and network performance for each virtual machine.

- Changes to priorities are made in one place (the hypervisor manager), and will ripple through automatically to the storage subsystem.

- Virtual machine storage objects are mapped directly to objects held in the storage array:

- In a virtualized environment, each virtual machine defines the storage objects (e.g., VMDKs) associated with the virtual machine.

- In the hybrid storage array, the VM storage object is mapped directly to a storage object on the storage array. For example, it may be mapped to a file within NFS-attached storage.

- This allows the performance of each VMDK to be measured accurately and aggregated to provide the performance information for each virtual machine.

- This approach also supports enhanced management of snapshots, backups, Storage vMotion, and other functions.
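The flash-first write path described above (two flash copies on write, asynchronous trickle-down to mirrored disk, then release of the second flash copy) can be pictured with a toy model. This is a minimal sketch under stated assumptions, not any vendor's implementation: the class `FlashFirstStore`, the device counts, and the round-robin placement are all hypothetical, and de-duplication, compression, and log-structured layout are left out.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class FlashFirstStore:
    """Hypothetical toy model of a flash-first write path (not a vendor design).

    Writes land in flash as two copies; a later asynchronous destage step
    mirrors each block on two disk drives and then frees the second flash
    copy. De-duplication, compression and log-structured layout are omitted.
    """
    flash_devices: int = 4
    disk_drives: int = 8
    flash: dict = field(default_factory=dict)      # block_id -> flash device ids
    disk: dict = field(default_factory=dict)       # block_id -> disk drive ids
    pending: deque = field(default_factory=deque)  # blocks awaiting destage
    _flash_cursor: int = 0
    _disk_cursor: int = 0

    def _place(self, count, pool_size, cursor_name):
        # Round-robin placement puts copies on different devices and spreads
        # wear evenly (assumes pool_size >= count).
        start = getattr(self, cursor_name)
        setattr(self, cursor_name, (start + count) % pool_size)
        return [(start + i) % pool_size for i in range(count)]

    def write(self, block_id):
        # The write completes once two flash copies exist; disk is not involved.
        self.flash[block_id] = self._place(2, self.flash_devices, "_flash_cursor")
        self.pending.append(block_id)

    def destage(self):
        # Background "trickle down": mirror each pending block on two disks,
        # then release the second flash copy.
        while self.pending:
            block_id = self.pending.popleft()
            self.disk[block_id] = self._place(2, self.disk_drives, "_disk_cursor")
            self.flash[block_id] = self.flash[block_id][:1]


store = FlashFirstStore()
for block in ("a", "b", "c"):
    store.write(block)
print(store.flash["a"], store.disk.get("a"))  # [0, 1] None
store.destage()
print(store.flash["a"], store.disk["a"])      # [0] [0, 1]
```

The point of the sketch is ordering: disk never sits on the write path, which is why flash-first designs avoid the early push to disk that the traditional-array bullet above describes.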

Storage Cost as a Function of IOPS

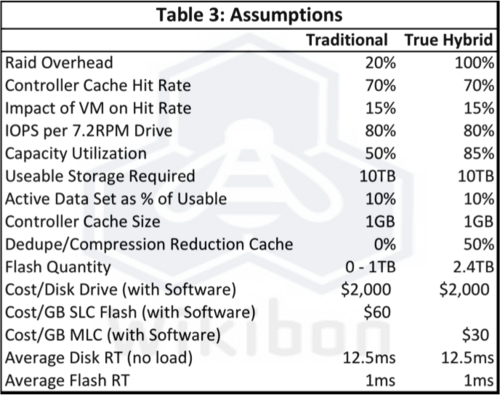

Wikibon investigated the impact of the three components of hybrid storage in depth. Figure 2 below shows the results of the research. Wikibon found an overhead for implementing a true hybrid architecture at the low end of the array market, where IO per second (IOPS) requirements are low. However, as IOPS increase, hybrid solutions become much more cost-effective than traditional alternatives. The break-even point is 7,000 IOPS on 10 usable terabytes (700 IOPS per terabyte), based on the assumptions in Table 3 and the calculations in Tables 1 and 2. The cost of the hybrid array stays constant, while above 7,000 IOPS on 10 usable terabytes the cost of traditional storage arrays increases dramatically.

Response Time Analysis

The most visible advantage of the hybrid approach is the improvement in average IO response time and its variance. For true hybrid storage, the response time ranged from 1ms at 1,000 IOPS to 2ms at 20,000 IOPS. For traditional storage without a flash cache, the response time was about 9ms, and with a flash cache it ranged from about 4ms to 6ms. IO response time becomes very important in workloads with databases, and in workloads with shifting peaks of high IO activity (e.g., VDI, Citrix, or VMware implementations, which almost always have IO storms at various times of the day).

Wikibon has shown that latency-sensitive workloads such as databases can be implemented more efficiently if IO latency is reduced below 2ms and the variance in IO latency is reduced as much as possible (probability of IO latency >8ms is <1%). When databases such as Microsoft SQL Server or Oracle Standard Edition are in use, reducing IO latency and increasing server DRAM creates the potential to reduce the number of cores required to provide the necessary performance. A planned virtual database system with eight cores, for instance, could be reduced to five cores by optimizing the server and storage technology. A SQL Server or Oracle database license costs between $15,000 and $20,000 per processor core, so eliminating three cores avoids a total of $45,000 to $60,000. The three freed cores could be used either to provide a three-core fail-over system using vMotion or to implement another database system.
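The license arithmetic in the paragraph above reduces to one multiplication. A minimal sketch, assuming simple per-core pricing at the quoted range; the function name is hypothetical and it ignores any vendor-specific licensing rules.

```python
def license_cost_avoidance(planned_cores, optimized_cores, cost_per_core):
    """License cost avoided by running a database on fewer licensed cores.

    Hypothetical helper: assumes a flat per-core price with no other terms.
    """
    return (planned_cores - optimized_cores) * cost_per_core


# Eight planned cores reduced to five, at the quoted $15,000-$20,000 per core.
low = license_cost_avoidance(8, 5, 15_000)
high = license_cost_avoidance(8, 5, 20_000)
print(f"${low:,} to ${high:,}")  # $45,000 to $60,000
```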

Low-latency storage also significantly reduces the effort DBAs and system administrators spend managing the system. In our conversations, hybrid users indicated that they spent fewer than five hours a week on administration, compared with two to four times as much for traditional arrays with flash as a cache. Low latency, ease of administration, and the availability of a complete performance profile by VM were cited as the major factors in eliminating work.

Action Item: CIOs, CTOs, and storage executives of SMBs or divisions of large companies should focus on optimizing infrastructure to minimize infrastructure software expenditure (including database software). Low-latency storage is the most important component in a virtualized environment. Low-latency VM-aware storage will minimize the effort required to manage systems, allow more virtual machines to run, and reduce the number of cores required to run database environments.

CIOs should ensure that the organization does not impede the use of low latency to reduce infrastructure software budgets and improve end-user productivity. For all storage environments where the IO rate is near or greater than 500 IOPS per terabyte, low-latency solutions such as hybrid storage systems should be included in the RFP.
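The screening rule above can be expressed as an IO-density check. This sketch combines the two densities quoted in this note: 500 IOPS per terabyte for RFP inclusion, and the 700 IOPS per terabyte break-even (7,000 IOPS on 10 usable terabytes). The function name, tier labels, and the use of a hard cut-off at 500 are illustrative assumptions; the note says "near or greater than", so workloads just below 500 are left to judgment.

```python
def hybrid_screen(iops, usable_tb, rfp_threshold=500, break_even=700):
    """Rough, hypothetical screen using the IO densities quoted in this note.

    rfp_threshold: IOPS per usable TB at which low-latency options such as
        hybrid arrays should be in the RFP (the note says "near or greater than").
    break_even: IOPS per usable TB above which the analysis found hybrid
        arrays cheaper (7,000 IOPS on 10 usable TB).
    """
    density = iops / usable_tb  # IOPS per usable terabyte
    if density > break_even:
        return density, "hybrid likely lower cost; include in RFP"
    if density >= rfp_threshold:
        return density, "near break-even; include hybrid in RFP"
    return density, "traditional array likely lower cost"


print(hybrid_screen(15_000, 10))  # (1500.0, 'hybrid likely lower cost; include in RFP')
print(hybrid_screen(2_000, 10))   # (200.0, 'traditional array likely lower cost')
```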

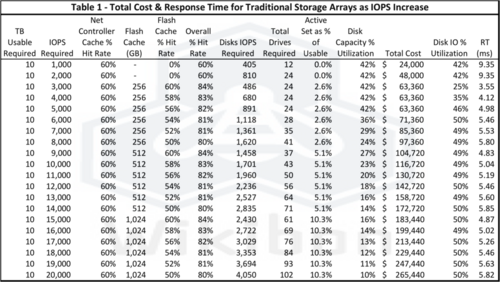

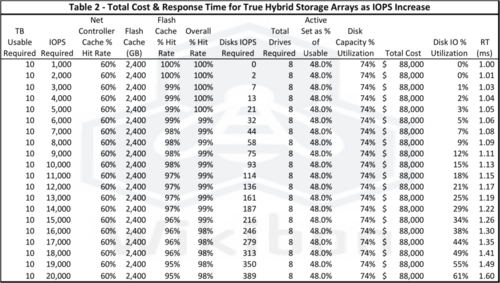

Footnotes: The following tables, assumptions and calculation notes are the basis for Figure 1 and Figure 2, and the examples in the posting.

Notes on calculations:

- Net Controller Cache Hit Rate is calculated from an assumed 70% cache hit rate without virtualization, reduced by a 15% impact from VMware. Net result is 70% × (1 - 15%) = 59.5%, rounded to 60%;

- Flash-cache hit rate uses a maximum of 60% for each size of Flash-cache (Source: NetApp Flash-cache best practice), modified by -2% for each additional 1,000 IOPS;

- Overall hit rate calculated from Net Controller Hit rate + (Flash Cache hit rate × (1 - Net Controller Hit rate));

- Disk IOPS required is IOPS required × (1 - % overall hit rate);

- Total Drives required is the minimum number of 1, 2, or 3TB drives required to meet capacity and performance requirements;

- Disk IO utilization is calculated as Disk IOPS required ÷ (total drives × average IOPS per disk (= 80));

- Total Cost is calculated as the sum of the disk components (# drives × $2,000) and the cost of flash (# gigabytes × cost/GB of SLC or MLC flash);

- Response Time (RT) is calculated as (% of flash IOs × flash RT) + (% of disk IOs × Disk RT × (1 + Disk IO Utilization ÷ (1 - Disk IO Utilization)))
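The calculation notes above can be chained into a small model of a traditional array with a flash cache. This is a sketch under stated assumptions, not a reproduction of Tables 1 to 3: the 70%, 15%, 60%, -2% per 1,000 IOPS, 80 IOPS per disk, and $2,000 per drive figures come from the notes, while `flash_rt_ms`, `disk_rt_ms`, `flash_cost_per_gb`, `max_disk_utilization` (a ceiling needed so the response-time term stays finite), and `baseline_iops` (the point where the -2% rule starts) are hypothetical parameters, since the Table 3 assumptions are not available as text. RAID or mirroring overhead on capacity is ignored, and the notes do not spell out the hybrid-array model, so only the traditional array is covered.

```python
import math
from dataclasses import dataclass


@dataclass
class CacheArrayResult:
    overall_hit_rate: float
    disk_iops: float
    drives: int
    disk_utilization: float
    total_cost_usd: float
    response_time_ms: float


def traditional_array_model(
    iops: float,
    usable_tb: float,
    flash_cache_gb: float,
    flash_cost_per_gb: float,           # hypothetical SLC or MLC price per GB
    flash_rt_ms: float = 0.5,           # hypothetical flash response time
    disk_rt_ms: float = 6.0,            # hypothetical unloaded disk response time
    max_disk_utilization: float = 0.7,  # hypothetical performance ceiling
    baseline_iops: float = 1_000,       # hypothetical start of the -2% rule
) -> CacheArrayResult:
    # Net controller cache hit rate: 70% without virtualization, less 15% for VMware.
    controller_hit = 0.70 * (1 - 0.15)  # 0.595, quoted as ~60%

    # Flash-cache hit rate: at most 60%, minus 2 points per additional 1,000 IOPS.
    extra_thousands = max(0, math.floor((iops - baseline_iops) / 1_000))
    flash_hit = max(0.0, 0.60 - 0.02 * extra_thousands)

    # The flash cache only sees IOs that missed the controller cache.
    overall_hit = controller_hit + flash_hit * (1 - controller_hit)
    disk_iops = iops * (1 - overall_hit)

    # Fewest 1, 2 or 3 TB drives that meet both capacity and performance.
    iops_per_disk = 80
    perf_drives = math.ceil(disk_iops / (iops_per_disk * max_disk_utilization))
    drives = min(
        max(math.ceil(usable_tb / size_tb), perf_drives) for size_tb in (1, 2, 3)
    )
    utilization = disk_iops / (drives * iops_per_disk)

    total_cost = drives * 2_000 + flash_cache_gb * flash_cost_per_gb

    # Disk RT inflated by queueing: 1 + U/(1-U), which equals 1/(1-U).
    response_time = overall_hit * flash_rt_ms + (1 - overall_hit) * (
        disk_rt_ms * (1 + utilization / (1 - utilization))
    )
    return CacheArrayResult(overall_hit, disk_iops, drives, utilization,
                            total_cost, response_time)


result = traditional_array_model(15_000, 10, flash_cache_gb=1_000,
                                 flash_cost_per_gb=20)  # hypothetical $/GB
print(result)
```

With these made-up parameters the drive count and cost will not match the published tables; the value of the sketch is in showing how each note feeds the next, and how the utilization term drives response time up as fewer drives carry more disk IO.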