Storage Peer Incite: Notes from Wikibon’s November 06, 2007 Research Meeting

Moderator: David Floyer & Analyst: David Vellante

This week Wikibon presents An early Christmas for storage administrators. In its recent announcement, Hitachi Data Systems gave storage administrators in high-end shops a revolutionary capability -- the ability to apply thin provisioning across heterogeneous, existing populations of external storage arrays from a central Hitachi controller. This, combined with virtualization, can create a huge increase in utilization rates. It does have tradeoffs, one of which is the cost in money and effort for migrating a shop from existing storage architectures to a virtualized, thin-provisioning design. However, once this is done, the reduction in time and expense of migrating to new hardware alone -- which the new architecture will cut from months to a week or less -- will make that initial effort worthwhile for large, fast-growing shops. And the early Christmas present will be the jump in storage utilization, which for a large shop can be the equivalent of the capacity of an entire new array.

This is a unique capability -- at this time no other storage supplier is providing thin provisioning across attached arrays not of their own manufacture. It has a major potential impact on high-end shops, and given HDS's reputation for delivering on its promises, the Wikibon community expects the new technology to work well. The articles below explore some of the major implications of the Hitachi announcements both for its existing customers and for any shops in the market for additional or replacement storage. Bert Latamore

Early Christmas for storage administrators

Hitachi and its distribution partners Sun and HP have announced four features with meaningful implications:

- Improved performance,

- Thin provisioning of external devices,

- Availability of 750-gigabyte SATA II drives in the bay, and

- Support of virtualized externally attached arrays with non-disruptive application data mobility between servers and storage tiers by VMware's ESX Server.

Of these, performance and thin provisioning of external devices are the most interesting. While Hitachi made no changes to its previously published SPC-1 benchmarks for the USPV, it did announce a 22% increase in theoretical maximum performance. This is a notable improvement, although at this time it is unclear exactly how it has been achieved. The previously published SPC-1 benchmarks showed that the USPV supports sub-5 millisecond response times, which implies that performance is constrained by the number of disk drives rather than by controller bandwidth. It appears that more performance is there and is perhaps available to drive the second key feature in the announcement, external thin provisioning. However, this has not been substantiated by Hitachi, and users should push Hitachi to provide visibility on the performance of externally thin provisioned volumes and clear guidelines on where thin provisioning is appropriate and where it is ill-advised.

In May, Hitachi announced thin provisioning within the array. On November 5, 2007, it announced that it had extended thin provisioning to external arrays, meaning thin provisioning can be applied to heterogeneous arrays in a data center. Arrays supported and qualified include products from EMC, HP, IBM, LSI, Sun and Hitachi's own midrange systems. This is a unique capability that theoretically can be combined with virtualization across multiple arrays. The critical questions the Wikibon community posed are: Do the functions work, and what are the adoption and migration costs?

Hitachi claims more than 7,300 intelligent virtual controllers have been shipped, but as a matter of 'policy' it will not provide guidance on what percent of these use thin provisioning. To date, Wikibon users have reported using thin provisioning on USPVs only in test and development environments, and it appears production instances are rarer. While thin provisioning is not a panacea for all applications, and there are important caveats for users, the feedback from the community is nonetheless positive: Thin provisioning works well for Hitachi and 3PAR customers, and Wikibon members expect Hitachi engineers will get it to work consistently for external arrays.

However, adoption costs are significant. We estimate migration of 100 TB from non-Hitachi 'fat' devices to internal and external thin provisioned volumes will cost $300K (above and beyond normal array hardware and software costs) and require six person-months to implement. Potential benefits are better utilization for all storage attached to the USPV and much simpler storage provisioning and migration. The combination of virtualization and thin provisioning could perhaps increase utilization rates by 35%. That could save the purchase of an entire array. On top of that, there are lower commissioning and decommissioning costs, lower utility costs and perhaps lower administrative costs.

Hitachi also announced support for high-capacity SATA II disk drives in the USPV, a feature of tier 1 storage popularized by EMC. Evidence suggests that demand for these devices is high, with perhaps as much as 25-30% of the USPV customer base planning on taking delivery of these devices in the near term. EMC indicates similarly robust demand for this capability. Despite the higher cost of tier 1 infrastructure, the use case appears to be attractive for low-activity archive applications residing within tier 1 environments. On balance, the attractiveness of this approach comes down to the simplicity and effective cost/GB of adding these devices to already installed tier 1 systems.

Action Item: Storage executives should develop a plan that aggressively leverages thin provisioning and storage virtualization where appropriate over the next six to twelve months. They should have that plan on the CXO's desk by early December. This will serve to both heighten awareness of the imperative among executives and increase leverage with incumbent suppliers. The possible outcomes of this strategy are: 1) A guarantee from EMC or IBM for delivery of similar functionality; 2) Much better terms and conditions on array deals in Q4; or, 3) Acceptance of an alternative proposal from Hitachi, Sun or HP.

When do migrations to USPV make sense?

Hitachi's November 5, 2007 announcement extending thin provisioning to externally attached arrays delivers meaningful incremental value to existing USPV customers via new microcode function. For current USPV customers, this capability is a relatively straightforward update that, with some planning, can yield excellent utilization benefits and should pay for itself quickly.

For non-Hitachi customers, the case is not as straightforward. Specifically, customers must ask: What is the cost of migrating to a USPV infrastructure, how long will it take and do the benefits offset the costs? In general, using a reference model of around 110TB, a migration of this nature will take many months (six or more), with substantial project management costs (assume 1/2 FTE) and incremental software costs. It will unquestionably be disruptive. The benefits, however, can be substantial.

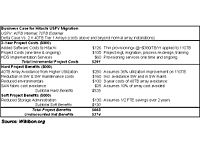

Figure 1 shows a cost benefit analysis for such a case. The scenario shows the incremental costs of migrating to a 40TB USPV and attaching external arrays with a total of 70TB; versus installing two non-virtualized tier 1 arrays. Here are the key points:

- The incremental costs of migration, above and beyond straight hardware expense, approach $300K;

- To offset these costs, customers must have a large enough installation (e.g. 100TB) and enough diversity in installed tier 2 assets to justify the USPV approach;

- Despite migration complexities, if the installation is large enough and improvements in utilization exceed 30%, the benefits may offset the initial project costs;

- These benefits will largely be realized by avoiding costs associated with adding new arrays (hardware, software, maintenance, environmentals).

Several assumptions go into such a rough set of figures, and customers need to carefully personalize this analysis, but the five key rules of thumb used here are: 1) Storage utilization across non-virtualized arrays assumes 70% allocation and 70% utilization of allocated storage (0.7 x 0.7, or roughly 50% utilization on average); 2) The impact of thin provisioning is to increase utilization by 36% on average; 3) The applications support thin provisioning; 4) Cost of a full time equivalent (FTE) is $130,000 per annum; 5) There is no hardware cost difference between a 40TB Hitachi USPV and alternative array hardware.
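As a rough illustration of how these rules of thumb combine, the following Python sketch reworks the arithmetic. It is not the model behind Figure 1. It assumes the 36% improvement is relative to the baseline utilization (the text does not say whether it is relative or absolute), it uses the 110TB reference configuration and the approximately $300K incremental migration cost quoted above, and all function names are made up for this example.

```python
# Back-of-the-envelope check of the rules of thumb. Inputs come from the text;
# the interpretation of the 36% gain as *relative* is an assumption.

def effective_utilization(allocated: float, used_of_allocated: float) -> float:
    """Fraction of raw capacity that actually holds data."""
    return allocated * used_of_allocated


def avoided_raw_tb(raw_tb: float, relative_gain: float) -> float:
    """Raw capacity that would be needed, at baseline utilization, to hold the
    extra data that the improved utilization fits on the existing capacity."""
    return raw_tb * relative_gain


def labor_cost(fte_fraction: float, months: float, fte_annual_cost: float) -> float:
    """Project management cost for a part-time FTE over a number of months."""
    return fte_fraction * (months / 12) * fte_annual_cost


def break_even_value_per_tb(migration_cost: float, avoided_tb: float) -> float:
    """All-in value each avoided TB must have for the migration to break even."""
    return migration_cost / avoided_tb


baseline = effective_utilization(0.70, 0.70)          # rule of thumb 1
improved = baseline * (1 + 0.36)                      # rule of thumb 2 (assumed relative)
avoided = avoided_raw_tb(110, 0.36)                   # 40TB USPV + 70TB external
labor = labor_cost(0.5, 6, 130_000)                   # 1/2 FTE for six months
break_even = break_even_value_per_tb(300_000, avoided)

print(f"baseline utilization: {baseline:.0%}")
print(f"improved utilization: {improved:.0%}")
print(f"avoided raw capacity: {avoided:.1f} TB")
print(f"project management labor: ${labor:,.0f}")
print(f"break-even value per avoided TB: ${break_even:,.0f}")
```

Under these assumptions the baseline works out to about 49%, and the avoided raw capacity to roughly 40TB, which is about the size of one of the arrays in the scenario. The labor figure covers only the 1/2 FTE of project management; the text does not itemize the remainder of the $300K.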

Action Item: Existing USPV customers and smaller homogeneous shops need not think too hard about the recent USPV enhancements -- they either obviously make sense or they don't. Non-Hitachi customers must carefully weigh the substantial costs of migrating to the USPV with the substantial benefits received from virtualizing and thin provisioning internal and external capacity. These factors must further be considered against incumbent vendor promises to deliver similar function and the time-to-benefit of these competitive offerings.

Thin provisioning: Look before you leap

See also related posts on my blog.

A couple more thin provisioning caveats

From The Storage Anarchist, Wednesday, Nov 7, 8:30AM.

In addition to other caveats, customers considering thin provisioning should be aware of two oft-overlooked factors before deploying this technology:

- Performance: By increasing the utilization of storage, you are (by definition) placing more data on each spindle, and likely using fewer spindles to support the sum of all the workloads that share the devices backing the thinly provisioned volumes. Doubling the utilization effectively doubles the access density and doubles the spindle contention. Depending on the performance requirements and workloads of all the applications that share the spindles, response times and throughput of ALL applications may suffer because the spindles are unable to support the higher workloads with reasonable response times.

This is specifically why the SPC-1 is irrelevant to the discussion of thin provisioning. All SPC-1 configurations leverage sparse allocation in order to attain the highest possible results - often using far less than 20% of the capacity on each spindle. Increasing the utilization to a more cost-efficient 60% requires only 1/3 of the spindles, but the effective SPC-1 IOPS and response times are likely to be far worse than even 1/3 the IOPS or 3x the response times of the published results, given the added overhead of device contention. The SPC-1 does nothing to predict the relative performance of different storage devices under this sort of (more realistic) workload.

- Fault domains: Over-provisioning depends on having multiple thinly provisioned devices (LUNs) sharing a common pool of spindles, and given the increased utilization, more LUNs are most likely to be sharing these spindles than would occur if using "fat" allocation. The first-order risk is somewhat obvious - if any of the applications unexpectedly consume all of the physical storage in the pool, ALL the dependent applications (LUNs) will have their writes rejected, potentially with serious consequences. Aggressive monitoring and the ability to respond to the alerts provided by the implementation in a timely manner (to add more storage) will mitigate this risk sufficiently for most.

Data corruption of the storage pool is not so easily avoided, however. Although rare, blocks do occasionally get corrupted (as I've also discussed on my blog). Such corruptions are usually limited to only a few blocks, although they are often "silent" and may go unnoticed for years.

But there is a distinctly higher potential of a double-drive failure occurring in a RAID-5 group - a probability that increases with the size of the drives being used and also somewhat with the workload the RAID group is supporting (disk drives are mechanical and do wear out faster under heavy loads). The challenge is that such a double-drive failure can result in the loss of nearly two full drives' worth of data blocks (subtracting out the parity overhead), 60% or more of which will be real data (due to the increased utilization that thin provisioning brings). These data blocks will probably be irrecoverably lost - and (by definition) this means that every single LUN that was sharing the pool will have at least some data loss (if not total destruction, if significant portions of the layout metadata are lost). And as noted, the number of LUNs impacted will likely be significantly greater than if using "fat" provisioning.

Within most recent-generation arrays, like the USP-V or the DMX, customers will most likely be advised (by vendors and best practices) to use RAID-6 for all the RAID sets used as thin provisioned pools, as this will help to minimize the probability of data loss (RAID-6 can tolerate the loss of two drives and still maintain the integrity of the data). Note that while this approach can mitigate most of the risk of data loss, RAID-6 may have an additional impact on the performance of thin devices.

But if you were to leverage the newly-announced USP-V capability of thinly-provisioning externally-virtualized storage, RAID-6 is very likely NOT to be an option, because most older storage arrays do not offer the RAID-6 capability. Importantly, in this configuration the USP-V hardware and software cannot do anything to protect the data from corruption in the external storage, aside from perhaps mirroring the volumes internally (and note that it is not clear if this is even a supported option).

It's also important to understand that this risk is not limited to thin provisioning - this is probably why Hitachi & HP documentation does not recommend externally virtualized storage be used for heavy I/O workloads other than backup or archive. Users should demand access to and carefully consider the recommended use cases for thinly provisioned external devices in light of this very risky reality.
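The performance caveat above can be made concrete with a small calculation. The Python sketch below only models the linear part of the argument (fewer spindles sharing the same I/O load); it does not model the additional contention overhead that the text warns about. The data volume, drive size and aggregate IOPS figures are hypothetical values chosen for illustration, and the function names are made up for this example.

```python
# Access density sketch: how many spindles hold a fixed amount of data at a
# given per-drive utilization, and how much I/O each spindle must then absorb.
# Integer arithmetic is used so that spindle counts are exact.

def spindles_needed(data_gb: int, drive_gb: int, utilization_pct: int) -> int:
    """Smallest number of drives that holds data_gb when only utilization_pct
    percent of each drive is filled (ceiling division)."""
    usable_x100 = drive_gb * utilization_pct
    return -(-data_gb * 100 // usable_x100)


def iops_per_spindle(total_iops: float, spindles: int) -> float:
    """Average I/O rate each spindle must sustain for a fixed total workload."""
    return total_iops / spindles


DATA_GB = 60_000       # hypothetical: 60 TB of real data
DRIVE_GB = 300         # hypothetical drive size
TOTAL_IOPS = 50_000    # hypothetical aggregate workload

for pct in (20, 40, 60):
    n = spindles_needed(DATA_GB, DRIVE_GB, pct)
    load = iops_per_spindle(TOTAL_IOPS, n)
    print(f"{pct}% utilization: {n} spindles, {load:.0f} IOPS per spindle")
```

With these illustrative inputs, moving from 20% to 40% utilization halves the spindle count and doubles the load per spindle, and moving to 60% leaves about one third of the spindles carrying about three times the load each. Real response times would degrade faster than this linear picture suggests once the spindles approach saturation.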

Action Item: Thinly provisioned external storage should be considered very carefully before it is committed to as an infrastructure-wide strategy. While some will view these concerns as spreading fear, uncertainty and doubt (FUD), buyers should explore this topic with all potential suppliers and do their own homework before dismissing its significance.

Storage as a service

Many parts of the IT and user organization have fingers in the pie when it comes to defining storage. This usually leads to wasteful storage decisions. Storage should be defined by the users as a service. Key metrics of the service level include the amount of storage, the ability to expand storage, response time, and RPO and RTO requirements. A key requirement of any storage infrastructure is that the user of this service should know what level of service is being supplied, and be able to change that level of service at any time. Thin provisioning and virtualization are key components of a “storage as a service” strategy.

Action Item: Organizations should centralize storage allocation decisions within storage administration, and ensure that storage equipment specifications are expressed as required levels of service, not product specifics. The storage infrastructure should include the monitoring and metering tools necessary to report back to storage consumers on storage service levels.
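One way to picture a specification expressed as a level of service rather than as product specifics is a small record of the metrics listed above, checked against what monitoring reports. The Python sketch below is purely illustrative: the class names, field names and units are assumptions made for this example, not part of any vendor tool or Wikibon model.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class StorageServiceLevel:
    """Requested service, stated in the metrics the text lists."""
    capacity_tb: float        # amount of storage
    max_capacity_tb: float    # how far the consumer may expand
    response_time_ms: float   # target response time
    rpo_minutes: float        # recovery point objective
    rto_minutes: float        # recovery time objective


@dataclass(frozen=True)
class MeasuredService:
    """What monitoring and metering tools report back (hypothetical fields)."""
    used_tb: float
    response_time_ms: float
    achieved_rpo_minutes: float
    achieved_rto_minutes: float


def violations(target: StorageServiceLevel, measured: MeasuredService) -> list[str]:
    """Names of the service metrics that are currently not being met."""
    missed = []
    if measured.used_tb > target.max_capacity_tb:
        missed.append("capacity")
    if measured.response_time_ms > target.response_time_ms:
        missed.append("response_time")
    if measured.achieved_rpo_minutes > target.rpo_minutes:
        missed.append("rpo")
    if measured.achieved_rto_minutes > target.rto_minutes:
        missed.append("rto")
    return missed


target = StorageServiceLevel(10.0, 15.0, 10.0, 15.0, 240.0)
measured = MeasuredService(12.0, 14.0, 10.0, 200.0)
print(violations(target, measured))
```

In this example only the response time target is missed, which is the kind of report a storage consumer would need in order to decide whether to change the service level.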

ISV support for thin provisioning is win-win

ISV support for thin provisioning and virtualization will evolve rapidly over the next few years, as it is a win-win proposition for both supplier and customer. Thin provisioning reduces the overall cost of hardware for the solution and allows faster expansion of usage of the application; both increase the ROI and revenue potential of the ISV product. In practice there is usually very little if any change to be made to the product or documentation. Support for key components of ISV products such as Oracle is well advanced.

Action Item: IT management needs to aggressively insist that ISVs support virtualization and thin provisioning by refusing to sign contracts that do not commit to rapid support.

Hitachi keeps margin for competitive error thin

In May 2007, Wikibon analyzed Hitachi's thin provisioning announcement and suggested the race was on for competitive responses in high-end block-based storage. Since that time, EMC and IBM have stated their intention to deliver thin provisioning on block-based storage, and 3PAR has made enhancements to its increasingly popular InServ product line.

Of the competitors, 3PAR seems to be moving ahead to try to distance itself from the pack, while IBM and EMC are targeting early-to-mid next year to catch up in the thin provisioning game. 3PAR's announcement of Virtual Domains, a software capability that allows the logical partitioning of a 3PAR InServ array into up to 2,000 domains, is noteworthy. 3PAR seems to be the one company executing on delivery of a storage services/utility storage model by providing security, access control, monitoring and virtualization capabilities that can safely subdivide storage resources based on policies and enable chargeback to users. This is a powerful vision.

Nonetheless, 3PAR is small, limited in scope and extremely focused on its growth trajectory at the moment. The storage industry is looking for clear direction, vision and leadership in the increasingly active space for storage virtualization, thin provisioning and heterogeneous tiered storage management. Much is unclear for customers right now, and Hitachi's consistent execution, while vital, underscores the need for a more compelling 3-5 year vision.

Action Item: Product-oriented messages, while necessary and important, will not suffice in helping users chart a course for the near-to-mid term. Vendors are staring at a major opportunity to articulate what the future of storage will look like and lay out a multi-year plan for customers to follow. Virtualized data centers, more efficient technology utilization and heterogeneous storage management are key underpinnings of this vision as is the accommodation for information asset and liability management.

Modernizing the storage infrastructure

Hitachi's philosophy of supporting heterogeneous arrays and enabling them with robust enterprise-class capabilities provides an opportunity to update legacy storage and inject new life into these products. By enabling thin provisioning on external storage, Hitachi has handed users a chance to extend the value of older arrays and reclaim wasted space.

There are several best practices users can consider in this regard, including:

- Perform an assessment and identify older storage systems that are candidates for attachment to a USPV and begin the process of virtualizing installed storage assets;

- Clearly identify tier 1 and tier 2 candidates, defaulting as much storage as possible to tier 2 arrays that are virtualized behind a USPV;

- Reduce maintenance costs by using third-party suppliers where possible for hardware break/fix;

- Terminate software maintenance licenses for products attached to the USPV;

- Optimize USPV configurations by using high-capacity SATA II devices for lower performance applications;

- Avoid onerous software license fees where possible by limiting tier 1 support to those applications that absolutely demand it.

Action Item: Where applicable, aggressively modernize currently installed arrays by including them under a USPV umbrella. Balance the cost savings of this approach with the risks of utilizing older equipment by planning for recovery using state-of-the-art services provided by the USPV applied to older arrays.