Storage Peer Incite: Notes from Wikibon’s April 18, 2007 Research Meeting

This week David Floyer presents Power Shift to Green Computing. Recent attention to energy consumption makes it necessary to re-establish centers of expertise around energy-efficient data centers, system design and corporate policy. We discuss the implications for IT, organizational actions, technology integration, vendor initiatives and migration issues.


Power shift to green computing

For many years, environmentals were a critical consideration in the design and implementation of data centers. In the days of ECL-based mainframe computers, organizations had to weigh power draw, cooling, floor space and other environmental factors carefully, if only to keep the systems up and running. With the advent of CMOS microprocessor-based computing, much of the expertise in data center design, location and management as it pertained to environmentals dispersed. However, with the emergence of blade computing as a significant source of potential benefits in large data centers, and with the rapid rise of energy costs worldwide, energy and environmentals are once again moving to at least stage left, if not center stage, in IT data center design and location decisions.

We note that only a few years ago Google determined that the cost of powering its systems would exceed the capital cost of those systems over their useful life. We are at the cusp of a new awareness of the relationships among energy, heat, power, system design and data center design, which will play out over the next several years. We offer the following observations.

First, it is imperative that users revisit the metric of power per square foot. Systems that require more than 10 kilowatts per square foot should immediately be flagged as potentially problematic, because most environments cannot support either the power draw or the heat associated with such systems. Second, we are starting to see calls for greater distribution of application function to ameliorate power and heat problems, but we caution users that the physical realities of very large transaction-oriented applications often make it impossible to break those applications apart, so this is not a general solution. Third, some large organizations, such as Google and Microsoft, are placing their data centers closer to large power sources precisely to capture potential cost savings and to avoid the risk of pulling enormous amounts of power over the general-purpose power grid.
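As a minimal sketch of the power-per-square-foot check, the Python below divides each system's rated power draw by its floor footprint and flags anything above the 10 kW per square foot threshold from the text. The system names, power figures and footprints are hypothetical, and the sketch assumes "footprint" means the equipment's own floor area.

```python
# Threshold taken from the text; the inventory below is hypothetical.
THRESHOLD_KW_PER_SQFT = 10.0


def power_density(power_kw: float, footprint_sqft: float) -> float:
    """Return power density in kW per square foot of equipment footprint."""
    if footprint_sqft <= 0:
        raise ValueError("footprint must be positive")
    return power_kw / footprint_sqft


def flag_high_density(systems, threshold=THRESHOLD_KW_PER_SQFT):
    """Return (name, density) for every system whose density exceeds the threshold."""
    flagged = []
    for name, power_kw, footprint_sqft in systems:
        density = power_density(power_kw, footprint_sqft)
        if density > threshold:
            flagged.append((name, density))
    return flagged


# Hypothetical inventory: (name, rated power in kW, footprint in sq ft)
inventory = [
    ("blade-chassis-a", 60.0, 5.0),
    ("storage-frame-b", 8.0, 8.0),
]
print(flag_high_density(inventory))
```

Here only the hypothetical blade chassis (12 kW per square foot) is flagged; the storage frame sits at 1 kW per square foot.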

While these trends will mature rapidly over the next few years, we recommend that user organizations name an 'Energy Czar' within the IT function, if only to ensure that the CTO function constantly monitors and factors in energy and heat in decisions about data center location and design and, increasingly, in off-shoring and outsourcing supplier decisions, noting that heat and power infrastructure are a greater challenge in some locations around the world than in others.

Action Item: The realities of energy consumption and heat are reasserting themselves as energy costs and device power density rapidly increase. Users must begin reestablishing centers of excellence around data center design, supplier procurement and device choices that fully factor in energy costs and system design, both tactically and strategically.

Sensible power policies: Back to the future

As environmental issues permeate budget discussions, data center designs, corporate and government policy and social consciousness, the need to assess, design and implement appropriate power policies grows. Like a leaky faucet, this problem won't go away on its own. IT must expect this challenge to become more acute and should plan for more space and for more electricity secured at reasonable rates. "No surprises" should be the modus operandi here.

In the data center, server equipment is a notable culprit, generating up to 20X the heat output per square foot of disk drives, for example. Communications equipment is also problematic because its power density can be as high as or higher than that of servers. Current best practices are reminiscent of the days of ECL-based mainframes, when environmentals were fundamental to data center design.

Several approaches are making a comeback. These include cooling zones, where ultra-high-density equipment is cabinet-cooled and medium- and low-density equipment uses row cooling and raised-floor techniques. Placing high-density equipment adjacent to low-density equipment to balance loads is another popular approach. Discussions of cooling methodologies (e.g., frames using chilled water and refrigerant cooling systems) are also more frequent, as are discussions of heat displacement and heat removal techniques. The old adage "don't just make hot air colder" rings true again today.
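The load-balancing idea of placing high-density equipment next to low-density equipment can be sketched as a simple greedy heuristic: sort racks by power draw, then alternate between the densest and lightest remaining racks along a row. This is an illustrative assumption, not a published layout algorithm, and the rack names and kW figures are hypothetical.

```python
def interleave_by_density(racks):
    """Alternate the densest and lightest remaining racks along a row.

    racks: list of (name, kW) tuples. Returns a new list in row order.
    """
    ordered = sorted(racks, key=lambda rack: rack[1])  # ascending by kW
    layout = []
    lo, hi = 0, len(ordered) - 1
    while lo <= hi:
        layout.append(ordered[hi])  # densest rack not yet placed
        if lo != hi:
            layout.append(ordered[lo])  # lightest rack not yet placed
        lo += 1
        hi -= 1
    return layout


def max_adjacent_load(layout):
    """Largest combined kW of any two neighboring racks (needs at least two racks)."""
    return max(a[1] + b[1] for a, b in zip(layout, layout[1:]))


# Hypothetical row of racks: (name, kW)
racks = [("r1", 2.0), ("r2", 3.0), ("r3", 4.0), ("r4", 12.0), ("r5", 15.0), ("r6", 18.0)]
by_density = sorted(racks, key=lambda rack: rack[1])
interleaved = interleave_by_density(racks)
print([name for name, _ in interleaved])
print(max_adjacent_load(by_density), max_adjacent_load(interleaved))
```

For this hypothetical row, the worst adjacent pair draws 20 kW when interleaved, versus 33 kW when the same racks are simply lined up in order of density. Real layouts would also weigh airflow, cabling and cooling-zone boundaries.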

On the user front, enforcing policies such as turning lights off (or installing smart lighting), turning off monitors and PCs after work hours and encouraging work-from-home days are all approaches that make both "green computing" and business sense.

Action Item: IT organizations must get serious about environmentals: set goals, make them fundamental to design and procurement decisions, implement policies and plan for future needs accordingly. However, users should take care to understand the economics of green design so that initiatives are self-sustaining and/or justified within a broader corporate and social agenda, and accordingly supported by boards of directors.

Power projection

In a previous alert, "Cooling heats up," CTOs were advised to create power density projections for all technologies. The steps are:

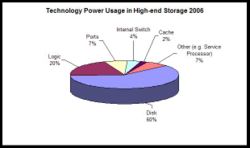

- Analyze the technology components of each equipment type in the organization. The accompanying chart shows the current relative power consumption of the components of high-end storage; spinning disk accounts for 60% of the total power consumption.

- Project the rate of power density increase for each technology component. In this case, a reasonable projection for the spinning disk component is 0% per year: disks have historically had a constant form factor and power consumption (~18 watts per drive), and this trend is expected to continue. A reasonable projection for the power density of all the other components is 30% per year, because these components are essentially the same as server components.

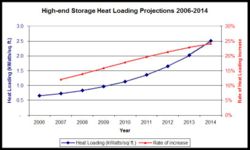

- Calculate the projected power density, as shown in the accompanying chart. As spinning disk becomes a smaller component of the total power load over time, the rate of increase in storage power density rises.
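The three steps can be worked through numerically. The Python sketch below uses the figures from the text (spinning disk at 60% of high-end storage power with 0% annual growth, all other components at 40% with 30% annual growth) and computes relative power density and its year-over-year growth rate. Treating the two shares as independent compounding terms is a simplifying assumption.

```python
def project_power_density(disk_share, other_share, disk_growth, other_growth, years):
    """Relative power density for years 0..years; year 0 equals disk_share + other_share."""
    return [
        disk_share * (1 + disk_growth) ** year + other_share * (1 + other_growth) ** year
        for year in range(years + 1)
    ]


def year_over_year_growth(series):
    """Fractional increase from each year to the next."""
    return [series[i] / series[i - 1] - 1 for i in range(1, len(series))]


# Figures from the text: disk = 60% of power, flat; other components = 40%, +30%/yr
densities = project_power_density(0.60, 0.40, 0.00, 0.30, years=5)
growth = year_over_year_growth(densities)
for year, (density, rate) in enumerate(zip(densities[1:], growth), start=1):
    print(f"year {year}: relative density {density:.3f}, growth {rate:.1%}")
```

The first-year increase is 12% (0.40 × 30%), and the rate climbs every year after that while staying below 30%. This is the effect the last step describes: as disk's share of the load shrinks, storage power density grows faster.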

Action item: CTOs should follow the steps above to create power density projections for storage and other technologies and update them annually. Storage managers should use these projections to help plan data center evolution.

Cooling heats up

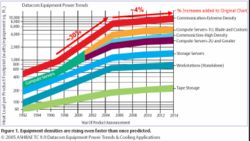

ASHRAE (the American Society of Heating, Refrigerating and Air-Conditioning Engineers) published projections of IT equipment power density in 2003 and had to revise them significantly upward in 2005, as shown in the accompanying chart.

The historical data before 2005 show equipment power density increasing by up to 30% per year. After 2005, ASHRAE's projected increases are less than 4% per year. Now, two years later in 2007, high-end blade servers have already reached a power density of 10 kW per square foot, and the historical increase of 30% per year for servers and telecommunications equipment is holding steady. Although manufacturers are focusing on technologies that will reduce the power load, these are likely to have only a short-term impact on the rate of increase; because of the underlying technology fundamentals, the rate is likely to revert to much closer to 30% than to 4%.
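To see why the choice between 4% and 30% matters, the sketch below compounds the 10 kW per square foot blade-server figure from the text forward at each rate. The five-year horizon is an illustrative choice, not an ASHRAE projection.

```python
def compound(start: float, annual_rate: float, years: int) -> float:
    """Value after compounding `start` at `annual_rate` for `years` years."""
    return start * (1 + annual_rate) ** years


START_KW_PER_SQFT = 10.0  # 2007 high-end blade server figure from the text
for rate in (0.04, 0.30):
    projected = compound(START_KW_PER_SQFT, rate, 5)
    print(f"{rate:.0%} per year -> {projected:.1f} kW per sq ft after 5 years")
```

At 4% per year the figure reaches about 12.2 kW per square foot after five years; at 30% per year it reaches about 37.1, roughly three times as much.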

If these trends continue, the cost of environmentals for most servers and communications equipment will exceed the cost of the technology itself over the life of the equipment.

Action item: CTOs should develop, and keep up to date, guidelines that project the likely cost of environmentals for all IT technologies over the next five years. Every business case for an IT project should include a line item for environmentals derived from these guidelines. These funds will be required to significantly improve data center power and thermal management.
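A business-case line item for environmentals could start from a calculation like the one below, which estimates five-year energy cost for a given IT load and compares it with the equipment's capital cost. The cooling-overhead multiplier, electricity price, price escalation rate and capital cost are all hypothetical inputs, and the sketch covers energy only, not floor space or cooling equipment.

```python
HOURS_PER_YEAR = 8760


def environmental_energy_cost(it_load_kw, cooling_overhead, price_per_kwh,
                              annual_price_escalation, years):
    """Total energy cost over `years` for an IT load plus its cooling/power overhead.

    cooling_overhead is extra facility power as a fraction of IT load
    (hypothetical; e.g. 1.0 means cooling and distribution double the draw).
    """
    facility_kw = it_load_kw * (1 + cooling_overhead)
    total = 0.0
    for year in range(years):
        # Electricity price rises by a fixed fraction each year (hypothetical)
        price = price_per_kwh * (1 + annual_price_escalation) ** year
        total += facility_kw * HOURS_PER_YEAR * price
    return total


# Hypothetical inputs for one system
cost = environmental_energy_cost(it_load_kw=10.0, cooling_overhead=1.0,
                                 price_per_kwh=0.10, annual_price_escalation=0.0,
                                 years=5)
capital_cost = 75_000.0  # hypothetical purchase price
print(f"5-year energy cost: ${cost:,.0f}; exceeds capital cost: {cost > capital_cost}")
```

With these made-up inputs the five-year energy bill is $87,600, which already exceeds the hypothetical purchase price; a nonzero escalation rate widens the gap.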

The squeaky wheel of storage environmentals

Although servers and communications equipment are notoriously more power-hungry than storage devices, storage vendors are increasingly going to hammer away with messages about environmentals and attempt to leverage this issue for competitive advantage. EMC threw down the gauntlet late last year, becoming one of the first vendors to feature this issue, with new energy assessment services and a power calculator that lets customers estimate energy consumption and cooling requirements for EMC products. This clever marketing approach is a souped-up version of a famous opening line used by Xerox copier salesmen in the 1970s and '80s: "Where are you going to put it?"

By aggressively qualifying and implementing super-high-capacity disk drives, vendors will claim substantial power improvements on a per-terabyte basis relative to previous generations of subsystems (without making any substantive engineering redesigns), capitalizing on the fact that spinning disks account for over 50% of storage system power consumption. Vendors will also tout better utilization, and consequently lower power consumption, by delivering virtualization, storage consolidation and tiered storage management as immediate-term solutions. Longer term, vendors will increasingly have to account for the cost of environmentals as part of system design.
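The per-terabyte claim is simple arithmetic: if each drive draws roughly the same power regardless of capacity (about 18 watts, per the figure earlier in this note), doubling drive capacity halves disk watts per terabyte. The sketch below assumes the ~18 W figure holds across capacities; the capacities are hypothetical, and controller, cache and other non-disk power are ignored.

```python
WATTS_PER_DRIVE = 18.0  # approximate per-drive figure cited in the text


def disk_watts_per_tb(drive_capacity_tb: float,
                      watts_per_drive: float = WATTS_PER_DRIVE) -> float:
    """Disk-only power per terabyte of raw capacity."""
    return watts_per_drive / drive_capacity_tb


for capacity_tb in (0.25, 0.5, 1.0):  # hypothetical drive capacities
    print(f"{capacity_tb} TB drive: {disk_watts_per_tb(capacity_tb):.0f} W per TB")
```

This is why per-terabyte power figures improve with higher-capacity drives even without a subsystem redesign; a frame populated with the same number of drives draws the same disk power as before.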

Action Item: In the near term, vendors should use marketing leverage and proven consolidation approaches, including virtualization, tiered storage and ultra-high-capacity disk drives, to claim power leadership. Services that extend beyond storage to account for other data center equipment, including servers and networking gear, should also come into play. Longer term, vendors must more diligently architect improved environmentals into subsystem design or face a sore spot in head-to-head marketing clashes.

An inconvenient truth in IT

As questions about the limits of Moore’s law gain force, the one thing all IT professionals can agree on is that energy costs will continue to rise much faster than overall IT budgets. Some estimates suggest that energy costs may amount to as much as 10%-15% of total IT budgets within the next decade. Against this backdrop, it is essential that IT organizations immediately establish programs for retiring energy hogs. These programs will likely force inconvenient and unpopular actions as classes of infrastructure technology are put under the bright light of energy efficiency. We also anticipate that emerging energy-based metrics will be used to evaluate applications. For example, it may soon become economically justified to retire older client/server applications that require the most powerful clients.
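One way an energy-based metric might be applied is to rank assets by energy consumed per unit of useful work and review the worst performers as retirement candidates. The sketch below is a hypothetical illustration: the asset names, power figures, work units (e.g. transactions served per year) and the metric itself are assumptions, not an established standard.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 8760


@dataclass
class Asset:
    name: str
    avg_power_kw: float       # average measured draw
    annual_work_units: float  # hypothetical unit of useful work, e.g. transactions

    def kwh_per_work_unit(self) -> float:
        """Annual energy divided by annual useful work."""
        return self.avg_power_kw * HOURS_PER_YEAR / self.annual_work_units


def retirement_candidates(assets, top_n):
    """Return the top_n assets with the highest energy per unit of work."""
    return sorted(assets, key=lambda a: a.kwh_per_work_unit(), reverse=True)[:top_n]


# Hypothetical inventory
assets = [
    Asset("legacy-app-server", 2.0, 1_000_000),
    Asset("consolidated-host", 4.0, 10_000_000),
    Asset("file-server", 1.0, 2_000_000),
]
for asset in retirement_candidates(assets, 2):
    print(f"{asset.name}: {asset.kwh_per_work_unit():.5f} kWh per unit")
```

Note that the consolidated host draws the most power in absolute terms yet ranks best on energy per unit of work, which is the kind of distinction a raw power inventory would miss.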

Action Item: IT organizations must begin incorporating energy-based metrics into the value equation for infrastructure and application technologies. Tracking these measures now could head off "panic decisions" later if expected energy price spikes catalyze executive spasms.