Storage Peer Incite: Notes from Wikibon’s May 22, 2007 Research Meeting

This week David Floyer presents Data deduplication: Declawing the clones. Techniques that use various algorithms to reduce duplicate data and enable more data to be stored on spinning disk are gaining prominence in the market. Recent announcements by NetApp, IBM and others underscore this trend.

Based on the Peer Incite weekly research meetings moderated by Peter Burris, we've tried to make this newsletter about your business. Each week we summarize the community's input from the meeting and document specific advice for users (IT), organizational considerations, technology integration issues, and vendor actions. We also address the all-important 'getting rid of stuff' (GRS).

Data deduplication: Declawing the clones

Users have been struggling for years with the challenges of trying to reduce the amount of storage necessary to support critical applications in their organizations. A technology that has been put forward for quite some time recently received a pretty significant boost in announcements by both Network Appliance and IBM.

Data deduplication promises potentially very high space savings (30%-50%) for storage environments that feature frequent cloning of single pieces of data at the file, record or block level. Data deduplication takes three basic forms: in-line, block hashing and logical construct. Each of these technical approaches has its pros and cons, but all of them seek to find circumstances in which the same piece of data has been replicated multiple times in response to often arbitrary backup and/or application activities.
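To make the block hashing form concrete, here is a minimal sketch in Python. It assumes fixed 4 KB blocks, SHA-256 as the hash, and an in-memory dictionary as the block store; none of these details come from the vendor announcements, and the names `dedup_store` and `restore` are hypothetical.

```python
import hashlib

BLOCK_SIZE = 4096  # assumed fixed block size in bytes


def dedup_store(data: bytes, store: dict[str, bytes]) -> list[str]:
    """Split data into fixed-size blocks, keep one copy of each unique
    block in `store`, and return the block hashes that act as pointers."""
    pointers = []
    for offset in range(0, len(data), BLOCK_SIZE):
        block = data[offset:offset + BLOCK_SIZE]
        digest = hashlib.sha256(block).hexdigest()
        store.setdefault(digest, block)  # write the block only if unseen
        pointers.append(digest)
    return pointers


def restore(pointers: list[str], store: dict[str, bytes]) -> bytes:
    """Rebuild the original data by following each pointer into the store."""
    return b"".join(store[p] for p in pointers)


store: dict[str, bytes] = {}
# Three identical blocks followed by one different block.
original = b"A" * BLOCK_SIZE * 3 + b"B" * BLOCK_SIZE
pointers = dedup_store(original, store)
clone_pointers = dedup_store(original, store)  # a clone adds no new blocks

print(len(pointers), "blocks written,", len(store), "blocks stored")
```

Note that every read goes through the pointer list, which is one way to picture where a read-performance cost could come from.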

It is important to note that the applications that benefit most from deduplication tend to be those with very high backup and restore requirements, such as database backup and software archiving, where the notion of truth in the data becomes very important and, as a consequence, cloning of that data is often repeated across different application forms (e.g. to data warehouses).

The concerns users will face as they evaluate data deduplication today are few but important. The most significant is that data deduplication is applied using proprietary formats. Data is written directly into the file headers that describe how the data has been deduped and present pointers to applications, so that those applications can be assured of access to the copy of the data they need. The system of pointers that results from these technologies can lead to some performance degradation. Indeed, the storage environments that benefit the most from data deduplication are likely also to be those that face the greatest performance concerns. Additionally, it is critical that encryption occur after data deduplication to ensure that overall integrity and other very basic concerns regarding storage can be maintained.
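The ordering of encryption and deduplication can be illustrated with a small sketch. The text above cites integrity as the reason; the sketch shows a related, commonly cited effect, namely that encrypting first tends to hide duplicates from the deduplication engine. It assumes a toy cipher (`toy_encrypt`, hypothetical and not secure) that uses a fresh random nonce per block, standing in for a real cipher.

```python
import hashlib
import os

BLOCK_SIZE = 4096  # assumed fixed block size in bytes


def toy_encrypt(block: bytes, key: bytes) -> bytes:
    """Toy stand-in for a real cipher: XOR the block with a SHA-256-derived
    keystream seeded by a fresh random nonce. NOT secure; illustration only."""
    nonce = os.urandom(16)
    stream = b""
    counter = 0
    while len(stream) < len(block):
        stream += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return nonce + bytes(a ^ b for a, b in zip(block, stream))


def unique_blocks(blocks: list[bytes]) -> int:
    """Count distinct blocks by hash, as a block-hashing deduper would."""
    return len({hashlib.sha256(b).digest() for b in blocks})


key = b"hypothetical-key"
blocks = [b"same payload".ljust(BLOCK_SIZE, b"\0")] * 10  # ten identical blocks

# Deduplicate first: the ten clones collapse to a single stored block.
dedup_then_encrypt = unique_blocks(blocks)
# Encrypt first: each ciphertext differs, so nothing can be deduplicated.
encrypt_then_dedup = unique_blocks([toy_encrypt(b, key) for b in blocks])

print(dedup_then_encrypt, encrypt_then_dedup)
```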

We will see a fair amount of discussion regarding how data deduplication can be a general purpose replacement for tape in a backup and restore scenario. However, for a variety of reasons, not the least of which remains the cost of communicating large volumes of data over potentially great distances, tape will continue to have a viable life for the foreseeable future despite some of the advantages of data deduplication. At this juncture it is safe to say the best current data deduplication implementation offers no major advantage over the worst tape solution for very high volume data backup and recovery applications.

As we look forward, we see deduplication becoming a critical enabling technology that can be successfully paired with other emerging storage technologies, including thin provisioning and virtualization. However, it is imperative that users look closely at the tradeoffs between the advantages of deduplication and its potential performance costs, and that they fully understand the consequences of buying into yet another storage technology featuring relatively proprietary formats.

Action Item: Data deduplication is emerging as a critically important new arrow in the storage administrator's quiver to answer hard questions about the increasing problem in storage growth costs. However like all technology arrows, users must be careful to choose which targets to shoot data deduplication at and be very certain in their aim.

Deduplication implementation strategies

The announcement of general purpose (rather than supporting backup or virtual tape libraries) deduplication by NetApp and IBM within a controller for file orientated storage is a first within the major industry players. It is a sound placement of the function, and will be a contribution to establishing a storage services infrastructure.

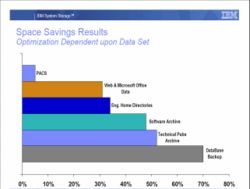

One of the challenges for CIOs and CTOs will be managing user expectations. Many vendors have proclaimed 50-100:1 data reduction. These figures are restricted to environments where the same data is saved many times sequentially, such as virtual tape libraries and backup. Deduplication of production file systems offers more modest reductions in space, in the 2:1 range, as shown in the accompanying chart illustrating reduction for different workloads.
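Because vendors quote reduction ratios while this note quotes percentage savings, it helps to convert between the two. The helper names below are hypothetical; the arithmetic is simply saved = 1 - 1/ratio.

```python
def space_saved(ratio: float) -> float:
    """Fraction of space saved for a data reduction ratio of `ratio`:1."""
    return 1 - 1 / ratio


def reduction_ratio(saved: float) -> float:
    """Data reduction ratio (n:1) equivalent to a fractional space saving."""
    return 1 / (1 - saved)


for ratio in (2, 50, 100):
    print(f"{ratio}:1 reduction saves {space_saved(ratio):.0%} of space")

for saved in (0.30, 0.50):
    print(f"{saved:.0%} savings is a {reduction_ratio(saved):.2f}:1 reduction")
```

On this arithmetic a 2:1 reduction is a 50% saving, while 50:1 and 100:1 correspond to 98% and 99%; the 30%-50% range quoted for general purpose storage is roughly 1.4:1 to 2:1.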

The following can serve as useful guidelines to getting started with deduplication:

- Start by understanding the benefits and costs of deduplication in your specific environment. Measure the data reduction, and measure the impacts on performance, particularly on reads.

- Create a deduplication pool of storage with minimal other function on the filer head to minimize potential performance problems with competing filer functions.

- Consider dropping tape storage for anything other than data that must be physically moved off site. If there is no justification for keeping it on some sort of disk, it should probably be discarded.
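As a starting point for the first guideline, the potential data reduction in a directory tree can be estimated before any product is purchased. The sketch below assumes fixed 4 KB blocks and SHA-256 hashing, which may not match what a given vendor's implementation does, and the function name `estimate_dedup` is hypothetical. It measures space only; read performance has to be measured on the actual system.

```python
import hashlib
import os
import tempfile


def estimate_dedup(root: str, block_size: int = 4096) -> tuple[int, int]:
    """Walk `root` and return (total blocks, unique blocks) using
    fixed-size block hashing. Unreadable files are skipped."""
    total = 0
    seen = set()
    for dirpath, _dirs, files in os.walk(root):
        for name in files:
            path = os.path.join(dirpath, name)
            try:
                with open(path, "rb") as fh:
                    while block := fh.read(block_size):
                        total += 1
                        seen.add(hashlib.sha256(block).digest())
            except OSError:
                continue
    return total, len(seen)


# Demonstration on a throwaway directory holding a file and its clone.
with tempfile.TemporaryDirectory() as tmp:
    payload = os.urandom(2 * 4096)  # two distinct random blocks
    for name in ("original.bin", "clone.bin"):
        with open(os.path.join(tmp, name), "wb") as fh:
            fh.write(payload)
    total_blocks, unique_blocks = estimate_dedup(tmp)

print(f"{total_blocks} blocks, {unique_blocks} unique, "
      f"{total_blocks / unique_blocks:.1f}:1 estimated reduction")
```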

The best candidates for deduplication are likely to be Tier 2 and Tier 3 applications with low I/O read activity and low volatility.

Action Item: IT management should assess deduplication for enterprise storage systems. However, user expectations of both the amount of benefit (30%-50%) and the range of applications for which it will be suitable (low volatility, low read I/O) should be managed. Look hard at tape storage for long-term use and evaluate moving to a strategy of eliminating tape except for moving data off-site.

Go tell storage on the CFO mountain redux de-dup

Data de-duplication joins thin provisioning as another storage technology story that could warm the hearts of CFOs. Although for very different reasons, each heralds potential 30-50% space savings, which drops right down to lower storage acquisition costs. Ultimately, users will be able to exploit both technologies. However, the practical reality is that near-term budgeting (and other) constraints are likely to preclude pursuit of both technologies. Consequently, storage groups are likely to have to choose either the data de-duplication path or the thin provisioning path, looking to unify them as storage product timelines merge over the next few years. The decision regarding which path to choose should follow application and business value needs, but we fear that vendor marketing strategies and internal politics may well play a significant role. For example, while thin provisioning may well ultimately deliver greater returns to application development groups by enabling truly services-orientated storage, near-term the benefit of eradicating data redundancy in database environments may tip application groups to favor data de-duplication.

Action Item: Storage management groups are likely to face an unfortunate either/or decision regarding thin provisioning and data de-duplication technologies near-term that will strongly influence storage buying patterns in the intermediate term. Mixing these technologies is the best option, but will require deft procurement planning and savvy political maneuvering.

Deduplication offerings likely to stay proprietary

During the 5/22 Peer Incite research meeting there was a consensus that storage vendors would not allow direct access to data except through the storage controller. After the research meeting Fred Moore wrote “[Storage] vendors have little to gain or to differentiate themselves by being open.”

Unfortunately for storage vendors' long term benefit, this is probably going to be true; short term illusions of competitive gain will trump establishing a larger market. As a result this will limit the use of deduplicated storage by software vendors. Developers are likely to find that it will be much more attractive to be able to directly control specialist deduplication hardware in the server or appliances.

Action item: Keep technology options for the placement of deduplication open. Placement in the storage controller may be best for archive applications. Data that is shared between multiple applications may be better served by server-based specialist deduplication access methods and engines.

Data deduplication and vendor roadmaps

The recent announcements of general purpose data deduplication from NetApp and IBM, EMC's announcement for virtual tape, and Hitachi's announcement of thin provisioning raise questions about which of these technologies will have the greatest impact and where they fit in vendor roadmaps. At a recent Wikibon Peer Incite meeting, one participant suggested that data deduplication would have a greater impact on the market than thin provisioning, which catalyzed this professional alert.

Given that both data deduplication and thin provisioning promise substantial (20 - 40%+) improvements in storage capacity utilization, it seems both arguments have merit. So where should vendors (and users) place technology bets?

Data deduplication is best suited for applications that move predominantly the same data frequently (like daily backups) where there's a high degree of repetitiveness in copying data that doesn't change often. The combination of low cost disk and technologies like data deduplication make the prospects for onsite backup and restore on tape look pretty dim. More often users are going to find that if they want to get to data, it better be on disk. This makes the prospects for data deduplication seem quite attractive and tape vendors, which are unable to exploit today's data deduplication designs, had better flee to compliance, fixed content and offline archiving markets sooner rather than later.

The picture for thin provisioning is in some ways more intriguing. While the sheer volume of candidates for thin provisioning may be smaller than for data deduplication, over time high value data will be a predominant target for this technology. This in some ways makes thin provisioning more sexy. Moreover, the longer term benefits of thin provisioning have the potential to dramatically alter and improve the relationship between IT and business lines by making storage consumption more transparent. This transformative aspect of thin provisioning could bring substantial competitive advantage to vendors and pay added dividends in terms of greater application service levels.

Action Item: Storage vendors should not make data deduplication and thin provisioning an either / or decision point. Rather leading suppliers need to accommodate strategies for both technologies and invest in development to mine obvious opportunities in each area.

Data deduplication has tape looking over its shoulder

Predictions of the death of tape have been forthcoming for as long as tape has been around, but for a variety of reasons tape just won't go away. Increasingly, it looks like if data is worth keeping, users should put it on disk. Recent deduplication announcements from the likes of NetApp, IBM and EMC underscore this point, as a 'clone everything you ever really might need access to' strategy is becoming economically feasible.

Tape's unique advantage remains its removability, so tape vendors are looking at opportunities in offline archiving, as well as fixed content and compliance markets, which according to Horison Information Strategies and Fred Moore are probably more lucrative than traditional backup and restore. But for those customers with mountains of tapes and loads of data that has at least some intrinsic value, using a data deduplication strategy to clone everything on disk is starting to look pretty interesting.

Action Item: Customers should move toward a strategy of using disk for data that at some point will likely be read. Putting data on tape essentially means you'll never get to it again (or at least hope not to have to). Tape should be considered as an offsite medium, used in situations where compliance is the key driver or the economics of tape still make sense.