Storage Peer Incite: Notes from Wikibon’s March 4, 2008 Research Meeting

Moderator: David Vellante & Analyst: Steve Kenniston

As the amount of data grows beyond all bounds and huge amounts of unstructured data (emails and personal productivity documents such as spreadsheets and electronic copies of white papers) are added to the growing volume of structured data, traditional brute-force backup approaches are becoming increasingly difficult. Backup windows are being exceeded, networks overloaded, and organizations forced into complex partial backup schemes; the entire mechanism of basic data protection is threatening to break down. One emerging tool IT can use to combat this problem is data deduplication. Fortunately, major advances in this technology now allow organizations to keep all their data backed up both locally and at remote sites while cutting the nightly backup load by ratios of 20:1 to 50:1 or more. This large reduction in the amount of data to be backed up also makes disk, with its higher reliability and faster access, a practical alternative to tape in many applications, at least for short-term backup. Tape remains the economical choice for long-term data archiving in many environments.

Deduplication, however, comes with caveats. First, two different and somewhat competing technologies, source-based and target-based, are on the market, each supported by several vendors. Each has its benefits and trade-offs, and each is appropriate for specific kinds of environments (see the articles below for a more detailed discussion). Thus it is important to understand your environment and business needs before selecting a technology. Second, as with any new technology, adoption carries costs. G. Berton Latamore

Data de-duplication: Greasing the rails of the backup window

Disk backup, which protects data by performing a backup directly to disk-based media rather than tape storage, is exploding, particularly since data deduplication technologies can be applied to eliminate redundant data within a stream. They can achieve data reduction factors of 20:1 or higher, bringing the economics of disk backup much closer to those of tape backup.
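To make "eliminating redundant data within a stream" concrete, here is a minimal Python sketch (3.9+ for `random.randbytes`). It assumes fixed-size 4 KiB chunks fingerprinted with SHA-256; real products may use variable-size chunking and other hashing schemes. The workload, a hypothetical 1 MiB data set backed up in full on 20 consecutive nights with one block rewritten per night, is illustrative only.

```python
import hashlib
import random

CHUNK_SIZE = 4096  # assumption: fixed-size chunks; many products use variable-size chunking


def dedupe(stream: bytes, chunk_size: int = CHUNK_SIZE):
    """Fingerprint each chunk and keep only the first copy of each one.

    Returns (store, recipe): store maps fingerprint -> chunk bytes, and the
    recipe is the ordered list of fingerprints needed to rebuild the stream.
    """
    store = {}
    recipe = []
    for offset in range(0, len(stream), chunk_size):
        chunk = stream[offset:offset + chunk_size]
        fingerprint = hashlib.sha256(chunk).hexdigest()
        store.setdefault(fingerprint, chunk)
        recipe.append(fingerprint)
    return store, recipe


def reduction_ratio(logical_bytes: int, store) -> float:
    """Bytes written by the backup application divided by bytes actually kept."""
    return logical_bytes / sum(len(chunk) for chunk in store.values())


rng = random.Random(42)
data_set = bytearray(rng.randbytes(256 * CHUNK_SIZE))  # hypothetical 1 MiB data set
nightly_fulls = []
for night in range(20):
    if night > 0:
        # Hypothetical change pattern: one 4 KiB block is rewritten each night.
        start = night * CHUNK_SIZE
        data_set[start:start + CHUNK_SIZE] = rng.randbytes(CHUNK_SIZE)
    nightly_fulls.append(bytes(data_set))

stream = b"".join(nightly_fulls)  # 20 full backups sent to the target
store, recipe = dedupe(stream)
print(len(store), f"{reduction_ratio(len(stream), store):.1f}:1")  # prints: 275 18.6:1
```

Twenty full copies collapse to the 256 original chunks plus 19 changed ones. Repetition across full backups of slowly changing data is the kind of redundancy that makes ratios in the range quoted above possible.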

Tape backups often fail, and tape is widely recognized as less reliable than disk-based approaches. Moreover, backup windows are increasingly tight, and recovery is often uncertain with traditional tape-based methods. Finally, the increasing popularity of server virtualization places further stress on the backup and restore process, because consolidating many virtual servers onto fewer physical hosts makes tape backup more complicated than a simple brute-force, physical server-by-server approach.

At a high level, there are two predominant models for disk-based deduplication:

- Source-based deduplication (e.g., EMC/Avamar, Connected, Carbonite, Symantec, and others) uses a host-resident agent that reduces data at the server source and typically sends just changed data over the network (either locally or remotely).

- Target-based dedupe (e.g. Data Domain, Diligent, NetApp and others) is controlled by a storage system, rather than a host. This approach simply takes files or volumes resident on disk and dumps them, either to a cloned set of disks (which then dumps to backup disks) or directly to the disk-based backup target. The former is more expensive but reduces backup window pressure and minimizes application downtime; the latter is cheaper and simpler.
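The network difference between the two models can be sketched in a few lines of Python. This is a simplified model, not any vendor's protocol: it assumes 4 KiB fixed chunks, SHA-256 fingerprints, and a source-side agent that sends every fingerprint and then only the chunks the target has never seen. `source_side_backup` and `target_side_backup` are hypothetical names. Both models end up storing the same unique chunks; only the bytes crossing the network differ.

```python
import hashlib
import random

CHUNK_SIZE = 4096
FINGERPRINT_BYTES = 32  # size of a SHA-256 digest


def chunks(data: bytes):
    for offset in range(0, len(data), CHUNK_SIZE):
        yield data[offset:offset + CHUNK_SIZE]


def source_side_backup(data: bytes, target_index: set) -> int:
    """Host-resident agent dedupes before sending. Returns bytes sent over the network."""
    sent = 0
    for chunk in chunks(data):
        fingerprint = hashlib.sha256(chunk).digest()
        sent += FINGERPRINT_BYTES          # simplification: every fingerprint is sent
        if fingerprint not in target_index:
            target_index.add(fingerprint)
            sent += len(chunk)             # only never-seen chunks cross the wire
    return sent


def target_side_backup(data: bytes, target_index: set) -> int:
    """Host sends the full stream; the storage system dedupes on arrival."""
    for chunk in chunks(data):
        target_index.add(hashlib.sha256(chunk).digest())
    return len(data)


rng = random.Random(7)
monday = rng.randbytes(256 * CHUNK_SIZE)                           # hypothetical 1 MiB server
tuesday = rng.randbytes(2 * CHUNK_SIZE) + monday[2 * CHUNK_SIZE:]  # two blocks changed

source_index, target_index = set(), set()
source_side_backup(monday, source_index)   # first backup: everything is new
target_side_backup(monday, target_index)
print(source_side_backup(tuesday, source_index))  # prints: 16384
print(target_side_backup(tuesday, target_index))  # prints: 1048576
```

Real agents batch fingerprint lookups and may use smaller or variable-size chunks, so actual overheads differ; the point is the ratio of what travels, not the exact byte counts.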

Where should customers consider each of these approaches? In general, target-based dedupe is an excellent fit for customers who want to install a virtual tape library (VTL) without substantial disruption to existing backup software infrastructure and processes. A VTL without dedupe, while convenient for recovery, is perhaps still as much as 10X the cost of tape (or 4X-5X if blending tape with integrated VTL), whereas a VTL with dedupe can take this ratio down to as low as 2-3X tape costs.

Additionally, target-based dedupe is best for higher change-rate environments (e.g. more than 3% changed data daily) and larger databases (e.g. 200GB+) with more rigorous recovery point objective (RPO) and recovery time objective (RTO) requirements. For example, direct copy to disk in this context improves RPO because this approach is able to handle higher change rates, whereas source-based dedupe will have too many changes to transmit.

Source-based data deduplication will shine in lower change-rate environments (less than 3% change daily), where customers have lots of data distributed remotely and backup today is unreliable, cumbersome, and uncertain (e.g., laptops, PCs, and remote offices). Source-based dedupe also has an advantage when transmitting over remote networks, because the data stream is reduced before transmission, easing bandwidth constraints.
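The rules of thumb above can be expressed as a small decision helper. This is a hedged sketch: the 3% daily change and 200 GB thresholds come from the text, while the `Workload` fields, the function name, and the fallback answer for cases the text does not cover are hypothetical. A real selection should also weigh RPO/RTO, cost, and the existing backup infrastructure.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    daily_change_pct: float  # percent of the data that changes per day
    size_gb: float           # size of the data set or database
    distributed: bool        # laptops, PCs, remote offices, etc.


def suggested_dedupe_model(w: Workload) -> str:
    """Apply the article's rough guidelines; thresholds are rules of thumb, not limits."""
    if w.daily_change_pct > 3 or w.size_gb >= 200:
        return "target-based"   # higher change rates, larger databases
    if w.daily_change_pct < 3 and w.distributed:
        return "source-based"   # low change rate, data spread across remote sites
    return "no clear preference; decide on RPO/RTO and cost"


for w in [
    Workload("order-entry database", daily_change_pct=6, size_gb=800, distributed=False),
    Workload("branch-office file shares", daily_change_pct=1, size_gb=50, distributed=True),
    Workload("departmental wiki", daily_change_pct=1, size_gb=20, distributed=False),
]:
    print(f"{w.name}: {suggested_dedupe_model(w)}")
```

The fallback branch is deliberate: the text gives no guidance for small, local, low-change data sets, so the sketch defers to the RPO/RTO discussion rather than guessing.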

Will data de-dupe eliminate tape? A commonly asked question in the Wikibon community is: “Will data deduplication allow us to eliminate tape?” In both source- and target-based dedupe, tape involvement may be minimized or eliminated depending on the need to get data off site. There are some examples of customers using remote vaulting to disk to eliminate tape entirely. However, this approach requires the additional expense of redundant infrastructure and typically a substantial investment in network bandwidth. Indeed, for most customers backing up data to disk, dumping to tape and shipping tapes off-site remains the most cost-effective and fastest way to comply with the organization's disaster recovery edicts.

What guidelines and best practices should customers consider? Customers should start by considering RPO and RTO and understanding needs by classifying data. The extremes are relatively straightforward to address. If the application’s RPO/RTO requirement is many hours or even days, any model will work well. Go for low cost and easy recovery and even consider remotely managed services.

If RPO/RTO requirements are measured in hours or minutes, then a change in infrastructure is going to be harder to justify, and customers will likely want to leverage hardened backup and recovery processes unless they have good reasons to change. Here, internal source-based models will be a difficult sell in the organization unless it’s a database-driven source model (e.g. Oracle), where the database handles the deduping and maintains consistency between volumes and the point-in-time aspects of the process.

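Customers should also always perform dedupe before encrypting data. Encryption is designed to make its output look random, so identical data encrypted separately typically yields different ciphertext, leaving the deduplication engine little or nothing to eliminate.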
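Why the order matters can be shown with a toy example. The `toy_encrypt` function below is a hypothetical stand-in, NOT real or secure encryption: it mimics ciphers used with a fresh random nonce or IV per message, which is what makes identical inputs produce different outputs. Fingerprinting the same block 100 times before encryption finds one unique chunk; after encryption it finds 100.

```python
import hashlib
import os


def toy_encrypt(data: bytes, key: bytes) -> bytes:
    """Hypothetical stand-in for a real cipher; NOT secure, for illustration only.

    A fresh random nonce per call (as with a random IV) makes identical
    plaintexts produce different ciphertexts.
    """
    nonce = os.urandom(16)
    keystream = bytearray()
    counter = 0
    while len(keystream) < len(data):
        keystream += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return nonce + bytes(p ^ k for p, k in zip(data, keystream))


def unique_chunks(blobs) -> int:
    """Number of distinct chunks a deduplication engine would have to store."""
    return len({hashlib.sha256(blob).digest() for blob in blobs})


key = b"hypothetical-key"
block = b"Q3 sales summary, regional rollup. " * 117  # one document block
copies = [block] * 100                             # the same block in 100 backups

print(unique_chunks(copies))                               # dedupe first: 1
print(unique_chunks(toy_encrypt(c, key) for c in copies))  # encrypt first: 100
```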

On balance, disk-based backup and data deduplication should be on every customer’s near-term planning roadmap unless the primary backup application is for data that will not demonstrate good deduplication ratios (e.g. music, movies, and mother nature).
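A rough illustration of why content matters: already-compressed media looks statistically random to a chunker, so this sketch uses random bytes as a stand-in for it (an assumption), and a shared template as a stand-in for office documents that repeat boilerplate. Chunk size, file sizes, and the template are all hypothetical.

```python
import hashlib
import random

CHUNK_SIZE = 4096


def chunk_ratio(files) -> float:
    """Logical bytes across all files divided by bytes of unique chunks."""
    total = 0
    unique = {}
    for data in files:
        for offset in range(0, len(data), CHUNK_SIZE):
            chunk = data[offset:offset + CHUNK_SIZE]
            total += len(chunk)
            unique.setdefault(hashlib.sha256(chunk).digest(), len(chunk))
    return total / sum(unique.values())


rng = random.Random(1)
template = rng.randbytes(15 * CHUNK_SIZE)  # hypothetical shared boilerplate
documents = [template + rng.randbytes(CHUNK_SIZE) for _ in range(10)]
media = [rng.randbytes(16 * CHUNK_SIZE) for _ in range(10)]  # stand-in for compressed audio/video

print(f"{chunk_ratio(documents):.1f}:1")  # prints: 6.4:1
print(f"{chunk_ratio(media):.1f}:1")      # prints: 1.0:1
```

The same volume of data dedupes 6.4:1 when files share content and not at all when every chunk is unique.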

Action item: For disk-based backup, the question about data deduplication is one of how, not whether. Customers should start by considering RPO and RTO requirements and assess dedupe relative to current backup methodologies to decide economically which approach is the best strategic fit. In addition to RPO and RTO, comparative metrics should include cost of recovery, operational costs, and RAS (reliability, availability and scalability) of the backup process.

It's Time to Start Protecting ALL of Your Data with the Right Solution

The backup market is consistently a $2+ billion market. Every year people look to build or add on to existing backup solutions. With the advent of disk-based backup solutions and technologies that support a multitude of environments, it is time to take a step back and start asking the questions: "What am I really trying to accomplish?" "Am I really backing up data, or am I protecting data?" "Which data is most important in my environment?" "What is the value of that data?"

Now that IT is 100% accountable for 100% of the data that lives within its business, it is imperative that IT start asking these tough questions. Only once these questions have answers can you start to answer more important questions such as, "What are my RTO and RPO objectives for this 'type' of data?"

It is this part of the conversation that will lead you to the right technology solution, whether it be data deduplication, VTL, disk-based backup, replication, etc. Each of these technologies is robust enough and has been in the market long enough that many vendors have excellent products to solve each of your challenges. The trick is to know what you need, not what you want.

Action item: The call to action is for IT to take the time to meet with the lines-of-business to have a hard conversation about the expectations of data availability. It is also important to let the LOBs understand that every improvement in RPO and RTO has an associated dollar cost and to help them decide the value of their data. The follow-on steps will be to meet with the vendors and acquire the right solution to meet everyone's SLA.

Retaining employment while backing up VMWare

There is not much upside potential to employment prospects if drastic changes are made to backup procedures. “If it ain’t broke, don’t fix it” is and always will be sound advice. Even more so when the data is mission critical and introducing change increases risk to the business.

Sometimes change is inevitable, and this is likely to be the case with VMWare initiatives. Backup processes that worked fine with “physical=logical” environments do not work as well with VMWare's logical server environments. For example, instead of restoring just the application, the VMWare process may require restoring the operating system as well. To avoid this, backup system agents may have to be deployed, which will probably bring disruption to the current processes in its wake, along with a requirement to re-architect and retest the backup and recovery system. Traditional target-based deduplication will not help at all in speeding up backup and is likely to increase complexity in a changing environment.

Given that change is “thrust upon you”, it makes sense to re-evaluate the backup software and processes used and see if consolidation or a different backup model is more appropriate. If the servers and storage in the VMWare environment are supporting small databases and have low change rates (often the case with Windows-based unstructured data systems), source-based technology may be more appropriate.

Action item: Storage executives should take a long hard look at backup procedures well before VMWare initiatives go into production. Consult with the current backup software supplier and evaluate the alternatives. This may include waiting for a new release. Test the optimum alternative. If required, bring in alternative vendors including source-based deduplication. Being part of the solution will optimize employment prospects.

Data Deduplication -- A Recovery Perspective

While data deduplication running on backup servers can save immense amounts of time in backups and of the disk space required for them, does data deduplication have a similar impact on data recovery operations? This question is quite controversial, but we believe the answer is no. In fact, it can slow things down a little.

The reason, of course, is that, compared with straight disk-to-server recovery, all the deduped data must be reconstructed, and that process adds overhead. This is true regardless of whether the data was deduped at the source/host or at the target/appliance/storage device. Recovery of deduped data is likely to be a little faster if the data was deduped at the target. If the data was deduped at the host, the host will have to issue many extra read operations in order to reconstruct the data in full, particularly to refresh its index cache. If the data was deduped at the target, that target will also have to perform extra reads, but overall the recovery will be faster than when data was deduped at the source.
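The reconstruction overhead can be modeled with an append-only chunk log, a common but here assumed layout: each backup writes only new chunks to the end of the log, so a later backup's chunks end up scattered across it. The sketch counts "seeks" (reads that are not contiguous with the previous one) as a rough proxy for the extra random reads a restore needs. `ChunkLog` and the change pattern are hypothetical.

```python
import hashlib
import random

CHUNK_SIZE = 4096


class ChunkLog:
    """Append-only chunk container with a fingerprint index (a simplified model)."""

    def __init__(self):
        self.chunks = []   # physical layout: position -> chunk bytes
        self.index = {}    # fingerprint -> position in the log

    def backup(self, data: bytes) -> list:
        """Store only unseen chunks; return the recipe as a list of log positions."""
        recipe = []
        for offset in range(0, len(data), CHUNK_SIZE):
            chunk = data[offset:offset + CHUNK_SIZE]
            fp = hashlib.sha256(chunk).digest()
            if fp not in self.index:
                self.index[fp] = len(self.chunks)
                self.chunks.append(chunk)
            recipe.append(self.index[fp])
        return recipe

    def restore(self, recipe: list):
        """Rehydrate the data; count reads that are not contiguous with the last one."""
        out = bytearray()
        seeks = 0
        previous = None
        for position in recipe:
            if previous is None or position != previous + 1:
                seeks += 1
            out += self.chunks[position]
            previous = position
        return bytes(out), seeks


rng = random.Random(3)
week1 = bytearray(rng.randbytes(256 * CHUNK_SIZE))  # hypothetical 1 MiB volume
week2 = bytearray(week1)
for i in range(0, 256, 16):                         # every 16th block changes
    week2[i * CHUNK_SIZE:(i + 1) * CHUNK_SIZE] = rng.randbytes(CHUNK_SIZE)

log = ChunkLog()
recipe1 = log.backup(bytes(week1))
recipe2 = log.backup(bytes(week2))
data1, seeks1 = log.restore(recipe1)
data2, seeks2 = log.restore(recipe2)
print(seeks1, seeks2)  # prints: 1 32
```

Restoring the first backup is one sequential pass, while the second needs 32 repositioning operations for the same amount of data. On real disks that fragmentation, plus the index lookups, is one plausible source of the overhead described above.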

If one is recovering just a little data, the differences will be negligible, but if a large amount of data needs recovery, the total recovery time will be longer. However, when restoring data over a LAN from a backup server with data deduplication, the LAN, not data reconstruction, will be the limiting factor. Recovery over a WAN is another matter. While data deduplication significantly reduces the WAN load for backups (after the first backup or deduplication scan), a restore must still send a huge amount of reconstructed data over the WAN. This problem gets some relief when two data deduplication servers operate in concert, one at each end.
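Back-of-the-envelope arithmetic shows why the network, not reconstruction, usually sets restore time, and why a second dedupe server at the far end helps over a WAN. The 500 GB restore, the 1 Gb/s LAN, the 45 Mb/s WAN link, and the assumption that the remote dedupe server already holds 80% of the needed chunks are all hypothetical; protocol overhead is ignored.

```python
def transfer_hours(gigabytes: float, link_mbps: float, fraction_sent: float = 1.0) -> float:
    """Hours to move `fraction_sent` of `gigabytes` (decimal GB) over a `link_mbps` link."""
    bits = gigabytes * 1e9 * 8 * fraction_sent
    return bits / (link_mbps * 1e6) / 3600


restore_gb = 500
print(f"LAN, 1 Gb/s:           {transfer_hours(restore_gb, 1000):.1f} h")  # 1.1 h
print(f"WAN, 45 Mb/s:          {transfer_hours(restore_gb, 45):.1f} h")    # 24.7 h
print(f"WAN, dedupe both ends: {transfer_hours(restore_gb, 45, fraction_sent=0.2):.1f} h")  # 4.9 h
```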

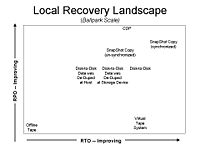

Figure 1 puts things in perspective. By far, snapshot copy techniques provide the best recovery times and recovery points for local recoveries, provided the snapshots were properly synchronized with the applications and databases. If they were not, it can take quite a while to figure out which snapshot to use, and the same is true for log points, so recovery time suffers. Note that CDP (Continuous Data Protection) has been covered previously on Wikibon.

After snapshots, good old disk-to-disk backups will provide the best recoveries, albeit with significantly worse recovery points and recovery times than those of snapshots.

Recovery from disk where the data has previously been deduped offers the same recovery points as disk-to-disk, but somewhat longer recovery times.

Recovery times from a virtual tape library will vary. If the data is still in the VTL’s disk cache, one can expect performance similar to disk-to-disk. If the data is not in the cache and is therefore on tape, recovery times will suffer. Offline tape, of course, offers the worst recovery times, but it is the least expensive option.

Action item: Users considering data deduplication solutions must test recovery processes and verify they can meet their RTOs. Users should also consider synchronized snapshot copies for their tier 1 data – usually the data with the most stringent recovery objectives.

Data de-duplication: What shade of green?

How green are data deduplication solutions? The answer depends on your perspective. On the one hand, dedupe products are replacing tape solutions, which are the greenest of all storage technologies (notwithstanding all the trucks driving tapes around), so out of the chute dedupe would appear to be anti-green. Relative to a non-deduped VTL, however, a dedupe appliance or host-resident solution is a positive from a green standpoint, but the degree of benefit depends on the ratio of data reduction. The reduction in the number of drives will typically offset the increased power consumption of the appliance (in target-based solutions) or the additional processing power needed (in source-based solutions). A 1U server may consume about 1 kVA of power and a disk drive about 20 watts. With a 10:1 reduction ratio you can achieve some savings, but they're not huge. With a 50:1 reduction, the numbers get a lot more interesting.
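The power trade-off can be worked through with the figures quoted above. This sketch treats the 1 kVA appliance as roughly 1,000 W (assuming a power factor near 1), uses the same figure as a stand-in for the extra host processing in source-based designs, and assumes a hypothetical 300-drive non-deduped VTL. The result is sensitive to that drive count, so plug in your own numbers.

```python
import math

APPLIANCE_WATTS = 1000  # ~1 kVA 1U server, assuming a power factor near 1
DRIVE_WATTS = 20        # per disk drive, as quoted in the text


def dedupe_system_watts(vtl_drives: int, reduction: float) -> int:
    """Disk power after `reduction`:1 dedupe, plus the dedupe engine itself."""
    drives_needed = math.ceil(vtl_drives / reduction)
    return drives_needed * DRIVE_WATTS + APPLIANCE_WATTS


vtl_drives = 300  # hypothetical non-deduped VTL
print(vtl_drives * DRIVE_WATTS)             # 6000 W without dedupe
print(dedupe_system_watts(vtl_drives, 10))  # 1600 W at 10:1
print(dedupe_system_watts(vtl_drives, 50))  # 1120 W at 50:1
```

Tape sitting on a shelf draws essentially no power, which is why the comparison looks very different when the baseline is tape rather than a VTL.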

So where does that leave dedupe technologies in the color spectrum? For now, the answer appears to depend on where you stand. Even without the green benefit, customers are choosing to trade added power consumption for the cost savings and other benefits of dedupe. If data reduction ratios are high, customers get the added bonus of a greener IT story and further cost reductions. As we've said before, green storage is all about the other green. This suggests that suppliers should tread carefully and make sure claims can be justified before making too much noise about the greenness of data de-dupe. Suppliers should also architect next-generation solutions designed to further green up today's dedupe infrastructure.

Action item: The apparent lack of greenness of today's dedupe systems relative to tape suggests that suppliers should think carefully about how to position the issue, using VTLs as the starting point for comparison. While the lure of claiming de-dupe is green because it reduces disk drive consumption will be strong, users should understand that this depends on reduction ratios, and suppliers should be open about this in their marketing so users don't get blindsided within their own organizations.

Data deduplication: Backup is one thing, recovery is everything

Recovering information from disk is the ideal method because disk provides random access, whereas tape is sequential. In addition, disk-based backup and restore (BUR) systems are more reliable than tape, and with data deduplication the economics are so compelling that the market continues to explode.

While everyone talks about deduplication in the context of disk-based backup, the real attractiveness of this technology is disk-based recovery and the ability to lower RTO cost-effectively by keeping more data online longer. If disk space were free, data dedupe would not be used, because dedupe adds overhead. But disk isn't free, so dedupe has become an attractive way to trade a bit of extra time and complexity for a whole lot more benefit in terms of data recovery.

For mission-critical applications, disk-to-disk BUR, with nothing in the middle to get in the way, is always the best solution. As such, data dedupe doesn't fit well here, where recovery processes are hardened and reliable. Rather, users should target dedupe at the fastest-growing segment of data, unstructured and distributed information, and help business users put in place reliable recovery processes that work quickly with minimal IT effort.

Action item: Aim data deduplication at two places:

- Where data is not backed up properly (e.g. remote offices and desktops). Here, consider source-based deduplication or managed services; and

- Backup applications where recovery is challenging, for example email or file services. In this case, target-based deduplication will likely be the best strategic fit.
