Big Data and the cloud often get mentioned in the same breath (by one vendor in particular), but examples of production-grade Hadoop deployments in the cloud are hard to come by. According to Wikibon estimates, 90% of production Hadoop deployments occur inside corporate firewalls. Some of those deployments are admittedly in private cloud environments, but most run on bare metal.

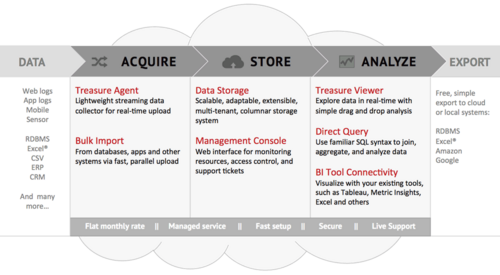

The question becomes, then, what role - if any - will the public cloud play in helping enterprises turn Big Data into actionable insights? Treasure Data believes it has an answer. The Mountain View, Calif.-based start-up has developed what it says is the first cloud service to address each phase of the Big Data pipeline - from data ingestion, transformation and storage to processing, analytics and visualization.

On the data ingestion front, Treasure Data has developed its own agent, called Treasure Agent (essentially just a few lines of code deployed on its clients’ internal servers), that taps into existing data streams, performs some minimal transformations, then compresses the data and uploads it to Treasure Data’s cloud-based storage and processing platform. That platform is deployed on AWS, where it uses S3 for storage and Plazma, Treasure Data’s internally developed distributed columnar database, for analytics. (Notably, Treasure Data does not use Hadoop, but it does leverage MapReduce for processing data.) Clients can then visualize the data via a limited number of existing BI tools (including Tableau Software) or Treasure Data’s own visualization tool. More sophisticated users can also query the data directly via SQL.
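To make the ingestion flow concrete, the following is a minimal Python sketch of the transform, compress and upload steps described above. It is not Treasure Agent’s actual code: the function names (`transform`, `build_batch`, `ship`), the newline-delimited JSON format, the choice of gzip and the `upload` callback are all assumptions made for illustration.

```python
import gzip
import json
from typing import Callable, Iterable


def transform(record: dict) -> dict:
    """Hypothetical minimal transformation: normalise keys, drop empty fields."""
    return {
        key.strip().lower(): value
        for key, value in record.items()
        if value not in (None, "")
    }


def build_batch(records: Iterable[dict]) -> bytes:
    """Serialise records as newline-delimited JSON, then gzip-compress them."""
    lines = "\n".join(json.dumps(transform(r), sort_keys=True) for r in records)
    return gzip.compress(lines.encode("utf-8"))


def ship(records: Iterable[dict], upload: Callable[[bytes], None]) -> int:
    """Compress one batch and hand it to an uploader supplied by the caller.

    `upload` stands in for whatever sends bytes to the cloud platform;
    it is an assumption, not a real Treasure Data API.
    """
    payload = build_batch(records)
    upload(payload)
    return len(payload)
```

In this sketch the uploader is injected rather than hard-coded, so the same batching logic can be exercised locally (for example with `ship(events, sent.append)`) before being pointed at a real storage endpoint.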

This is the classic ‘the whole is greater than the sum of its parts’ scenario. Taken individually, Treasure Data’s proprietary components (Treasure Agent, Plazma) are interesting and useful, but the real value proposition lies in the end-to-end service delivered from the cloud that founders Hiro Yoshikawa and Kaz Ohta have built. Treasure Data’s approach removes significant adoption barriers associated with Big Data generally and Hadoop specifically by abstracting away the complexity of the underlying infrastructure and making it relatively easy to get data into the cloud for large-scale analysis.

Treasure Data’s timing could be both a positive and a negative for the company. As illustrated at #BigDataNYC 2013 and Strata + Hadoop World 2013, Big Data early adopters are increasingly looking to move successful Hadoop proofs of concept into production. One of the key challenges associated with doing this internally is scaling small clusters of machines to tens or hundreds of nodes while maintaining high levels of performance and resource utilization. In many cases, practitioners are looking to better understand and predict customer behavior by merging relatively small volumes of transactional data with high-velocity social data sourced from the cloud. Both of these factors lend themselves to a cloud-based service such as the one offered by Treasure Data.

While Treasure Data is one of the first service providers to offer such a comprehensive cloud-based Big Data service, it will increasingly find itself competing with larger players in this space. The most obvious is Amazon itself. AWS provides the infrastructure foundation for Treasure Data’s service, but AWS also has most if not all of the components needed to build a comprehensive Big Data pipeline. These include the recently announced Amazon Kinesis, a managed service for harnessing data streams, as well as Redshift and Elastic MapReduce. Amazon has yet to glue all these pieces together as well as Treasure Data has, but it is likely only a matter of time until it does.

Another competitor is Cloudera. As its name suggests, Cloudera’s original business model was to deliver a Big Data processing and analytics service from the cloud, remarkably similar to Treasure Data’s approach. As co-founder Amr Awadallah points out, the company pivoted to an on-premises business model because the public cloud was simply not viewed as a viable enterprise-grade platform when Cloudera was founded in 2009. That perception has changed, and Cloudera is moving aggressively (as it must, in Wikibon’s opinion) to forge partnerships with cloud providers such as IBM (SoftLayer), Verizon and CenturyLink (Savvis).

For Treasure Data to compete in this market, it must continue to innovate and add complementary services. This includes making it easy (i.e., creating a push-button service) for users to access third-party data streams and providing compatibility with more visualization and analytic tools “out-of-the-box.” A challenge for all service providers in this market is addressing the security and privacy concerns - both real and imagined - of enterprise CIOs. In many cases, security is better in public clouds such as Amazon’s than in the average enterprise data center, but risk-averse CIOs are still reluctant to move and store internal data outside the firewall.

It’s also important to remember this is just one phase of the larger Big Data lifecycle. In addition to large-scale exploratory analytics on huge volumes of multi-sourced and multi-structured historical data, enterprises must also leverage streaming analytics and machine learning to automate business processes, as well as architect systems to deliver the right data to the right place at the right time. There are also significant cultural and process-related barriers to overcome at most enterprises.

Action Item: Enterprise Big Data practitioners who are struggling to scale Hadoop proof-of-concept deployments to stable production deployments should consider public cloud-based services as an alternative. Special consideration should be given to the data sources involved, security requirements, the relative ease (or lack thereof) of getting data into and out of the cloud service, and the user experience for Data Scientists who will interact with the data.
