Originating Author: Dave Vellante

I've often tried to make the case that cost savings are greatly affected by factors other than the specific storage technology deployed, namely the applications and workloads running on the storage infrastructure (see Storage TCO - comparing apples to apples). I’d like to explore this reality in more depth and provide some guidelines on hard-dollar methods for justifying storage costs. I won't deal with so-called soft-dollar benefits here, only the hard dollars to be saved by making infrastructure investments.


What matters most in storage TCO

The main emphasis of this article is Total Cost of Ownership (TCO) and the factors that have the biggest impact on it, particularly IT staff productivity and storage utilization. I’m not suggesting that other items aren’t worth considering, but in my experience these are the so-called “biggies.” As stated in previous opinions, two cost areas typically provide the most benefit when consolidating storage: 1) IT staff productivity (i.e., GBs managed per person); and 2) storage utilization. Let’s start with the first.

Does IT Staff Productivity Count?

People sometimes tell me it’s difficult to cost-justify a storage investment based on IT staff productivity improvements. Senior management often won’t count IT staff productivity as hard dollars, and projecting staff cost savings is politically incorrect because someone might lose their job. What management is really saying, folks, is that they don't believe you have a clear-cut business case and they don't trust that they'll ever see the benefits.

In a survey of CEOs by Darwin Magazine, 87% of CEOs said that increasing productivity is their top IT priority. My strong advice is to find a way to sell the benefits of staff productivity improvements, because they are typically the largest area of benefit for a storage investment. But be careful with projections, because complexity (e.g., applications, workloads, processes) will have a major impact on potential benefits.

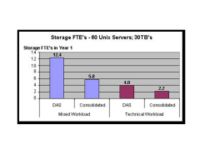

Consider the following case study examples demonstrating the potential staff impact for two similar scenarios: 1) A 60-server Unix environment with 30TB of Direct Attached Storage (DAS) running a mixed Unix workload (e.g. business processing, decision support, file/print mix) and 2) A 60-server Unix environment with 30TB of DAS running a purely technical workload (e.g. CAD/CAM application):

The chart shows full-time equivalent (FTE) storage management staff for a base-case DAS scenario and a target consolidated scenario under each of the two workloads. While both show broadly similar improvements in staff productivity in percentage terms (roughly 53% vs. 45% fewer FTEs), the more complex (mixed) workload shows a much better profile for staff savings in absolute terms. Calculating the rough savings is simple (12.4 – 5.8 = 6.6 FTEs vs. 4.0 – 2.2 = 1.8 FTEs). Multiply by a fully loaded cost per FTE ($75K - $120K in the U.S.) over a three-year depreciation period and the potential for savings becomes obvious, especially in the mixed workload (almost $2M over three years).
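The staff arithmetic can be sketched in a few lines of Python. The FTE counts and the $75K-$120K fully loaded cost range come from the text; the function names are illustrative only. A cost of roughly $100K per FTE gives 6.6 × $100K × 3 ≈ $1.98M, which is where the "almost $2M" figure for the mixed workload lands.

```python
"""Rough three-year staff savings from the case-study FTE figures.

FTE counts are from the chart; the per-FTE range is the $75K-$120K U.S.
fully loaded figure quoted in the text. Function names are illustrative.
"""

YEARS = 3  # three-year depreciation period

# (base-case DAS FTEs, target consolidated FTEs) per workload
WORKLOADS = {
    "mixed": (12.4, 5.8),
    "technical": (4.0, 2.2),
}

COST_PER_FTE_LOW = 75_000
COST_PER_FTE_HIGH = 120_000


def fte_reduction(base: float, target: float) -> float:
    """FTEs freed by moving from the base case to the target case."""
    return round(base - target, 2)  # round away float noise (e.g. 1.7999...)


def pct_reduction(base: float, target: float) -> float:
    """Productivity improvement expressed as a fraction of base-case FTEs."""
    return (base - target) / base


def staff_savings(fte_saved: float, cost_per_fte: float, years: int = YEARS) -> float:
    """Hard-dollar staff savings over the depreciation period."""
    return fte_saved * cost_per_fte * years


if __name__ == "__main__":
    for name, (base, target) in WORKLOADS.items():
        saved = fte_reduction(base, target)
        low = staff_savings(saved, COST_PER_FTE_LOW)
        high = staff_savings(saved, COST_PER_FTE_HIGH)
        print(f"{name:9s}: {saved:.1f} FTEs ({pct_reduction(base, target):.0%}), "
              f"${low:,.0f} - ${high:,.0f} over {YEARS} years")
```

The percentage view makes the two workloads look alike; the dollar view is what separates them.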

Exploiting Un-utilized Storage

Storage utilization improvements are often cited as a potential cost benefit of consolidation, and rightly so. Depending on the size of the storage pools attached, improved utilization can mean real dollars. This is especially true in high-growth environments, where changes are frequent and more incoming GBs mean more wasted space. That said, understanding storage utilization is not so simple. Small and medium-sized shops typically lack the tools to measure utilization accurately. While products from storage resource management (SRM) vendors help, most companies I visit have not exploited such tools in their Windows and Linux environments.

The factors influencing utilization are many, including the quality of processes, IT staff expertise and the number of staff dedicated to storage management, to name a few. I’ve seen customers with very high utilization rates but very low storage management efficiency: they use allocated storage efficiently, but they need more people to do so. The point is, sometimes it’s cheaper to buy more storage than to pay people to manage it.

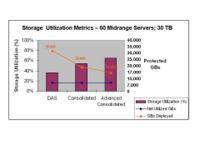

Assuming you can establish a decent estimate of utilized, protected storage, a good way to conceptually understand the cost benefits of improved utilization are to think in terms of application requirements. Specifically, the characteristics of an application will determine the amount of storage needed, while the technology and other factors (e.g., staff skills and growth) will determine how much storage needs to be deployed to meet application requirements. To illustrate this trend, consider our previous 60 Unix-server, 30TB example shown below:

The chart plots three metrics on a dual Y-axis: 1) utilization percentage (bars, left Y-axis); 2) net utilized GBs, representing the application requirements (straight line, right Y-axis); and 3) GBs deployed to meet the application requirements (descending line, right Y-axis). The data compare three scenarios: DAS, a consolidated environment (basic storage management) and an advanced consolidated environment (with advanced copy and storage management services).

The numbers show the potential for cost savings: a simplified storage management infrastructure requires fewer gross GBs deployed to meet the same application requirements. In other words, with DAS I need to deploy 30TB of protected storage to deliver my 12TB application requirement, but only 16.8TB in an advanced consolidated environment to deliver the same 12TB. I can either pocket the money saved (GBs saved × cost per GB) or use the additional storage for something else.
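The utilization arithmetic behind the chart can be made explicit. In this Python sketch, the 12TB net requirement and the 30TB (DAS) and 16.8TB (advanced consolidated) gross figures are from the text, which works out to 40% versus roughly 71% utilization and 13.2TB of avoided capacity. The cost per GB is a hypothetical placeholder, the decimal TB-to-GB conversion is an assumption, and the basic consolidated scenario is left out because its figure is not quoted.

```python
"""Gross vs. net storage for the 60-server, 30TB example.

NET_REQUIRED_TB and the gross figures come from the text. COST_PER_GB is a
hypothetical placeholder, and 1TB = 1,000GB is an assumed convention.
"""

NET_REQUIRED_TB = 12.0  # application requirement, same in every scenario

# Gross protected TB deployed to deliver the 12TB
GROSS_DEPLOYED_TB = {
    "DAS": 30.0,
    "advanced consolidated": 16.8,
}

COST_PER_GB = 10.0  # hypothetical $/GB; substitute your own figure
GB_PER_TB = 1_000   # assumed decimal convention


def utilization(net_tb: float, gross_tb: float) -> float:
    """Fraction of deployed (gross) capacity actually used by applications."""
    return net_tb / gross_tb


def capacity_savings(gross_before_tb: float, gross_after_tb: float,
                     cost_per_gb: float) -> float:
    """Money saved = GBs saved * cost per GB."""
    gb_saved = (gross_before_tb - gross_after_tb) * GB_PER_TB
    return gb_saved * cost_per_gb


if __name__ == "__main__":
    for name, gross in GROSS_DEPLOYED_TB.items():
        print(f"{name}: {utilization(NET_REQUIRED_TB, gross):.0%} utilized")
    saved = capacity_savings(GROSS_DEPLOYED_TB["DAS"],
                             GROSS_DEPLOYED_TB["advanced consolidated"],
                             COST_PER_GB)
    print(f"Capacity avoided is worth ${saved:,.0f} at ${COST_PER_GB:.2f}/GB")
```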

Getting Started without “Boiling the Ocean”

Having laid out some of the common misconceptions in Storage TCO - comparing apples to apples, and having emphasized the importance of focusing on the big-ticket items, I suggest the following guidelines for building a solid TCO framework:

Set a Baseline – Lay out all the hardware, software, backup network and other capital costs, plus the implementation and training costs. Tease out the staff costs by digging into all the operations affected by storage, beyond the obvious backup tasks. In my experience, people frequently underestimate the percentage of time spent managing storage. To test this premise, ask questions like: “What’s the process for recovery and who’s involved?” Once you’re reasonably comfortable with the “as is,” start projecting the “to be.”

When evaluating a SAN or data consolidation, account for implementation costs, because they can be substantial. The more complex the implementation, the higher the implementation costs (and risks). Implementation costs can run from 2-10% of the overall three-year costs.
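One way to keep the baseline honest is to capture the "as is" and "to be" cases in the same cost structure. The Python sketch below is a hypothetical model whose buckets mirror the baseline categories above; every dollar figure is a placeholder you would supply. It also flags implementation estimates outside the 2-10% range cited here, which is a prompt to double-check rather than proof of an error.

```python
from dataclasses import dataclass, fields


@dataclass
class ThreeYearCosts:
    """Hypothetical three-year cost buckets for an "as is" or "to be" case.

    Buckets mirror the baseline categories in the text; all figures are
    yours to supply.
    """
    hardware: float = 0.0
    software: float = 0.0
    backup_network: float = 0.0
    other_capital: float = 0.0
    implementation: float = 0.0
    training: float = 0.0
    staff: float = 0.0  # fully loaded cost of time spent managing storage

    def total(self) -> float:
        """Sum of every bucket."""
        return sum(getattr(self, f.name) for f in fields(self))

    def implementation_share(self) -> float:
        """Implementation cost as a fraction of the three-year total."""
        total = self.total()
        return self.implementation / total if total else 0.0


def implementation_in_typical_range(costs: ThreeYearCosts,
                                    low: float = 0.02,
                                    high: float = 0.10) -> bool:
    """True if implementation falls within the 2-10% range cited in the text."""
    return low <= costs.implementation_share() <= high


if __name__ == "__main__":
    # Placeholder figures only
    as_is = ThreeYearCosts(hardware=900_000, software=150_000,
                           backup_network=100_000, staff=2_700_000)
    to_be = ThreeYearCosts(hardware=700_000, software=250_000,
                           backup_network=80_000, implementation=90_000,
                           training=30_000, staff=1_300_000)
    print(f"Three-year savings: ${as_is.total() - to_be.total():,.0f}")
    print(f"Implementation share of 'to be': {to_be.implementation_share():.1%}")
    print("Within 2-10%:", implementation_in_typical_range(to_be))
```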

Storage Software Costs – In many cases, savings from improved storage utilization can be offset by increased storage software costs. Don’t let acquired software go unused.

Factor in Growth – Factoring in new storage, switches and other infrastructure, as well as staff costs, over the depreciation period is essential to building a credible business case.
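Growth can be folded in with a simple year-by-year projection. This Python sketch assumes, purely for illustration, a constant annual growth rate, constant utilization, and staff that scale linearly with managed capacity (a fixed TB-per-FTE ratio, echoing the GBs-managed-per-person metric). The 12TB starting point and the 40% vs. roughly 71% utilization figures come from the 30TB vs. 16.8TB example; the growth rate and TB-per-FTE values are hypothetical. Switches and other infrastructure could be added the same way, as per-year line items.

```python
from typing import NamedTuple


class GrowthRow(NamedTuple):
    year: int
    net_tb: float    # what the applications need at year end
    gross_tb: float  # what must be deployed to deliver net_tb
    ftes: float      # storage management staff implied by gross_tb


def project_growth(net_tb_today: float, annual_growth: float,
                   utilization: float, tb_per_fte: float,
                   years: int = 3) -> list[GrowthRow]:
    """Year-by-year capacity and staff projection over the depreciation period.

    Simplifying assumptions (all hypothetical): net demand compounds at a
    constant annual_growth rate, utilization stays constant, and staff scale
    linearly with gross TB at a fixed tb_per_fte ratio.
    """
    rows = []
    net_tb = net_tb_today
    for year in range(1, years + 1):
        net_tb *= 1 + annual_growth
        gross_tb = net_tb / utilization
        rows.append(GrowthRow(year, net_tb, gross_tb, gross_tb / tb_per_fte))
    return rows


if __name__ == "__main__":
    # 12TB net today (from the example); 50% growth and the TB-per-FTE
    # values are hypothetical placeholders.
    scenarios = {
        "DAS": (12 / 30, 2.5),
        "advanced consolidated": (12 / 16.8, 5.0),
    }
    for label, (util, tb_per_fte) in scenarios.items():
        print(label)
        for row in project_growth(12.0, 0.50, util, tb_per_fte):
            print(f"  year {row.year}: net {row.net_tb:.1f}TB, "
                  f"gross {row.gross_tb:.1f}TB, {row.ftes:.1f} FTEs")
```

Because gross capacity is net demand divided by utilization, the gap between the two scenarios widens in absolute terms every year that demand grows.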

Don’t Forget the Server Benefits – Server benefits are often excluded from TCO analyses. Consolidating storage often improves server utilization and reduces server management complexity. Although this is a second-order benefit, it can add up to substantial savings in a large consolidation.

Get a Financial Person to Help – When you have a reasonable business case, translate the benefits into a cash flow model. Calculate not only a simple ROI (benefits / initial costs), but also find someone who can help derive net present value (NPV), internal rate of return (IRR) and break-even (payback) period. Remember, no single financial metric is a silver bullet. ROI won’t tell you anything about the size of the benefit, and NPV won’t give you a sense of the time to benefit, which is why payback period exists. Use the metrics that best reflect your business needs.
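To see how the metrics differ, here is a minimal Python sketch of simple ROI (benefits / initial costs, as defined above), NPV, IRR and payback period. It assumes annual cash flows with the initial investment at year 0; the IRR uses plain bisection and assumes a conventional cash flow (one outflow followed by inflows). The cash flows and the 10% discount rate are hypothetical, and your finance partner's own model should take precedence.

```python
"""Simple ROI, NPV, IRR and payback period for annual cash flows.

cash_flows[0] is the initial investment at year 0 (negative); later entries
are net annual benefits. Example figures and discount rate are hypothetical.
"""
from typing import Optional


def simple_roi(total_benefits: float, initial_cost: float) -> float:
    """ROI as defined in the text: benefits / initial costs."""
    return total_benefits / initial_cost


def npv(rate: float, cash_flows: list[float]) -> float:
    """Net present value; the year-0 flow is not discounted."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(cash_flows))


def irr(cash_flows: list[float], low: float = -0.99, high: float = 10.0,
        tol: float = 1e-9, max_iter: int = 200) -> float:
    """Internal rate of return via bisection on npv(rate) == 0.

    Assumes a conventional cash flow, so NPV changes sign exactly once
    between low and high.
    """
    f_low = npv(low, cash_flows)
    if f_low * npv(high, cash_flows) > 0:
        raise ValueError("NPV does not change sign between low and high")
    for _ in range(max_iter):
        mid = (low + high) / 2
        f_mid = npv(mid, cash_flows)
        if f_mid == 0 or (high - low) / 2 < tol:
            return mid
        if f_low * f_mid < 0:
            high = mid  # root lies in [low, mid]
        else:
            low, f_low = mid, f_mid  # root lies in [mid, high]
    return (low + high) / 2


def payback_period(cash_flows: list[float]) -> Optional[float]:
    """Years until cumulative cash flow reaches zero, interpolating linearly
    within the year it happens; None if it never pays back."""
    cumulative = cash_flows[0]
    if cumulative >= 0:
        return 0.0
    for year, cf in enumerate(cash_flows[1:], start=1):
        if cumulative + cf >= 0:
            return year - 1 + (-cumulative) / cf
        cumulative += cf
    return None


if __name__ == "__main__":
    flows = [-1_000_000, 500_000, 500_000, 500_000]  # hypothetical
    print(f"ROI: {simple_roi(sum(flows[1:]), -flows[0]):.2f}")
    print(f"NPV @ 10%: ${npv(0.10, flows):,.0f}")
    print(f"IRR: {irr(flows):.1%}")
    print(f"Payback: {payback_period(flows):.1f} years")
```

Each function answers a different question: ROI is a ratio, NPV a dollar amount, IRR a rate and payback a length of time. Note that many finance teams define ROI as net benefit over cost rather than the gross ratio used here.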

The bottom line: if it's a good deal, the numbers will say so; if you have to stretch the metrics, it's probably not worth the investment.

Related research: Managing geometric data growth in SANs
