Hadoop as History

For most of its (short) existence, the open source Big Data framework called Hadoop has been all about innovative ways to process, store, and eventually analyze huge volumes of multi-structured data. From its inception by Doug Cutting at Yahoo until 2011 or so, most enhancements to the platform focused on new and better ways to accomplish this core function.

Indeed, the entire concept of Hadoop – processing petabytes of unstructured data in parallel across potentially thousands of commodity boxes using an open source file-system and related tools – flies in the face of the traditional database model – relational data only, scale-up not out, proprietary hardware and software.

But this paradigm started to change with the emergence of the first commercial Hadoop distribution vendor, Cloudera, back in 2009, and it’s not hard to understand why. To sell Hadoop successfully to the enterprise, the platform must meet certain levels of, for lack of a better term, enterprise readiness. While Hadoop pioneers, Web giants like Yahoo, Google, Facebook, and LinkedIn, can devote armies of administrators and engineers to keeping Hadoop clusters up and running efficiently, most enterprises cannot. There simply aren’t enough skilled Hadoop practitioners to go around.

This drive to turn Hadoop into an enterprise-grade platform accelerated about this time last year. It was then that MapR, though already a two-year-old company, joined Cloudera with its own commercial Hadoop distribution and a high-profile reseller agreement with EMC Greenplum. Within weeks, Yahoo spun out its internal Hadoop division to form Hortonworks. Later, Greenplum unveiled its own Hadoop distribution, distinct from MapR’s. Meanwhile, a NoSQL database company called DataStax produced its own version of Hadoop for the enterprise. With five-plus commercial Hadoop distribution vendors vying for the top spot in a potentially $50 billion market, the race to build the first truly enterprise-ready Hadoop platform was officially on.

So why is this important to you?

The Hadoop distribution race is important because no technology can achieve mass adoption in the enterprise unless risk-averse IT departments believe it will stand up under pressure, and Hadoop is no exception. Consider the apocryphal saying, “Nobody ever got fired for buying IBM,” but replace IBM with Hadoop. Sounds almost fanciful, no? But making that statement a truism is now the mission of every Hadoop distribution vendor.

What We Mean When We Talk About “Enterprise Ready”

For all its promise, Hadoop has a number of inherent weaknesses that make it a less than ideal platform for supporting mission-critical applications and workloads. These must be overcome for Hadoop to emerge as a truly enterprise-grade platform. The four major weaknesses are Single Points of Failure, Integration with Existing IT Systems, Administration and Ease-of-Use, and Security. While each of these weaknesses has been addressed to greater and lesser extents by both the commercial and open source Hadoop communities, it is still useful to summarize them below:

Single Point of Failure (SPOF)

As originally developed, a single node within a Hadoop cluster is responsible for storing and managing metadata. This node, called the namenode, is essentially responsible for understanding which nodes store particular data and for providing clients this information when a MapReduce job is initiated. Three copies of data are typically stored on three separate nodes in a cluster, and the namenode maintains a directory tree with this information. With just one node responsible for understanding where all data is located, the namenode becomes a SPOF. Should the namenode fail, the cluster itself becomes useless. Once a namenode fails, it can take hours or longer to return the cluster to working order, during which time no jobs can be processed. While a secondary namenode is part of most Hadoop configurations, as originally designed it only periodically replicates and stores data from the namenode, meaning it cannot be relied upon as a failsafe should the namenode go offline.
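The dependency described above can be illustrated with a toy model. This is a minimal sketch, not Hadoop code: the `ToyNameNode` class, the round-robin placement rule, and the `read_block` helper are all hypothetical names invented for illustration. It shows why losing one datanode is survivable (other replicas remain) while losing the namenode is not (nobody knows where any replica lives).

```python
class ToyNameNode:
    """Hypothetical in-memory stand-in for namenode metadata (not Hadoop code)."""

    def __init__(self, datanodes, replication=3):
        self.datanodes = list(datanodes)
        if replication > len(self.datanodes):
            raise ValueError("need at least as many datanodes as replicas")
        self.replication = replication
        self.block_locations = {}  # block id -> datanodes holding a copy
        self._next = 0  # index of the first node for the next placement

    def add_block(self, block_id):
        # Assumed placement rule: `replication` distinct nodes, round-robin.
        n = len(self.datanodes)
        nodes = [self.datanodes[(self._next + i) % n]
                 for i in range(self.replication)]
        self._next = (self._next + 1) % n
        self.block_locations[block_id] = nodes
        return nodes

    def locate(self, block_id):
        return self.block_locations[block_id]


def read_block(namenode, block_id, failed_datanodes=frozenset()):
    """Return the first healthy datanode that holds the block."""
    if namenode is None:
        # The single point of failure: without metadata, the replicas
        # still exist on disk but no client can find them.
        raise RuntimeError("namenode unavailable: block locations unknown")
    for node in namenode.locate(block_id):
        if node not in failed_datanodes:
            return node
    raise RuntimeError("all replicas of the block are on failed nodes")
```

With four datanodes, `read_block(nn, "blk_0", failed_datanodes={"dn1"})` still succeeds because two other replicas exist, whereas `read_block(None, "blk_0")` fails regardless of how many datanodes are healthy.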

Integration with Existing IT Systems

Part of the promise of Big Data is the ability to break down data silos. The idea is to bring together and mine all relevant data sources, regardless of data structure or domain, for actionable insights. Therefore, the ability of any Big Data platform to integrate with legacy and new IT systems – transactional databases, analytic databases, existing applications, file stores and other NoSQL databases – is critical. While the Hadoop Distributed File System can ingest data in virtually any format, moving data back and forth between Hadoop and, for example, existing enterprise databases was not originally a trivial task. There is significantly less value in any Big Data platform that cannot easily integrate with existing enterprise IT systems, lest it become yet another data silo.

Administration and Ease-of-Use

Hadoop speaks its own language – MapReduce. Most database administrators and other data practitioners speak SQL, or Structured Query Language, used by most relational database management systems. The two languages share few commonalities. As such, existing data management professionals require significant training to administer Hadoop clusters. Likewise, most analytic professionals are not versed in MapReduce, meaning the pool of qualified Hadoop analytic pros is limited to a select few.

Lacking the internal expertise to administer and monitor Hadoop clusters or to take advantage of Hadoop for performing Big Data analytics, the wider enterprise market will not adopt Hadoop. While education and training are one answer to the problem, Hadoop vendors must also make their products simpler to administer and use in order to further break down adoption barriers.
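To make the gap between the two "languages" concrete, the sketch below computes the same result twice: page-view counts per page, once in the MapReduce style and once in SQL. It is an illustration only. Hadoop MapReduce jobs are typically written in Java against Hadoop's own APIs, not in single-process Python as here, and the sample records, table name, and function names are all assumptions made up for this example.

```python
import sqlite3
from collections import defaultdict

# Made-up sample data: (visitor, page) pairs.
VISITS = [("alice", "/home"), ("bob", "/home"),
          ("alice", "/cart"), ("carol", "/home")]


def map_phase(record):
    """Map: emit a (key, value) pair for each input record."""
    _visitor, page = record
    yield page, 1


def shuffle(pairs):
    """Shuffle: group all emitted values by key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Reduce: collapse each key's values into one result."""
    return key, sum(values)


def mapreduce_page_counts(records):
    pairs = (pair for record in records for pair in map_phase(record))
    return dict(reduce_phase(k, vs) for k, vs in shuffle(pairs).items())


def sql_page_counts(records):
    """The same aggregation expressed as a single SQL statement."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE visits (visitor TEXT, page TEXT)")
    conn.executemany("INSERT INTO visits VALUES (?, ?)", records)
    rows = conn.execute(
        "SELECT page, COUNT(*) FROM visits GROUP BY page").fetchall()
    conn.close()
    return dict(rows)
```

Both functions return the same mapping for the same input. The SQL version states what result is wanted in one line, while the MapReduce version spells out how to compute it in three phases, which is roughly the retraining burden the section describes.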

Security

No sane mainstream enterprise in the 21st Century will deploy a new technology, particularly a data management technology, absent a robust security layer. The consequences of running afoul of privacy and compliance regulations, succumbing to data breaches from hackers, or even just appearing to take a lackadaisical approach to data security are simply too high. Hadoop in its original incarnation, unfortunately, lacked fine-grained user access controls. Hadoop can run Kerberos, a network authentication protocol, which allows nodes to “prove” their identity before an action is taken. Still, as perceived by most data security professionals, Hadoop has lacked the level of security functionality needed for safe deployment in the enterprise, particularly when clusters are loaded with sensitive data.

These four issues do not constitute a comprehensive list of Hadoop-related enterprise readiness concerns, but they are the issues most commonly raised when companies begin evaluating Hadoop for enterprise deployments. As mentioned, however, commercial Hadoop vendors and the open source Apache community have been working diligently to address each of these issues for the last year or more.

Breakdown of Hadoop Distribution Vendors

On the occasion of Hadoop Summit 2012, now is a good time to review the progress each Hadoop distribution vendor has made in regard to enterprise readiness. Below are overviews of enhancements aimed at improving Hadoop in this regard made by, in alphabetical order, Cloudera, DataStax, EMC Greenplum, Hortonworks, and MapR, over the last two years. These are not comprehensive accounts of each and every feature in each Hadoop distribution, but rather serve to highlight functions and features that address the four concerns listed above.

One of the complicating factors in such an exercise is taking into account the open-source nature of Hadoop. The vendors reviewed below each take different approaches to the Apache Hadoop project, with some contributing all of their code to the community and others keeping certain features and functions proprietary. Code contributed to the open source community by one vendor may be used by another, meaning there is significant overlap among all five vendor distributions. Indeed, some of the features highlighted below for one vendor may also be a part of another vendor’s distribution even if not listed here. Enterprises evaluating these vendors should keep this in mind.

Cloudera

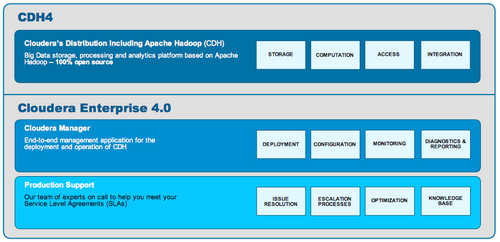

As the first commercial Hadoop vendor on the scene, Cloudera boasts what is generally considered the most mature Hadoop distribution on the market. Cloudera’s Distribution Including Apache Hadoop (CDH) is currently in its fourth iteration, which was released in early June 2012. To aid enterprise adoption, Cloudera has also developed Cloudera Manager, proprietary software for deploying, managing, and securing CDH. While CDH is available for free download and is fully Apache compliant, Cloudera bundles the closed-source Cloudera Manager and support services with CDH as part of its Cloudera Enterprise offering, which it sells under a subscription model.

High Availability: CDH4 includes true high availability (HA) in the form of a secondary namenode that now lives up to its name. It is now available as an automatic, hot fail-over should the primary namenode in a cluster go down. HA was developed in conjunction with and has been contributed back to the Apache project.

Table and Column-Level Access Controls: The most recent version of CDH also introduced highly granular table and column-level access controls for HBase, a popular NoSQL database used in conjunction with Hadoop. Such controls should accelerate the use of HBase to support mission-critical applications. Like HA, these features are open source and available to the Apache community.
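As a rough illustration of what table- and column-level access control means in practice, the sketch below filters a row down to the columns a given user may read. It is not the HBase API: the `ColumnAcl` class, its methods, and the sample users and columns are hypothetical names chosen for this example, and a real system would also cover write permissions and authenticated identities.

```python
class ColumnAcl:
    """Hypothetical read-permission store keyed by user, table, and column."""

    def __init__(self):
        # Each grant is (user, table, column); column None means whole table.
        self._grants = set()

    def grant(self, user, table, column=None):
        self._grants.add((user, table, column))

    def can_read(self, user, table, column):
        return ((user, table, None) in self._grants
                or (user, table, column) in self._grants)

    def filter_row(self, user, table, row):
        """Return only the columns of `row` that `user` is allowed to read."""
        return {col: val for col, val in row.items()
                if self.can_read(user, table, col)}


acl = ColumnAcl()
acl.grant("analyst", "customers", "region")   # column-level grant
acl.grant("auditor", "customers")             # table-level grant

row = {"name": "Ada", "region": "EMEA", "card_number": "0000"}
analyst_view = acl.filter_row("analyst", "customers", row)
auditor_view = acl.filter_row("auditor", "customers", row)
```

Here the analyst sees only the `region` column while the auditor sees the full row, which is the kind of granularity that lets sensitive fields share a table with less sensitive ones.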

More APIs: New sets of APIs allow administrators to integrate Cloudera Manager with existing IT monitoring and management systems. This allows administrators to view Hadoop in the context of the larger enterprise IT infrastructure.

Cloudera has been criticized by some for not making Cloudera Manager open source and contributing its code back to the Apache community. But the company has decided on a go-to-market strategy that leverages an open source core with proprietary management software. Cloudera believes that is the best way to build its business, and there is little chance that it will open source Cloudera Manager in the future.

Cloudera Vital Stats

| Attribute | Detail |
| --- | --- |
| Founded | 2009 by Dr. Amr Awadallah and Jeff Hammerbacher |
| CEO | Mike Olson |
| Headquarters | Palo Alto, Calif. |
| Funding | $81 million over four rounds by Accel Partners, Greylock Partners and Meritech Capital Partners |
| Employees | 250+ |
| Marquee Customers | Groupon, Nokia, Rackspace |
| Web | www.cloudera.com |

DataStax

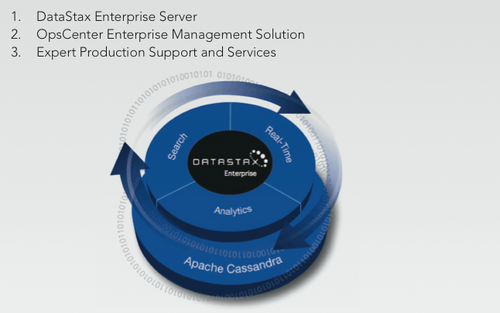

DataStax is best known as the commercial Cassandra company, but the vendor also plays an important role in the Hadoop ecosystem. DataStax swaps out the Hadoop Distributed File System (HDFS) with Cassandra, a NoSQL, column-oriented database designed to support near-real time applications and workloads. Because Hadoop is at heart a batch-oriented system, this gives the framework important new functionality. From an enterprise readiness perspective, DataStax’s Hadoop distribution takes advantage of Cassandra’s decentralized architecture to mitigate the SPOF issue. The company’s OpsCenter Enterprise Edition provides a visual interface to allow administrators to manage deployments, including monitoring the health and status of Hadoop jobs.

Advanced Workload Management: The latest version of the DataStax platform also incorporates Apache Solr, the open source enterprise search platform. With three distinct projects – Hadoop, Cassandra and Solr – integrated into one Big Data platform, advanced workload management capabilities are critical. OpsCenter allows administrators to replicate data between the three as well as manage node assignment.

Workload Isolation: The DataStax platform also boasts workload isolation capabilities to ensure that real-time data workloads do not compete with analytic workloads for compute resources such as memory, CPU, or disk space.

What DataStax does not offer are visual interfaces, easy-to-use tools or other aids to make it easier for admins and Data Scientists to write MapReduce jobs or Hive and Pig routines outside of currently available open source methods. Also, few DataStax customers deploy the platform for strictly Hadoop jobs, but rather look to the vendor when they are in need of mixed workload capabilities.

DataStax Vital Stats

| Attribute | Detail |
| --- | --- |
| Founded | 2010 by Jonathan Ellis and Matt Pfeil |
| CEO | Billy Bosworth |
| Headquarters | San Mateo, Calif. |
| Funding | $13.7 million over two rounds by Lightspeed Venture Partners, Sequoia Capital and Crosslink Capital |
| Employees | 45+ |
| Marquee Customers | Netflix, Disney and Cisco |
| Web | www.datastax.com |

EMC Greenplum

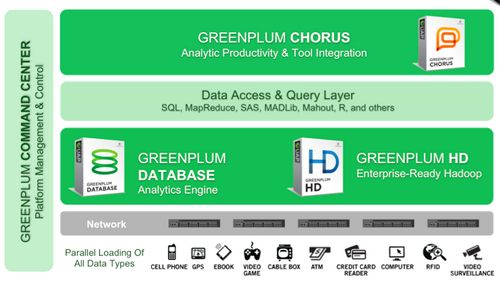

In addition to reselling MapR’s M5 Hadoop distribution, EMC Greenplum now offers its own Apache-compatible Hadoop distribution known as Greenplum HD. The distribution itself does not significantly distinguish Greenplum HD from its competitors, however. Rather, Greenplum offers customers an enterprise-grade Hadoop “wrapper,” leveraging EMC’s enterprise storage technology and new collaborative analytic workspace called Chorus. EMC also partners with Cisco to deliver the high-performance M5 Hadoop distribution as an optimized appliance.

Isilon and Chorus: Isilon is EMC’s scale-out NAS technology, which it acquired in 2011. When Isilon is integrated with Greenplum HD, administrators can take advantage of its existing enterprise-grade security features with Hadoop. The integration also removes the SPOF issue and decouples storage from compute, allowing administrators to scale one up or down independently of the other. Chorus, as mentioned, is a collaborative workspace that sits on top of Greenplum HD and allows Data Scientists to annotate their work, share notes, and otherwise build off one another’s analyses.

1,000 Node Hadoop Workbench: EMC Greenplum deployed a 1,000 node Hadoop cluster storing 24 petabytes of data for Hadoop practitioners to experiment with. The Hadoop workbench, as EMC calls it, provides enterprises with a dedicated and secure area to test Hadoop deployments and applications before rolling them out in production.

Reference Configuration via Cisco UCS: Greenplum also offers a Hadoop appliance, bundling together the MapR M5 Hadoop distribution with the Cisco Unified Computing System reference architecture. This option is generally aimed at existing, sophisticated Hadoop customers.

EMC Greenplum’s approach to Hadoop is to surround it with a slew of complementary tools and technologies – some open source but most closed source – to deliver a robust enterprise-grade platform. This includes tight integration with the Greenplum analytic database and Chorus in the form of the Greenplum Unified Analytic Appliance. The result is one of the most comprehensive Big Data Analytics platforms on the market. The platform is very much EMC-focused, however, and comes with a higher price tag than most competing Hadoop offerings.

Greenplum Vital Stats

| Attribute | Detail |
| --- | --- |
| Founded | 2003 by Scott Yara and Luke Lonergan |
| CEO | Bill Cook |
| Headquarters | San Mateo, Calif. |
| Funding | $46 million over three rounds by Sierra Ventures, Meritech Capital Partners, SAP Ventures, others; Acquired by EMC in 2010 |
| Employees | 300+ |
| Marquee Customers | NYSE Euronext, Fox Interactive Media, Orbitz |
| Web | www.greenplum.com |

Hortonworks

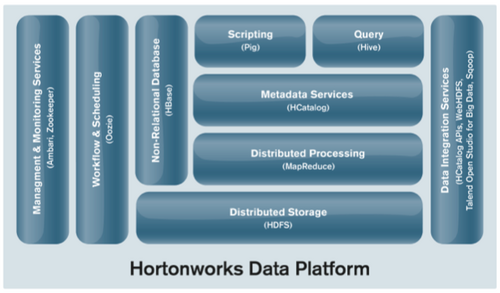

Released on June 12, 2012, the Hortonworks Data Platform is a 100% open-source enterprise version of the Apache Hadoop distribution. Spun out of Yahoo last year, Hortonworks is trying to replicate the Red Hat playbook: offering free, open source software monetized with for-pay technical support services. Still, Hortonworks decided the vanilla Apache Hadoop distribution needed improvement, hence its development of HDP. Highlights of HDP aimed at enterprise readiness include:

HCatalog: HCatalog is an Apache-based table and storage management service developed largely by Hortonworks that is designed to enable administrators to delineate the location and structure of data within Hadoop. The goal is to make it simple to view and access all Hadoop-based data regardless of which program or application is being used. HCat also supports standard table formats to enable integration with relational databases and data warehouses.

Ambari: Apache Ambari is Hortonworks' answer to Cloudera Manager. It is a Hadoop monitoring and management console that includes data visualization tools for tracking Hadoop cluster health. It also includes a REST interface for defining and manipulating Hadoop clusters, as well as the ability to upgrade existing Hadoop clusters to newer versions of the software without losing existing data.

Talend Open Studio: Hortonworks formed a partnership with Talend, an open-source data integration and master data management vendor, to embed its functionality into HDP. It allows administrators and others to integrate data from various sources inside Hadoop via an intuitive GUI, eliminating the need to write complex code.

Being 100% open source, HDP eliminates the risk of vendor lock-in posed, to greater and lesser extents, by competing Hadoop platforms. That said, Hortonworks’ business model – relying on technical support revenue alone – has yet to prove itself effective in the context of Big Data.

Hortonworks Vital Stats

| Attribute | Detail |
| --- | --- |
| Founded | 2011 by Arun Murthy, Eric Baldeschwieler and six others |
| CEO | Rob Bearden |
| Headquarters | Sunnyvale, Calif. |
| Funding | $20 million+ in one round by Benchmark Capital, Yahoo |
| Employees | 55+ |
| Marquee Customers | Microsoft, Yahoo |
| Web | www.hortonworks.com |

MapR

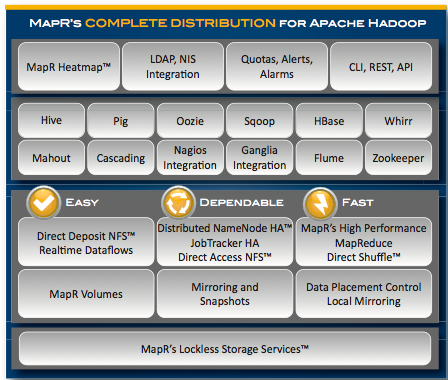

MapR takes what is probably the most controversial approach to Hadoop of any of these five vendors. The company replaces standard HDFS with its own proprietary storage services layer that enables random read-writes and allows users to mount the cluster on NFS. MapR has decided to keep the source code of the core of its Hadoop distribution to itself, but it is 100% API compatible with Apache Hadoop.

Direct Access NFS: MapR’s enterprise Hadoop distribution, M5, uses Direct Access NFS, which lets Web- and file-based applications write data directly to Hadoop. This enables users to easily get data into and out of M5 without the need for custom data connectors. It is 100% Apache compatible at the API layer.

Security Enhanced Linux: MapR’s M5 supports Security Enhanced Linux, a set of access control security policies, as well as all active directory methods. It also employs snapshot and data mirroring capabilities in addition to a distributed namenode architecture to ensure “five nines” of continuous uptime.

MapR ExpressLane: This capability allows the cluster to identify small MapReduce jobs and give them priority over larger jobs. This prevents smaller jobs, namely ad hoc queries, from getting stuck behind larger, lumbering MapReduce jobs that could take minutes, hours, or longer.
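The general idea of letting small jobs jump ahead of large ones can be sketched with a two-lane priority queue. This is not MapR’s implementation, whose internals are not described here. The `SmallJobFirstQueue` class, the task-count threshold of 10, and the job names are all assumptions for illustration, and a real scheduler would run jobs concurrently rather than hand them out one at a time.

```python
import heapq
from itertools import count


class SmallJobFirstQueue:
    """Hypothetical queue that serves small jobs before large ones."""

    def __init__(self, small_job_max_tasks=10):
        # Assumed rule: a job with at most this many tasks counts as small.
        self.small_job_max_tasks = small_job_max_tasks
        self._heap = []
        self._arrival = count()  # tie-breaker that preserves FIFO order

    def submit(self, name, num_tasks):
        lane = 0 if num_tasks <= self.small_job_max_tasks else 1
        # Tuples sort by lane first, then by arrival order within a lane.
        heapq.heappush(self._heap, (lane, next(self._arrival), name))

    def next_job(self):
        """Return the next job name, or None when the queue is empty."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]


queue = SmallJobFirstQueue()
queue.submit("nightly-etl", num_tasks=5000)
queue.submit("adhoc-query", num_tasks=3)
queue.submit("weekly-report", num_tasks=800)
queue.submit("quick-lookup", num_tasks=1)

run_order = [queue.next_job() for _ in range(4)]
```

Although the two large jobs were submitted first, the two small jobs come out of the queue ahead of them, and jobs within each lane keep their submission order.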

As mentioned, MapR’s approach has caused some controversy. M5 has been dubbed a Hadoop “fork” by some in the community because its storage services code is closed. However, and again as mentioned before, M5 is API compatible with Apache Hadoop, meaning users can fairly easily move from MapR back to Apache Hadoop if desired. In any event, as a result of its approach, MapR has developed a high-performance Hadoop distribution that leapfrogged many of the weaknesses that dogged the Apache community, most notably the SPOF issue. It is a more efficient Hadoop distribution than most, though it is also more expensive from a price-per-node perspective. And as the Apache Hadoop distribution catches up with MapR, the company will come under increasing pressure to prove its value proposition.

MapR Vital Stats

| Attribute | Detail |
| --- | --- |
| Founded | 2009 by John Schroeder and M.C. Srivas |
| CEO | John Schroeder |
| Headquarters | San Jose, Calif. |
| Funding | $20 million+ over two rounds by Redpoint Ventures, Lightspeed Venture Partners and NEA |
| Employees | 45+ |
| Marquee Customers | CX, comScore |
| Web | www.mapr.com |

Weighing Your Hadoop Options

Action Item: Whether a technology is enterprise ready or not is something of a subjective proposition. What is enterprise ready for one organization may not be for another. While Hadoop has come a very long way in a relatively short period of time from an enterprise readiness perspective, it is clearly still an emerging technology. The various distribution vendors have increased Hadoop’s management, security and performance capabilities with their respective distributions, but there are still no industry-wide standards accepted by all. As maturation continues, expect a set of standards to emerge, as we’ve seen in other technology areas. In the meantime, enterprises evaluating their Hadoop distribution options should weigh the features and functions of the different vendors against their particular needs in both the short and long term.

Footnotes: Special thanks to Cloudera, DataStax, EMC Greenplum, Hortonworks, MapR and the Apache Hadoop community for their help in compiling this report.