DDN has formally announced its hScaler Apache Hadoop appliance. The appliance is well thought through and builds on DDN's experience in providing storage for High Performance Computing (HPC). As Big Data moves from trial to production, its challenges shift from developmental to operational, following the same trend as the preceding HPC wave.

In high-compute environments with large numbers of processors, the key challenge is to keep data moving into and out of the processing units. It is essentially a just-in-time workflow problem, much like managing a manufacturing line: the right data must be in the right processor at the right time. The faster the IO and the higher its quality (e.g., fewer retries), the less time processors spend waiting for IO, the greater the processor efficiency, and the fewer processors are required. The fewer the processors required, the lower the parallel scaling overhead.
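The relationship between IO wait and processor count can be sketched with a deliberately simple model. It assumes (this is an illustration, not a Wikibon or DDN formula) that a node waiting on IO for a fraction `io_wait_fraction` of its time does useful work for the remainder, and that work divides evenly across nodes with no scaling overhead. The function name and the sample values are hypothetical.

```python
import math


def nodes_required(ideal_nodes: int, io_wait_fraction: float) -> int:
    """Nodes needed to match the throughput of `ideal_nodes` that never wait on IO.

    Assumption: each node is productive for (1 - io_wait_fraction) of the time,
    and parallel scaling overhead is ignored.
    """
    if ideal_nodes < 0:
        raise ValueError("ideal_nodes must be non-negative")
    if not 0.0 <= io_wait_fraction < 1.0:
        raise ValueError("io_wait_fraction must be in [0, 1)")
    efficiency = 1.0 - io_wait_fraction
    return math.ceil(ideal_nodes / efficiency)


if __name__ == "__main__":
    # Hypothetical IO wait fractions, chosen only to show the trend.
    for wait in (0.0, 0.1, 0.25, 0.5):
        print(f"IO wait {wait:.0%}: {nodes_required(100, wait)} nodes")
```

In a real cluster the parallel scaling overhead grows with node count, so the true penalty for IO wait would be somewhat larger than this model suggests.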

The hScaler combines the following components:

- DDN 12K SFA Appliance

- Integrated SFA Support for the Hadoop HDFS file system

- Compute Nodes

- InfiniBand RDMA Network

- Integrated Management of System

- Integrated Management of DDN SFA Platform, Compute & Networking and Analytics Toolkit

- Cluster Management

IO latency and IO latency variance are reduced by:

- A reduction in protocol overhead by integrating the HDFS file system within the storage system;

- Elimination of IO retries (one of the worst disruptions in a highly parallel environment, because a retry usually causes a restart of the pipeline). Retries are eliminated through in-flight reconstruction when IO errors occur;

- High speed InfiniBand interconnect;

- Single point of control for the management system;

- RDMA communication between all the Storage nodes.
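Why retries hurt so much in a highly parallel environment can be illustrated with a small probability sketch. It assumes, hypothetically, that each in-flight IO independently needs a retry with probability `p_retry_per_io`; the chance that at least one of `concurrent_ios` IOs retries is then 1 - (1 - p)^n. The function name and the sample rate below are illustrative and are not DDN or Wikibon figures.

```python
def p_any_retry(p_retry_per_io: float, concurrent_ios: int) -> float:
    """Probability that at least one of `concurrent_ios` IOs needs a retry.

    Assumption: retries are independent events with the same probability.
    """
    if not 0.0 <= p_retry_per_io <= 1.0:
        raise ValueError("p_retry_per_io must be in [0, 1]")
    if concurrent_ios < 0:
        raise ValueError("concurrent_ios must be non-negative")
    return 1.0 - (1.0 - p_retry_per_io) ** concurrent_ios


if __name__ == "__main__":
    # Hypothetical per-IO retry rate of 1 in 10,000.
    for n in (1, 100, 1_000, 10_000):
        print(f"{n:>6} concurrent IOs: {p_any_retry(1e-4, n):.2%} chance of a retry")
```

Under this assumption, even a small per-IO retry rate becomes a likely event as the number of concurrent IOs grows, which is why removing retries matters more at larger node counts.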

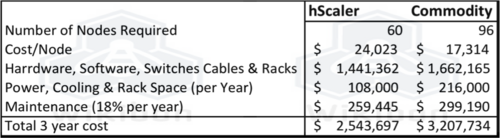

In general, the higher the number of compute nodes, the greater the saving in nodes. Wikibon has been writing about the potential for reducing the number of cores required to run database systems by improving IO latency and latency variance. This technique is also well understood in HPC. A good rule of thumb is a reduction from 192 nodes to 120 nodes; put the other way, traditional commodity servers and storage would require about 60% more nodes than the appliance. For traditional systems with over two hundred nodes, commodity servers would require twice as many nodes.
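The rule-of-thumb figures can be checked with a few lines of arithmetic. The sketch below uses only the node counts quoted in the text (192 and 120); the function names are hypothetical. The 240-versus-120 pair is simply an example of the "twice as many" case and is not a figure from the article.

```python
def extra_nodes_pct(commodity_nodes: int, appliance_nodes: int) -> float:
    """Additional nodes a commodity cluster needs, as a percentage of the appliance count."""
    return (commodity_nodes / appliance_nodes - 1.0) * 100.0


def node_saving_pct(commodity_nodes: int, appliance_nodes: int) -> float:
    """Nodes saved by the appliance, as a percentage of the commodity count."""
    return (1.0 - appliance_nodes / commodity_nodes) * 100.0


if __name__ == "__main__":
    # Figures from the text: 192 commodity nodes versus 120 appliance nodes.
    print(f"Extra commodity nodes: {extra_nodes_pct(192, 120):.1f}%")
    print(f"Node saving:           {node_saving_pct(192, 120):.1f}%")
    # Illustrative "twice as many nodes" case for larger systems.
    print(f"Extra commodity nodes: {extra_nodes_pct(240, 120):.1f}%")
    print(f"Node saving:           {node_saving_pct(240, 120):.1f}%")
```

Note that the same pair of numbers reads as "60% more nodes" from the appliance side and as a 37.5% saving from the commodity side, so it matters which baseline a quoted percentage uses.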

The cost table uses Wikibon estimates and does not take into account "soft" savings in people and flexibility. The potential for ease-of-use savings is significant, but more important are the improved ability to guarantee job completion and the ability to iterate the set-up quickly for new versions of software.

The disadvantages of hScaler (or any appliance) are the higher cost of the storage over commodity DAS storage, the higher cost of servers, and some loss of configuration flexibility. For any specific problem, it is usually possible to build a cheaper commodity solution; the problem is it can only be done after the system is installed, never beforehand!

For larger internet customers, DDN also offers a reference architecture built around the DDN 12K storage. For the future, creating an offering that will fit the OpenStack or Compute Foundation templates

Action Item: CTOs & CIOs moving from trial to production Hadoop should take a hard look at the DDN hScaler. In Wikibon's opinion, this is the best-designed Hadoop appliance available.

Footnotes: Watch the video of David Floyer's Breaking Analysis on DDN with SiliconANGLE.