Introduction

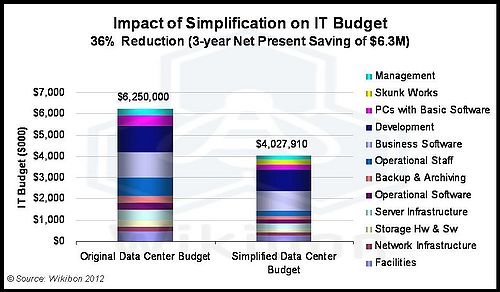

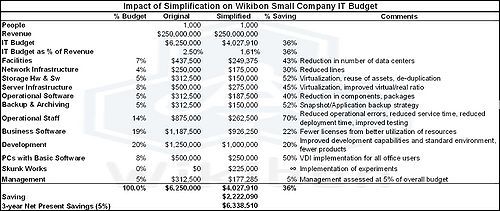

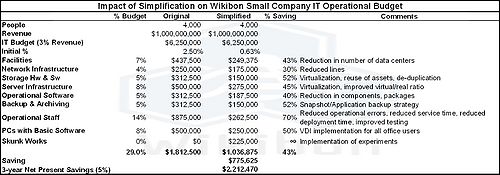

Data centers are very complex. Historically, IT departments have had the responsibility for integrating products from a large number of vendors to meet the IT requirements of the business. This case study shows that by adopting an extreme simplification strategy, the IT budget could be reduced by 36%, with a net present saving of $6.3 million over 3 years. Figure 1 shows a summary of the overall savings from simplification on the IT budget. The detailed numbers are presented in the footnotes below.

Understanding Data Center Complexity

To understand the overall complexity, it is useful to look at one part of the data center and analyse the number of components and the interactions between them. Taking the storage component of the data center as an example, the traditional way of managing storage has been through a succession of processes built on different hardware and software, pressed together with procedures and people power. The components include some or all of:

- Backup servers (virtual and/or physical);

- Backup software;

- Processes to quiesce each system and ensure a consistent version of data;

- De-duplication systems (hardware and software);

- Local hardware for disk recovery with its management system;

- Methods for transferring data outside of the data center to meet RPO business requirements (tape, disk copy);

- Remote hardware for disaster recovery with its management system;

- Remote hardware for archiving with its management system;

- Systems for ensuring data security;

- Systems for ensuring data privacy;

- Systems for creating test systems from backup data;

- Systems for testing local recovery and disaster recovery;

- Overall storage capacity management;

- Overall storage tiering and caching to meet different application and business requirements;

- Overall optimization of storage capacity and the space, power and cooling costs of storage.

Within this one section there are 15 major components that interact with each other. Since any pair of components can interact, that is 105 interaction points (15 × 14 ÷ 2) that have to be assessed and managed. By reducing the number of components, the cost of assessment and management can be significantly reduced. This approach needs to be extended to all parts of the data center.
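The interaction count above is the number of distinct pairs among n components, n × (n − 1) ÷ 2. The following is a minimal sketch of that arithmetic; the function name and the reduced component counts (10 and 5) are illustrative assumptions, not figures from the case study.

```python
def interaction_points(n: int) -> int:
    """Number of distinct pairs among n components: n * (n - 1) / 2."""
    if n < 0:
        raise ValueError("component count must be non-negative")
    return n * (n - 1) // 2


# 15 storage components, as counted in the list above.
print(interaction_points(15))  # 105

# Hypothetical reduced counts, to show how quickly pairwise interactions fall.
for n in (15, 10, 5):
    print(n, "components ->", interaction_points(n), "interaction points")
```

Because the count grows with the square of the number of components, removing a third of the components (15 to 10) removes more than half of the interaction points (105 to 45).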

Virtualization Changes the Rules

Virtualization has significantly improved server utilization and has hidden many of the complexities of dealing with physical systems from the operating systems and applications. The business case is crystal clear and incontrovertible. Virtualization has significantly lowered the CAPEX and OPEX costs of running a data center.

Most technologies come with some costs and disadvantages. Server virtualization has some negative impacts, particularly on storage performance and storage management. Virtualization inserts an additional layer, a hypervisor, between the operating system (OS) and the hardware. This layer abstracts the hardware and greatly simplifies the OS software environment. However, the hypervisor also significantly exacerbates the complexity of the storage management system described above, and creates:

- An IO tax of about 25%, because all the IO goes through the hypervisor and appears randomized to the storage systems.

- Increased elapsed times for running backup systems, because the servers are now running at much higher levels of utilization.

- An additional locus of control and management layer, with the requirement to manage a virtualization layer (e.g., a VMware administrator) in addition to the existing server administrator, storage administrator, network administrator, and database administrator.

Additional virtualization software has been developed (e.g., Control Center for VMware, Virtual Control Center for Microsoft's Hyper-V) together with APIs that help hypervisors and storage work better together. This has achieved some success. However, Wikibon has consistently postulated that in order for IT to gain the full benefits of server virtualization, completely different approaches to storage management and software are required. Wikibon has also postulated that change would appear first in smaller data centers, because the friction of change increases with the size of the data center. Having introduced the complexity of virtualization, the key is to find many more components that can be eliminated to provide an overall reduction in complexity and cost.

Case Study: Strategy for Reducing Complexity

The Wikibon research process is constantly investigating leading examples of IT practice in general. It is a powerful way of investigating the real-world result of new approaches. In the case study presented, the solution to the original backup problem is achieved by fundamentally changing the way that storage as a whole is managed.

The company at the center of this case study manages private house building projects in the US, and employs about 1,000 people. In 2008 the global housing business went into deep recession, and the company was faced with the imperative to reduce IT costs in line with the company costs. The CIO and his operations manager set about completely restructuring their approach to managing IT, with the constraint that the process could not interfere with the parallel requirement that IT continue to assist with the overall improvement in productivity of the IT staff.

The principles that drove this project to success were simple:

- Constant experimentation with small projects:

- A proof-of-concept was run for all of the ideas for streamlining IT. Some worked, and many did not. Any solution had to work in the real world, with the actual skill sets of the IT employees, and with the agreement of the business.

- Despite the economic downturn, IT started to invest in these experimental projects;

- The project manager should have a history of project success and of killing unsuccessful projects early;

- Virtualization of everything:

- All the key components of IT were virtualized (i.e., servers, storage, and desktops). This fundamental process allowed software and processes to be separated from specific hardware constraints;

- Ruthless simplification of all the components and sub-components in the data center:

- The IT management team believed that complexity, overhead, and cost came from the number of individual parts that had to be managed. Reducing this complexity by reducing the number of components had a greater than linear impact on overall cost reduction. Equally important was the reduction in the sub-components. For example, switching on features in software or hardware increased complexity, and unless it reduced a moving part somewhere else (e.g., removed a management requirement), additional features should not be used. When evaluating a product, importance was placed on its ability to perform the core function, and additional function was usually regarded as a negative unless it could be completely turned off and hidden.

- Reducing the number of data centers from four to two was part of the simplification process.

- Ruthless reduction in the number of software and hardware vendors:

- Simplification comes from reducing the number of vendors, and taking as much of a stack as possible from a single vendor.

- In evaluating a vendor, the highest functionality should not be the criterion unless the functionality directly leads to simplification.

Case Study: Current Infrastructure Status

The current infrastructure reflects the choices made as a result of the experimental projects and the strategic simplification initiative. The main changes and components are:

- Application Software Strategy

- Microsoft was chosen as a strategic partner, and IT is a Microsoft Enterprise customer with Microsoft premier service. The CRM is Microsoft Dynamics, and the other major applications are BizTalk, IIS, SharePoint, Exchange, and SQL.

- The only major exception is an industry-specific ERP application.

- Server Virtualization

- The hypervisor is Hyper-V, chosen because the operating system was Microsoft Windows Server, and Microsoft Virtual Desktop allowed a single vendor.

- The underlying philosophy is that there should be one company with control of the hypervisor and operating system.

- The hypervisor history started with VMware, which was very good but expensive, with Citrix used for desktop virtualization. As soon as Citrix bought XenSource, IT migrated to Xen. As soon as Microsoft brought out Windows Server 2008 R2, IT moved to Hyper-V with a single supplier for desktop, system, and operating system. “We are very happy; it works.”

- HP blades were selected because of the virtualization of the IO provided by Virtual Connect;

- The virtual-to-real ratio is about 10:1 on most HP blades, 20:1 on newer blades. There are 190 virtual servers running on 20 physical servers.

- This company is not using Microsoft System Center Virtual Machine Manager, as it is not yet ready for prime time. The next layer of technology is not justified by the number of servers installed, and it is easier to manage manually. “We do not have a requirement to spin up 50 Web servers at peak load; there is too much function.” It is easier to manage automation with a little scripting. IT could look at it later if the product improves and becomes more integrated.

- Storage Virtualization

- The raw capacity is about 200TB, with everything running on NetApp storage controllers;

- De-duplication is switched on for all storage volumes except those used by the Exchange, SQL and ERP applications. These are IO latency-sensitive applications, and the benefits were assessed not to outweigh the risk of an impact on performance;

- De-duplication reduced the storage capacity required by about 40% (the number of IOs is not impacted);

- IO performance was enhanced by the use of NetApp FlashCache of 256GB in the storage controller.

- Backup Strategy

- The underlying strategy is that backups should be application focused, but that the storage system should provide the core functionality. The NetApp storage system and backup functionality was chosen.

- Backups used NetApp's SnapReserve snapshot technology together with the SnapManager for a particular Microsoft product (e.g., SnapManager for Microsoft Exchange). This allows a consistent copy to be made at a point in time. Only the incremental changed data is required, and this is copied to the second system at the backup site using SnapVault;

- The management of the snap copies and restore is performed by SnapRestore.

Case Study: Financial Impact

To assess the financial impact, Wikibon constructed a model to evaluate the business benefits of the simplification strategy. To accurately assess the financials, a return on assets (ROA) model was developed. The primary objective of an ROA model is to provide a budget view and to understand how investments impact currently installed assets and how future rolling investments will affect costs and business value over time. In this case study, it models a real environment and takes into account the fact that there are assets on the books with value that will not be de-installed until their useful life has expired. An ROA model allows clients to compare a strategy of installing cheaper infrastructure with one that may appear more expensive initially but leverages the value of existing assets over time. An ROA model can differentiate between investments that have no impact on installed assets and those that do. In this case, the ROA model assumes a mix of installed arrays that vary by age. Each system has different initial costs, OPEX, power/cooling, staffing, etc., and over time specific assets are replaced by new assets.
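The discounting step behind a multi-year "net present saving" can be sketched as follows. This is only an illustration of the general technique, not the Wikibon ROA model itself: the yearly budgets, the 5% discount rate, and the function name are all hypothetical assumptions and are not taken from the case study.

```python
def net_present_value(cash_flows, discount_rate):
    """Discount end-of-year cash flows (year 1, 2, ...) back to today."""
    return sum(
        cash_flow / (1 + discount_rate) ** year
        for year, cash_flow in enumerate(cash_flows, start=1)
    )


# Hypothetical figures in $ millions per year, NOT from the case study.
baseline_budget = [5.0, 5.0, 5.0]      # assumed spend without simplification
simplified_budget = [3.5, 3.0, 3.0]    # assumed spend with simplification
discount_rate = 0.05                   # assumed cost of capital

# Yearly saving is the difference between the two budget lines.
yearly_savings = [b - s for b, s in zip(baseline_budget, simplified_budget)]
net_present_saving = net_present_value(yearly_savings, discount_rate)

print(f"Undiscounted saving: ${sum(yearly_savings):.2f}M")
print(f"Net present saving:  ${net_present_saving:.2f}M")
```

A full ROA model would additionally track each installed asset's age, remaining useful life, and operating cost, and would replace assets only as they expire; the sketch above covers only how yearly savings are rolled up into a single present-value figure.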

The details of the model are shown in the Footnotes below. The main conclusions from the analysis of the simplification approach are:

- The overall cost of IT was reduced by 36%

- The number of primary vendors was reduced by 50%

- The number of IT Sites was reduced by 50%, with a 43% impact on total cost

- The IT operational budget was reduced by 43%

- Storage Hardware and Software was reduced by 52%

- Backup and Archiving costs were reduced by 52%

- The IT Operational Staff were reduced by 70%

- A new budget of $225K was introduced for "skunk works"

- Recovery Capability significantly improved for both applications data (e.g., mail-box) and system recovery

- Disaster Recovery implemented with aggressive RPO and RTO targets

- Ability to meet industry and internal audit and compliance requirements significantly enhanced

- Ability to meet security requirements significantly improved

Conclusions

The success of the "extreme simplification" project stems from a reduction in the number of components and vendors in the data center. The converged infrastructure approach leads to fewer components, with less work needed to maintain the infrastructure.

Taking on extreme simplification is in essence taking on a converged infrastructure project. The major challenge is choosing which components to bundle from a supplier.

In this case study, the main elements of the converged infrastructure simplification strategy included:

- Selecting Microsoft as the primary software vendor for the hypervisor, operating system, middleware, desktop virtualization and office applications;

- Selecting NetApp as the primary storage vendor, as the primary backup and archiving software provider, and as the storage virtualization front end for existing external storage;

- Selecting HP as the primary blades server vendor, together with IO virtualization;

- Taking only products that work 100%, and waiting if necessary until a product is working before implementing it;

- Getting rid of components whenever possible, and not implementing any product or feature that did not allow something else to be removed;

- Establishing the confidence of the business by measuring success;

- Running constant small experiments with new products and ideas.

The net result is impressive: a $6 million budget saving over three years, a 70% reduction in operational staff, and a company positioned to grow with a lower IT cost structure when the housing market eventually rebounds.

Action Item

Choose logical converged infrastructure pieces that the organization can manage to fit together and maintain. Starting down this course will also mandate simplification of the IT department in line with the converged infrastructure choices made.

Footnotes: