

Executive Summary

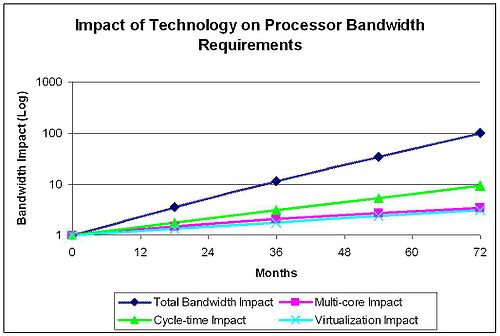

A number of technology trends have important implications for the total Input/Output (I/O) bandwidth that will be required to keep evolving servers busy. These include:

- Server cycle-time impact,

- Server multi-core & L3 cache impact, and,

- Server virtualization impact.

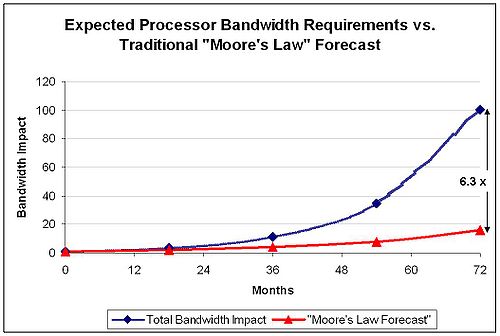

The total effect of these technologies is multiplicative. The result of this analysis is that in 72 months servers will require one hundred times (100x) the I/O bandwidth that today’s servers require. The I/O will be more random and more varied than on today’s servers, and the space and power constraints on I/O adapter technologies will be severe. A forecast based on historical trends (performance doubling every eighteen months) would indicate that sixteen times (16x) the I/O bandwidth would be required. This analysis shows that I/O bandwidth will very probably reach about six times (6x) the level that historical trends predict.

As a result of this increase in bandwidth and reduction in space, I/O adapters will have to become multi-protocol, and at the high end there will be an increasing number of virtual I/O implementations as part of the blade or rack infrastructure. Ethernet will be the predominant transport but not the only one; the predominant storage protocols will be iSCSI, FCoE and NAS variants.

Cycle-time Impact

Historically, processor speeds have been doubling every eighteen (18) months under Moore’s law. For this analysis Wikibon has taken the more conservative view that increasing the number of cores per processor will dampen the improvement in cycle time. The assumption used in the analysis is that processor cycle-time performance in servers will improve by 75% every 18 months. In 72 months, the expected impact of cycle time would be about nine times the I/O bandwidth requirement of today’s servers.
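
The nine-times figure follows from compounding the 75% assumption over the four 18-month periods in 72 months. A minimal Python sketch of that arithmetic is shown here; the function name `compound_growth` is illustrative and not part of the Wikibon model.

```python
def compound_growth(rate_per_period: float, months: int, period_months: int = 18) -> float:
    """Return the growth multiple after `months`, compounding once per period."""
    periods = months / period_months
    return (1.0 + rate_per_period) ** periods


# Assumption from the text: 75% improvement every 18 months, over 72 months.
cycle_time_factor = compound_growth(0.75, 72)
print(f"Cycle-time factor after 72 months: {cycle_time_factor:.1f}x")
```

The result is roughly 9.4, which the analysis rounds to "about nine times".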

Multi-core & L3 Cache Impact

Cores have been and are forecast to be doubling every 18 months. Six-core servers are shipping in volume, and 12-core processors will probably be shipping in 2011. An additional improvement in performance is the introduction of a shared level 3 cache (L3) which is included in the chip and is read/write accessible by all of the cores.

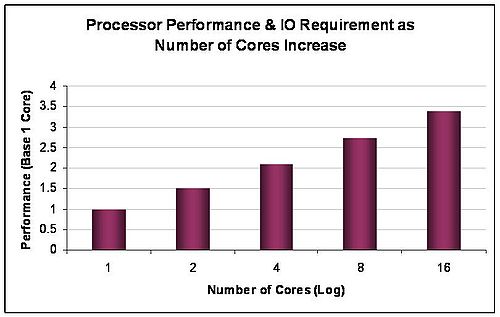

However, the impact of multiple cores on performance and total bandwidth is not linear. As a conservative rule of thumb for commercial servers, each additional processor adds half the throughput of the previous processor. For some processors used for high-performance computing, the throughput impact could be much higher. Figure 1 shows the impact on bandwidth of multi-core processors and L3 caches based on the assumptions above. In 72 months, the expected impact of multi-core and L3 cache is about 3.3 times the I/O bandwidth requirements of today’s servers.

Virtualization Impact

Virtualization is increasing the utilization of processors very significantly. Most non-virtualized servers run at a utilization of less than 10%, and to be conservative Wikibon uses a 15% average figure. With virtualization, the utilization increases to about 50% (VMware would of course claim a lot more, and sometimes that will be true), and with it the I/O bandwidth requirements. Wikibon assumes that the share of new servers adopting virtualization increases by about 10% every 18 months. The average increase in bandwidth over 18 months would then be given by the formula:

10% x 50% / 15% = 33%.

In 72 months, the expected impact of virtualization is just over three times the I/O bandwidth requirements of today’s servers.
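
As a rough check of this step, the per-period increase and its compounded effect can be reproduced in a few lines of Python. The constant names are illustrative; the values are the assumptions stated in the text (15% and 50% utilization, 10% additional adoption per 18 months).

```python
NON_VIRTUALIZED_UTILIZATION = 0.15   # Wikibon's conservative average
VIRTUALIZED_UTILIZATION = 0.50       # assumed utilization with virtualization
ADOPTION_INCREASE_PER_PERIOD = 0.10  # extra share of new servers virtualized per 18 months
PERIODS = 72 // 18                   # four 18-month periods

# Bandwidth growth per 18 months: 10% x 50% / 15%
growth_per_period = (
    ADOPTION_INCREASE_PER_PERIOD * VIRTUALIZED_UTILIZATION / NON_VIRTUALIZED_UTILIZATION
)

# Compound that growth over the full 72 months.
virtualization_factor = (1 + growth_per_period) ** PERIODS

print(f"Growth per 18 months: {growth_per_period:.0%}")
print(f"Virtualization factor after 72 months: {virtualization_factor:.2f}x")
```

Compounding roughly 33% four times gives a factor slightly above 3, in line with the "just over three times" estimate.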

In addition to the I/O bandwidth increase, virtualization will continue to make the I/O more random and more varied. This is because virtualization is swapping from one workload to another, which will often have different I/O protocol requirements.

Total Impact on Server I/O Bandwidth

The impacts of cycle-time, multi-core and virtualization are multiplicative (the trade-off between cycle-time and multi-core has already been included in the assumptions above). The overall impact on I/O bandwidth is shown in figure 2.

Conclusions

The traditional forecast, which assumes that the I/O requirement of servers tracks Moore's Law (doubling every 18 months), would lead to the conclusion that I/O bandwidth will increase sixteen times (16x) over the next 72 months. Figure 3 shows the Wikibon analysis compared with the "Moore's Law" forecast. The Wikibon model yields about six times (6x) the I/O bandwidth of the Moore's Law forecast. Traditional ways of estimating I/O bandwidth for servers should be significantly revised.
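
The overall comparison can be sketched by multiplying the three factors and dividing by the Moore's Law forecast. This is a minimal illustration, not the Wikibon model itself: the variable names are hypothetical, and the multi-core and L3 cache factor of 3.3 is taken directly from the text rather than derived.

```python
PERIODS = 72 // 18  # four 18-month periods

# Factors stated or implied by the assumptions in the text.
cycle_time = 1.75 ** PERIODS                               # 75% per 18 months
multi_core_l3 = 3.3                                        # quoted figure, not derived here
virtualization = (1 + 0.10 * 0.50 / 0.15) ** PERIODS       # about 33% per 18 months

wikibon_total = cycle_time * multi_core_l3 * virtualization
moore_total = 2 ** PERIODS                                 # doubling every 18 months

print(f"Wikibon model:      {wikibon_total:.0f}x")
print(f"Moore's Law trend:  {moore_total}x")
print(f"Ratio:              {wikibon_total / moore_total:.1f}x")
```

The product comes to roughly 98x, which the analysis rounds to 100x, and the ratio to the 16x trend forecast is about 6x.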

Input and output from a server core can be categorized as follows:

- Intra-server

  - Core to Core within a processor

  - Processor to Processor within the server

- Server to Server

  - Local

  - Remote

- Server to I/O

For Intra-server communication, the communication transport and protocols used will balance the needs of latency, bandwidth and connectivity. As servers get more processors and cores, a significant portion of the increased bandwidth discussed above will be taken up with intra-server communication on buses, with the emphasis on latency and bandwidth.

Inter-processor communication between clusters of servers running traditional operating systems or clustered hypervisors will also require low latency and high bandwidth (InfiniBand is used extensively for high-end clusters at the moment).

The traditional way of provisioning I/O adapters (HBAs) has been to have separate 1GbE, FC and 10GbE adapters. The trend of shrinking multi-core processor implementations from multiple U to 1U to 1/2-rack 1U will continue. This will make real estate increasingly precious and heat density even more of a constraint on improvement. This will make it imperative to consolidate I/O adapters from specific types into converged network adapters (CNAs), or to take a virtual I/O approach. At the same time, the throughput requirements will push the technology capabilities of CNAs to the limit in order to reduce the number of ports required. As is usual, there will be a strong trend to include the CNA logic on the motherboard.

The different technologies (cycle-time reduction, multi-cores and virtualization) will all reduce the cost of processing. However, this is unlikely to reduce the number of servers shipped; the market has historically been elastic, and Wikibon believes there is no reason to doubt that this trend will continue.

Ethernet will be the predominant transport, and the predominant storage protocols will be iSCSI, FCoE, and NAS variants. The fundamental requirement for different protocols will not change. Increasingly, InfiniBand is being used as an alternative transport for high-end blade servers and separate I/O virtualization boxes. The recent Oracle Exalogic box uses Ethernet over InfiniBand so that each server, through a single virtual NIC, can communicate with the other servers over InfiniBand and with the outside world over Ethernet.

Action Item: Server and storage executives need to ensure that they are designing the storage network to be large enough and flexible enough to deal with the tsunami of data that will be flowing through Infrastructure 2.0 servers. Early adoption of CNAs either directly on servers or indirectly through I/O virtualization devices is important so that installations can understand the direct and indirect benefits of this approach, as well as to understand the “gotchas”.
