
Introduction

Today’s storage landscape is more competitive than it’s ever been. CIOs in the market for new storage have a plethora of options from which to choose: buying from a traditional storage vendor or a scrappy startup, choosing between flash and HDD, and everything in between.

Such a range of options and opportunities often breeds concern over making the right choice for an organization’s storage needs. While large enterprises may buy multiple storage arrays to support different workload needs, a large number of SMB and midmarket companies buy just a single array for everything. Storage for them is a major investment, and getting it wrong isn’t an option.

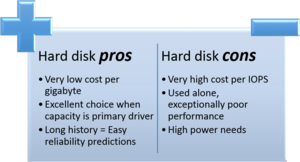

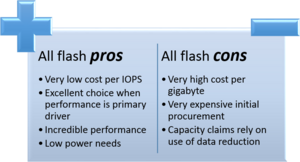

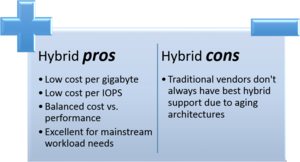

In this article, I will provide some guidance about when to choose which kind of storage. In the sidebar on the right-hand side of this article, you can see the pros and cons of each storage array type: arrays with all hard disks, arrays with all flash, and newer hybrid systems that combine traditional hard drives and solid state disks in one array.

Analyze existing challenges

Most organizations enter the market for storage because an existing need is not being met or a current solution is no longer supported or has reached the end of a lease or depreciation schedule. As for needs not being met, these generally fall into one or more of these categories:

- The solution is no longer meeting acceptable performance levels. Perhaps the organization’s operational needs have grown to a point at which the storage environment is no longer able to keep up and latency levels have increased as a result. At some point, storage latency issues become visible to users.

- The solution is running out of capacity. At some point, storage environments run out of space. This is especially true as organizations push their IT assets further than ever before. In some legacy environments, customers might have the option to enable various data reduction technologies to stretch their investment, but many organizations choose not to use features such as deduplication in legacy environments due to concerns about overall performance or because many legacy vendors sell deduplication as a separate software add-on.

- New projects are being planned. Today, big data, VDI and other new I/O-intensive workloads are being added at a feverish pace, so quickly that legacy storage environments often cannot even be considered for them. As a result, companies that have traditionally relied on legacy storage systems are finding that they need new storage systems to support these workloads.

The first step in the storage selection process is to determine which of the above challenges is being faced. For example, at one point in a previous CIO position at a midmarket organization, we had an EMC array that was running low on disk space. In that instance, performance was fine, so we simply added an additional expansion chassis to the existing array to meet the immediate needs. However, when that system hit end-of-life, we were in the midst of considering a virtual desktop deployment. Rather than simply buy another array with enough capacity and performance to meet current needs, we moved forward with an array from a different vendor that we thought would best meet existing needs as well as VDI demand, if and when it arrived.

Analyze workload needs

With the challenges broadly defined, determine which specific workloads you plan to run in the storage environment. Bear in mind that in a modern data center, I/O from virtualized servers, databases, and VDI is so blended that it can be difficult to plan and monitor it perfectly.

You may also find that you can’t take a one-size-fits-all approach to your environment. For example, you might find that a single array is suitable for daily production, but your disk-based backup array has such vastly different requirements that attempting to go the same route for both systems might not make sense.

As you analyze workloads, you’ll determine which drivers (capacity, performance, or both) are critical for each one. This will help you determine what kind of storage each workload needs, and the result may look something like this:

- General file storage: Capacity.

- Exchange: Capacity and performance.

- Database: Performance.

- Data analytics: High performance.

You may end up with a list that makes it look like you will need either 18 different storage arrays to meet the various needs or a single massive storage architecture with a dozen tiers to meet different workload storage requirements. But never fear: many solutions out there can make the choice fairly easy.

All flash: A need for speed?

If you’ve discovered that your workloads simply require massive performance and have heavy I/O needs, you might consider all-flash arrays. In such arrays, all data is stored on very high-performance solid state disks, providing tens of thousands, hundreds of thousands, or more IOPS. These arrays are geared toward those with a need for speed, and many emerging workloads require this level of performance.

However, they do have some downsides. First, they’re expensive. That said, they provide absolutely incredible performance, and when considered on a dollars-per-IOPS basis, their value really comes out. At first glance, it may also appear that all-flash arrays have very little capacity. Fortunately, many of the players in the all-flash space have developed powerful data deduplication engines that yield much more usable storage than the raw figures suggest. Moreover, many newer players in the all-flash space include deduplication and data reduction features as part of a single SKU, so they don’t nickel-and-dime customers for these features. Finally, because the processors in these units are so powerful, the data reduction features have little to no impact on performance. Even with all of that, though, if capacity is a major consideration, all-flash arrays cannot match the capacity available from other storage options.

Hard drive: Need some breathing room?

Many companies still run their entire IT infrastructure on tried-and-true rotating disks. Even within this space, there are some options from which to choose. When capacity is the absolute need, companies can choose arrays that use very high capacity SATA disks, which currently ship in sizes of up to 4 TB each. Although the all-hard-drive tier is generally considered low performance compared to other options, companies can choose faster, more nimble SAS drives that spin at up to 15,000 RPM, making them the fastest turtle in the race. In fact, I work with one organization that runs its entire data center with NetApp on a combination of SATA and SAS disks and has not encountered any performance issues that could not be corrected via settings changes.

The beauty of the hard drive approach is that customers get a lot of disk space for very little cash. This kind of storage is often desirable in file storage scenarios and in backup and recovery tasks where capacity is the primary driver.

Hybrid: The best of both worlds

When an organization has funding to buy just one and only one storage array – and many SMBs are in this situation – it can be really tough to figure out which metric needs to be emphasized. After all, everyone wants to have their workloads perform well, but if there just isn’t enough disk space to make it all happen, the overall solution isn’t going to be sufficient for the long term.

This is where hybrid storage comes into play. Sometimes capacity needs are critically important, and on the performance side the organization has outgrown what an all-hard-disk solution can reasonably deliver, yet it has neither the budget for nor the need for the performance levels that all-flash systems provide. Hybrid storage arrays work by coupling solid state disks with rotational storage. In general, the solid state disks are used as a mega-cache that accelerates reads from and writes to the rotational storage. By using the solid state disks as a middle man, hybrid systems can achieve very high levels of performance while still maintaining the capacity benefits of rotational storage. Of course, hybrid storage devices won’t reach the very high performance levels of all-flash devices, but they also come at a fraction of the cost and can still hit performance levels well into the hundreds of thousands of IOPS. In addition, many hybrid storage vendors also provide data reduction features such as compression and deduplication, thus expanding the amount of usable capacity on these systems.
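To make the mega-cache idea concrete, here is a toy Python model. It is not any vendor's actual caching algorithm: it assumes a simple least-recently-used (LRU) policy, write-back writes that are acknowledged once they reach SSD, and illustrative latencies of 0.1 ms for SSD and 8 ms for disk. The class name `HybridArray` and all of its parameters are hypothetical.

```python
from collections import OrderedDict


class HybridArray:
    """Toy hybrid array: an LRU cache of SSD-resident blocks in front of disk.

    All sizes and latencies are hypothetical, chosen only for illustration.
    """

    def __init__(self, ssd_blocks: int, ssd_latency_ms: float = 0.1,
                 hdd_latency_ms: float = 8.0) -> None:
        self.ssd_blocks = ssd_blocks          # blocks that fit in the SSD tier
        self.ssd_latency_ms = ssd_latency_ms  # assumed SSD service time
        self.hdd_latency_ms = hdd_latency_ms  # assumed rotational-disk service time
        self.cache = OrderedDict()            # block id -> None, oldest first
        self.hits = 0
        self.misses = 0
        self.ops = 0
        self.total_latency_ms = 0.0

    def read(self, block: int) -> None:
        self.ops += 1
        if block in self.cache:
            self.cache.move_to_end(block)     # mark as most recently used
            self.hits += 1
            self.total_latency_ms += self.ssd_latency_ms
        else:
            self.misses += 1                  # served from disk, then promoted
            self.total_latency_ms += self.hdd_latency_ms
            self._promote(block)

    def write(self, block: int) -> None:
        # Assumed write-back behavior: the write is acknowledged once it is on
        # SSD; destaging it to disk later is not modeled here.
        self.ops += 1
        self.total_latency_ms += self.ssd_latency_ms
        self._promote(block)

    def _promote(self, block: int) -> None:
        self.cache[block] = None
        self.cache.move_to_end(block)
        if len(self.cache) > self.ssd_blocks:
            self.cache.popitem(last=False)    # evict the least recently used block

    def average_latency_ms(self) -> float:
        return self.total_latency_ms / self.ops if self.ops else 0.0


array = HybridArray(ssd_blocks=100)
for _ in range(10):
    for block in range(50):  # hot working set of 50 blocks, read 10 times
        array.read(block)
print(array.hits, array.misses)                # 450 50
print(f"{array.average_latency_ms():.2f} ms")  # 0.89 ms
```

Because the 50-block working set fits in the 100-block cache, only the first pass goes to disk. A working set larger than the cache would keep evicting blocks and push the average back toward disk latency, which is why sizing the flash tier matters.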

In fact, today’s hybrid storage arrays are extremely well-suited to just about any mainstream workload, including server virtualization, databases, Exchange, VDI, data analytics and more.
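The guidance in the last three sections boils down to a rough rule of thumb: performance alone points to all flash, capacity alone to hard drives, and both together to hybrid. The Python sketch below encodes that rule for the sample workload list from earlier. The function name and driver labels are hypothetical, and a real decision would also weigh budget, the size of the I/O demand, and growth plans.

```python
def recommend(drivers: set) -> str:
    """Rule of thumb: capacity -> hard drive, performance -> all flash,
    both -> hybrid. A simplification, not a sizing tool."""
    needs_capacity = "capacity" in drivers
    needs_performance = "performance" in drivers
    if needs_capacity and needs_performance:
        return "hybrid"
    if needs_performance:
        return "all flash"
    if needs_capacity:
        return "hard drive"
    raise ValueError("expected 'capacity', 'performance', or both")


# Drivers from the sample workload list; data analytics' "high performance"
# is treated as plain "performance" in this simplification.
workloads = {
    "General file storage": {"capacity"},
    "Exchange": {"capacity", "performance"},
    "Database": {"performance"},
    "Data analytics": {"performance"},
}

for name, drivers in workloads.items():
    print(f"{name}: {recommend(drivers)}")

# A single array serving every workload must satisfy the union of their drivers.
combined = set().union(*workloads.values())
print(f"Single array: {recommend(combined)}")  # Single array: hybrid
```

The union step mirrors the single-array situation many SMBs face: as soon as one workload needs capacity and another needs performance, the rule lands on hybrid.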

The calculations

You might be wondering just how to go about calculating everything you need to know to decide which direction you want to go. In this section, I will provide a brief overview of what are really relatively simple calculations.

On the capacity front, calculations are pretty easy. For example, suppose you’re buying a 20 TB array for $40,000. By doing some division, you find that you’re paying about $2 per GB, or $2,000 per TB of space. However, this figure does not take into consideration overhead from various RAID levels or hot spare disks that you need to define. It’s a very raw number, but it can provide an apples-to-apples comparison of cost per GB between arrays. As another example, suppose you were to purchase a 5 TB all-flash array and paid $150,000 for it. In this case, the cost-per-TB figure is pretty high: $30,000 per TB. Don’t forget that these are pre-reduction numbers. Deduplication, compression, and thin provisioning will help address some of the capacity issues. So, let’s say that a vendor generally sees a 5X reduction benefit. That brings the possible per-TB cost down to $6,000. To be fair, though, the $40,000 vendor may see similar reduction figures, so its after-reduction cost could come down to $400 per TB, which isn’t bad at all!
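The capacity arithmetic above can be written as a small Python helper. It follows the same simplifications as the text: raw capacity, no RAID or hot-spare overhead, decimal units (1 TB = 1,000 GB), and a single assumed reduction ratio applied to the whole array. The `cost_per_tb` and `cost_per_gb` names are hypothetical.

```python
def cost_per_tb(price_usd: float, raw_tb: float, reduction_ratio: float = 1.0) -> float:
    """Dollars per TB of capacity.

    reduction_ratio=1.0 gives the raw figure; 5.0 models an assumed 5X benefit
    from deduplication, compression, and thin provisioning. RAID and hot-spare
    overhead are ignored, as in the text.
    """
    if raw_tb <= 0 or reduction_ratio <= 0:
        raise ValueError("capacity and reduction ratio must be positive")
    effective_tb = raw_tb * reduction_ratio
    return price_usd / effective_tb


def cost_per_gb(price_usd: float, raw_tb: float, reduction_ratio: float = 1.0) -> float:
    # Decimal units: 1 TB = 1,000 GB.
    return cost_per_tb(price_usd, raw_tb, reduction_ratio) / 1_000


print(cost_per_gb(40_000, 20))       # 2.0
print(cost_per_tb(40_000, 20))       # 2000.0
print(cost_per_tb(150_000, 5))       # 30000.0
print(cost_per_tb(150_000, 5, 5.0))  # 6000.0
print(cost_per_tb(40_000, 20, 5.0))  # 400.0
```

Keeping the reduction ratio as a separate parameter makes it easy to compare vendors' raw figures first and then apply each vendor's claimed ratio explicitly, rather than mixing raw and effective numbers.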

On the performance side, the equations are similar, but use IOPS instead of capacity figures. In this scenario, let’s take a look at three arrays:

- A hard disk-based array with 24 x 15K SAS disks that costs $40,000. Since each SAS disk will provide at most around 200 IOPS, that array can give up to 4,800 IOPS in performance capacity before any RAID is applied. Doing the math, that works out to about $8.33 per IOPS.

- An all-flash array that provides up to 200,000 IOPS and costs $150,000. Doing the math, a single IOPS in this array costs just 75 cents. That is very cheap, and cost per IOPS is the metric to focus on when performance is the main driver.

- A hybrid array that provides up to 30,000 IOPS of performance capacity and costs $40,000. With this hybrid array, a single IOPS might cost $1.33. As you can see, while $1.33 is higher than the 75 cents seen from the all-flash array, it’s much, much less than is seen with a traditional hard disk array.
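The same division applies to performance. This Python sketch reproduces the three examples using the figures from the text (about 200 IOPS per 15K SAS disk, before RAID). The helper and dictionary names are hypothetical, and vendor IOPS ratings depend on block size and read/write mix, so treat the results as rough comparisons.

```python
def cost_per_iops(price_usd: float, iops: float) -> float:
    """Dollars per IOPS of performance capacity."""
    if iops <= 0:
        raise ValueError("iops must be positive")
    return price_usd / iops


SAS_15K_IOPS_PER_DISK = 200  # "at most around 200 IOPS" per disk, from the text

arrays = {
    "Hard disk (24 x 15K SAS)": (40_000, 24 * SAS_15K_IOPS_PER_DISK),
    "All flash": (150_000, 200_000),
    "Hybrid": (40_000, 30_000),
}

for name, (price, iops) in arrays.items():
    print(f"{name}: {iops:,} IOPS, ${cost_per_iops(price, iops):.2f} per IOPS")
# Hard disk (24 x 15K SAS): 4,800 IOPS, $8.33 per IOPS
# All flash: 200,000 IOPS, $0.75 per IOPS
# Hybrid: 30,000 IOPS, $1.33 per IOPS
```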

Action Item: Several decision points go into making the right storage selection. As you plan your next storage project, don’t forget to consider the wide variety of options at your disposal, from tried-and-true hard drives (and the SATA-to-SAS performance range therein) to hybrid arrays to all-flash players that can support even the most I/O-intensive workloads.
