Introduction

Mechanical disk drives have imprisoned data for the past 50 years. Over that time, technology has doubled the size of the prisons every 18 months, and it will continue to do so. The way in and out of prison is gated by the speed of the mechanical arms on a rotating disk drive. These prison guardians are almost as slow today as they were 50 years ago. The chances of remission for data are slim, leaving little opportunity for data to be useful again. Data goes to disk to die.

Transactional Systems Limitations

Transactional systems have driven most of the enterprise productivity gains from IT. Bread-and-butter applications such as ERP have improved productivity by integrating business processes. Call centers and Web applications have taken these applications directly to enterprise or government customers. The promise of transactional systems is to manage the “state” of an organization, and to integrate its processes with a single consistent view across the organization.

The promise of systems integration has not been realized. Because transactional applications change “state”, this data must be written to the only suitable non-volatile medium, the disk drive. The amount of data that can be read and written in transactional systems is constrained by the elapsed time of access to disk (milliseconds) and the bandwidth to disk. The fundamental architecture of transactional systems has not changed for half a century. The number of database calls per transaction has hardly changed and limits the scope of transactional systems, which have to be loosely coupled as part of an asynchronous data flow from one system to another. The result is “system sprawl”, and multiple versions of the actual state of an enterprise.
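To make the millisecond constraint concrete, here is a rough sketch of how many strictly sequential storage accesses fit inside a fixed response-time budget. The 5 ms disk access time, the 0.1 ms flash access time, the one-second budget, and the function name `max_serial_calls` are all illustrative assumptions, not figures from this article.

```python
# Illustrative assumptions only; none of these figures come from the article.
DISK_ACCESS_US = 5_000     # assumed ~5 ms per random disk access
FLASH_ACCESS_US = 100      # assumed ~0.1 ms per flash access
BUDGET_US = 1_000_000      # assumed one-second response-time budget


def max_serial_calls(budget_us: int, access_us: int) -> int:
    """Return how many back-to-back storage accesses fit in the budget.

    Integer microseconds are used so the division is exact.
    """
    return budget_us // access_us


disk_calls = max_serial_calls(BUDGET_US, DISK_ACCESS_US)
flash_calls = max_serial_calls(BUDGET_US, FLASH_ACCESS_US)

print(f"Serial accesses per transaction on disk:  {disk_calls}")
print(f"Serial accesses per transaction on flash: {flash_calls}")
```

Under these assumed figures, a one-second transaction has room for about 200 serial disk accesses, against about 10,000 on flash. Real systems cache and overlap I/O, so treat this as intuition rather than a measurement.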

Read-heavy Application Limitations

Enterprise data warehouses were the first attempts to improve the process of extracting value from data, but a happy data warehouse manager is as rare as a two-dollar bill. The major constraint is bandwidth to the data. Big data applications are helping free some data by using large amounts of parallelism to extract data more efficiently in batch mode. Data-in-memory systems can keep small data marts (derived from data warehouses or big data applications) in memory and radically improve the ability to analyze data.

Overall, the promise of data warehousing has been constrained by access to data. The amount and percentage of data outside the data warehouses and imprisoned on disk is growing. Enterprise data warehouses are better named data jailhouses.

Social & Search Breakthroughs

Social and search are the first disruptive applications of the 21st century. When “disk” is googled, the search bar shows “109,000,000 results (0.17 seconds)”, which would be impossible to achieve if the data were on disk. The Google architecture includes extensive indexing and caching of data to avoid access to disk wherever possible for these read-heavy data applications. This is data without “state”.

Social applications turn out to be a mixture of state and stateless components. All of them started by implementing scale-out architectures using commodity hardware and homegrown or open-source software. The largest grew to the point where the database portions of the infrastructure hit a ceiling: locking rates had maxed out at the limits of hard disk technology.

As examples, Facebook and Apple have used flash within the server (using mainly Fusion-io hardware and software) extensively for the database portions of the infrastructure to enable the scale-out growth of services. In a similar way to Google, they have focused on extensive use of caching and indexing.

The end objective for both Google and Facebook is to ensure quality of end-user experience. Both have implemented continuous improvement of response time, with the objective of shaving milliseconds off response times and assuring consistency of data. Productivity of the end user ensures user retention, more clicks, and more revenue.

Watson and Siri, and the Potential Impact on Health Care

The two most exciting application developments in 2011 were Watson from IBM and Siri from Apple. Both respond to questions in natural speech and answer them quickly and, for the most part, accurately.

Watson won the Jeopardy! challenge in February 2011, astounding contestants and the public with its performance. Watson had to answer within three seconds and was not allowed to be connected to the Internet. To meet that requirement, all the data and metadata were held in memory. That data was constructed from multiple sources. The technology behind Watson is being used to create products that can be interrogated to answer medical questions.

Siri was announced in October 2011, and works through iPhone 4S smartphones in connection with Apple hosting systems. The data is held in memory and flash memory. Siri provides a set of assistant services to users, looking things up on the Web and using the local data from the smartphone. It is an amazing and seamless blending of local and remote technologies.

Looking out to 2015, it is interesting to think through what could happen to the health care market if the Siri and Watson technologies were fused. Assume for the moment that the technology and trust issues* have been addressed, and in 2015 a robust technology exists that will answer spoken queries about health care issues in real time. Two key questions:

- Where and how could this technology be applied?

  - Direct use by doctors in medical facilities.

  - Service provided by payers.

  - Service provided directly to consumers.

- What are the potential savings?

  - Reduction in Costs might include reduced time of doctors per patient, fewer tests, lower risk of malpractice suits, and avoidance of treatments.

  - Improvement in Outcome might include additional revenue from new patients and improved negotiation with health insurance companies/government.

  - Perceived Quality of Care or customer satisfaction might include improved Yelp scores, improved customer retention, and additional revenue from new patients/health insurance companies.

  - Risk of Adverse Publicity includes negative impact on revenue, brand and customer satisfaction from misuse, faults found with technology, etc.

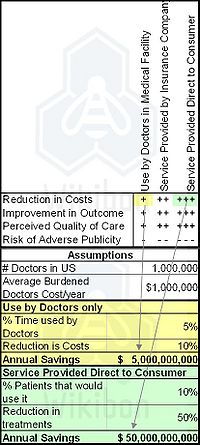

The total health care spend in the United States is estimated to be 16% of GDP, about $2.5 trillion per year. Assuming that this technology can address the 40% of that spend ($1.0 trillion) that is attributable to doctor-initiated spend, the top half of Table 1 attempts to look at the difference in impact between the different deployment models. The bottom half of Table 1 takes the two cost cells and attempts to ball-park the potential yearly impact based on some assumptions.

The table indicates some interesting potential impacts on the health care industry. First, the savings might be 10 times higher if this service could be delivered directly to the consumer. If we assume some contribution from the cells not assessed, the potential benefit might be $100 billion/year, or $1 trillion over 10 years. And health care practitioners indicate that increasing consumer use would be easier than increasing doctors' use.
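The ball-park figures quoted in the two paragraphs above can be restated as a short calculation. This is only a sketch: the variable names are made up for illustration, the inputs are the rounded figures given in the text, and the ten-year total is a simple multiplication that assumes no growth or discounting.

```python
# Inputs taken from the text, in billions of US dollars per year.
TOTAL_SPEND_BN = 2_500       # about $2.5 trillion total health care spend
ADDRESSABLE_PCT = 40         # share attributable to doctor-initiated spend
YEARLY_BENEFIT_BN = 100      # potential benefit per year
YEARS = 10                   # horizon used in the text

# Spend the technology could address: 40% of $2.5 trillion.
addressable_bn = TOTAL_SPEND_BN * ADDRESSABLE_PCT // 100

# Simple ten-year total (assumes a flat yearly benefit, no discounting).
ten_year_benefit_bn = YEARLY_BENEFIT_BN * YEARS

# Derived ratios, not stated in the text: the yearly benefit as a share
# of addressable spend and of total spend.
benefit_pct_of_addressable = 100 * YEARLY_BENEFIT_BN // addressable_bn
benefit_pct_of_total = 100 * YEARLY_BENEFIT_BN // TOTAL_SPEND_BN

print(f"Addressable spend: ${addressable_bn} billion/year")
print(f"Ten-year benefit:  ${ten_year_benefit_bn} billion")
print(f"Benefit share of addressable spend: {benefit_pct_of_addressable}%")
print(f"Benefit share of total spend:       {benefit_pct_of_total}%")
```

On these inputs, the $100 billion/year estimate corresponds to about 10% of the addressable spend and 4% of total spend, which gives a sense of how aggressive the assumption is.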

Nobody is going to build a factory based on this analysis - but it shows the potential of broad-scale implementation of systems that would rely on all the data being held in memory to meet a three-second response time. Flash would play a pivotal role in enabling cost-effective deployment.

The players that are able to develop and deploy these technologies will have a major impact on health care spend in general and a major potential to create long-lasting direct and indirect revenue streams.

Action Item: CEOs and CIOs must task their best and brightest with identifying integrated high-performance applications that could disrupt their industry by increasing real-time access to very large amounts of data, two orders of magnitude greater than current systems. They should then work proactively with their current or new application suppliers to design those systems. Significant resources will need to be applied, based on the assumption that the first two versions of any "killer" application will be off-target.

Footnotes: *Trust issues include openness about the sources and funding behind information within the technology, trust in the brand of the suppliers and deployers of the technology, trust in the training to use the technology, trust in the security and confidentiality arrangements, trust that the system can be updated rapidly in the light of new information, and trust in the reliability and validity of the outputs of the technology.