

Introduction

Many existing system architecture models use traditional hard disk drives (HDD) as the persistent storage layer. Persistent means that data is retained when power is lost and is accessible again when power returns. Wikibon believes that the persistent nature of flash storage will herald one of the most profound architectural changes in the history of computing.

In particular, flash storage is being widely adopted in the consumer space, as seen in its use in portable music players and cell phones and as a replacement for video tapes and disks. The cost per bit of flash is dropping by 60 percent per year, whereas the cost per bit of disk drives is declining by 37 percent per year. This consumer demand is driving a very rapid reduction in the cost of fabricating industrial-quality flash. Wikibon predicts that by the 2012-2014 timeframe, flash drives will be not only much faster but also cheaper than high-end Fibre Channel (FC) HDDs. Storage architectures will evolve so that high-I/O storage activity resides on persistent flash storage and the balance of primary storage capacity is placed on very high-density SATA disk drives.
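The crossover prediction follows from compounding the two decline rates quoted above. If flash cost per bit is multiplied by 0.40 each year and disk cost per bit by 0.63, the flash-to-disk price ratio shrinks by a factor of about 0.63 each year. The sketch below computes how long it takes to close a given price premium; the starting premium is a hypothetical input, not a figure from this article.

```python
import math

FLASH_DECLINE = 0.60  # flash cost/bit falls 60% per year (from the text)
HDD_DECLINE = 0.37    # disk cost/bit falls 37% per year (from the text)


def years_to_parity(initial_premium: float,
                    flash_decline: float = FLASH_DECLINE,
                    hdd_decline: float = HDD_DECLINE) -> float:
    """Years until flash cost/bit equals disk cost/bit.

    initial_premium is a hypothetical starting ratio
    (flash cost/bit divided by disk cost/bit), e.g. 10.0 for a 10x premium.
    """
    if initial_premium <= 1.0:
        return 0.0  # flash is already at or below disk cost per bit
    # The price ratio is multiplied by this factor every year (< 1 when flash falls faster).
    yearly_ratio = (1 - flash_decline) / (1 - hdd_decline)
    # Solve initial_premium * yearly_ratio**t == 1 for t.
    return math.log(initial_premium) / -math.log(yearly_ratio)


for premium in (5.0, 10.0, 20.0):
    print(f"{premium:>5.1f}x premium -> parity in {years_to_parity(premium):.1f} years")
```

Under these rates, a hypothetical 10x premium closes in about five years, which is why a modest difference in annual decline rates produces a crossover within a few years.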

This has two major implications for industry participants:

- CIOs should expect that high-value, revenue-producing applications will be most affected by these changes. There is an opportunity to increase the amount of data that can be processed within a transaction tenfold, which means organizations will be able to dramatically enhance the quality, performance, and end-user experience of their most important IT application assets.

- Vendors have seen a steady migration of storage function away from the processor over the past two decades, creating a multi-billion-dollar storage software market. Function will increasingly migrate back toward the processor, creating a dislocation that is both an opportunity and a threat to established array vendors' software franchises.

Current Storage Architectures

Direct Attached Storage Architecture

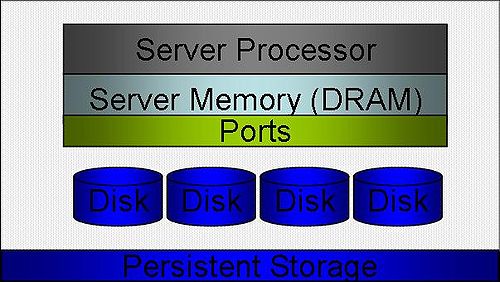

The initial model for system and storage architecture was direct attached storage (DAS), as shown in Figure 1.

Storage was usually included as part of the server, and SCSI became the primary protocol connecting processor boards to storage for open systems. Server memory was volatile, and disks and tapes were the only forms of persistent storage. The master copy of data resided on disk. Data held in volatile server memory buffers could be recovered from disk and log files through careful design of file systems and databases. Backup was done server by server and usually involved copying data to tape.
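The recovery idea above, rebuilding lost in-memory state from the persistent master copy plus a log of committed changes, can be sketched as a redo-log replay. This is a minimal sketch with hypothetical names (`Store`, `checkpoint`, `recover`); real file systems and databases add checkpoints with sequence numbers, undo records, and checksums.

```python
from dataclasses import dataclass, field


@dataclass
class Store:
    """Toy model: disk and log survive a crash, the memory buffer does not."""
    disk: dict = field(default_factory=dict)    # persistent master copy
    log: list = field(default_factory=list)     # persistent redo log of committed writes
    buffer: dict = field(default_factory=dict)  # volatile server-memory buffer

    def write(self, key, value):
        self.log.append((key, value))  # commit: record the change persistently first
        self.buffer[key] = value        # then update the in-memory buffer

    def checkpoint(self):
        self.disk.update(self.buffer)   # flush buffered changes to the master copy
        self.log.clear()                # logged changes are now safely on disk

    def crash(self):
        self.buffer = {}                # power loss: volatile memory is gone

    def recover(self):
        self.buffer = dict(self.disk)   # start from the persistent master copy
        for key, value in self.log:     # replay committed changes in order
            self.buffer[key] = value


s = Store()
s.write("a", 1)
s.checkpoint()
s.write("a", 2)
s.write("b", 3)
s.crash()
s.recover()
print(s.buffer)  # {'a': 2, 'b': 3}
```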

The problems with this type of architecture were that storage was under-utilized, and data could only be accessed by the processor it was attached to. Storage functions such as remote replication had to be provided by the server operating system.

Networked Storage Architectures

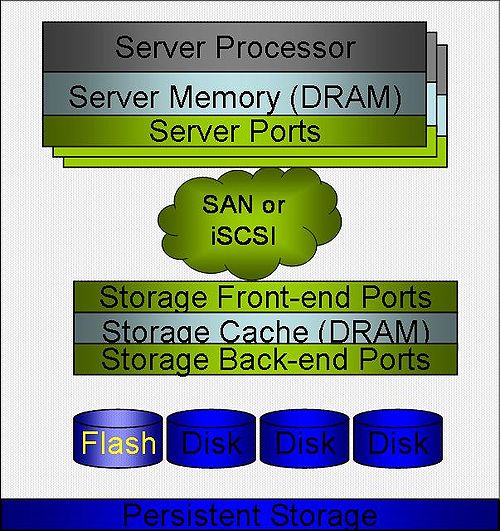

For file-based storage, Network Attached Storage (NAS) was introduced, allowing files to be stored and accessed across an IP-based Ethernet network. For block-based storage, Storage Area Networks (SANs) were introduced in the late 1990s, based on the faster and more reliable Fibre Channel protocol. Figure 2 shows the network storage architectures that are most common today.

Today servers are usually mounted in racks. In high-end server farms, connectivity is a problem because each server has large numbers of Ethernet, Fibre Channel, and (for clusters) IPC connections. These configurations are moving toward converged network cards and top-of-rack switches to reduce cost and complexity. Virtualization of servers and storage is driving much higher utilization. The growth in size, complexity, and functionality of network storage controllers has been impressive; they offer functions such as storage virtualization, thin provisioning, virtual copying, remote replication, and much more. Current database, middleware, and application architectures have been built around the SAN/SCSI system and storage architecture.

The major problem with current computing models is that high-performance, multi-core processors are driving ever-increasing levels of I/O, and server virtualization is driving ever-greater utilization of those processors. One side effect is that I/O is increasingly random, which is the least efficient access pattern for mechanical hard disk drives. Disk drives have improved enormously in storage density, but they have not kept, and will not keep, pace with the explosive increase in random I/O coming from high-performance server farms. The speed gap between servers and storage continues to widen.
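A rough service-time model shows why random I/O is the worst case for a mechanical disk: each random request pays an average seek plus, on average, half a rotation before any data moves. The seek times, rotation speeds, I/O size, and transfer rate below are illustrative assumptions, not measured values for any particular drive.

```python
def hdd_random_iops(rpm: int, avg_seek_ms: float,
                    io_kb: float = 4.0, transfer_mb_s: float = 150.0) -> float:
    """Approximate random I/Os per second for a single mechanical disk.

    All inputs are illustrative assumptions; real drives differ.
    """
    # On average the head waits half a revolution for the target sector.
    rotational_ms = 0.5 * 60_000 / rpm
    # Time to transfer the data once the head is positioned.
    transfer_ms = (io_kb / 1000) / transfer_mb_s * 1000
    service_ms = avg_seek_ms + rotational_ms + transfer_ms
    return 1000 / service_ms


print(f"15K RPM FC-class drive:   {hdd_random_iops(15_000, 3.5):.0f} IOPS")
print(f"7,200 RPM SATA-class drive: {hdd_random_iops(7_200, 8.5):.0f} IOPS")
```

With these assumptions a 15,000 RPM drive delivers on the order of 180 random I/Os per second and a 7,200 RPM drive fewer than 80. Seek and rotational delay dominate, and neither improves with higher recording density, which is why density gains do not translate into random I/O gains.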

Figure 2 also shows the introduction of solid-state drives (SSDs) in enterprise storage arrays (first by EMC in 2008), which has significantly improved the performance of network storage systems. These flash drives deliver much better I/O performance for both reads and writes. Currently, most arrays require manual identification of the volumes that can be placed on flash drives, but future software will allow high-activity data within a volume to be held on flash and dynamically migrated between flash and HDD.
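The dynamic placement described above amounts to ranking parts of a volume by recent activity and promoting the hottest ones into limited flash capacity. This is a minimal sketch, assuming the volume is divided into fixed-size extents with a hypothetical per-extent I/O counter; it is not any vendor's tiering algorithm, and a real implementation would also limit migration traffic and smooth counts over time.

```python
from collections import Counter


def plan_placement(access_counts: Counter, flash_extents: int) -> tuple[set, set]:
    """Split observed extents into (flash, hdd) sets by recent I/O count.

    access_counts maps a hypothetical extent ID to the number of I/Os seen
    in the last sampling interval; flash_extents is how many extents fit on flash.
    """
    ranked = [extent for extent, _ in access_counts.most_common()]  # hottest first
    return set(ranked[:flash_extents]), set(ranked[flash_extents:])


def migrations(current_flash: set, target_flash: set) -> tuple[set, set]:
    """Extents to promote from HDD to flash, and to demote from flash to HDD."""
    return target_flash - current_flash, current_flash - target_flash


counts = Counter({"e1": 900, "e2": 15, "e3": 420, "e4": 3})
target, _ = plan_placement(counts, flash_extents=2)
promote, demote = migrations({"e1", "e4"}, target)
```

Here `e3` has become hot and is promoted, while `e4` has gone cold and is demoted back to disk.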

One important constraint that the speed gap in current system architectures imposes on application design is the I/O density and locking rate that can be sustained. Every transaction must wait at least until its changes are committed to log files on disk before its locks can be released, and this limits the functionality that can be achieved in today's transactional systems.
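Simple arithmetic makes this constraint concrete. If a hot record's lock is held until the transaction's log write completes, updates to that record are serialized, so the log-write latency caps how many can run per second. The latencies below are assumed round numbers for a disk-based log and a flash-based log, not measurements.

```python
def max_serial_updates_per_sec(log_write_ms: float, other_work_ms: float = 0.0) -> float:
    """Upper bound on updates per second to a single locked record.

    Each update holds the record's lock for its own processing time plus the
    time to commit its log record; the next update must wait for the release.
    """
    lock_hold_ms = other_work_ms + log_write_ms
    return 1000 / lock_hold_ms


DISK_LOG_MS = 5.0    # assumed synchronous log write to disk
FLASH_LOG_MS = 0.25  # assumed synchronous log write to flash

print(max_serial_updates_per_sec(DISK_LOG_MS))   # 200.0
print(max_serial_updates_per_sec(FLASH_LOG_MS))  # 4000.0
```

Shortening the commit path shortens every lock hold, so the same hot data can support far more updates, or each transaction can do more work within the same lock window.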

Future System and Storage Architectures

Wikibon believes two distinct storage models will evolve:

- Flash on Server (FOS): This architectural approach is revolutionary and will provide the largest benefit but will require the greatest change in infrastructure.

- Flash on Storage Controller (FOSC): This approach is more evolutionary and will be easier to incorporate into existing application infrastructures.

Flash on Server (FOS) Architecture

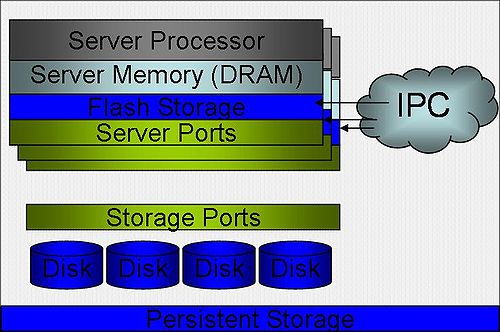

The fundamental FOS architecture is shown in Figure 3.

The key difference is that flash storage is attached directly to the server, either on the motherboard or via a PCI Express interface. Companies such as Fusion-io have pioneered this approach to system-level flash. The flash storage on each server is connected to other servers by Inter-Processor Connection (IPC) protocols such as InfiniBand or RapidIO, so that critical data can be replicated on another server. Because high-I/O data resides on flash, spinning disks will be configured mainly for storage capacity and either directly attached or connected as a basic array on a simple storage network. All of the storage functionality currently provided by a storage controller (copy, data reduction, remote replication, etc.) will move to the server layer, and this server-based storage subsystem will move data between the persistent tiers of storage to optimize both flash usage and I/O performance.
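Replicating critical data to another server implies that a write is acknowledged only once both copies are persistent; otherwise the loss of one server could lose committed data. This is a minimal sketch of that rule using hypothetical in-memory stand-ins (`FlashNode`, `mirrored_write`) for the local flash device and the peer reached over the IPC link; it omits write ordering, retries, and resynchronization.

```python
class FlashNode:
    """Hypothetical stand-in for one server's flash store."""

    def __init__(self, name: str, online: bool = True):
        self.name = name
        self.online = online
        self.blocks: dict[int, bytes] = {}

    def persist(self, lba: int, data: bytes) -> bool:
        if not self.online:
            return False  # node (or the IPC link to it) is unavailable
        self.blocks[lba] = data
        return True


def mirrored_write(local: FlashNode, peer: FlashNode, lba: int, data: bytes) -> bool:
    """Return True (acknowledge) only if both local and peer copies are persistent."""
    if not local.persist(lba, data):
        return False
    if not peer.persist(lba, data):
        # The local copy exists but is unprotected, so the write is not
        # acknowledged; a real system would fence, retry, or raise an alert.
        return False
    return True
```

For example, with two online nodes `mirrored_write(a, b, 42, b"row")` returns `True`; after setting `b.online = False`, the next write returns `False` even though server `a` holds the data.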

Although the flash storage on the server could be configured to look like a disk drive (which would simplify the use of existing software and drivers), this approach would not be optimal. However, the changes to the OS, database, and middleware needed to support flash natively will be substantial. New software will probably be implemented first on specialized servers that manage high-transaction databases and then migrate to other workloads. Using existing storage controllers to manage the data will be impractical because of the speed difference between the server/flash storage and the storage controller.

Backup systems will need to be modified to run, at least in part, directly on the server. In some circumstances, backup will need to be integrated into the operating system or database itself to extend the functionality of the backup process.

The benefit of this approach will be very high I/O performance, in both throughput and latency. These improvements will allow applications to process an order of magnitude more data in the same elapsed time as today's transaction systems.
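The order-of-magnitude claim can be read as latency arithmetic. If a transaction must complete within a fixed elapsed time $T$ and issues dependent I/Os one after another, each taking latency $t$, it can perform at most $N = T/t$ of them. The latencies in the last step are illustrative assumptions, not measurements.

$$
N = \frac{T}{t}, \qquad
\frac{N_{\text{flash}}}{N_{\text{HDD}}}
= \frac{T / t_{\text{flash}}}{T / t_{\text{HDD}}}
= \frac{t_{\text{HDD}}}{t_{\text{flash}}}
\approx \frac{5\ \text{ms}}{0.5\ \text{ms}} = 10
$$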

Wikibon expects large companies such as IBM, Oracle, HP, and Microsoft to lead the introduction of FOS architectures in the long term, but start-up companies to be at the forefront in the short term.

Flash on Storage Controller (FOSC) Architecture

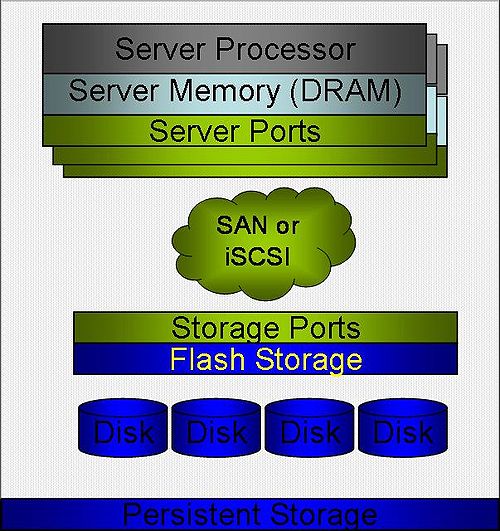

The fundamental FOSC architecture is shown in Figure 4.

A FOSC architecture does not provide the same performance improvements for applications as FOS. It will, however, avoid the complexity of IPC connections on the servers and be more suitable for supporting today's applications. FOSC will be preferred for lower-performance, simpler block-based applications and for most file-based systems.

Backup systems on FOSC will have to be updated to support the store-in (write-back) cache design, as it will not be practical or desirable to write the entire contents of the flash cache out to disk.
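In a store-in (write-back) cache, a write is complete once it reaches flash, so for some blocks the only current copy lives in the cache. A backup that reads only the disks would capture stale data. The sketch below uses a hypothetical `StoreInCache` class to contrast a disk-only backup with one that reads through the cache, picking up dirty blocks without first flushing the whole cache to disk.

```python
class StoreInCache:
    """Toy write-back flash cache in front of disk; names are illustrative."""

    def __init__(self):
        self.disk: dict[int, bytes] = {}
        self.flash: dict[int, bytes] = {}
        self.dirty: set[int] = set()   # blocks that are newer in flash than on disk

    def write(self, lba: int, data: bytes) -> None:
        self.flash[lba] = data         # the write completes in flash...
        self.dirty.add(lba)            # ...and is not yet on disk

    def destage(self, lba: int) -> None:
        self.disk[lba] = self.flash[lba]
        self.dirty.discard(lba)

    def read(self, lba: int) -> bytes:
        return self.flash.get(lba, self.disk.get(lba, b""))

    def backup_disk_only(self) -> dict[int, bytes]:
        return dict(self.disk)         # misses dirty blocks held only in flash

    def backup_read_through(self) -> dict[int, bytes]:
        image = dict(self.disk)
        for lba in self.dirty:         # overlay only the blocks that are newer in flash
            image[lba] = self.flash[lba]
        return image
```

Only dirty blocks need to be overlaid, because clean blocks in flash are identical to their disk copies.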

Early examples of FOSC implementations include the XcelaSAN product from Dataram, which places a solid-state storage cache between the Fibre Channel ports and an existing storage array and dramatically improves I/O performance. Another example is NetApp's PAM II, which uses flash to accelerate and scale up the storage controller's read cache. Wikibon expects other established storage vendors to follow, with IBM introducing the architecture first on XIV, followed later by vendors such as EMC, NetApp, 3PAR, and Hitachi.
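A flash read cache of the kind described for PAM II sits in front of slower disk storage: reads that hit are served from flash, misses are fetched from disk and inserted, and a block is evicted when flash is full. The sketch below assumes least-recently-used (LRU) eviction and a hypothetical `read_from_disk` callback; it does not describe NetApp's actual replacement policy.

```python
from collections import OrderedDict
from typing import Callable


class FlashReadCache:
    """LRU read cache in front of a slower backing store (illustrative only)."""

    def __init__(self, capacity_blocks: int, read_from_disk: Callable[[int], bytes]):
        self.capacity = capacity_blocks
        self.read_from_disk = read_from_disk
        self.blocks = OrderedDict()  # lba -> data, least recently used first
        self.hits = 0
        self.misses = 0

    def read(self, lba: int) -> bytes:
        if lba in self.blocks:
            self.blocks.move_to_end(lba)        # mark as most recently used
            self.hits += 1
            return self.blocks[lba]
        self.misses += 1
        data = self.read_from_disk(lba)          # slow path to the disk array
        self.blocks[lba] = data
        if len(self.blocks) > self.capacity:
            self.blocks.popitem(last=False)      # evict the least recently used block
        return data

    def invalidate(self, lba: int) -> None:
        self.blocks.pop(lba, None)              # a write makes the cached copy stale
```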

Conclusions

Flash storage will become an increasingly important component of storage systems. Storage and system architectures will adapt to take advantage of the persistent nature of flash storage and to avoid storing data twice. The fundamental model will be two-tiered, with flash caches on the server or storage controller handling 95% or more of all I/Os, and high-density SATA drives providing the low-I/O, low-cost component of the storage subsystem.
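With flash absorbing 95% or more of I/Os, the average I/O time of the two-tier model is dominated by the flash tier. The sketch below computes a hit-ratio-weighted average; the 0.95 figure comes from the text, while the per-tier latencies are assumed round numbers.

```python
def effective_latency_ms(hit_ratio: float, flash_ms: float, hdd_ms: float) -> float:
    """Average service time when hit_ratio of I/Os are served by the flash tier."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return hit_ratio * flash_ms + (1 - hit_ratio) * hdd_ms


FLASH_MS = 0.1  # assumed flash access time
HDD_MS = 8.0    # assumed high-density SATA random access time

for h in (0.0, 0.90, 0.95, 0.99):
    print(f"hit ratio {h:.2f}: {effective_latency_ms(h, FLASH_MS, HDD_MS):.3f} ms")
```

Under these assumptions the average falls from 8 ms with no flash to about 0.5 ms at a 95% hit ratio, and the remaining 5% of I/Os that go to disk still account for most of that time.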

These architectural advancements will require changes to operating systems, databases, and middleware. Applications will need to be modified to take full advantage of these innovations. Because of the changes required to system software, the migration to these new architectures will be gradual.

Nevertheless, Wikibon predicts that the dominance flash-based technologies have achieved in consumer devices will be repeated in the enterprise storage market.

Action Item: IT executives should expect flash storage to profoundly change systems and storage architectures over the next decade. The most significant business impact will be in allowing an order of magnitude more data to be processed in transaction systems. IT executives should identify the potential business value impact on their most important applications and assign the youngest and brightest IT professionals to lead the new revolution in application and system design.
