
Summary

Wikibon recently spoke with Kurt Grubaugh, Sr. Engineer IT Operations Manager, Microsoft Studios, to learn how Microsoft Studios tripled its output of video content to more than 6,000 pieces per month. The key was a new workflow system built on a revamped data network infrastructure.

The storage and system infrastructure for high bandwidth and massive volume video production requires a different architectural approach compared to traditional enterprise storage. The architecture for video production needed to support a workflow involving many studio resources (e.g. editorial, compression, ingest, digital rights management, graphics, etc.), and needed to improve the overall velocity of content creation.

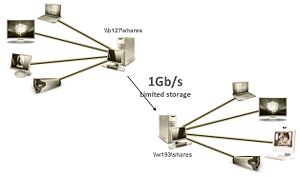

Previously, the Studios operated many separate islands, each with its own storage, servers and user workstations, and each managing its own file system. Files were copied from island to island across 1 Gb links, and there was no consistent file-naming convention. This made automating the workflow very difficult, and manual control was slow and serial.

The new infrastructure lets user systems, and the studio resources they rely on, work directly with the data across the SANs. Instead of moving files from island to island, a workstation or server reads and updates the data in place across a 4 Gb high-speed SAN. Partners can access this storage network "cloud" over an OC-48 link. At the center is media-specialized storage from DataDirect Networks (DDN), offering very high bandwidth and reliability. It is managed by a single xStreamScaler file storage system, based on Quantum's StorNext File System (SNFS), a clustered file system. The file system provides hierarchical storage management (HSM), with a Quantum Scalar i2000 LTO-4 tape library for archiving and backup.
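To put the old 1 Gb island links in perspective, the back-of-envelope arithmetic below estimates how long serial whole-file handoffs take. It is a sketch under stated assumptions: the 100 GB file size and five handoffs are hypothetical, the function names are illustrative, and transfers are modeled at nominal line rate with no protocol or encoding overhead, so real copies would be slower.

```python
"""Back-of-envelope cost of island-to-island file copies (illustrative only)."""


def copy_seconds(size_bytes: int, link_gbps: float) -> float:
    """Seconds to copy one file at a link's nominal rate, ignoring protocol overhead."""
    return size_bytes * 8 / (link_gbps * 1e9)


def serial_handoff_seconds(size_bytes: int, handoffs: int, link_gbps: float) -> float:
    """Old model: each island-to-island handoff copies the whole file before work resumes."""
    return handoffs * copy_seconds(size_bytes, link_gbps)


if __name__ == "__main__":
    file_bytes = 100 * 10**9  # hypothetical 100 GB source file
    handoffs = 5              # hypothetical number of production steps
    for gbps in (1.0, 4.0):
        total = serial_handoff_seconds(file_bytes, handoffs, gbps)
        print(f"{handoffs} copies over {gbps:.0f} Gb link: {total / 60:.1f} minutes")
    # On the shared SAN, each step opens the same file in place, so no handoff copy is needed.
```

Even a faster link only shrinks the copy time; the shared-SAN design removes the copies altogether, which is why the workstations can start work on content immediately.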

The new setup has two SANs, which will soon support 1.5 petabytes of data. One supports Microsoft's communications department and the other supports the Xbox Live environment.

Business Problem

Microsoft Xbox Live is an online gaming and multimedia community with rich graphics. As of January 9, 2009, Xbox Live had 17 million paying subscribers worldwide, across a global network spanning nearly 30 countries. Xbox Live lets its members play games against other online players and download demos and trailers. Microsoft has also recently partnered with Netflix to stream movies to the Xbox.

Microsoft Video Studios produce the content for Xbox Live and Microsoft's communications department. The production process takes a huge amount of raw data and boils it down to a relatively small set of content for delivery. Over time, the Studios had developed a workflow supported by an ad hoc series of stove-piped systems and processes. Individual production steps formed a hodgepodge of inputs and outputs along the production line, with no centralized naming conventions or classification schema. The production system was relatively slow and had no common storage repository.

The business was demanding three things of the Studios and its partners:

- Increased and faster delivery of video and audio content to Xbox Live and Zune, Microsoft's portable media player;

- Better utilization of original source content, including the ability to retain the original source content so it could easily be reused to enhance existing work or create new content;

- The ability to manage resources and Digital Rights effectively.

The key to improving content delivery was improving user productivity and the workflow between users. Any solution had to let users work on the platform of their choice, the one best suited to their training and the job at hand. It had to let heterogeneous protocols and operating systems share the data, provide fast, direct access to that data so that no time was wasted on transfers, and support remote access.

Solution Architecture

Kurt Grubaugh and his team looked at a number of different solutions. One key requirement was to move away from videotape to digital storage, which had to read and write fast enough and had to ensure frame integrity and quality. Another key requirement was a single file system that could handle both video and audio content.

One possible approach was a proprietary system from a single vendor (e.g., CXFS from SGI). However, the team believed that integrating a solution from different components would reduce costs in the long term, given the very high expected growth in capacity. The team tested a number of clustered file systems (e.g., MelioFS, PolyServe, EMC Celerra HighRoad). They chose the DDN xStreamScaler file storage system (based on the Quantum SNFS file system, originally called ‘CentraVision’ before it was bought by Quantum) because it could support the expected media file sizes, handle the characteristics of media file types well, and provide hierarchical storage management (HSM).

The next component to be evaluated was the disk storage. All the major storage vendors (e.g., EMC, Hitachi, HP, Sun) are good partners of Microsoft. One challenge was conveying to them how different media storage needs to be from traditional data center storage. To keep up with the users' systems, the storage has to read and write at four gigabytes per second or higher, and any error handling that interrupts that transfer rate severely affects user productivity. DDN storage was chosen because its specialized array hardware allows very high sustained write rates and provides a deep pipeline that corrects frames on the fly without disrupting video production. Microsoft chose a combination of FC storage (7.2 GB/s) and SATA storage (2.4 GB/s) as part of the HSM. Microsoft also valued DDN's ability to speak the language of media production, its support for the xStreamScaler file storage system, and its deep understanding of media storage.
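One way to relate these bandwidth figures to video work is to ask how many concurrent uncompressed streams each figure could sustain. This sketch assumes, hypothetically, 1920x1080 video with 10-bit 4:2:2 sampling (20 bits per pixel) at 30 frames per second and treats 1 GB as 10^9 bytes; the case study does not state the formats in use, and real arrays lose some throughput to file system and protocol overhead.

```python
"""How many uncompressed HD streams fit in a given sustained bandwidth (illustrative)."""

# Hypothetical stream format: 1920x1080, 10-bit 4:2:2 (20 bits per pixel), 30 fps.
WIDTH, HEIGHT = 1920, 1080
BITS_PER_PIXEL = 20
FPS = 30

# Bandwidth figures quoted in the case study, in bytes per second (1 GB = 10**9 bytes assumed).
TIERS = {
    "requirement": 4_000_000_000,
    "FC tier": 7_200_000_000,
    "SATA tier": 2_400_000_000,
}


def stream_bytes_per_second(width: int, height: int, bits_per_pixel: int, fps: int) -> int:
    """Sustained data rate of one uncompressed video stream."""
    bytes_per_frame = width * height * bits_per_pixel // 8
    return bytes_per_frame * fps


def max_streams(bandwidth_bps: int, stream_bps: int) -> int:
    """Whole streams that fit in the bandwidth; a partial stream would drop frames."""
    return bandwidth_bps // stream_bps


if __name__ == "__main__":
    per_stream = stream_bytes_per_second(WIDTH, HEIGHT, BITS_PER_PIXEL, FPS)
    print(f"one stream needs {per_stream / 1e6:.1f} MB/s")
    for name, bandwidth in TIERS.items():
        print(f"{name}: {max_streams(bandwidth, per_stream)} streams")
```

Under these assumptions the SATA figure alone falls below the 4 GB/s requirement, which fits its role as a capacity tier within the HSM rather than the primary working store.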

The next piece of the puzzle was selecting the tape component of the HSM. The tape system was designed to serve as both a backup and an archive system, and is housed in another building connected over dark fiber. Changes to content are backed up every ten minutes so that previous versions can be restored if necessary; this is temporary tape storage that is recycled under the control of the xStreamScaler file system. Microsoft also stores source content on the Quantum tape library for long-term archiving.
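The ten-minute backup cycle implies two simple rules: a restore uses the latest backup taken at or before the wanted moment, and backups older than some retention window can be recycled. The sketch below shows those rules under assumptions: the seven-day retention window and all function names are hypothetical, since the case study does not say how long temporary backups are kept or how xStreamScaler schedules recycling.

```python
"""Restore-point selection and tape recycling for ten-minute backups (illustrative)."""
from __future__ import annotations

from bisect import bisect_right
from datetime import datetime, timedelta

BACKUP_INTERVAL = timedelta(minutes=10)  # interval stated in the case study
RETENTION = timedelta(days=7)            # hypothetical: the recycle window is not stated


def backup_schedule(start: datetime, end: datetime) -> list[datetime]:
    """Backup times from start to end inclusive, one every BACKUP_INTERVAL."""
    times = []
    t = start
    while t <= end:
        times.append(t)
        t += BACKUP_INTERVAL
    return times


def restore_point(backups: list[datetime], wanted: datetime) -> datetime | None:
    """Latest backup taken at or before `wanted` (backups must be sorted)."""
    i = bisect_right(backups, wanted)
    return backups[i - 1] if i else None


def recyclable(backups: list[datetime], now: datetime) -> list[datetime]:
    """Backups older than the retention window, whose tape space can be reused."""
    return [b for b in backups if now - b > RETENTION]


if __name__ == "__main__":
    start = datetime(2009, 1, 9, 9, 0)
    backups = backup_schedule(start, datetime(2009, 1, 9, 10, 0))
    print("restore 9:37 from", restore_point(backups, datetime(2009, 1, 9, 9, 37)))
```

With a ten-minute interval, at most about ten minutes of edits are exposed between backups, while the retention window bounds how much temporary tape the scheme consumes.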

The Quantum Scalar i2000 tape library was chosen because it reads and writes at 4 GB/s, because it integrates with the DDN xStreamScaler file storage system (a small watch icon alerts users that a file is held on tape), and because it supports LTO-4 tapes. LTO-4 drive encryption allowed the content to be encrypted, securing it for Microsoft and its partners.
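The HSM behavior described above (files moving between disk and tape, with a visual cue when a file is on tape) can be sketched as an age-based placement policy. This is a hypothetical model, not xStreamScaler or StorNext internals: the tier names mirror the FC, SATA and tape components in the case study, but the 30- and 180-day thresholds, the recall rule and every name in the code are assumptions.

```python
"""Age-based HSM tier placement with a tape-resident flag (illustrative)."""
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    FC = "fc"      # fastest disk tier
    SATA = "sata"  # lower-cost disk tier
    TAPE = "tape"  # LTO-4 cartridges in the tape library


# Hypothetical thresholds; the case study does not describe the actual HSM policy.
SATA_AFTER_DAYS = 30
TAPE_AFTER_DAYS = 180


@dataclass
class MediaFile:
    name: str
    days_since_access: int
    tier: Tier = Tier.FC


def target_tier(f: MediaFile) -> Tier:
    """Choose a tier from how long ago the file was last accessed."""
    if f.days_since_access >= TAPE_AFTER_DAYS:
        return Tier.TAPE
    if f.days_since_access >= SATA_AFTER_DAYS:
        return Tier.SATA
    return Tier.FC


def migrate(files: list[MediaFile]) -> None:
    """Move each file to the tier its access age calls for."""
    for f in files:
        f.tier = target_tier(f)


def display_name(f: MediaFile) -> str:
    """Flag tape-resident files, standing in for the watch icon users see."""
    return f"{f.name} (on tape)" if f.tier is Tier.TAPE else f.name


def open_file(f: MediaFile) -> MediaFile:
    """Opening a tape-resident file recalls it to the fast disk tier first."""
    if f.tier is Tier.TAPE:
        f.tier = Tier.FC  # hypothetical recall target
    f.days_since_access = 0
    return f


if __name__ == "__main__":
    library = [MediaFile("trailer.mov", 2), MediaFile("raw_2007.dpx", 400)]
    migrate(library)
    print([display_name(f) for f in library])
```

The point of the flag is that users are not surprised by recall latency: a file that looks like any other may take longer to open while the library mounts its cartridge.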

The last major component was integrating the OC-48 network "cloud" for remote partners, together with the switching for all parts of the network. Cisco and Brocade were chosen as the vendors for this part of the architecture.

Microsoft used its own Permissions software to manage security, access control and Digital Rights, with some extensions to deal with additional protocols and operating systems.

File system metadata performance is critical to this architecture and fundamental to locating content quickly. The redundant metadata controllers require very fast I/O access to their clustered storage, which was maxing out at 500 IOPS. This led to the introduction of a one-terabyte RamSan non-volatile RAM storage system to give faster access to the SNFS metadata, which now handles 1,800 IOPS.
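The effect of moving from 500 to 1,800 metadata IOPS is easiest to see as time per batch of metadata operations. This arithmetic sketch assumes, for simplicity, that each metadata operation costs one I/O at a steady sustained rate with no caching; the 9,000-operation batch is hypothetical.

```python
"""Effect of metadata IOPS on lookup time (illustrative arithmetic)."""

BEFORE_IOPS = 500   # metadata ceiling on clustered disk, per the case study
AFTER_IOPS = 1_800  # with metadata on the RamSan, per the case study


def seconds_for(operations: int, iops: int) -> float:
    """Time to complete a batch of metadata operations at a sustained IOPS rate."""
    return operations / iops


if __name__ == "__main__":
    ops = 9_000  # hypothetical batch, e.g. a large directory scan
    print(f"speedup: {AFTER_IOPS / BEFORE_IOPS:.1f}x")
    print(f"{ops} ops: {seconds_for(ops, BEFORE_IOPS):.1f} s before, "
          f"{seconds_for(ops, AFTER_IOPS):.1f} s after")
```

The ratio, not the absolute batch size, is what matters: every metadata-bound task, such as searching for content, runs about 3.6 times faster under these assumptions.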

Adoption Challenges

Prior to the implementation, the Studios had many different platforms (e.g. Windows, Unix, etc.) each with its own access control and security mechanisms. The new architecture had to be hardware and OS agnostic, and integrating multiple platforms has not been trivial.

In addition, several groups within the Studios have been unable to grasp the concept of all participants having access to the same content. From the company's perspective, shared access shortens the overall production lifecycle. However, it requires individual departments and partners to change their business processes to exploit the capabilities of the new approach.

The migration of content from legacy storage to the SAN infrastructure has been slower than expected. This is due to the introduction of new media types and other organizational issues (e.g. process changes).

A key part of the integration has been gaining cooperation and interoperability with the SAN topology across the vendor portfolio. Grubaugh simplified the discussion with the vendor base by describing the new architecture as appearing to applications as a “really big disk drive.” This approach has addressed compatibility issues with third-party software vendors.

Currently, the SAN supports 130 clients. This number will double with the addition of compression software running on new multicore blade servers.

One future direction that Microsoft is examining carefully is integrating solid-state disks (SSDs) into the storage subsystem. Another opportunity to improve performance is spreading I/O across more DDN disk controllers.

Conclusions

Media infrastructure needs to be architected in a radically different manner from traditional IT infrastructures. Microsoft Xbox Live Studios clearly identified the key business requirements and architected a solution from scratch that dramatically enhanced its business capability and at the same time kept total costs under control.

Technologies from DDN and Quantum were critical to the success of this architecture. The xStreamScaler file storage system provides the "glue" that lets users find and manage content. DDN's deep pipeline approach allowed Microsoft to give its users direct access to data, because DDN's storage could sustain reading and writing content without error.

While the trend in traditional enterprise computing is toward disk-based backup and data de-duplication, video applications are not good candidates for such data reduction approaches. As a result, tape is the more economical medium in this environment. The DDN xStreamScaler file storage system provides the HSM capability and is integrated with the Quantum tape library, and the use of LTO-4 tapes allows Microsoft and its partners to protect their assets.
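The point about de-duplication can be illustrated with a simple fixed-size chunking model: data shrinks only when identical chunks recur. This is a sketch, not a measurement of Microsoft's content; the 4 KB chunk size, the name `dedup_ratio`, and the use of random bytes as a stand-in for already-compressed video (which has high entropy) are all assumptions.

```python
"""Fixed-size chunk deduplication ratio (illustrative)."""
import hashlib
import random

CHUNK = 4096  # hypothetical chunk size in bytes


def dedup_ratio(data: bytes, chunk_size: int = CHUNK) -> float:
    """Logical chunks divided by unique chunks; 1.0 means nothing deduplicates."""
    digests = [
        hashlib.sha256(data[i:i + chunk_size]).digest()
        for i in range(0, len(data), chunk_size)
    ]
    if not digests:
        return 1.0
    return len(digests) / len(set(digests))


if __name__ == "__main__":
    rng = random.Random(0)
    # High-entropy bytes stand in for already-compressed video.
    video_like = rng.randbytes(100 * CHUNK)
    # The same 4 KB block stored 100 times stands in for highly redundant data.
    redundant = rng.randbytes(CHUNK) * 100
    print(f"video-like data: {dedup_ratio(video_like):.1f}x")
    print(f"redundant data: {dedup_ratio(redundant):.1f}x")
```

Production de-duplication engines use smarter, variable-size chunking, but they still cannot find repetition that unique, high-entropy content does not contain, which is why the cost advantage shifts to tape here.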

Microsoft's integration of a classification schema and a hierarchical storage manager was a critical enabler of this architecture. This approach has tripled the overall workflow throughput and production rate, with better access to content and an increased ability to repurpose that content for business value. In theory, this content was previously accessible within the Studios' many silos, but finding specific content was often a needle-in-a-haystack problem.

Wikibon believes that the Microsoft Studios team clearly identified the business requirements, and that the infrastructure it designed will allow it to attain its business goals. User productivity was maximized by creating a private cloud of users with direct access to the resources they need to do the job.

Wikibon believes that the overall architecture designed by the Microsoft Studios IT team is a harbinger of a more network-centric approach to designing IT infrastructure.

Appendix

DDN is unique in that its architecture uses ten parallel paths in a RAID-6 (8+2) configuration, funneling data through a deep pipeline so that, if necessary, errors in the data stream (reads and writes) can be reconstructed and corrected on the fly and no frames are lost. DDN maintains a very high data rate of more than 6 GB/s for both reads and writes. This capability is enabled by DDN's deep pipeline architecture, a custom hardware design that manages data flow and data integrity. This highly parallel architecture can use SATA disks for many video streams, which reduces the cost of disk storage by a factor of three or more.
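DDN's pipeline is custom hardware whose internals this case study does not describe. As a generic illustration of what an 8+2 RAID-6 stripe makes possible, the sketch below uses the standard P+Q construction (P as XOR parity, Q as a Reed-Solomon-style syndrome over GF(2^8) with generator 2, as documented for Linux md RAID-6) to rebuild one or two missing data chunks. It is not DDN's implementation; names such as `compute_pq` and `recover_two` are hypothetical, and a real array works on whole sectors with table-driven or SIMD arithmetic.

```python
"""RAID-6 P+Q parity over GF(2^8) for an 8+2 stripe (illustrative)."""
from __future__ import annotations

# GF(2^8) antilog/log tables for x^8 + x^4 + x^3 + x^2 + 1 (0x11d), generator g = 2.
EXP = [0] * 512
LOG = [0] * 256
_x = 1
for _i in range(255):
    EXP[_i] = _x
    LOG[_x] = _i
    _x <<= 1
    if _x & 0x100:
        _x ^= 0x11D
for _i in range(255, 512):
    EXP[_i] = EXP[_i - 255]  # duplicate so LOG[a] + LOG[b] never needs a modulo


def gf_mul(a: int, b: int) -> int:
    """Multiply two field elements via log tables."""
    if a == 0 or b == 0:
        return 0
    return EXP[LOG[a] + LOG[b]]


def gf_inv(a: int) -> int:
    """Multiplicative inverse of a nonzero field element."""
    return EXP[255 - LOG[a]]


def compute_pq(data: list[bytes]) -> tuple[bytes, bytes]:
    """P = XOR of the data chunks; Q = sum of g^i * D_i in GF(2^8), byte by byte."""
    size = len(data[0])
    p, q = bytearray(size), bytearray(size)
    for i, chunk in enumerate(data):
        coef = EXP[i]  # g^i
        for j, byte in enumerate(chunk):
            p[j] ^= byte
            q[j] ^= gf_mul(coef, byte)
    return bytes(p), bytes(q)


def recover_one(chunks: list[bytes | None], p: bytes) -> list[bytes]:
    """Rebuild a single missing data chunk from P and the surviving chunks."""
    (x,) = [i for i, c in enumerate(chunks) if c is None]
    rebuilt = bytearray(p)
    for i, chunk in enumerate(chunks):
        if i != x:
            for j, byte in enumerate(chunk):
                rebuilt[j] ^= byte
    out = list(chunks)
    out[x] = bytes(rebuilt)
    return out


def recover_two(chunks: list[bytes | None], p: bytes, q: bytes) -> list[bytes]:
    """Rebuild two missing data chunks using both P and Q."""
    x, y = [i for i, c in enumerate(chunks) if c is None]
    size = len(p)
    # Parity of the survivors alone (missing chunks treated as zero).
    pxy, qxy = compute_pq([c if c is not None else bytes(size) for c in chunks])
    inv = gf_inv(EXP[x] ^ EXP[y])  # 1 / (g^x + g^y)
    dx, dy = bytearray(size), bytearray(size)
    for j in range(size):
        s = p[j] ^ pxy[j]  # D_x + D_y
        t = q[j] ^ qxy[j]  # g^x * D_x + g^y * D_y
        dx[j] = gf_mul(t ^ gf_mul(EXP[y], s), inv)
        dy[j] = s ^ dx[j]
    out = list(chunks)
    out[x], out[y] = bytes(dx), bytes(dy)
    return out


if __name__ == "__main__":
    stripe = [bytes((17 * i + j) % 256 for j in range(16)) for i in range(8)]  # 8 data chunks
    p, q = compute_pq(stripe)  # the "+2" parity chunks
    damaged = list(stripe)
    damaged[2] = damaged[5] = None  # two simultaneous disk failures
    print("rebuilt:", recover_two(damaged, p, q) == stripe)
```

Because two of every ten chunks hold parity, the stripe stores 80% usable data; that is the capacity cost of tolerating any two simultaneous disk failures.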

Footnotes: Legal: © Wikibon 2009. This document is copyright protected by Wikibon and does not fall under the GNU general license terms for Wikibon.org. Links to this article from external sources are allowed, however any other re-distribution of this content for commercial purposes is strictly prohibited. Please contact Wikibon for more information.

The cases cited herein are real. Wikibon reports actual customer experiences and results with no attempt to emphasize any one vendor’s strengths or weaknesses.