
This "sandbox" page is for carrying out experiments. Please feel free to try your formatting skills here. If you want to learn more about how to edit a wiki, please read the wikitext tutorial at Wikipedia.

To edit, click here or "edit" at the top of the page, make your changes in the dialog box, and click the "Save page" button when you are finished.

Content added here will not stay permanently; this page is cleared regularly.

Testing Area

Introduction

GZIP data compression plays a critical role in increasing performance and smashing the monstrous energy costs of big data systems. With compressed data, systems require less energy and fewer computing resources to store, transmit, and process data.

Consequently, GZIP compression has been adopted by many applications, such as web servers, WAN optimization, financial trading systems, data storage, genomics, and even Hadoop.

Unfortunately, some users don't use GZIP because it can heavily load CPUs, decreasing network performance and energy efficiency. Fortunately, GZIP hardware can offload data compression from CPUs and offers the following benefits in big data systems:

- Increases System Performance:

  - GZIP hardware increases performance by removing CPU bottlenecks and increasing network I/O.

- Reduces Operational Expenditures (OpEx):

  - Huge energy cost savings from GZIP hardware create a break-even point on the order of weeks.

- Reduces Capital Expenditures (CapEx):

  - Systems require fewer compute nodes since CPUs operate more efficiently.

- Integrates Plug and Play:

  - Our customers are surprised at how easy our drop-in replacement for GZIP and ZLIB is.

Through GZIP hardware offloading, systems gain network I/O and energy efficiency while requiring less OpEx and CapEx.

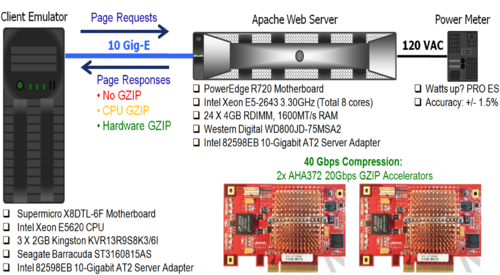

To demonstrate the benefits of GZIP hardware, AHA created a hardware offloading experiment using a web server. A hardware configuration diagram of the experiment is shown below.

GZIP Hardware Offloading Experiment

In this experiment, the client emulator floods the Apache web server with page requests. For performance comparisons, the web server serves its page responses in three modes: no GZIP, CPU GZIP, and hardware GZIP. Hardware GZIP compression is provided by two AHA372 accelerators. Additionally, a power meter measures the power, in watts, drawn by the system. Through this configuration, we demonstrate how GZIP compression affects bottlenecks, optimizes workloads, and reduces operational and capital costs.

Evaluation Metrics

For performance comparisons, we show a strip chart with rolling averages of the following metrics:

- Effective Throughput of the 10 Gig-E link

- CPU Load of the Apache Web Server

- Energy Efficiency (Joules/Gigabit Transmitted) of the Apache Web Server
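Watts are joules per second and throughput is gigabits per second, so the Joule/Gigabit metric is power divided by throughput. The following is a minimal sketch of that metric and of a rolling average like the one plotted in a strip chart. It assumes that power-meter and throughput samples arrive as plain numbers at the same sampling interval. The window size, the `RollingAverage` helper, and the sample readings are hypothetical and are not taken from the experiment.

```python
from collections import deque


def joules_per_gigabit(power_watts: float, throughput_gbps: float) -> float:
    """Energy efficiency metric: watts (J/s) divided by Gbps (Gb/s) gives J/Gb."""
    if throughput_gbps <= 0:
        raise ValueError("throughput must be positive to compute J/Gb")
    return power_watts / throughput_gbps


class RollingAverage:
    """Hypothetical helper: mean of the most recent `window` samples, like one strip-chart trace."""

    def __init__(self, window: int) -> None:
        self.samples: deque = deque(maxlen=window)  # oldest sample drops out automatically

    def add(self, value: float) -> float:
        """Record a new sample and return the current rolling average."""
        self.samples.append(value)
        return sum(self.samples) / len(self.samples)


if __name__ == "__main__":
    # Hypothetical (watts, Gbps) readings; not measurements from the experiment.
    readings = [(300.0, 9.0), (310.0, 8.8), (305.0, 9.1)]
    efficiency = RollingAverage(window=2)
    for watts, gbps in readings:
        print(f"{efficiency.add(joules_per_gigabit(watts, gbps)):.1f} J/Gb")
```

Lower J/Gb is better: it means fewer joules are spent for each gigabit the server transmits.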

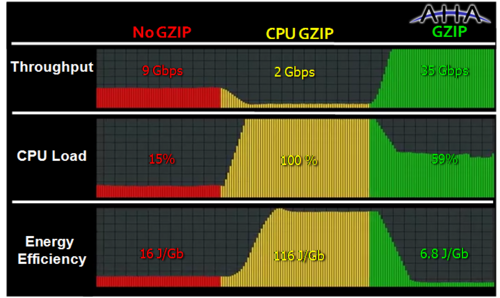

The strip chart in the figure below shows these metrics in the bars labeled Throughput, CPU Load, and Energy Efficiency for the no GZIP, CPU GZIP, and AHA GZIP cases. Using this data, we show the need for GZIP hardware and the performance gains from removing CPU bottlenecks and increasing network I/O.

Increasing System Performance

CPUs are Inefficient at Performing GZIP

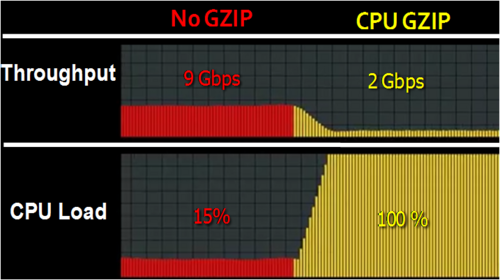

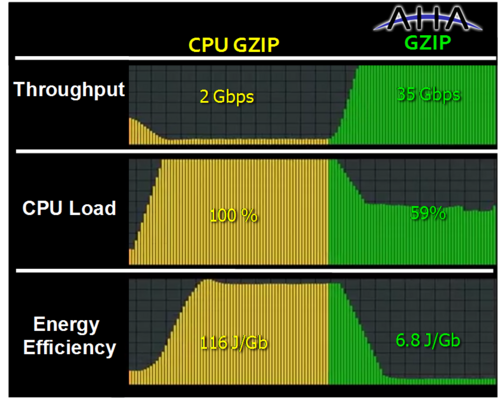

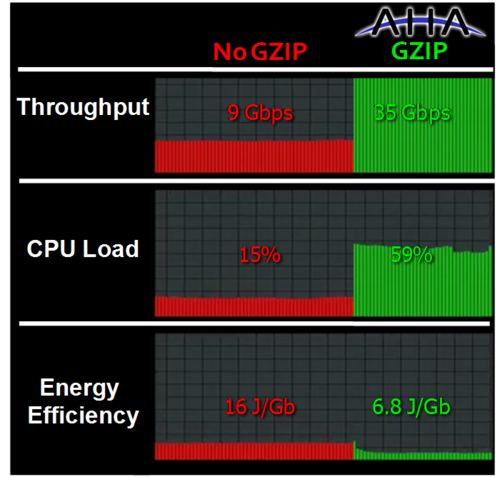

To illustrate the need for GZIP hardware, notice the drop in throughput and the increase in CPU Load between the no GZIP and CPU GZIP cases. A focused comparison is shown below. With no GZIP, the web server responds with web pages at 9 Gbps. With CPU GZIP, however, throughput drops by about 4.5x, to 2 Gbps. Additionally, the 8-core CPU is at 100% load and consumes 7x more energy. Thus, the CPU is inefficient at simultaneously serving pages and performing compression. In this case, GZIP creates a CPU bottleneck, which calls for offloading GZIP onto hardware.

GZIP Hardware Removes CPU Bottlenecks

GZIP hardware excels at removing CPU bottlenecks. The figure below shows large gains in performance and energy efficiency over the CPU performing GZIP. Hardware GZIP has an 18x throughput advantage and a 17x energy efficiency advantage over CPU GZIP. Even at full load on all 8 cores, CPU GZIP delivers only about 6% of the throughput of the GZIP hardware case. Finally, CPU cycles remain available for other tasks.
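The headline throughput ratios in this section and the previous one come directly from the throughputs reported for each case: 9 Gbps with no GZIP, 2 Gbps with CPU GZIP, and 35 Gbps with hardware GZIP. The short check below reproduces that arithmetic. Because it uses only those rounded figures, the resulting ratios are approximate.

```python
# Effective throughputs reported for the web server experiment, in Gbps.
# These are the rounded values quoted in the text.
THROUGHPUT_GBPS = {
    "no_gzip": 9.0,
    "cpu_gzip": 2.0,
    "hw_gzip": 35.0,
}


def speedup(case: str, baseline: str) -> float:
    """How many times higher the throughput of `case` is than that of `baseline`."""
    return THROUGHPUT_GBPS[case] / THROUGHPUT_GBPS[baseline]


if __name__ == "__main__":
    print(f"no GZIP vs CPU GZIP: {speedup('no_gzip', 'cpu_gzip'):.1f}x")  # 4.5x
    print(f"HW GZIP vs CPU GZIP: {speedup('hw_gzip', 'cpu_gzip'):.1f}x")  # 17.5x, quoted as 18x
    print(f"CPU GZIP as share of HW GZIP: {speedup('cpu_gzip', 'hw_gzip'):.0%}")  # 6%
```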

GZIP Hardware Increases Network I/O

In addition to removing CPU bottlenecks, GZIP hardware excels at increasing network I/O. The figure below shows nearly a 4x increase in throughput (from 9 Gbps to 35 Gbps) from the no GZIP case to the hardware GZIP case. Since our maximum link throughput is 10 Gbps, we reach the maximum effective data rate possible for the given compression ratio (10 Gbps link * 3.5:1 compression ratio = 35 Gbps effective throughput). As noted earlier, this experiment used two AHA372s for an aggregate input compression rate of 40 Gbps¹. However, the hardware GZIP case is limited to 35 Gbps because the data has a compression ratio of approximately 3.5:1.
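The effective rate is therefore the smaller of two limits. The first is link bandwidth multiplied by compression ratio. The second is the compressor's aggregate input rate. The following is a minimal sketch of that model. It assumes the link and the compressor are the only bottlenecks and ignores CPU, PCIe, and protocol overhead. The function name `effective_throughput_gbps` is hypothetical.

```python
def effective_throughput_gbps(
    link_gbps: float, compression_ratio: float, compressor_input_gbps: float
) -> float:
    """Uncompressed data rate delivered when data is compressed before the link.

    Two ceilings apply. The link carries link_gbps of *compressed* data, which
    corresponds to link_gbps * compression_ratio of original data. The
    compressor cannot accept input faster than compressor_input_gbps.
    The smaller ceiling wins.
    """
    link_limit = link_gbps * compression_ratio
    return min(link_limit, compressor_input_gbps)


if __name__ == "__main__":
    # 10 Gbps link, 3.5:1 data, two AHA372s (40 Gbps aggregate): link-bound.
    print(effective_throughput_gbps(10.0, 3.5, 40.0))  # 35.0
    # Same hardware with 6:1 data: compressor-bound.
    print(effective_throughput_gbps(10.0, 6.0, 40.0))  # 40.0
```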

When adding compression to increase network I/O, it is important to know the compression ratio of the data so that you can select hardware with a sufficient compression rate for the application. In this experiment, we used two AHA372s for a maximum input compression rate of 40 Gbps, and the compression ratio limited throughput to 35 Gbps. If the data had a higher compression ratio, such as 6:1 or 8:1, we would need faster hardware, such as the AHA378, to reach the maximum effective data rate possible for those compression ratios (60 Gbps and 80 Gbps, respectively).
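One way to apply this is to estimate the compression ratio from a representative data sample and then compare link rate times ratio against the available compressors. The sketch below measures the ratio with Python's software `gzip` module. That is an assumption: a hardware compressor may achieve a somewhat different ratio on the same data. The hardware list contains only the two configurations named in this section. The helpers `compression_ratio` and `pick_hardware` and the sample payload are hypothetical.

```python
import gzip
from typing import Optional, Tuple

# Aggregate compressor input rates named in this section, in Gbps.
HARDWARE_OPTIONS = [
    ("two AHA372s", 40.0),
    ("AHA378", 80.0),
]


def compression_ratio(sample: bytes, level: int = 6) -> float:
    """Original size divided by GZIP-compressed size (software gzip, not hardware)."""
    return len(sample) / len(gzip.compress(sample, compresslevel=level))


def pick_hardware(link_gbps: float, ratio: float) -> Tuple[Optional[str], float]:
    """Return the first listed option that can fill the link, plus the input rate needed."""
    needed_gbps = link_gbps * ratio
    for name, input_gbps in HARDWARE_OPTIONS:
        if input_gbps >= needed_gbps:
            return name, needed_gbps
    return None, needed_gbps  # no listed option is fast enough


if __name__ == "__main__":
    # Hypothetical sample; substitute representative data from the application.
    sample = b"<html><body>" + b"<p>hello, world</p>" * 5000 + b"</body></html>"
    print(f"measured ratio {compression_ratio(sample):.1f}:1")
    print(pick_hardware(10.0, 3.5))  # ('two AHA372s', 35.0)
    print(pick_hardware(10.0, 8.0))  # ('AHA378', 80.0)
```

The highly repetitive sample above compresses far better than typical web pages. Use real traffic to obtain a meaningful ratio.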

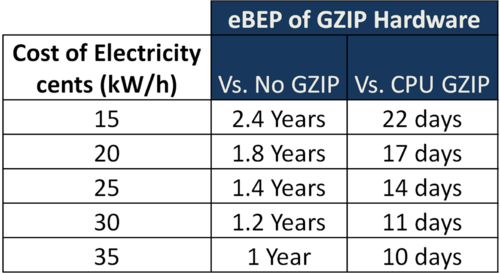

Reducing OpEx

In addition to the performance benefits, GZIP hardware reduces OpEx by reducing the amount of energy consumed. Using the data from this experiment, we can calculate when the cost of the energy saved equals the cost of the GZIP hardware, which we call the energy break-even point (eBEP). The eBEP is shown below for a given cost of electricity and is calculated against the no GZIP and CPU GZIP cases. In areas where electricity is expensive (e.g., Japan, Australia, and Germany), compression hardware can easily pay for itself many times over in energy costs alone.
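The exact eBEP formula is not given here. The following is a minimal sketch under one plausible assumption: savings are the difference in energy per gigabit between the baseline case and the hardware case, multiplied by the data volume served per day and priced per kWh. All parameter names and input values are hypothetical.

```python
J_PER_KWH = 3.6e6  # 1 kWh = 3.6 million joules


def energy_break_even_days(
    hardware_cost: float,
    baseline_j_per_gb: float,
    offload_j_per_gb: float,
    gigabits_per_day: float,
    price_per_kwh: float,
) -> float:
    """Days until electricity savings equal the hardware price (hypothetical model).

    baseline_j_per_gb: energy per gigabit without GZIP hardware (no GZIP or CPU GZIP case)
    offload_j_per_gb:  energy per gigabit with GZIP hardware
    gigabits_per_day:  data volume served per day, assumed equal in both cases
    price_per_kwh:     electricity price, in the same currency as hardware_cost
    """
    joules_saved_per_day = (baseline_j_per_gb - offload_j_per_gb) * gigabits_per_day
    savings_per_day = joules_saved_per_day / J_PER_KWH * price_per_kwh
    if savings_per_day <= 0:
        return float("inf")  # hardware never pays for itself in energy alone
    return hardware_cost / savings_per_day


if __name__ == "__main__":
    # Hypothetical inputs chosen for easy arithmetic: 36 J/Gb saved on 1e5 Gb/day is 1 kWh/day.
    print(energy_break_even_days(100.0, 40.0, 4.0, 1e5, 0.5))  # 200.0
```

A higher electricity price raises the daily savings and shortens the break-even time in direct proportion.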

Reducing CapEx

In addition to reducing OpEx, compression hardware reduces CapEx by creating equally performing systems with less hardware. We can determine the reduction in CapEx by revisiting how hardware GZIP removes CPU bottlenecks and increases network I/O. To show the CapEx reduction from removing CPU bottlenecks, we revisit the comparison between CPU GZIP and hardware GZIP. In that comparison, we noted an 18x difference in throughput in an earlier figure. To match the performance of GZIP hardware, the CPU GZIP case would require 18 CPUs, i.e., compute nodes (18 CPUs * 2 Gbps = 36 Gbps). Further utilization optimizations are also possible in the hardware GZIP case, since CPU load is only 59%. Theoretically, adding another 10Gig-E card and an 80 Gbps AHA378 card could make fuller use of the CPU and achieve a throughput of 70 Gbps.
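The node count is the target throughput divided by per-node throughput, rounded up. The 70 Gbps figure is two 10 Gbps links multiplied by the 3.5:1 ratio. That result stays under the AHA378's 80 Gbps input rate. The sketch below checks both numbers using the rounded figures from the text. It assumes the 3.5:1 ratio holds and that the links and the compressor are the only limits. The function names are hypothetical.

```python
import math


def nodes_needed(target_gbps: float, per_node_gbps: float) -> int:
    """Smallest whole number of nodes whose combined throughput meets the target."""
    return math.ceil(target_gbps / per_node_gbps)


def link_bound_throughput(
    links: int, link_gbps: float, ratio: float, compressor_gbps: float
) -> float:
    """Effective throughput with `links` NICs, capped by the compressor input rate."""
    return min(links * link_gbps * ratio, compressor_gbps)


if __name__ == "__main__":
    # CPU GZIP nodes at 2 Gbps each needed to match 35 Gbps of hardware GZIP.
    print(nodes_needed(35.0, 2.0))  # 18
    # Theoretical upgrade: two 10Gig-E links, 3.5:1 data, 80 Gbps AHA378.
    print(link_bound_throughput(2, 10.0, 3.5, 80.0))  # 70.0
```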

To show the CapEx reduction in the network I/O case, we revisit the comparison between the no GZIP and hardware GZIP cases. To exceed the throughput of hardware compression (35 Gbps), the no GZIP case requires replacing the 10Gig-E card with a 40Gig-E card, resulting in a much higher CapEx for network hardware (i.e., network cards, cables, and switches). In cost terms, the CapEx of a single 40Gig-E switch alone can equal the total cost of GZIP hardware for an entire network. With GZIP hardware offloading, systems with 10Gig-E infrastructure can achieve close to 40Gig-E speeds by installing much less expensive PCIe cards.

Integrates Plug and Play

Although our experiment does not illustrate it, integrating AHA GZIP hardware is plug and play. After you install the PCIe card, the entire driver installation procedure consists of five command-line entries. Additionally, to support drop-in replacement of ZLIB functionality, AHA provides a replacement for the Linux ZLIB library, which brings compression offload to existing applications immediately. For expert users, complete source code for drivers and utilities is provided. For more complex applications, AHA also provides direct engineering support for integration. Based on our engineering support and hardware performance, our recent customers have asked, "Why isn't everyone using this?" It's even easy to integrate this hardware into Hadoop, high-frequency trading, storage, and genomics data applications.

Summary and Conclusion

GZIP compression hardware offloading is a clear winner when it comes to energy cost reduction and performance improvement. Hardware compression creates more efficient network I/O, which optimizes workloads on computing systems and reduces energy costs. With optimized workloads, fewer computing nodes are required, resulting in lower capital and operating costs. GZIP hardware offloading increases system performance, reduces OpEx and CapEx, and integrates plug and play. Although big data applications can consume a monstrous amount of resources, GZIP hardware increases performance and smashes costs.

About AHA Products Group

The AHA Products Group (AHA) of Comtech EF Data Corporation develops and markets application-specific integrated circuits (ASICs), boards, and intellectual property core technology for communications systems applications. Located in Moscow, Idaho, AHA has been providing leading-edge Forward Error Correction and Lossless Data Compression technology for almost three decades. AHA offers a variety of standard and custom hardware solutions for the communications industry. Visit us at www.aha.com.

Don't worry, you won't break anything in here (we hope!). Really?

GZIP is Awesome

No this is Awesome

Portal Links:

- Storage

- Information Management

- Storage Networks

- CAS (move from cascommunity.org)

- Data Protection

- Mobile Enterprise

- Sustainability

- Cloud Computing

- Performance Lab

- Virtualization Portal

- Careers


Tag Cloud

Tutorial Tab Format

| Tab 1 | Tab 2 | Tab 3 | Tab 4 | Tab 5 | Tab 6 |
|---|---|---|---|---|---|
| AND THE CONTENT GOES HERE AND THE CONTENT GOES HERE | | | | | |


Word2wiki converter of table

| Storage Facts, Figures, Estimates and Rules of Thumb |

| This table is compiled from a wide variety of sources and is intended to serve as planning guidelines for storage and data management planning activities. |


| Wikibon Planning Assumption |

| By 2012, 50% of organizations will be vegetarian and the longer I type the shorter it gets |

| Planning Assumption | By 2012, 50% of organizations will be vegetarian |

___________________

TEST AREA HERE FOR NOTES

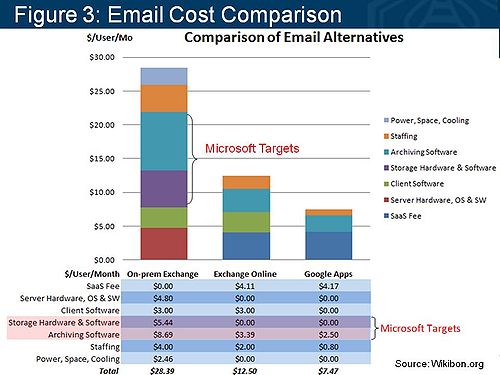

- Analysis of 10,000-seat deployments. Function and pricing vary between on-premise and cloud offerings. Cloud archiving and spam filtering software: Exchange Hosted Archive (Microsoft) and Postini (Google).

- Google Apps costs $50/user/year for 25GB of email storage. Google's archiving and spam filtering service (Google Message Discovery/Postini) costs $45/user/year ($30 for volume customers) and includes unlimited storage for ten years.

- For archiving, Google provides RAID-protected disk storage located in two geographically distinct locations. Google claims to index message data and then write it to two separate locations for long-term storage.
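For the 10,000-seat deployment analyzed here, the per-user prices above multiply out to an annual cloud cost. The quick sketch below works through that arithmetic using only the quoted list prices. It ignores taxes, migration, and any discounts beyond the stated volume price. The constant names and the `annual_cost` helper are hypothetical.

```python
SEATS = 10_000

# Per-user annual list prices quoted above, in USD.
GOOGLE_APPS = 50
MESSAGE_DISCOVERY = 45
MESSAGE_DISCOVERY_VOLUME = 30


def annual_cost(seats: int, *per_user_prices: int) -> int:
    """Total yearly cost for `seats` users paying the sum of the given per-user prices."""
    return seats * sum(per_user_prices)


if __name__ == "__main__":
    print(annual_cost(SEATS, GOOGLE_APPS, MESSAGE_DISCOVERY))  # 950000
    print(annual_cost(SEATS, GOOGLE_APPS, MESSAGE_DISCOVERY_VOLUME))  # 800000
```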

This analysis underscores why Microsoft’s Exchange group is motivated to try to attack the two biggest culprits of on-premise costs, storage and archiving. Microsoft’s strategy has consistently been to develop ‘good enough’ solutions for smaller customers, bundle more function into its software stack, and maintain its pricing umbrella.

Microsoft’s recommended storage strategy calls for DAS, which will presumably help lower Exchange TCO. However, as we explore in Part Two of this series, larger customers must consider the bigger picture and evaluate costs, availability, and business value across the entire application portfolio. Considering Exchange in isolation can often increase costs and pose unnecessary information risks to organizations.