

Introduction

As part of the recent refresh of its unified storage array line, NetApp introduced compression as an option for Flex Volumes. This is a no-charge feature that can be selected by volume, with or without the A-SIS de-duplication feature.

NetApp Compression Implementation

NetApp uses the Lempel-Ziv-Oberhumer (LZO) lossless data compression algorithm. The copyright for the algorithm is owned by Markus F. X. J. Oberhumer. This compression implementation has the following characteristics:

- The algorithm is designed for compression/decompression speed at the expense of compression ratios

- The block size for the compression is 32K. Eight (8) 4K pages are assembled and assessed for compression. If the group can be compressed by 25% or more, the 32K block is written out to 6 or fewer 4K blocks; otherwise it is written uncompressed. The maximum compression is 8:1 (87.5%, or roughly 88%), and the minimum compression is 0%. Decompression is fast and inline.

- No ASIC is used – the storage controller is used for compression and decompression

- According to NetApp, a typical compression overhead ranges from 150 to 900 microseconds per 32K chunk. In the CORE calculation we have used 750 microseconds. The worst case is when data cannot be compressed and the controller is busy: the compression attempt must still be made before the data is written uncompressed, which is the price of guaranteeing a minimum compression of 0% and never increasing the size of the data.

- The processor requires an additional 64K buffer during compression

- The algorithm requires no additional memory for decompression other than the source and destination buffers, both 32K.

- Theoretically, user parameters could be exposed to allow a trade-off between compression quality and compression speed, without affecting the speed of decompression. NetApp has not implemented any user access to these parameters.

- The implementation supports block or file in the same way (no additional file information is used)

- In the NetApp scenario, data is first compressed, and can then be de-duplicated

- There is no charge for the compression feature

- Compression can be implemented on Flex Volumes only and requires the latest level of OnTap, 8.0.1

- Compression is available on the 3x00 and 6x00 NetApp product lines, but not on the lower-end systems.
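The 32K grouping rule described in the list above can be sketched as follows. This is a minimal illustration, not NetApp's code: Python's standard `zlib` is used as a stand-in because LZO is not in the standard library, and the names `store_group` and `pages_needed` are hypothetical.

```python
import os
import zlib
from typing import Tuple

PAGE_SIZE = 4 * 1024       # one 4K page
PAGES_PER_GROUP = 8        # eight pages are assembled into one 32K group
GROUP_SIZE = PAGE_SIZE * PAGES_PER_GROUP
MAX_COMPRESSED_PAGES = 6   # a 25% saving on 8 pages means 6 or fewer pages


def pages_needed(num_bytes: int) -> int:
    """Number of whole 4K pages required to hold num_bytes."""
    return -(-num_bytes // PAGE_SIZE)  # ceiling division


def store_group(group: bytes) -> Tuple[bool, int]:
    """Return (stored_compressed, pages_written) for one 32K group.

    Assumption: zlib at level 1 stands in for LZO; real ratios will differ.
    """
    if len(group) != GROUP_SIZE:
        raise ValueError("expected exactly one 32K group")
    compressed = zlib.compress(group, 1)  # level 1 favors speed over ratio
    needed = pages_needed(len(compressed))
    if needed <= MAX_COMPRESSED_PAGES:
        return True, needed               # saving of 25% or more: keep it
    return False, PAGES_PER_GROUP         # otherwise write the 8 pages as-is


# Best possible saving: eight pages stored in one page (8:1).
MAX_SAVING = 1 - 1 / PAGES_PER_GROUP

if __name__ == "__main__":
    print(store_group(bytes(GROUP_SIZE)))       # highly compressible data
    print(store_group(os.urandom(GROUP_SIZE)))  # effectively incompressible
    print(MAX_SAVING)
```

Note that the incompressible case still pays for the compression attempt before falling back to an uncompressed write, which is the worst case described above.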

More Detail on NetApp Compression

For those who want a deeper dive:

- Wikibon understands that the data is not compressed in the cache (it is uncompressed when the data is read into cache)

- There are two types of NetApp snap: a Logical snap (qtree) and a Physical volume snap (Volume Snap Mirror, or VSM). If qtree is used, the data is uncompressed (and rehydrated if de-duplicated) before it is sent to the second copy. If the second copy is remote, this increases line costs. If VSM is used, data compression is maintained over the mirror.

- Synchronous Mirroring is not supported together with compression (or de-duplication)

- If data compression is turned off for a volume, new data is not compressed. Old compressed data is not affected unless it is updated, at which point it is uncompressed. There is an "Uncompress" option that allows storage administrators to run a batch job to uncompress the complete volume.

General Issues with Capacity Optimization

- Compression and de-duplication (and encryption) reduce the recoverability of data from bit errors, and require higher data protection for the same RPO.

- Wikibon believes that data is always decompressed or re-hydrated when leaving the storage controller

- There is significant overhead in using the storage controller for compression. However, there is a general trend away from using ASICs and towards using additional cores in Intel processors for software implementations of functions (ASIC implementations are usually slower, and processor cores tend to be underutilized).

- Wikibon expects that with higher performance controllers, NetApp will introduce additional compression algorithms
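To put the controller overhead in perspective, the per-chunk figures quoted from NetApp earlier (150 to 900 microseconds per 32K chunk, with 750 used in the CORE calculation) can be converted into a rough compression throughput. The sketch below assumes a single core compressing chunks back to back and doing no other work; that is a simplification made for illustration, not a NetApp specification, and the function names are hypothetical.

```python
CHUNK_BYTES = 32 * 1024  # one 32K compression chunk


def chunks_per_second(overhead_us: float) -> float:
    """Chunks one core can compress per second at the given per-chunk cost."""
    if overhead_us <= 0:
        raise ValueError("overhead must be positive")
    return 1_000_000 / overhead_us


def throughput_mib_per_second(overhead_us: float) -> float:
    """Equivalent uncompressed data rate in MiB/s for one core."""
    return chunks_per_second(overhead_us) * CHUNK_BYTES / (1024 * 1024)


if __name__ == "__main__":
    # 150 and 900 are the quoted range; 750 is the CORE calculation figure.
    for overhead_us in (150, 750, 900):
        print(
            f"{overhead_us} us/chunk: "
            f"{chunks_per_second(overhead_us):.0f} chunks/s, "
            f"{throughput_mib_per_second(overhead_us):.1f} MiB/s per core"
        )
```

At 750 microseconds per chunk this works out to roughly 1,333 chunks, or about 42 MiB, per second per core under these assumptions, which helps explain why high IO volumes are a poor fit.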

CORE Analysis

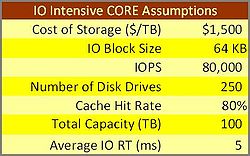

Wikibon has developed a CORE (Capacity Optimization Ratio Effectiveness) Methodology, with the goal of being able to rank the ROI of a technology implementation as defined by (marginal benefit)/(marginal cost). Table 1 shows the IO intensive workload assumptions that were used in the CORE analysis.

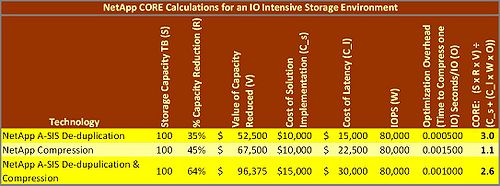

Table 2 shows the results of this analysis. A CORE score of 1 means that the benefits of capacity optimization equal the costs. The NetApp A-SIS technology has a CORE value of three (3) for this workload, which shows an adequate return on investment. The compression has a CORE value of 1.1, which is not satisfactory for the investment and risks of an IT deployment.
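The CORE ratio itself is simple to express. The sketch below only restates the definition given above, (marginal benefit)/(marginal cost); the function name `core_score` and the input figures are hypothetical and are not taken from Tables 1 or 2.

```python
def core_score(marginal_benefit: float, marginal_cost: float) -> float:
    """CORE score: marginal benefit divided by marginal cost."""
    if marginal_cost <= 0:
        raise ValueError("marginal cost must be positive")
    return marginal_benefit / marginal_cost


if __name__ == "__main__":
    # Hypothetical figures in arbitrary currency units, for illustration only.
    print(core_score(100_000, 100_000))  # benefits equal costs
    print(core_score(300_000, 100_000))  # benefits are three times costs
```

A score only slightly above 1 leaves little margin for the implementation and operational risks that the ratio does not capture.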

What this Means for Storage Executives

NetApp is to be applauded for its aggressive drive to optimize the capacity of data stored; compression is delivered at no additional cost and is integrated into the storage controller. However, NetApp's implementation is not the most efficient decompression algorithm in the marketplace. Even so, IT professionals will be enticed to turn on compression, and CFOs will opt for a 'free' option over an appliance or a new capital expense on an alternative NAS technology (e.g. Permabit via BlueArc). The decision to enable compression should be made carefully, as applying it to the wrong workloads will create overhead issues for clients.

In addition, we make the following points:

- This is further confirmation of our prediction that storage optimization technologies will be embedded and integrated within the storage controller;

- Wikibon believes that users can be confident of using NetApp Compression on low IO volumes whose users can tolerate higher response times (care needs to be taken with volumes that are used on a cyclical basis);

- The NetApp A-SIS technology is not as powerful or as cost-effective as de-duplication technologies from Permabit and its OEM partners or IBM's Real-time Compression (acquired via the Storwize acquisition). However, for installations with NetApp storage already installed, the technology offers a reasonable (and sometimes excellent) return on investment for suitable workloads.

- As NetApp executives themselves have pointed out, NetApp compression should be approached with caution, and only used initially for archive and other Tier-3 storage applications, where IO rates are low and higher response times will be tolerated.

- Wikibon does not recommend the use of NetApp compression on high IO volumes and SSDs, especially with latency-sensitive databases.

Technologies such as IBM's Real-time Compression, with lower latencies and overheads, could be more suitable for file-based storage where performance is of greater concern. In addition, while many of our clients are concerned about appliance-based technologies, the appliance approach may be an advantage in some cases because the technology can be applied to any NAS array.

Action Item: Although the NetApp A-SIS and compression technology licenses are free, there are hidden costs: higher controller utilization, higher IO latencies, the cost of putting evaluation and monitoring software and processes in place, and ongoing performance monitoring and problem resolution. Storage executives should clearly understand the performance and cost envelopes for NetApp capacity optimization technologies, and keep well inside them. For mission-critical and high-performance workloads, storage executives should evaluate other capacity optimization technologies.
