
Introduction

In previous research (Intel Post-PC Blues and Client Support in Software-led Infrastructure), the following strategic question was posed:

- If ARM processors and technology become dominant in the smart client device and PC market, will history repeat itself with the emergence of these chips in data center servers?

In other words, will the strategy Intel deployed 20 years ago, using the volume created by commodity end-user devices to build lower-cost servers, work for ARM Holdings and its licensees? Intel entered the server market at the low end, then grew from that base until it dominated the market and drove out the RISC servers from HP, IBM, and Sun.

To answer whether history will repeat itself, it is necessary to look in detail at hyperscale computing and software-led infrastructure. By the end of the discussion, it will be evident that Intel must embrace hyperscale and compete aggressively in the entry-level and volume markets on both price and function. Any misstep will give ARM 64-bit processors a beachhead. It is also instructive to look at vendors such as Fusion-io, which are strongly embracing the hyperscale model, submitting software and APIs to the open-source community, and accepting an environment where multiple sourcing is a given.

Hyperscale & Software-led Infrastructure

At the Open Compute Foundation (OCF) conference of 2012 in Santa Clara, hosted by Facebook, the discussion focused on the characteristics of hyperscale computing. Facebook spends a billion dollars per year on computing. Its computing architecture, like that of similar hyperscale organizations such as Google, Apple, and Microsoft, uses scale-out racks of equipment built from commodity components.

Facebook’s approach is to define a small number of configurations (fewer than 10) to serve a relatively small number of very large applications designed for scale-out. Examples of the server types are Web, database, and ad serving, which differ in the amount of processing, the amount of memory, and the type of persistent storage.

The objective of hyperscale computing is to minimize the total cost over the life of the rack. The most expensive component is human operators, so to minimize operator cost any 1.5U shelf that fails is simply switched off. When enough shelves in a rack have failed, the rack is switched off. When a rack reaches the end of its life, it is switched off. The final resting place of the shelves is the wood-chipper.

To minimize cost, each shelf is designed to be no-frills, with just the bare minimum. For example, a dual power supply is usually not justified, because its additional cost outweighs the expected loss from a power-supply failure. It is cheaper just to switch off a shelf with a failing power supply. Likewise, the extra cost of a more efficient power supply must be recovered by the power savings over the life of the rack, while the supply must still deliver sufficient power to the rack. Compute-intensive shelves have the shortest life, because Moore’s Law is at its most aggressive for processors.
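The power-supply trade-offs above reduce to two simple break-even tests. The sketch below is a hypothetical illustration of that arithmetic, not Facebook's or the OCF's actual method; the function names, parameters, and every figure in the usage note are assumptions. It also does not model the separate requirement that the supply deliver sufficient power to the rack.

```python
"""Hypothetical break-even sketch for the shelf power-supply trade-offs.

All prices, failure probabilities, and power figures are illustrative
assumptions, not data from the source.
"""
from dataclasses import dataclass


@dataclass
class ShelfAssumptions:
    shelf_value: float       # assumed value lost when a failed shelf is switched off ($)
    psu_failure_prob: float  # assumed chance the single PSU fails during the rack's life
    second_psu_cost: float   # assumed extra cost of adding a redundant PSU ($)


def redundant_psu_worth_it(a: ShelfAssumptions) -> bool:
    """A second PSU pays off only if it costs less than the expected loss
    from switching off a shelf whose single PSU fails."""
    expected_loss = a.psu_failure_prob * a.shelf_value
    return a.second_psu_cost < expected_loss


def efficient_psu_worth_it(extra_cost: float, watts_saved: float,
                           years: float, price_per_kwh: float) -> bool:
    """A more efficient PSU pays off only if the energy it saves over the
    rack's life is worth more than its extra purchase cost."""
    kwh_saved = watts_saved * 24 * 365 * years / 1000  # watts -> kWh over the life
    return kwh_saved * price_per_kwh > extra_cost
```

With assumed figures of a $3,000 shelf, a 2% chance of PSU failure over the rack's life, and $150 for a second PSU, the expected loss is only $60, so `redundant_psu_worth_it` returns False. A more efficient PSU costing an extra $20 that saves 10 W for three years at $0.10/kWh saves about 263 kWh, or roughly $26, so `efficient_psu_worth_it(20, 10, 3, 0.10)` returns True.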

The other thing completely missing from hyperscale computing is component-level software. For example, there is no storage tiering software within the Intel OCP 30-drive Open Vault “Cold Storage” system. Such software is instead provided across the infrastructure, at the infrastructure and/or application layers. Hyperscale is the first instantiation of true Software-led Infrastructure (SLI).

Hyperscale, a Harbinger of Future Enterprise & Cloud Design

These trade-offs illustrate that the business model behind hyperscale computing is completely different from the traditional enterprise data-center model:

- The enterprise data center is primarily driven by purchasing for an application, and the equipment is designed to fit that application. Storage and network sub-systems come with significant built-in software. Maintaining these bespoke systems requires work from both the vendor and the data-center staff. The business model is to minimize the upfront cost and then pay for maintenance and operators to look after the hardware and software.

- The hyperscale model starts the other way round. The application is put on the most suitable set of hardware, and the software management services are provided by software packages, open-source software services, or the application itself. The application is designed or chosen to be compatible with a scale-out commodity system design. All possible physical maintenance, by vendors and by data-center staff alike, is eliminated.

The potential cost savings of hyperscale and software-led infrastructure are very significant. At the moment, it may seem to be a business model applicable only to Internet service providers. However, the large reductions in operational cost will create strong arguments for enterprise adoption, so that enterprise IT can remain competitive with external IT services.

Competing for Hyperscale Business

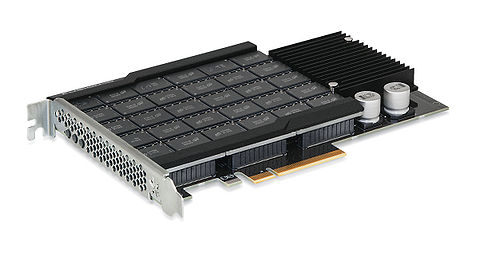

To compete effectively in the hyperscale environment, vendors will need to adopt win-win strategies. Fusion-io presented at the Open Compute Foundation conference, introducing the ioScale PCIe flash device (see Figure 1), with up to 3.2TB on a full-height, half-length card, developed specifically for the hyperscale environment to meet the endurance requirements of database servers. The more important part of the announcement was the business side. The price was $3.98/GB. The atomic write APIs, and the directFS filesystem that supports atomic writes, have been given to the open systems community. Fusion-io is giving the specification of the card to other vendors (who will probably still need to buy the controller from Fusion-io), so that the OCF dual-sourcing requirement can be met. Fusion-io is committed to competing aggressively as a hyperscale vendor.

Intel has some compelling technology, such as the photonic 100Gb optical interconnect (see Figure 2), which was on display at the OCF conference. To take the x86 architecture forward, Intel will need to partner with companies such as AMD and Nvidia. Ensuring the survival of x86 as the de facto standard server architecture is more important for Intel than optimizing pricing or driving out competition. A lesson from history: HP, IBM, SGI, and Sun (now Oracle) fought the RISC chip wars on highest performance, whereas the real competition was Intel's x86 architecture, with the cost advantages that came from the volume of commodity PC chips. However, Intel's posture at the OCF conference was tepid at best. Intel needs to partner, and to compete aggressively as a hyperscale vendor. Just delivering x86 chips will not be sufficient.

Conclusions

Hyperscale environments are the breeding grounds for new technologies, business models, and ideas. The business case for hyperscale is very likely to migrate down to cloud providers and enterprises within two years. Currently, x86 is overwhelmingly the commodity server architecture of choice.

If 64-bit ARM processors are successful, ARM technologies will sweep the board for all smartphones, tablets, Chromebooks, PCs, and other client devices. Apple and Microsoft will migrate PC software from Intel to ARM processors. Both Microsoft and Intel will lose their device and PC monopolies, and the margins those monopolies bring. Microsoft will still be a successful business, because of its strong business divisions.

Intel is at higher risk. If 64-bit ARM processors are widely adopted, they will be much cheaper than Intel's because of the commodity client volumes. If ARM becomes established in hyperscale computing at Facebook, Google, and similar companies, Intel is very likely to be in real trouble in the long run. Intel will have to laser-focus on the low end of the server market and use price and function to compete in the hyperscale market.

Action Item: Hyperscale is the first instantiation of software-led infrastructure. CIOs, CTOs, and senior executives at both cloud providers and large enterprises should keep a close eye on the OpenStack and Open Compute Foundation initiatives. They should experiment with fitting the majority of applications to specific hardware configurations, and should assess the potential for trading long-term operational cost for capital cost depreciated over much shorter periods. This will probably be the best (and only) way to reduce costs enough to be competitive with Amazon. In the short term, vendors that are competing successfully in the hyperscale arena should be high on the list for RFPs.

If Intel executes aggressively as a hyperscale vendor, and uses its technology to create and differentiate server function at the entry server level first, Intel can retain x86 as the default server architecture. Senior data center executives should assume for the time being that Intel will retain its server market share and that x86 will continue to be the dominant server architecture, even though Intel will very likely lose the smart-device market (PC & mobile) to ARM. At the same time, they should closely monitor the progress of projects such as HP's Project Moonshot and AMD's venture into ARM-based servers.
