Introduction

In November 2009 Wikibon wrote about the impact of flash on future system and storage architectures, and predicted that there would be two additional architectures that would support flash:

- FOS or Flash on Server,

- FOSC or Flash on Storage Controller.

Fusion-io has announced a FOS architecture called Virtual Storage Layer (VSL) that combines the persistent nature of flash cards with a direct memory architecture. This architecture provides significant and sometimes dramatic improvements in the performance and availability of file systems and databases and allows the introduction of new design philosophies for applications and systems with much higher data densities and higher locking rates.

Wikibon predicts that FOS architectures such as VSL will become the dominant systems architecture for high performance, large-scale systems with shared data requirements, particularly for applications demanding high update rates on file systems or databases.

Technical Recap

Computers are designed to minimize the physical constraints of the materials from which they are assembled. The problematic physical property of RAM is its lack of persistence: when power is lost, so is the data, and that can happen at any time. In contrast, storage on magnetic disk is persistent but is power hungry and very slow.

Flash storage for the first time allowed persistent storage with microseconds (10^-6 seconds) as opposed to milliseconds (10^-3 seconds) access time, potentially one thousand times faster than conventional spinning disks. The great advantage of the way that EMC introduced Flash storage was that it used the existing storage protocols to exploit flash. No change was required to application programs to take advantage of it. Now EMC and most other storage companies have introduced flash storage with sub-volume automated tiered storage; this allows the identification and migration of storage hotspots to flash, again with no change to the server storage management or application code.

However, the disadvantage of treating flash as an SSD is that it must be accessed through storage protocols, so the full potential of flash storage cannot be achieved. The performance improvements are one order of magnitude instead of three. The main reason is that the 30+-year-old SCSI storage protocol was designed with a self-sufficient physical disk as the distant end-point. The processor can request and receive data but has no idea what is happening within the disk layer. The TRIM command (an ATA extension whose SCSI counterpart is UNMAP) provides some limited improvement, particularly for SSD write management, but does not solve the fundamental architectural problems. One important constraint on application design imposed by the speed gap in current system architectures is the I/O density and locking rates that can be sustained. Every update has to wait until its changes are at least committed to log files on disk storage using SCSI protocols before its locks can be released, and this constrains the functionality that can be achieved in today's high performance systems.
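The locking constraint can be made concrete with a back-of-the-envelope sketch. It assumes a lock on a record is held for exactly one log commit, and it uses illustrative latencies chosen only to match the orders of magnitude discussed above; the specific values and names are assumptions, not measurements.

```python
# Illustrative commit latencies in seconds (assumed values, not measurements).
COMMIT_LATENCY_S = {
    "disk_via_scsi": 5e-3,       # milliseconds: conventional spinning disk
    "flash_ssd_via_scsi": 5e-4,  # roughly one order of magnitude faster
    "flash_direct": 5e-6,        # roughly three orders of magnitude faster
}


def max_serialized_updates_per_second(commit_latency_s: float) -> float:
    """Upper bound on updates per second to one locked record,
    assuming the lock is held until the log write is committed."""
    return 1.0 / commit_latency_s


for name, latency in COMMIT_LATENCY_S.items():
    rate = max_serialized_updates_per_second(latency)
    print(f"{name:>20}: {rate:>10,.0f} updates/s per lock")
```

Under these assumptions the ceiling on updates to a single hot record scales inversely with commit latency, which is why the commit path, rather than processor speed, bounds the sustainable locking rate.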

In contrast, memory subsystems such as virtual memory and paging systems have a rich set of functions that allow strong two-way communication between the processor and memory. What is needed is a way to combine the persistent capabilities of flash with its direct memory capabilities at the system level. FOS systems such as VSL attempt to achieve that and release the full potential of flash.

VSL in a Nutshell

The Fusion-io flash memory (ioDrive) is PCI Express-attached storage, connected directly to the processor bus. It provides both a block-based method for the OS to access storage and a new memory-based method of accessing Fusion-io flash memory.

VSL As Storage Subsystem

The VSL subsystem runs in the server and allows the flash memory to act as a block-based storage device. All the processor cores can be used to complete this work, and the server communicates with the flash memory controller using RDMA. This eliminates the need for separate RAID controllers and metadata processors, and improves latency.

VSL as Memory Subsystem

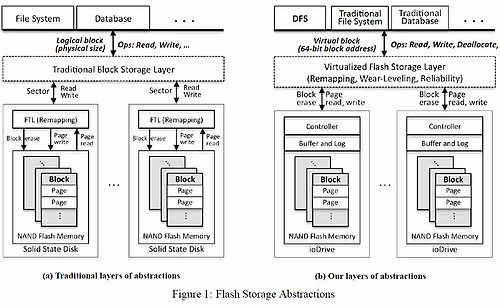

In addition VSL provides a set of functions that allow the flash storage to act as a memory subsystem. The details of the comparison of the new architecture are shown in Figure 1. The key addition is that these functions allow the higher levels of the storage hierarchy to exploit the persistent nature of flash. The architecture pushes down the sector, buffer, and log management of flash to the flash controller, which takes responsibility for guaranteeing any write action or allocation request, and maintaining recoverability. The VSL layer is responsible for block erasures, reliability, and wear-leveling. In the event of a crash, the VSL driver can reconstruct its metadata from the flash device.

Source: http://www.usenix.org/events/fast10/tech/full_papers/josephson.pdf Figure 1, downloaded 8/17/2010

These functions allow the higher level layers such as the file systems and volume managers to work much more efficiently. They can write directly to the PCI flash memory and guarantee in one pass that the data is written and persistent.

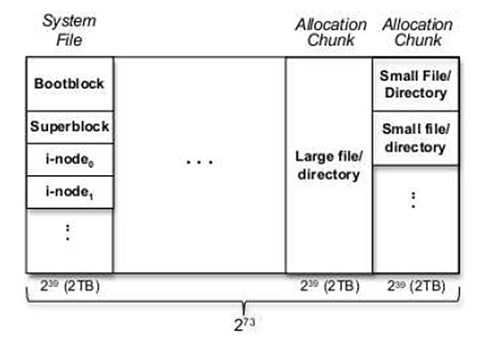

An example of how a file system exploits the VSL memory architecture is shown in Figure 2 using an experimental file system called DFS. From a logical level, the storage systems see large 2TB address space chunks. The first 2TB is used for system files. The remaining 2TB allocation chunks are for user data or directory files. A large file takes the whole chunk, while multiple small files share a single chunk. There can be up to 273 2TB chunks. This allows a massive single level storage architecture much larger than that found, for example, in the IBM iSeries (AS/400) computers. The obvious advantage is applications can be written assuming a large logical, high speed, persistent space, thereby dramatically improving performance and simplifying management. The key point is the combination of high speed memory and persistent storage is a game-changer.
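The chunked address space described above can be sketched as simple arithmetic. This is a minimal illustration that assumes byte addressing and a flat numbering of 2TB chunks; the function names are hypothetical and are not taken from DFS or VSL.

```python
CHUNK_SIZE = 2 * 2**40  # one 2TB allocation chunk, in bytes


def virtual_address(chunk_index: int, offset: int) -> int:
    """Byte address of `offset` within allocation chunk `chunk_index`."""
    if chunk_index < 0:
        raise ValueError("chunk_index must be non-negative")
    if not 0 <= offset < CHUNK_SIZE:
        raise ValueError("offset must fall inside one 2TB chunk")
    return chunk_index * CHUNK_SIZE + offset


def locate(address: int) -> tuple[int, int]:
    """Inverse mapping: split a byte address into (chunk_index, offset)."""
    return divmod(address, CHUNK_SIZE)


# Chunk 0 holds system files; a large user file might own chunk 5 outright.
address = virtual_address(5, 4096)
print(locate(address))
```

Because a large file owns a whole chunk, finding a byte in it needs no per-block allocation lookup in this model: the chunk index and the file offset are enough.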

The VSL architecture can completely mask one of the main challenges of flash storage, write erasures (these have to be done to erase any previously held data before new data can be written, and they can take one to two milliseconds to complete). The VSL layer, not the flash controller, is responsible for block erasures, which can be scheduled in advance. All writes are then appended using a log-structured format.
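The append-only write path, and the ability to rebuild metadata after a crash, can be illustrated with a toy model. This is a hypothetical sketch of a generic log-structured store with a logical-to-physical map; the class and method names are invented for illustration and it is not Fusion-io's VSL implementation.

```python
class ToyFlashLog:
    """Toy model of append-only writes with a logical-to-physical map."""

    def __init__(self) -> None:
        # Each entry is (logical_block, data), kept in append order.
        self.log: list[tuple[int, bytes]] = []
        # logical_block -> position of its newest entry in the log.
        self.mapping: dict[int, int] = {}

    def write(self, logical_block: int, data: bytes) -> None:
        # Never overwrite in place: append to pre-erased space, then remap.
        self.log.append((logical_block, data))
        self.mapping[logical_block] = len(self.log) - 1

    def read(self, logical_block: int) -> bytes:
        return self.log[self.mapping[logical_block]][1]

    def rebuild_mapping(self) -> None:
        # After a crash, replay the log in order; the last entry per block wins.
        self.mapping = {}
        for position, (logical_block, _data) in enumerate(self.log):
            self.mapping[logical_block] = position

    def stale_entries(self) -> int:
        # Superseded entries are the space a background eraser could reclaim.
        return len(self.log) - len(self.mapping)


flash = ToyFlashLog()
flash.write(7, b"old")
flash.write(7, b"new")
print(flash.read(7), flash.stale_entries())
```

In this model a write never waits for an erase: superseded entries are simply marked stale by the remapping, and reclaiming them can be scheduled separately from the write path.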

Source: http://www.usenix.org/events/fast10/tech/full_papers/josephson.pdf Figure 3, downloaded 8/17/2010

Research Implementation of DFS on VSL

Figures 1 and 2 are taken from a scientific paper by William K. Josephson et al. (Princeton University) entitled “DFS: A File System for Virtualized Flash Storage”. David Flynn (CEO of Fusion-io) was also a contributor to the paper and to the research funding.

One of the main advantages of the original flash implementation by EMC as an SSD is that no system changes were required. This is also true of the VSL approach. However, users should understand that to fully exploit the potential of VSL and realize orders of magnitude better performance, vendors will need to make changes to file and/or volume management systems. In his paper Josephson compares the performance of a new file system called DFS with that of a traditional Linux journaling file system called ext3.

DFS lays out its files directly in a very large virtual storage address space provided by Fusion-io’s virtual flash storage layer, which is also used to perform block allocations and atomic updates. As a result, DFS performs better, and it is much simpler than a traditional ext3 Linux file system with similar functionality. Josephson ran micro-benchmarks that show that DFS can deliver 94,000 I/O operations per second (IOPS) for direct reads and 71,000 IOPS for direct writes to a Fusion-io ioDrive. Josephson comments that this is very close to the theoretical maximum and consistently better than ext3 on the same platform, sometimes by 20%. For buffered access performance, DFS is also consistently better than ext3, sometimes by more than 149%. Josephson also ran a series of application benchmarks (e.g., TPC-H) and showed that DFS outperforms ext3 by 7% to 250% while requiring less CPU power.

The TRIM function was not available on the Intel SSD used in the comparison. Josephson commented to Wikibon that it would probably be worth repeating the comparison with TRIM, especially if the devices were full. He predicted that because the devices were not operated at more than 50% of capacity, the benefit of TRIM would not have been significant.

Perhaps the most interesting statistic that drives home the difference between the two approaches is the number of lines of code in DFS (3,289) compared with ext3 (24,708). Granted, ext3 is well-established code with the usual “time-bloat”, but DFS is significantly less complex, and all of it is reentrant. This allows excellent exploitation by multi-core processors.

The bottom line conclusion of this research is that these improvements at the file system level are substantial and due to the elimination of the need to perform a 2-phase commit process. This is unique to VSL and the Fusion-io implementation and varies dramatically from any device that emulates a disk.
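The effect of removing the two-phase commit can be sketched by counting device writes. This is a deliberately simplified model that assumes full data journaling, where every update is written once to a journal and once to its home location; the behavior of a real ext3 system depends on its journaling mode, and all names below are hypothetical.

```python
class CountingDevice:
    """Hypothetical block device that stores blocks and counts writes."""

    def __init__(self) -> None:
        self.writes = 0
        self.blocks: dict[int, bytes] = {}

    def write(self, block: int, data: bytes) -> None:
        self.writes += 1
        self.blocks[block] = data


JOURNAL_START = 1_000_000  # assumed block range reserved for the journal


def journaled_update(dev: CountingDevice, block: int, data: bytes, slot: int) -> None:
    # Phase 1: record the intended change in the journal.
    dev.write(JOURNAL_START + slot, data)
    # Phase 2: apply the change to its home location.
    dev.write(block, data)


def atomic_update(dev: CountingDevice, block: int, data: bytes) -> None:
    # Single pass: the device is assumed to make this write atomic and durable.
    dev.write(block, data)


journaled, atomic = CountingDevice(), CountingDevice()
for i in range(10):
    journaled_update(journaled, i, b"x", slot=i)
    atomic_update(atomic, i, b"x")
print(journaled.writes, atomic.writes)
```

In this model the single-pass path halves the number of device writes for the same updates, on top of the per-write latency difference between the two media.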

FOS architectures and Hypervisors

Hypervisors, led by VMware, are revolutionizing the efficiency of computing. Hypervisors such as VMware, Microsoft’s Hyper-V, IBM LPAR and open-systems Xen are all making fast strides, with VMware way ahead of the pack. Wikibon’s Infrastructure 2.0 project is helping to define the requirements of the next generation data center, which we see as hypervisor-driven virtualization based on x86 architectures, with the virtualization extended to servers, storage and networking. In addition, desktop virtualization solutions from VMware, Citrix and others are making steady progress. Based on discussions with Wikibon users, performance is the number one challenge to the adoption of server virtualization. While most (>80%) Wikibon users expressed a strong desire to virtualize business and mission critical applications (e.g., Oracle, SAP), application heads are currently reluctant to push the environment because of performance concerns.

Some areas of virtualization are notorious for creating IO storms, where IO levels in the system can increase by a factor of 10 or more. This is particularly taxing when systems are fired up or down at particular times of the day, or if multiple patches have to be applied to many systems at the same time.

Virtualization in general adds significant stress to IO systems. IO handling within the hypervisor is less efficient, and there is an increased chance of IO storms that affect all the virtualized machines. Both FOS and FOSC architectures have their place as key improvements to virtualized environments. The power of FOS architectures such as VSL is that virtualization systems can use the persistent high-speed flash memory to simplify the preservation of metadata and state of virtual machines, from simple one-pass paging systems with potentially terabytes of flash to more efficient and secure metadata management systems. Wikibon believes that FOS architectures will simplify the programming, decrease recovery times and improve overall performance to the level that will obviate performance concerns with virtualization.

Constraints to VSL Adoption

One key constraint to VSL adoption is that access to the data stored on a PCI flash device will be lost if the server goes down for any reason. On storage drives this problem is solved by providing access to other servers with dual porting. A similar mechanism has to be introduced on the PCI flash devices to obviate the need for complex clustered systems to avoid a single point of failure. Wikibon believes that Fusion-io will introduce this important piece of their puzzle in 2010.

Another constraint will be the speed at which database and file systems are developed to exploit VSL. Linux implementations will be first to take advantage of this approach. IBM will be looking to exploit this in its Blue Darter Flash storage OEMed from Fusion-io. HP and Dell are also Fusion-io OEM partners and Wikibon expects these firms to use the VSL architecture.

One of the laggards is likely to be Oracle, who will most likely want to support the FlashFire technology it bought with the Sun acquisition. Microsoft is not known for its rapid product cycles but there are compelling reasons to believe that architectures such as VSL can dramatically improve SQL throughput. Wikibon believes that the performance benefits of FOS architectures are so significant that all database and file-system vendors will support FOS architectures.

Conclusions

VSL is a significant announcement that heralds a completely new way that performance systems will be architected in the future. Flash devices such as the iPad are already showing a change in the way that mobile applications are being written, which makes available much more data to the end-user to improve functionality and ease of use. Future server systems will use Flash on Server architectures to increase the data access density and locking rates by a factor of 10 or more. This will significantly improve the functionality and ease of use of applications. Early adopters that use FOS architectures for revenue generating systems can gain significant market advantage. Once users get used to this data access density, there will be no going back to traditional systems.

Flash implemented as an SSD will still continue to play an important part in improving shared data in arrays, but the performance improvements and overhead reductions of FOS will mean that high-function flash memory will migrate to the servers. Large-scale Web systems using scale-out architectures such as Facebook are already re-architecting their systems to use FOS. Wikibon predicts that it will become the de facto design for this type of system, particularly after the introduction of dual-porting of the Flash on Server controllers.

Wikibon expects that Linux systems will be the fastest adopters of Flash on Server technology. High performance file systems, volume systems, and database systems will be the main areas of adoption. Unix systems from HP, IBM and Sun will not be far behind. Microsoft has not yet signaled that it is going to make the changes necessary to its operating system to accommodate FOS; in our view, if it doesn't move more quickly, it will fall behind.

EMC earned a Wikibon CTO award when it introduced Flash SSDs in 2008. Fusion-io becomes one of the front runners for a Wikibon CTO award in 2010 with its announcement of VSL.

Action Item: Application heads and ISVs need to take a long, hard look at Flash on Server architectures and Fusion-io’s VSL implementation. As the cost of flash comes down, the functionality and ease-of-use improvements that can be made to applications will be game-changing. There should be serious skunk works in all large organizations with the best and brightest to help guide future applications. Good areas for initial exploitation could be temporary tables in data warehouse environments, but all areas of application and system design will be affected. Senior IT managers should initiate these skunk works, focus them on revenue generating systems that will gain competitive advantage, and be prompting database and file system vendors for VSL exploitation time-lines.
