This case illustrates the issues faced by a company that wanted to reduce the risk of losing transactional data. It shows the processes they followed to analyze and estimate that risk, and develop the business case.

Executive Summary

This case study is derived from a real case study, but has been modified to keep the identity of the organization confidential. MFC is a multinational finance company recognized as a market maker in the US and internationally. Business continuance in the event of a disaster is of key concern to all the stakeholders. Customers, partners, shareholders and governance agencies want to be assured that data is not lost and that systems can be restored quickly.

MFC has implemented a state-of-the-art metropolitan recovery system between two data centers situated 15 miles apart in the US, with a deployment of storage equipment from two storage vendors. This ensures that no data is lost and that systems are switched seamlessly should a disaster strike one of the sites. However, should a regional disaster take both metropolitan sites out of commission, the recovery process would be slow, and over 20 hours of transactional data could be lost. Because data could be lost, this disaster scenario cannot be fully tested by transferring the production system to the remote system; remote recovery can only be partially tested with historical data.

MFC knew from previous studies that the business impact of losing transactional data was very high. MFC IT has continuously investigated different technologies that would significantly reduce the amount of data lost in the case of a regional disaster. MFC was also well aware that there were significant risks in the current disaster recovery plan. MFC did disaster recovery testing twice a year, but was concerned that these tests were not robust enough, and that both the amount of data lost and the time to recover could be significantly higher in the case of a real disaster.

MFC IT wanted to radically change the philosophy of remote recovery, and build resilience into both the applications and infrastructure. Rather than testing remote disaster recovery as a special case a few times a year, it wanted to be able to switch applications to any node, local or remote, as a normal part of operations.

After evaluating the available technologies, MFC IT concluded that a three-data center topology was the only option that could significantly reduce the amount of data lost and allow testing as a normal part of operations. MFC initiated a project to build a business case for, test, and implement a three-data center topology that would dramatically reduce the amount and probability of data loss in the event of a regional disaster. The application selected was the Financial Transaction System (FTS), which handles many millions of transactions per day.

Two vendors were selected to participate in the project, with a 50-50 split in responsibilities. The business case analysis determined that the reduction in risk would be worth $84 million per year after implementation of the three-data center topology. The costs of implementation were about $10 million in initial costs and $5.25 million in yearly operational costs. The implementation schedule was 6 months, the payback period was estimated at 7 months, and the net present value over three years was $161 million with an IRR of 271%.

This case study is designed to give guidance to other customers who are considering justifying and optimizing disaster recovery solutions, and to give confidence that the products, skills and experience are available to implement this type of project successfully.

Business case for establishing a three-DC topology

In analyzing the financial impact of a disaster, there are two major contributions to potential financial loss. The first is the unavailability of the systems to clients and employees for a period. The second is the loss of client and MFC data. The primary concern for MFC in the Financial Transaction System (FTS) application was the loss of their customers’ data. The loss of service for a day would be very unpleasant, but the business impact could be contained. However, the damage done to the reputation of MFC if a day’s worth of their customers’ data were lost could be catastrophic.

MFC IT governance executives concluded that keeping the loss of data to a minimum would also significantly improve the recovery time. They mandated the team to focus on optimizing the data loss aspects of disaster recovery. The first step was to create a business case.

Steps in creating a business case

MFC is no different from any other large organization; IT had to produce a business case before any project could go ahead. IT had established that technologies were available from more than one vendor that could reduce the amount of data lost in a disaster from hours to minutes. IT worked with business executives from a number of different parts of the organization to establish the case. These included the corporate risk manager, the heads of the departments responsible for the execution and business processes of the key finance applications, and the audit and governance functions. A summary of the key steps is as follows:

- Defining the key metrics to measure the amount of data lost

- The recovery point objective (RPO) was the key metric established by MFC

- Business impact analysis (BIA)

- Having created a metric for measuring the amount of data lost, the business impact analysis helped quantify the impact of any loss on the business as a whole, and the probability of that event occurring

- Estimating the RPO of the current system

- The purpose of this part of the exercise was to establish the RPO of the current system

- Estimating the expected loss with the current topology

- The previous steps allowed the estimation of the maximum exposure to loss of customer data, and the expected loss. This process allowed a number of different methodologies to be used to “triangulate” on an overall estimate. The methodologies used were:

- Direct estimate of the business impact of lost data and the probability of it happening

- Cost of insuring against lost data

- The reserves required to enable the financial institution to ride out one or more data loss disasters

- Establishing what could be done to improve the RPO and probability of failure of the existing system

- Establishing the risks of the implementation of new technologies to reduce RPO

- Creating the business case for implementing a change to RPO, in this case a three-node data center solution

The formal elements of a business case were then pulled together in summary form. This allowed the total cost of the implementation and the expected benefits to be analyzed over a three-year time period, and key financial metrics such as ROI, IRR, NPV and breakeven to be established.

These results allowed executive management to make a formal decision to authorize the project. They also allowed the results of the project to be evaluated.
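The financial metrics named above can be sketched from the figures quoted in the executive summary. The following is a minimal illustration only: the monthly granularity, the timing of operating costs (assumed here to start after the 6-month implementation), and the 6% discount rate are assumptions that the case study does not state, so the output will not necessarily reproduce the published NPV, IRR or payback figures.

```python
# Illustrative three-year cash-flow sketch (all amounts in $ millions).
# ASSUMPTIONS (not from the case study): monthly time steps, operating costs
# and benefits both start once implementation finishes, 6% annual discount rate.

INITIAL_COST = 10.0         # from the case study
ANNUAL_OPEX = 5.25          # from the case study
ANNUAL_BENEFIT = 84.0       # reduction in expected loss, from the case study
IMPLEMENTATION_MONTHS = 6   # from the case study
HORIZON_MONTHS = 36         # three-year analysis period
DISCOUNT_RATE = 0.06        # hypothetical


def monthly_cash_flows():
    """Net cash flow per month; index 0 is the up-front investment."""
    flows = [-INITIAL_COST]
    for month in range(1, HORIZON_MONTHS + 1):
        live = month > IMPLEMENTATION_MONTHS
        flows.append((ANNUAL_BENEFIT - ANNUAL_OPEX) / 12 if live else 0.0)
    return flows


def npv(flows, annual_rate):
    """Discount monthly flows using the equivalent monthly rate."""
    monthly_rate = (1 + annual_rate) ** (1 / 12) - 1
    return sum(cf / (1 + monthly_rate) ** t for t, cf in enumerate(flows))


def payback_month(flows):
    """First month in which cumulative undiscounted cash flow is non-negative."""
    total = 0.0
    for month, cf in enumerate(flows):
        total += cf
        if total >= 0:
            return month
    return None


flows = monthly_cash_flows()
print(f"NPV over three years: ${npv(flows, DISCOUNT_RATE):.1f}M")
print(f"Payback month (counted from project start): {payback_month(flows)}")
```

Changing `DISCOUNT_RATE` or the cost timing shows how sensitive the headline numbers are to assumptions that a formal business case would need to state explicitly.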

Defining RPO

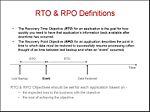

The first task was to establish a metric that put a value on data lost and that could be set as a standard for the organization. The metric selected to define the average amount of data that is likely to be lost during a disaster was the recovery point objective, or RPO. Companies intuitively know they do not want to lose data, but have a difficult time placing a value metric on transaction losses. Figure 2 below illustrates the concept.

The amount of data lost during any failure is not a fixed specific amount. The amount of data lost has a probability distribution. The definition of RPO for a particular installation needs to include an assumption about the percentage of time that the RPO is achieved.

RPO Example: “The finance application has an RPO of 1 hour (90% confidence)” means that, for 90% of all failures, recovery will be able to go back to a recovery source that is less than 1 hour old.

More information can be found at [1].

Business impact analysis

A key task for MFC was to establish a method for estimating the financial impact of loss of data from the FTS application addressed in this exercise. This method is often known as a business impact analysis, or BIA. While understanding that other factors contribute to a BIA, the business executives believed that the simplest estimate was as follows:

The business impact of loss of data equals the value of the business transaction lost.

This allows a simple calculation to determine the impact of losing an hour’s worth of data, as follows:

- Transactions per second (average): 500

- Average value of each transaction: $100

- Business impact of loss of data for an hour: 500 x $100 x 3,600 seconds = $180M
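The arithmetic behind the hourly figure can be written out as a short sketch. The function and variable names are illustrative, and the calculation uses only the simplification stated above (impact equals the value of the transactions lost).

```python
# Hourly business impact of lost data, under the simplification that the
# impact equals the value of the business transactions lost.

SECONDS_PER_HOUR = 3600


def hourly_impact(transactions_per_second, average_value):
    """Value in dollars of the transactions processed in one hour."""
    return transactions_per_second * average_value * SECONDS_PER_HOUR


impact = hourly_impact(transactions_per_second=500, average_value=100)
print(f"Impact of losing one hour of data: ${impact / 1e6:.0f}M")
```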

Estimating the RPO of existing system

The next stage was to estimate the RPO of the existing systems. The current topology was two data centers (A & B) separated by less than twenty miles, with a third data center (C) in Europe. The application running in the A data center was synchronously mirrored onto the B data center. With a synchronous copy, no transaction was complete until it was written to both sets of disks in the A & B data centers. Any disaster in A meant that no data was lost, and that the systems in center B could recover and continue in exactly the same way as they would in the A center.

In case both data centers were taken out by a rolling disaster, a consistent point-in-time incremental copy of all the data was made twice a day: after the finish of on-line processing, and after the finish of batch processing. Consistent means that all the volumes were consistent with each other, point-in-time means that all the volumes reflected completed transactions at a certain exact time, and incremental means that only the changes in the data were copied (about 2 terabytes of the 10 terabytes of storage). The data was then transmitted over high-speed lines to Europe and merged into the remote storage. The backup data took two hours to produce and another six hours to transmit to Europe over an OC-48 line. If both the A and B data centers were taken out, the maximum amount of data lost would be 12 + 2 + 6 = 20 hours: up to 12 hours between backups, plus the 8 hours needed to produce and transmit a backup. The average amount of data lost would be 6 + 8 = 14 hours. The current RPO at a 90% confidence level is 8 + (12 x 90%) = 18.8 hours, or about 19 hours.

- Current RPO (90% Confidence level) is 19 hours

- Average Loss from a disaster = $180M (from above) x 14 Hours = $2.52 Billion
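The RPO estimate above can be reproduced with a small sketch. It assumes, as the arithmetic in the text implies but does not state, that a disaster is equally likely at any point in the 12-hour interval between backups, so the age of the last backup is uniformly distributed. The names are illustrative.

```python
# Data loss model for the current topology (hours), assuming the disaster
# time is uniformly distributed over the interval between backups.

BACKUP_INTERVAL_H = 12   # two backups a day
PRODUCE_H = 2            # time to produce the incremental copy
TRANSMIT_H = 6           # time to transmit the copy to Europe
HOURLY_IMPACT_M = 180    # $M per hour of lost data, from the BIA

FIXED_LAG_H = PRODUCE_H + TRANSMIT_H


def data_loss_hours(quantile):
    """Hours of data lost at the given quantile (0.0 to 1.0) of the distribution."""
    return FIXED_LAG_H + BACKUP_INTERVAL_H * quantile


max_loss_h = data_loss_hours(1.0)   # worst case
avg_loss_h = data_loss_hours(0.5)   # mean of a uniform distribution
rpo_90_h = data_loss_hours(0.9)     # RPO at 90% confidence

print(f"Maximum loss: {max_loss_h:.0f} hours")
print(f"Average loss: {avg_loss_h:.0f} hours")
print(f"RPO (90% confidence): {rpo_90_h:.1f} hours")
print(f"Average loss value: ${avg_loss_h * HOURLY_IMPACT_M:,.0f}M")
```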

Expected loss with current topology

The next stage was to establish how often circumstances would occur that would result in loss of data. Because the two data centers are separated by 20 miles, the probability of both data centers having a total outage simultaneously is significantly lower than the probability of an outage at just one of them. MFC then looked at what they could do to reduce the likelihood of both data centers being impacted by a disaster, and what they could do to decrease the amount of data lost (e.g., by taking incremental backups more often). The best-case scenario they proposed was that the average loss could be reduced by a factor of three, and the probability of both data centers being taken out could be reduced to once every 10 years. The best-case expected loss per year with the current topology was therefore $2.52 billion divided by three divided by 10, or $84 million per year.

- Expected loss with “best case” current topology = $2,520M/3 (from additional backups, etc)/10 years = $84 Million/year

The concept of “expected loss” needs clarification, because it has a precise statistical meaning. If an insurance company insures a large number of companies, it can establish an expected or average loss per company, and ensure that the premiums cover this loss. For MFC there is either a full loss or no loss. For most years, there will be no loss; for some years there could be a full loss of $2.52B.
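The distinction between an expected loss and an all-or-nothing loss can be illustrated with a short sketch. It treats “once every 10 years” as a 10% probability in any given year, which is an interpretation rather than a figure stated in the case study, and it uses the best-case loss per disaster (the average loss reduced by a factor of three).

```python
import math

# Two-outcome model of annual loss (amounts in $ millions).
# ASSUMPTION: "once every 10 years" is read as a 10% chance in any given year.

AVERAGE_LOSS_M = 2520.0    # average loss from a regional disaster
MITIGATION_FACTOR = 3      # best-case reduction from more frequent backups
ANNUAL_PROBABILITY = 0.10  # interpretation of "once every 10 years"

loss_if_disaster = AVERAGE_LOSS_M / MITIGATION_FACTOR
expected_annual_loss = loss_if_disaster * ANNUAL_PROBABILITY

# For a loss that is either 0 or L with probability p, the standard
# deviation is L * sqrt(p * (1 - p)).
std_dev = loss_if_disaster * math.sqrt(ANNUAL_PROBABILITY * (1 - ANNUAL_PROBABILITY))

print(f"Loss if a disaster occurs: ${loss_if_disaster:.0f}M")
print(f"Expected annual loss:      ${expected_annual_loss:.0f}M")
print(f"Standard deviation:        ${std_dev:.0f}M")
```

The standard deviation is three times the expected value in this model, which is one way of seeing why a single organization cannot treat the expected loss as a predictable yearly cost in the way an insurer with many clients can.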

The next key business question is: “What is the confidence level in the estimate?”

Triangulating on BIA impact estimates

- The first was a risk assessment view, as described above.

- The second is an insurance view. If MFC were to insure themselves against such a disaster, the premiums would be at least the expected loss of $84M/year. Reducing the expected loss would reduce the premiums paid, so a reduction in expected loss is a business benefit to MFC and can be used as a line item in a business case.

- The third view is to assess the reserves required to cover extraordinary losses. The International Convergence of Capital Measurement and Capital Standards, known as Basel II, defines operational risk as the risk of loss resulting from inadequate or failed internal processes, people and systems, or from external events. The risks apply to any organization in business, but are of particular relevance to the financial sector, where regulators are responsible for establishing safeguards to protect against systemic failure of the banking system and the economy. If there were a requirement to keep reserves to cover such a risk, the interest lost on not being able to utilize $2.52 billion would be 5.25% of $2.52 billion, or $132 million per year.

- A possible fourth way is to use Wall Street firms (such as M&A firms) to assess the risk profile of IT, and to assess the impact on share price (short and long term) should there be a disaster. The long-term reduction in capitalization would then be another way to “triangulate” on an agreed range of values for disaster impact.
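The three quantified views above can be placed side by side in a short sketch. The figures come from the text; the dictionary layout and names are illustrative, and the share price view is omitted because the case study gives no figure for it.

```python
# Annual value of the risk under each quantified view (amounts in $ millions).

EXPECTED_LOSS_M = 84.0      # best-case expected loss per year
AVERAGE_LOSS_M = 2520.0     # average loss from a regional disaster
RESERVE_INTEREST = 0.0525   # interest rate applied to reserves in the text

views = {
    "risk assessment (expected loss)": EXPECTED_LOSS_M,
    "insurance (minimum premium)": EXPECTED_LOSS_M,
    "reserves (interest forgone)": AVERAGE_LOSS_M * RESERVE_INTEREST,
}

for name, value in views.items():
    print(f"{name:35s} ${value:6.1f}M/year")

low, high = min(views.values()), max(views.values())
print(f"Range across views: ${low:.1f}M to ${high:.1f}M per year")
```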

Although a full assessment was not made of the reserve implications, quotes were not requested from insurance companies, and Wall Street firms were not asked to assess the share price impact, executive management agreed that significant budget could be applied to reduce this risk, and that a reasonable estimate of the value of eliminating the risk would be $84 million/year.

The business case & business decision

The business questions for MFC were now simple.

- Can a three data center solution be implemented that would reduce the amount of data loss from hours to minutes?

- Is the cost of such a solution significantly lower than the best case expected loss?

MFC had been working with storage vendors for a number of years to establish the practical viability of three data center topologies. The potential cost of such a solution was estimated to be less than $10 million in initial costs and $5 million per year to sustain (with the network being a significant portion).

MFC IT executives were convinced that at least two vendors had the capability of delivering the hardware, software and implementation skills necessary to make the project work. Even if the estimates of expected loss were out by a long way, the business case was overwhelming. A summary of the business case is shown below. It essentially says that an initial investment of $10 million will return $161 million in three years. This is the correct way of comparing it to other projects for funding. Another way of putting the benefits is that it significantly reduces the risk of losing $2.5 billion, a risk that would cost at least $84 million per year in insurance payments or $132 million in interest lost on reserves.

The senior executives were fully behind reducing a potential liability of $2.5B that could happen at any time. They were mainly concerned about achieving the earliest possible implementation date.

MFC made the decision to go ahead with two vendors to implement a full three data center solution for both the on-line and the batch parts of the FTS system. Budget and staff were allocated, and an implementation date was set. One vendor was given responsibility for implementing the on-line portion of the workload, and the other was given responsibility for the batch portion of the project. Both portions are considered equally important and equally challenging.

Conclusions

MFC concluded that a three-node solution worked technically, was a sound investment with an excellent return on investment, and offered them significant benefits in:

- Reducing the exposure of customer data loss in the event of a regional disaster

- Enabling them to fully test remote recovery procedures and increase the confidence in their business continuance procedures for all the key stakeholders (shareholders, customers, partners, and governance agencies)

- Making the switching of workloads between the three data centers a repeatable practice, and enabling them to take a significant step towards implementing a philosophy of building business continuance in as an intrinsic part of application and infrastructure design.

Legal: © Wikibon 2007. This document is copyright protected by Wikibon and does not fall under the GNU general license terms for Wikibon.org. Links to this article from external sources are allowed, however any other re-distribution of this content for commercial purposes is strictly prohibited. Please contact Wikibon for more information.

The cases cited herein are real; however, the name of the customer is fictitious. Wikibon case studies are developed independently and their development is not initiated for or funded by any single company. Wikibon reports actual customer experiences and results with no attempt to emphasize any one vendor’s strengths or weaknesses.