

Introduction

Wikibon recently spoke to PhotoBox about its approach to big data. PhotoBox is a rapidly growing, European-based online photo printing company that offers low-cost digital photo prints, personalized gifts and cards. Joining is free and includes unlimited photo sharing and storage; revenue comes from printing and gift services. The company was established ten years ago and now operates in 29 European countries.

PhotoBox currently stores several billion pictures, and the number of images is growing significantly. Each picture is a JPEG file with an average size of 2.5 MB, and that average is also growing; some professional photographers using the service upload images of 100 million pixels.
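To give a sense of scale, the sketch below turns these figures into a rough capacity estimate. It is illustrative only. The 2.5 MB average comes from the case study, but the 3 billion picture count is a hypothetical stand-in for "several billion". The 20% and 10% yearly growth rates are likewise hypothetical. The helper names are made up for this example.

```python
# Back-of-the-envelope sizing for a photo archive.
# ASSUMPTION: the picture count is a hypothetical stand-in for "several billion";
# only the 2.5 MB average size comes from the case study.

MB_PER_PB = 10**9  # decimal units: 1 PB = 1,000,000,000 MB


def archive_size_pb(num_pictures: int, avg_size_mb: float) -> float:
    """Total raw archive size in petabytes, before any RAID or replication overhead."""
    return num_pictures * avg_size_mb / MB_PER_PB


def yearly_growth_factor(count_growth: float, size_growth: float) -> float:
    """Capacity grows by the product of picture-count growth and average-size growth."""
    return (1 + count_growth) * (1 + size_growth)


if __name__ == "__main__":
    pictures = 3_000_000_000  # hypothetical: "several billion"
    print(f"{archive_size_pb(pictures, 2.5):.1f} PB")  # 7.5 PB
    # Hypothetical rates: 20% more pictures and 10% larger pictures per year
    print(f"{yearly_growth_factor(0.20, 0.10):.2f}x per year")  # 1.32x per year
```

Total capacity is the picture count multiplied by the average size, so growth in each one compounds the other. That is why a rising average file size matters as much as a rising picture count.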

PhotoBox & Big Data

PhotoBox is a big-data organization that must minimize the cost of data processing. In a high-growth environment, it faces two major IT challenges in doing so:

- Scaling data storage to store and retrieve the pictures in the most cost-effective way, and

- Scaling and maintaining the data about the pictures (metadata) in a transactional database that will support PhotoBox revenue generation.

Scaling Storage

The major challenges in storing photos are storage density (the floor space taken), the power needed to drive and cool the storage infrastructure, and storage efficiency. PhotoBox uses the GFS (Global File System) file system, which allows a cluster of Linux servers to share data in a common pool of block storage. One GFS feature that strongly affects the user experience is data consistency: a change made to the file system on one machine is immediately visible on all other machines in the cluster.

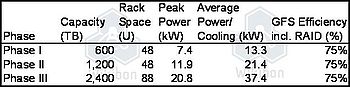

As part of its current strategy for expanding photo storage, PhotoBox is deploying DataDirect Networks (DDN) S2A 9900 storage arrays in three phases, as shown in Table 1.

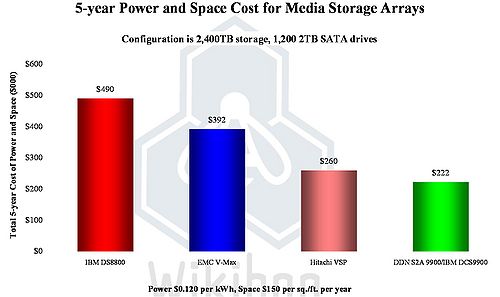

At the time of this case study (January 2011), the DDN arrays running GFS offered the highest storage density and power efficiency available in the industry, as shown in Chart 1. The combined cost of power, cooling and space is 15% lower for the DDN array than for the closest competitor. The DDN array also needs only two frames, compared with six for the other vendors evaluated. The DDN S2A 9900 has features that make it particularly suitable for media, including read and write rates of 6 GB/s and a hardware implementation of guaranteed data integrity on read. The ability to sustain consistently high bandwidth is a key requirement. IBM also sells this array as the DCS9900.
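Why consistently high bandwidth matters becomes clearer with some simple arithmetic. The 6 GB/s rate and the 2.5 MB average picture size come from the case study. The 1 PB read volume is a hypothetical example, and the function names are illustrative. The sketch uses decimal units and ignores protocol and file-system overhead.

```python
# Rough throughput arithmetic for a single array.
# The 6 GB/s rate and 2.5 MB average picture size come from the case study;
# the 1 PB read volume is a hypothetical example. Decimal units throughout.


def pictures_per_second(bandwidth_gb_s: float, avg_picture_mb: float) -> float:
    """Whole pictures that can be streamed per second at a sustained bandwidth."""
    return bandwidth_gb_s * 1000 / avg_picture_mb


def hours_to_read(capacity_pb: float, bandwidth_gb_s: float) -> float:
    """Hours to read capacity_pb petabytes at a sustained bandwidth (1 PB = 10**6 GB)."""
    seconds = capacity_pb * 10**6 / bandwidth_gb_s
    return seconds / 3600


if __name__ == "__main__":
    print(pictures_per_second(6, 2.5))     # 2400.0
    print(f"{hours_to_read(1, 6):.1f} h")  # 46.3 h
```

Even at 6 GB/s, a full pass over one petabyte takes roughly two days. Any drop below the rated bandwidth stretches this proportionally.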

Scaling Big-data Databases

The other challenge for big-data enterprises such as PhotoBox is how to scale out the transactional databases that drive revenue, whether that revenue comes from advertising or directly from services such as printing and gifts. The lowest-cost option is to use commodity servers and open-source SQL databases. The drawback of this approach is that scaling out the database infrastructure requires significant redesign of the database (e.g., sharding) to minimize locking issues across the servers.
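Sharding is the kind of redesign referred to above. The sketch below models application-level hash sharding, with hypothetical names and a hypothetical shard count. It does not describe PhotoBox's system. It shows why locking becomes a problem. A transaction whose rows hash to different shards can no longer be protected by one server's locks, so the application has to coordinate locks across servers, for example with two-phase commit.

```python
import hashlib

NUM_SHARDS = 4  # hypothetical number of commodity database servers


def shard_for(key: str, num_shards: int = NUM_SHARDS) -> int:
    """Map a row key to a shard with a stable hash (Python's hash() is salted per process)."""
    digest = hashlib.sha1(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def needs_distributed_transaction(keys: list[str], num_shards: int = NUM_SHARDS) -> bool:
    """True when a transaction touches rows on more than one shard, so locks must be
    coordinated across servers (for example with two-phase commit)."""
    return len({shard_for(k, num_shards) for k in keys}) > 1


if __name__ == "__main__":
    # Hypothetical keys: an order row and the customer row the same transaction updates
    print(shard_for("order:1001"), shard_for("customer:42"))
    print(needs_distributed_transaction(["order:1001", "customer:42"]))
```

Every schema and query then has to be designed around the shard key, which is where the redesign effort goes.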

PhotoBox has publicly acknowledged its use of Clustrix¹, which takes a different architectural approach to transactional, row-based databases while retaining SQL and ACID properties. Clustrix uses hash keys to distribute multiple copies of the data across the processor nodes, so the data and the locks for that data are held and processed in the same place. Database calls can then be broken down and distributed across all the nodes with minimal overhead, allowing much greater scale-out. As is common in big-data design, the query code is moved to the data, rather than the data being moved to the processor running the query.
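This is a toy model of the general idea described above, not Clustrix's actual design or API. Every class and method name is hypothetical. It assumes a simple scheme in which a stable hash of each row key selects a primary node and the next nodes in sequence hold the copies. Each node keeps its own lock, so a row and its lock live in the same place. Queries are shipped to the row's primary node as functions instead of pulling rows back to the caller.

```python
import hashlib
import threading
from dataclasses import dataclass, field
from typing import Any, Callable

Row = dict[str, Any]


@dataclass
class Node:
    """One processor node: it stores rows and owns the lock that protects them."""
    node_id: int
    rows: dict[str, Row] = field(default_factory=dict)
    lock: threading.Lock = field(default_factory=threading.Lock)


class HashDistributedTable:
    """Toy model of hash-distributed rows with replicas (hypothetical, not Clustrix's design)."""

    def __init__(self, num_nodes: int, replicas: int = 2) -> None:
        if not 1 <= replicas <= num_nodes:
            raise ValueError("replicas must be between 1 and num_nodes")
        self.nodes = [Node(i) for i in range(num_nodes)]
        self.replicas = replicas

    def _owners(self, key: str) -> list[Node]:
        # A stable hash of the key picks the primary node; copies go to the following nodes.
        h = int.from_bytes(hashlib.sha1(key.encode("utf-8")).digest()[:8], "big")
        first = h % len(self.nodes)
        return [self.nodes[(first + i) % len(self.nodes)] for i in range(self.replicas)]

    def insert(self, key: str, row: Row) -> None:
        for node in self._owners(key):
            with node.lock:
                node.rows[key] = dict(row)

    def update(self, key: str, change: Callable[[Row], Row]) -> None:
        # Each copy is changed on the node that holds it, under that node's own lock.
        for node in self._owners(key):
            with node.lock:
                node.rows[key] = change(node.rows[key])

    def query(self, key: str, fn: Callable[[Row], Any]) -> Any:
        # Ship the query fragment to the primary node instead of moving the row to the caller.
        primary = self._owners(key)[0]
        with primary.lock:
            return fn(primary.rows[key])


if __name__ == "__main__":
    table = HashDistributedTable(num_nodes=4, replicas=2)
    table.insert("photo:1", {"prints": 0})
    table.update("photo:1", lambda r: {**r, "prints": r["prints"] + 1})
    print(table.query("photo:1", lambda r: r["prints"]))  # 1
```

For simplicity, the update here is applied to each copy in turn rather than atomically. A real distributed database would make the change to all copies part of a single transaction.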

Conclusions

Big-data economics are driving organizations to find innovative ways of addressing the cost structure of large-scale infrastructures. Leading-edge Internet providers are implementing approaches that are likely to become standard in enterprise IT as enterprises tackle their own big-data implementations.

Footnotes:

¹ Clustrix was included in Wikibon's review of outstanding technologies introduced in 2010.