Storage Peer Incite: Notes from Wikibon’s October 7, 2008 Research Meeting

Moderator: David Vellante with Analysts: Eric Peterson & Nathan Thompson

SaskEnergy provides natural gas across Canada's huge Saskatchewan Province. A government-controlled company, it works under stringent regulations that, among other things, require it to maintain archives of all data for five full years. Tape is the only practical alternative for fulfilling this requirement. However, the normal approach to tape backup - running nightly full tape backups of all disks in the server farm - is prohibitively expensive when large numbers of direct-attached disks are involved, and it takes hours to perform.

To meet this requirement in a cost-effective manner, SaskEnergy had to implement a strategy that was radically different for its IT department - an "incremental forever" backup solution using Tivoli Storage Manager (TSM). This allows it to make one basic backup of all data on the disk pool and then simply back up any changes to that data every night thereafter. This cuts backup times tremendously and saves media and therefore money. Additionally, it uses the technology to combine data from two or more partially filled tapes onto a single cartridge, further reducing media costs.
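The incremental forever idea described above can be illustrated with a minimal Python sketch. It is not how TSM is implemented; the catalog layout, the `FileState` tuple, and the function names are assumptions made purely for illustration. The first run sees every file as new, and later runs select only files whose recorded size or modification time has changed.

```python
from typing import Dict, List, Tuple

# Hypothetical catalog entry: (size in bytes, last-modified timestamp).
FileState = Tuple[int, float]


def select_changed(catalog: Dict[str, FileState],
                   current: Dict[str, FileState]) -> List[str]:
    """Return paths that are new or whose state differs from the catalog."""
    return sorted(path for path, state in current.items()
                  if catalog.get(path) != state)


def run_backup(catalog: Dict[str, FileState],
               current: Dict[str, FileState]) -> List[str]:
    """Select changed files, then record their new state in the catalog."""
    changed = select_changed(catalog, current)
    for path in changed:
        catalog[path] = current[path]
    return changed


catalog: Dict[str, FileState] = {}

# Night 1: the catalog is empty, so every file is backed up (the one full pass).
night1 = {"/data/a.db": (100, 1.0), "/data/b.log": (50, 1.0)}
first_run = run_backup(catalog, night1)

# Night 2: only b.log has changed, so only b.log is backed up.
night2 = {"/data/a.db": (100, 1.0), "/data/b.log": (75, 2.0)}
second_run = run_backup(catalog, night2)

print(first_run)
print(second_run)
```

In this toy model the nightly workload depends on how much data changed, not on the total size of the disk pool, which is why the backup window shrinks.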

Theoretically SaskEnergy never has to do a new full backup of the disk. The disadvantage of this, however, is that the data becomes spread over a huge number of tapes, and a restore would involve a tremendous amount of tape mounting. To limit that problem and ensure that it always has a working copy of the entire data set, it does one full backup of each disk a month.

This creative approach would not work for every company or meet every need. And it requires careful filing and maintenance of the archived tapes so that they are not lost. But for long-term archiving of large amounts of data that with any luck will never be needed again, it offers an inexpensive, simple, and fast solution that among other things solves the problem of perpetually expanding backup windows. G. Berton Latamore

SaskEnergy: Applying an incremental forever backup and archive solution

SaskEnergy is a corporation controlled by the Saskatchewan government, which delivers natural gas to more than 90% of the province, more than 327,000 customers. A few years ago, SaskEnergy had a problem. Its data center was divided into AIX and Wintel server islands. Each server had its own directly attached tape drive that was used nightly for full disk backups. This approach was expensive and time consuming, with backups requiring an eight-hour window to complete.

Compounding the challenge, SaskEnergy policy dictates that data be retained for five years, which increased the costs of daily full disk backups. The key business drivers for the project were to reduce costs, improve backup efficiency, and provide a more scalable and dynamic backup and restore infrastructure that could evolve over time.

The Solution

SaskEnergy required an enterprise solution that would allow it to reduce costs, speed backups, and maintain fast recovery. After reviewing several vendor offerings, it developed the following solution:

- Use Tivoli Storage Manager to provide an ‘incremental forever’ methodology, backing up only changed data;

- Perform backups of large disk pools to a temporary disk-based staging area, migrate data from disk to tape (locally), then move the tapes offsite for long-term retention;

- Use a SpectraLogic library with LTO4 and SAIT (Super Advanced Intelligent Tape) tape technology onsite and SpectraLogic’s T120 library with LTO4 and SAIT technology for offsite archiving to take advantage of cartridge capacities in excess of 500GB uncompressed.

SaskEnergy’s five-year retention requirement mandated an extremely high-density solution that maximizes capacity in a single rack and has a long service life. From a cost perspective, disk was not an option for long-term retention, and the T950 combined with LTO4 (800GB uncompressed) and SAIT tape technology fit the requirement.

The tradeoff of this approach is that while perpetual incremental backups are efficient, simple, and fast when restoring recent files, restoring full server images requires 'touching' large numbers of tapes containing incremental backups and therefore is significantly more time-consuming and expensive. To reduce the risk and hassle of recovering a full system image from incremental backups, SaskEnergy performs a full image archive each month, limiting the maximum incremental window to 31 days.
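The effect of the monthly full image on restore effort can be sketched with a deliberately simplified model. It assumes one backup set per night and that a full server restore may have to consult the last full image plus every incremental taken since; a real product tracks active file versions and may touch fewer tapes. The function name and the 365-day year are assumptions for illustration.

```python
def backup_sets_for_full_restore(days_since_full: int) -> int:
    """Upper bound: one full image plus one incremental per day since it."""
    if days_since_full < 0:
        raise ValueError("days_since_full must be non-negative")
    return 1 + days_since_full


# Monthly full image: at most 31 days of incrementals, per the text.
monthly_worst_case = backup_sets_for_full_restore(31)

# No periodic full at all, at the end of a five-year retention period
# (365-day years assumed).
never_worst_case = backup_sets_for_full_restore(5 * 365)

print(monthly_worst_case, never_worst_case)
```

Under these assumptions the monthly full image caps the worst case at a few dozen backup sets, whereas a pure incremental forever scheme could grow to well over a thousand by the end of the retention period.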

Users should also note that systems like email (e.g. Notes) require a different methodology to be able, for instance, to restore a mailbox of a specific user quickly. SaskEnergy uses TSM for Notes, making a full backup once a week with daily incrementals, to solve this problem.

The Implementation

SaskEnergy started the implementation with AIX, using a methodology to directly attach to the AIX fibre channel SAN. This enables the backup of large disk pools in a few hours with minimal application shutdown. Over time, this approach was applied to SaskEnergy’s Intel infrastructure.

Daily backups average 900GB/day (up from 500GB as recently as late last year). A specialized Notes backup drives more than 1TB for each weekly full backup, which fits on one or two cartridges, and is supplemented by daily incremental backups. To date, most recoveries have been done from the previous night’s changed files, and SaskEnergy has thus far not had to perform a full server recovery.
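As a rough check on these volumes, the following sketch computes how many LTO4 cartridges a given backup would occupy. The 800GB uncompressed capacity comes from the text; the 2:1 compression ratio, the treatment of "more than 1TB" as 1000GB, and the helper name are assumptions for illustration.

```python
def cartridges_needed(data_gb: int, cartridge_gb: int) -> int:
    """Ceiling division: whole cartridges required to hold data_gb."""
    return -(-data_gb // cartridge_gb)


LTO4_NATIVE_GB = 800        # uncompressed capacity cited in the text
LTO4_COMPRESSED_GB = 1600   # assumes 2:1 compression
DAILY_GB = 900              # average daily backup volume from the text
NOTES_FULL_GB = 1000        # "more than 1TB", taken here as 1000GB

daily_native = cartridges_needed(DAILY_GB, LTO4_NATIVE_GB)
notes_native = cartridges_needed(NOTES_FULL_GB, LTO4_NATIVE_GB)
notes_compressed = cartridges_needed(NOTES_FULL_GB, LTO4_COMPRESSED_GB)

print(daily_native, notes_native, notes_compressed)
```

Under these assumptions the weekly Notes full backup needs two cartridges uncompressed and one with compression, in line with the "one or two cartridges" figure.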

The Tivoli learning curve was a major implementation challenge. Tivoli Storage Manager is extremely powerful with lots of options. The tradeoff is that effectively implementing the solution requires a focused effort to understand the environment and set the numerous ‘knobs and dials’ to optimize and automate the system. In such a project, organizations are advised to budget for and/or train a domain expert in the area of automated storage management.

Going Forward

In recent years, the tape industry has seen disk encroach on its traditional backup and recovery domain, limiting industry revenues, including media revenue, to around $4B worldwide. However, LTO, higher tape cartridge capacities, longer media life, and better reliability will allow the technology to compete effectively for tier 3 archiving and recovery applications. Indeed, estimates based on access and capacity requirements suggest that 60% of digital data is a candidate for placement on tier 3 storage.

The perpetual incremental backup approach used by SaskEnergy provided the following benefits to the organization:

- It reduced backup windows from eight to two hours for some applications and minutes for many.

- It supported a 24 x 7 strategy by eliminating the need to quiesce Oracle and other applications during backups.

- It reduced costs by consolidating backup devices across the province.

- It reduced media costs by moving to cartridges with capacities of 50GB to 1TB+.

- It enabled full on and off site data retention, compliant with SaskEnergy’s policies.

SaskEnergy made a sound business decision given the retention requirements imposed on the IT department. It chose to use tape as a long-term archival technology, which proved to be vastly less expensive and more efficient than disk-based alternatives. The bottom line is that the experience of SaskEnergy and others underscores that tape is not dying; rather, its role is changing to that of a premier long-term archival technology for data that will hopefully never be accessed.

Action item: Incremental forever backups may sound crazy. However, organizations with very long retention requirements should consider this philosophy. The perpetual incremental approach is best for storing data where the likelihood of ever having to recover older data is very low. In this instance, for the next ten years tape will be the most cost-effective and efficient technology.

SaskEnergy encourages users to develop their backup strategy

SaskEnergy is a corporation controlled by the Saskatchewan government, which delivers natural gas to more than 90% of the province and more than 327,000 customers. The SaskEnergy case study presented on the Wiki Peer Incite Review of Oct. 7, 2008 reinforces the need to develop a recipe for your backup and recovery strategy rather than using a standardized template. It’s no surprise that many users continue to single out backup and recovery as their biggest storage management problem. Backup and recovery is a set of processes and integrated technologies that meet the requirement to manage data recovery and to ensure a speedy resumption of IT services. The continually changing need for IT and users to understand the business value of the IT function mandates a best practices approach when performing appropriate business value and criticality assessments. These practices include:

- Data classification - define application and user requirements, required data retention periods, RTO/RPOs, archival strategy to determine the overall risk profile. Most effective storage management practices will start here.

- Remote site - determine if backup copies are needed outside the disaster impact zone.

- Tiered storage - understand where various technologies fit and evaluate the economic tradeoffs. A combination of disk and tape is the optimal solution for a backup/recovery strategy. As data retention periods grow longer, high-capacity tape becomes more cost effective and provides portability in the event of electrical outages. Tape cartridges now have capacities of 1 terabyte native or 2 terabytes compressed.

- Teamwork - encourage the stakeholders, the IT and business units, to work together to develop an effective backup and recovery management strategy that unites many related areas of the business. Bring them to the table early and often to clearly set business continuity, availability and disaster recovery goals.

- Simplify - minimize the number of backup products and touch points as SaskEnergy did by choosing TSM as the preferred tool.

- Think - make sure the recovery plan is stored with the backup data!

Implementing a sustainable backup/recovery process has been a long-standing requirement for IT. A Storage magazine survey in May 2008 estimates that just over half of IT companies perform regular testing of the restoration process and 30 percent perform restoration testing once per year or less frequently. Fifty-eight percent use a combination of tape and disk for disaster recovery. The primary reasons given for not testing regularly include not having a disaster recovery site and not needing a disaster recovery plan. In a ComputerWorld survey on Oct. 16, 2006, nearly one-third of respondents said their backup procedures aren’t documented and the majority said their staffs aren’t well versed in the department’s backup strategies. These are incredible findings given that the IT industry is more than 60 years old and that IT is a requirement for nearly all businesses to survive.

Action item: The SaskEnergy case study highlights that truly effective backup/recovery plans are comprehensive and cover a range of approaches that maximize the ability of an organization to recover from technology failures and natural or man-made disasters. These plans include business continuity, defining operational procedures, implementing hardware redundancy, using standard backup software, and testing the disaster recovery processes. The days of using a standardized template and simply backing everything up on a daily basis have passed. It’s now time to develop a recipe for the backup/recovery needs of your business.

The paradox of long retention policies

Blanket retention periods that are exceedingly long (e.g. 5, 10, 20+ years), by their very nature, put constraints on organizations. Not only does the IT function need to accommodate such corporate dictates, but often the retention of such information and the corollary policies are the tail that wags the business dog. The conundrum is that without appropriately long retention policies, organizations run legal risks of not knowing what they don't know. In other words, they risk opposing counsel, the news media, the public, or other antagonists gaining access to damaging information that an organization may not know exists.

In many organizations this dynamic results in no one leader owning the retention policies. As such, the policy defaults to either a very long retention period blanketing all data (this often occurs in quasi-regulated and government sectors) or the other end of the spectrum: shred everything after 90 days (less regulated businesses such as retail).

Stakeholders must come together to 1) perform a risk assessment; 2) understand the impact of retention policies on information both as a liability and asset; 3) agree on a policy, strategy, and governance structure that protects the organization and allows for sufficient business agility.

Action item: Organizations must periodically revisit retention policies to ensure they align not only with regulatory implications but also business imperatives. Where legacy policies are outdated and inadequate, leaders must attack the problem head on by organizing cross-functional teams involving records management, legal, IT, business units, and auditing to perform a risk/reward analysis and set cogent policies based on ever-changing business requirements.

Tivoli Storage Manager challenges the KISS principle

Adopting a progressive or perpetual incremental backup strategy using Tivoli Storage Manager (TSM) is a fundamental concept users must accept to fully exploit the architecture. Despite its power, flexibility, and ability to automate cumbersome media management, storage administrators will often resist changing existing backup methods (e.g. weekly full backups) because TSM is a ‘black box’ that must be trusted completely. Despite the perils of TSM complexity, not embracing progressive incremental backups will invariably lead to excessively large databases which are wasteful and will negatively impact performance and costs.

The Wikibon Peer Incite exploring SaskEnergy’s challenges implementing TSM underscores the need for careful planning with regard to embracing progressive incremental backup strategies. TSM is indeed powerful by its very nature because it can be tailored to business requirements.

For example, in the case of SaskEnergy, accommodating a five-year blanket retention policy and enabling specialized workarounds for Notes and other applications requires substantial planning and TSM domain expertise. The fact that the organization mandates this long blanket retention policy complicates the situation further. This type of policy should be avoided if possible with TSM, as it will overtax the backup application by unnecessarily backing up data that doesn’t need to be protected.

TSM’s flexibility is also a two-edged sword. For example, TSM can enable customers to accommodate backups for applications with special requirements (e.g. email or other database applications) that need to be available on a 24 x 7 basis. However, users should be aware that this capability will require enabling and configuring additional TSM modules, requiring further planning and technical expertise.

The best advice with any IT implementation is, where possible, to keep it simple. This old adage holds true with TSM as applied to backup and archiving but is often easier said than done.

Action item: These examples and the SaskEnergy Peer Incite demonstrate that TSM is powerful but extremely complex. Prior to implementing a perpetual incremental backup and archiving strategy using Tivoli, users should acquire deep TSM expertise and insist that capability exists as a pre-requisite of assuming responsibility for the project. Having a basic understanding of generic software and backup environments is not sufficient to succeed with TSM in this context.

Disk and Tape: Buddies in Perpetuity with Tivoli Storage Manager (TSM)

SaskEnergy’s implementation of TSM is a shining example of the power of TSM. When deployed properly, TSM and a few similar products orchestrate backup and archive using a combination of disk, tape, and policies. Data or copies of data shuttle from disk to tape and vice versa almost seamlessly.

Tape is certainly not dead at SaskEnergy, and vendors should take note. Moreover, they need to look at their future and integrate tape as part of disk and disk as part of tape. Instead of dedicated appliances, envision a Tier 3 storage management framework that includes disk, VTLs, data dedupe, tape backup, and archive to tape, and evolves to support the broad spectrum of backup and archiving applications. Tape suppliers need to include non-tape technologies in their visions. Disk suppliers need to recognize that the economics of tape continue to be compelling and solve real customer problems. Tape and disk systems should be combined to leverage complementary strengths. All of this must eventually be integrated into a complete stack. Another message to vendors is that perpetual backup is a requirement.

However, TSM is renowned for its complexity and requires accomplished experts to tweak thousands of options to get it functioning to requirements. As one user put it: “It works; it works great; but I never want to do that again.” TSM also consumes a lot of hardware resources, particularly disk for its storage pools/cache. Other similar products suffer from this complexity and high resource consumption and require similar levels of expertise.

Action item: Users should be pushing vendors toward a solution that integrates a variety of data protection technologies (e.g. disk, tape, de-dupe, etc.) with sets of clearly defined standards. Vendors need a vision and roadmap encompassing such a solution and one that keeps it simple.

SaskEnergy: Combining tape and disk to implement a green strategy

Disk vendors have touted the death of tape; tape vendors have pointed out how green tape is. The bottom line of the SaskEnergy story is that a combination of both provides an optimum cost solution that meets SaskEnergy’s specific business needs.

The archiving retention edict from SaskEnergy senior management was that all data had to be retained for five years. The data warehousing solution was considered as separate from the archiving and restore solution. Data created as part of the data warehousing became data that had to be archived and restored if necessary.

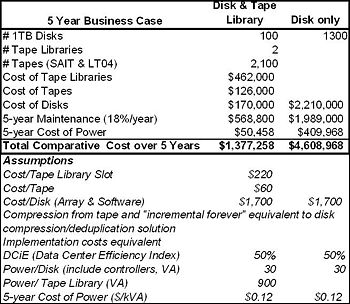

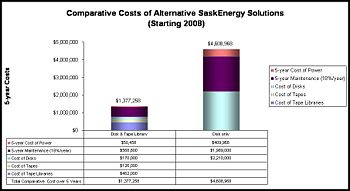

The table and chart above show that the cost of a disk-only solution is over four times higher than a mixed disk and tape library solution. The overall business case could change if:

- The probability of having to recover from older data was higher,

- The business benefit of quicker recovery from data in the tape library was more significant,

- There was a significant business benefit in being able to access the archived data (over and above the data warehouse data held).

Also shown in the table are the facilities costs. The facilities costs of disk are about eight times higher, going from ~$50K with tape to over $400K with disk only. The use of advanced MAID features such as AutoMAID from Nexsan might reduce that to about $200K over 4 years, but that is still four times higher than tape.
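The ratios quoted for facilities costs can be reproduced with a few lines of arithmetic. The figures below are the rounded values from the text; "over $400K" is treated as exactly $400K, so the disk-only ratio is a lower bound, and the dictionary labels are chosen only for illustration.

```python
# Approximate facilities costs in US dollars, rounded as in the text.
facilities_cost_usd = {
    "disk and tape": 50_000,
    "disk only": 400_000,            # "over $400K", so this is a floor
    "disk only with MAID": 200_000,  # estimate over 4 years
}

baseline = facilities_cost_usd["disk and tape"]
ratios = {name: cost / baseline for name, cost in facilities_cost_usd.items()}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.0f}x the disk-and-tape facilities cost")
```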

Action item: The bottom line is there will continue to be a storage hierarchy, with a place for different technologies at each level. Working constructively together with all the technologies available will be more fruitful than second guessing the market.