Executive Summary

Data protection or backup/recovery is consistently reported as the most pressing problem by storage managers as well as enterprise architects. More than fifty percent of customers are re-evaluating their backup and recovery practices and looking to evolve processes to enhance the protection of their information. There are a number of drivers influencing how organizations protect information, including:

- Unprecedented data proliferation and ‘copy creep.’

- The economics of disk-based backup relative to tape.

- Rapid adoption of virtualization.

- Heightened awareness of inadequate disaster recovery procedures.

At VMworld 2009, Asigra conducted in-depth surveys of twenty-six customers regarding their backup and recovery strategies. All questions were open-ended and respondents spent approximately 30 minutes answering the survey. Asigra agreed to share the results with the Wikibon community in exchange for an analysis of the results. Wikibon chose to write up a subset of the questions.

What follows is a summary with our initial conclusions.

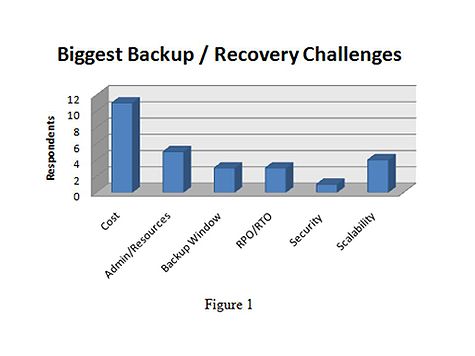

What are your biggest challenges as they relate to backup and recovery?

Figure 1 shows that the largest share of respondents, 41%, said “Cost” was the biggest challenge.

Data Growth

Data growth is one of the most obvious drivers of cost, for both primary and backup storage. Each year IDC conducts a survey of the “Digital Universe,” and in 2008 it found that users created 487 exabytes of data. Twenty-five percent of this data was new, while 75% was copies of that new data in the form of snapshots, replicas and backups (both disk and tape) for the purpose of protecting the original content. Wikibon estimates indicate that primary data sets in the data center grow between 25% and 65% every year, and this has a significant impact on data protection infrastructure and strategies.

For example, consider a 10TB environment that uses traditional backup software, doing weekly fulls and daily incrementals and backing up to tape. With a tape rotation schedule of 14 dailies (~12TB), 4 weekly fulls (~40TB) and 12 monthly fulls (~120TB), that 10TB primary storage environment represents ~172TB of tape backup capacity. If the primary environment grows by 50%, such that in the second year it is 15TB, the backup environment grows by the same 50%, an additional ~86TB, and represents ~258TB of backup capacity.
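The tape rotation arithmetic above can be reproduced with a short sketch. The function name and the `dailies_ratio` parameter are illustrative assumptions, not part of the survey. The ratio of 1.2 is simply back-derived from the text's figure of ~12TB of dailies for a 10TB environment.

```python
def tape_capacity_tb(primary_tb, dailies_ratio=1.2, weekly_fulls=4, monthly_fulls=12):
    """Estimate retained tape capacity (TB) for a given primary data set.

    dailies_ratio is an assumption derived from the text: 14 daily
    incrementals total ~12TB for a 10TB environment, i.e. 1.2x primary.
    """
    dailies = dailies_ratio * primary_tb    # 14 daily incrementals combined
    weeklies = weekly_fulls * primary_tb    # each weekly full is a complete copy
    monthlies = monthly_fulls * primary_tb  # each monthly full is a complete copy
    return dailies + weeklies + monthlies


year1 = tape_capacity_tb(10)  # 10TB primary environment
year2 = tape_capacity_tb(15)  # after 50% primary growth
growth = (year2 - year1) / year1

print(f"Year 1: ~{year1:.0f}TB, Year 2: ~{year2:.0f}TB, growth: {growth:.0%}")
```

Because every term scales linearly with primary capacity, the backup footprint grows at the same rate as primary storage, but from a base roughly 17 times larger, which is where the downstream cost comes from.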

Bottom Line: Incremental growth in primary storage translates into significant costs downstream.

Server Virtualization

Data growth, however, is only one driver. New technologies such as server virtualization are starting to have a measurable impact on the data protection process. While practitioners often focus on the utilization, environmental and simplified management benefits of server virtualization, storage challenges are often overlooked. Virtualization causes major storage problems, including a growing number of copies, I/O performance bottlenecks and onerous management overhead. When it comes to backup and recovery, the challenges are even more difficult.

Wikibon Planning Assumption: By 2011, 50% of virtualized shops will need to re-architect backup processes to meet SLAs.

Specifically, server virtualization is taxing backup processes. Server virtualization works so well because non-virtualized servers are underutilized, allowing IT organizations (ITOs) to recoup wasted resources. However, backup is the one application in any data center with very high I/O rates, and backup servers typically aren’t underutilized during backups. When physical resources are consolidated into logical machines, the virtualized server infrastructure becomes resource constrained during backups, which can often limit physical-to-virtual consolidation ratios and stress backup windows.
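A rough sketch of why consolidation stresses the backup window is shown below. All figures (ten servers, 500GB each, 100MB/s of sustained backup throughput per physical host) are hypothetical and chosen only for illustration. The model deliberately ignores data reduction, incremental backups and scheduling.

```python
def backup_window_hours(data_gb, throughput_mb_per_s):
    """Hours needed to move data_gb at a sustained throughput (1 GB = 1000 MB)."""
    return data_gb * 1000 / throughput_mb_per_s / 3600


# Hypothetical figures, not taken from the survey.
SERVERS = 10
DATA_PER_SERVER_GB = 500
HOST_THROUGHPUT_MB_PER_S = 100  # sustained backup throughput of one physical host

# Before consolidation: each server backs up in parallel over its own hardware.
physical_hours = backup_window_hours(DATA_PER_SERVER_GB, HOST_THROUGHPUT_MB_PER_S)

# After consolidation: all ten workloads share one host's I/O path.
consolidated_hours = backup_window_hours(
    SERVERS * DATA_PER_SERVER_GB, HOST_THROUGHPUT_MB_PER_S
)

print(f"Physical: {physical_hours:.1f}h, consolidated: {consolidated_hours:.1f}h")
```

Under these assumptions the full-backup window grows in proportion to the consolidation ratio, which is why the ratio itself can end up being capped by the backup window.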

Bottom Line: While no ITO wants to change backup processes, server virtualization is forcing a re-assessment of data protection technologies and methodologies.

RPOs / RTOs

End users are getting smarter. It used to be the line-of-business manager telling backup administrators how important their data was to the business. Today, end users are asking IT, “Are you backing up my data?”, “How quickly can you recover my data?” (RTO) and “When I get my data back, how current is it?” (RPO). Ideally, every company would have guidelines that allow IT to set standard RPOs and RTOs, but in reality there are none. In fact, when 26 IT users were asked:

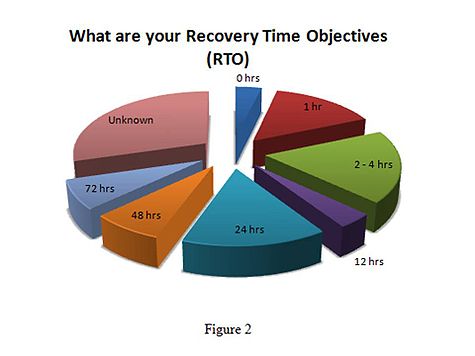

What are your Recovery Time Objectives for your various applications & systems?

Nearly 30% of the responses were “I don’t know!”

Wikibon Planning Assumption: By 2014, 90% of CIOs will be required to document and maintain RPO and RTO metrics within service level agreements.

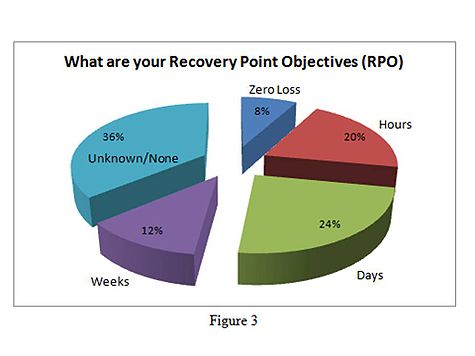

With respect to recovery point objectives (RPO), 36% of respondents indicated that they either had no RPO or didn’t know. Few respondents had zero or near zero data loss requirements.

Bottom Line: Data availability can mean the survival of a company and getting data back fast is critical to business resilience. More organizations are turning to disk-based recovery to simplify data protection and minimize reliance on tape, although tape remains a last resort, ‘deep archive’ technology in most organizations.

Data Security

As reported in the Pittsburgh Tribune-Review in May 2008:

Bank of New York Mellon Corp., the world's largest custodian of assets, reported a second potential breach of customer data this year and said it will provide enhanced fraud-protection services to those affected.

The most recent incident occurred on April 29 when a backup data-storage tape containing images of scanned checks and other payment documents was lost while being moved from Philadelphia to Pittsburgh, spokesmen for the bank said Friday.

Whether tapes fall off trucks, get lost in a warehouse or sit in someone’s house, losing track of tapes that hold ‘private’ or ‘customer’ data is a risk, and these days it’s a risk that can cost companies tens of millions of dollars. Today more than ever, IT needs to ensure that all of its backup data is secure. There are a number of ways to ensure data is secure, but they all come with a price, and security is becoming another reason customers are re-thinking their data protection strategies.

Cloud Computing and Backup – Disk-to-Disk-to-Cloud

The new buzzword in IT these days is ‘cloud’. As with most new technology, IT is willing to try it out first on the workloads that no one wants to deal with, namely backup. It’s like the LIFE cereal commercial. None of the ‘cool’ kids wanted to try LIFE cereal, so they made Mikey try it. Once he liked it, the rest would follow.

Technology is no different. If you remember back to the days of the ‘xSP’, a number of the new service providers that popped up were in the managed backup service business. Why? Because backup is usually the one process that people are willing to outsource. It doesn’t store the primary data, only copies, so if someone else can manage these copies better at a lower price, then let’s let someone else take a stab at doing backup better.

The prospect of turning backup into a utility in order to keep costs low is very attractive. It is another reason IT is looking at new backup capabilities, and at backup as the first step in leveraging the cloud.

Asigra Hybrid Cloud Backup™

Asigra’s solution is a leading example of a hybrid cloud backup approach. The company’s offering places a software backup appliance on premise at the customer’s site and uses the cloud to back up the changed data from the on-premise appliance. Asigra uses an agent-less backup methodology to pull data from a server's primary storage, and its software performs data reduction both on the appliance and in the cloud backup stream to minimize network traffic and reduce costs.

Asigra’s client software is provided free of charge and runs on a customer-supplied virtual or physical server. Asigra charges for the backend vault on a per TB basis. By including this on-premise software appliance, Asigra provides the benefit of high speed backup and recovery with excellent RPO and RTO.

The main tradeoff of using the Asigra solution is that IT needs to change the backup process and replace existing backup software, which many shops are unwilling to do. However, in VMware and other virtualized environments, where there’s less physical hardware available for backups, re-architecting backup is what many organizations should consider. This is due to the preponderance of I/O bottlenecks in virtualized environments and the inability of most traditional backup software packages to address this problem. Asigra’s approach only backs up changed data and, as such, helps minimize I/O issues.

For organizations with backup window constraints, tight RPO/RTO requirements and virtualized environments, Asigra’s solution warrants consideration. The offering is also proven in the field: the company recently announced the 100,000th location under its protection.

Remote Office & Desktop / Laptop Backup

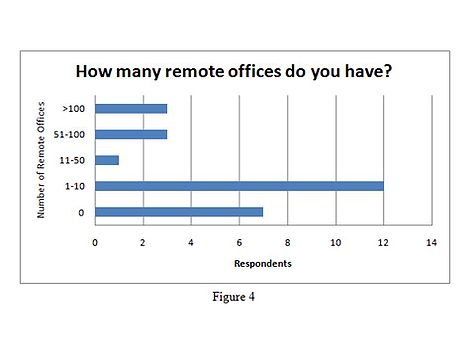

The bigger a company gets, especially through acquisition, the more users it has and the more locations those users are in. The one thing that hasn’t changed is IT’s accountability for information. There is a significant need to reach all the end points in the company that create data and to protect that data, for the good of the knowledge worker as well as for the protection of the company. Remote offices, desktops and laptops are creating a big challenge for protecting information. IT has been able to deploy single solutions in the remote office that, at one point, were ‘good enough’. Now, due to all the issues we have discussed as well as the lack of local IT resources at these remote sites, organizations are not meeting their backup needs. Most typically, companies in the survey have between 1 and 10 remote offices.

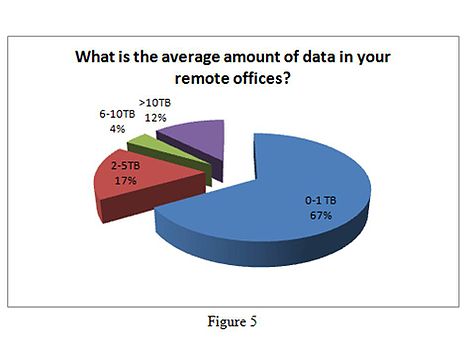

The most common amount of data at these remote sites is between 0 and 1 TB.

Bottom Line: Remote office backup and recovery is a significant challenge facing organizations, causing users to re-think backup strategies in an effort to reduce remote infrastructure costs (both CAPEX and OPEX) and speed recovery without clogging the corporate network.

Disaster Recovery

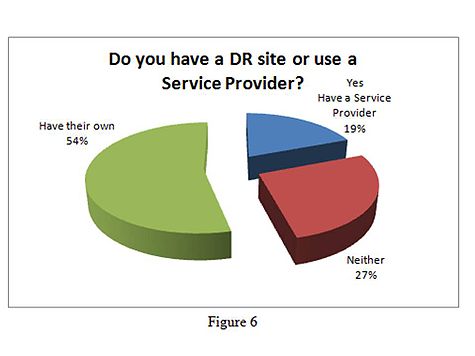

We have already discussed tape as well as costs, and both of these factors play a significant role in disaster recovery. Organizations need to tier data in an effort to focus data protection spending more efficiently, and technologies are becoming available to automate this process. Replication for tier 1 applications that generate revenue is prudent. It’s also expensive. The real question is, how can IT get all of the benefits of replication, such as RAID reliability, disk-based recoveries and moving data off site as quickly and efficiently as possible, at a cost competitive with tape? Most customers who responded to the survey have their own remote sites and can somewhat lower the costs of remote data storage by leveraging existing facilities.

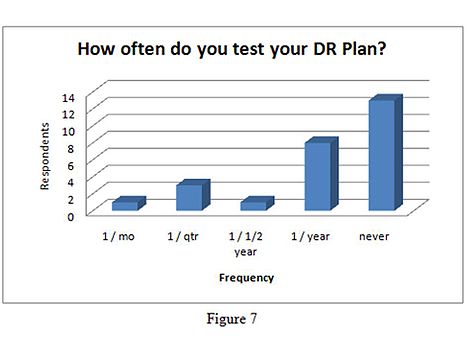

Additionally, customers who test their DR plans more regularly tend to have greater success with application recovery, which is the key to DR. In the survey conducted by Asigra, 50% of the respondents stated that they never tested their DR plan because “it was too difficult trying to recover data from tape.” Respondents that tested their DR plan every quarter or more often stated “they had greater and greater success with each DR test.”

Wikibon Planning Assumption: 70% of IT organizations lack adequate DR testing processes.

Bottom Line: Approximately 70% of ITOs inadequately test DR because it is too painful, expensive and risky. The potential to create disasters during disaster recovery testing causes organizations to avoid failover and failback testing and, as a result, organizations remain exposed.

The Point

As we stated in the beginning, all roads in IT ultimately lead to a discussion of cost. Each of the areas outlined above contributes to the need to back up more data more efficiently and to store it online for longer periods of time, with the ability to restore that data quickly and reliably. The question is how organizations should go forward. The answer is in steps. Organizations must start with a business impact analysis to categorize applications and understand their RPO and RTO requirements. Only then can proper backup and recovery techniques be architected. ITOs also need to understand their data flows and, in virtualized environments, the impact of consolidation on the backup window due to constrained physical resources.

Conclusion

No organization wants to alter its existing backup processes. But the reality is that in many growing environments, processes developed decades ago for serial tape don’t provide adequate data protection for today’s virtualized and remote office environments. Backup and recovery has typically been a constrained resource because the infrastructure is perceived by CEOs to provide no tangible business value. However, lines of business are increasingly aware of the data protection imperative, and boards of directors are becoming more cognizant of the need to adequately secure and protect critical data. Data reduction techniques such as incremental backup, compression and data deduplication are a fundamental enabler of modern data protection. They are driving a movement toward disk-based backup, which will continue as these solutions fall in price, become more efficient and offer functionality such as remote replication and hybrid cloud backup and recovery.

Want to learn more?

Collaborate with your peers inside Wikibon to learn more about what your colleagues are doing to improve their data protection capabilities at http://wikibon.org.